Overview of adaptive parallel reasoning.

What if a reasoning mannequin may resolve for itself when to decompose and parallelize impartial subtasks, what number of concurrent threads to spawn, and how you can coordinate them based mostly on the issue at hand? We offer an in depth evaluation of current progress within the subject of parallel reasoning, particularly Adaptive Parallel Reasoning.

Disclosure: this put up is a component panorama survey, half perspective on adaptive parallel reasoning. One of many authors (Tony Lian) co-led ThreadWeaver (Lian et al., 2025), one of many strategies mentioned beneath. The authors intention to current every method by itself phrases.

Motivation

Current progress in LLM reasoning capabilities has been largely pushed by inference-time scaling, along with knowledge and parameter scaling (OpenAI et al., 2024; DeepSeek-AI et al., 2025). Fashions that explicitly output reasoning tokens (by way of intermediate steps, backtracking, and exploration) now dominate math, coding, and agentic benchmarks. These behaviors enable fashions to discover different hypotheses, appropriate earlier errors, and synthesize conclusions reasonably than committing to a single answer (Wen et al., 2025).

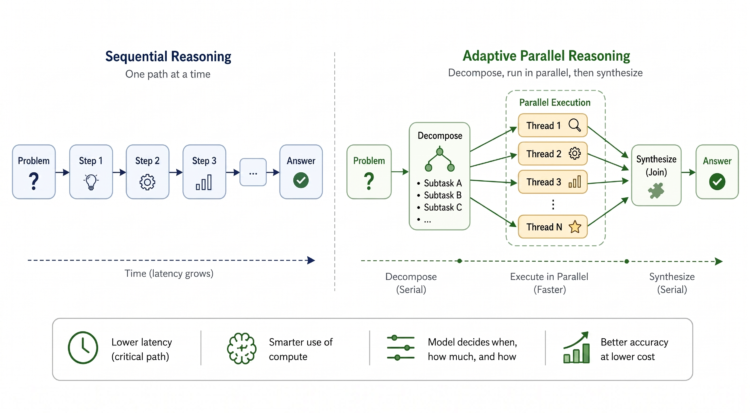

The issue is that sequential reasoning scales linearly with the quantity of exploration. Scaling sequential reasoning tokens comes at a price, as fashions danger exceeding efficient context limits (Hsieh et al., 2024). The buildup of intermediate exploration paths makes it difficult for the mannequin to disambiguate amongst distractors when attending to data in its context, resulting in a degradation of mannequin efficiency, also referred to as context-rot (Hong, Troynikov and Huber, 2025). Latency additionally grows proportionally with reasoning size. For advanced duties requiring thousands and thousands of tokens for exploration and planning, it’s not unusual to see customers wait tens of minutes and even hours for a solution (Qu et al., 2025). As we proceed to scale alongside the output sequence size dimension, we additionally make inference slower, much less dependable, and extra compute-intensive. Parallel reasoning has emerged as a pure answer. As a substitute of exploring paths sequentially (Gandhi et al., 2024) and accumulating the context window at each step, we will enable fashions to discover a number of threads independently (threads don’t depend on one another’s context) and concurrently (threads might be executed on the similar time).

Determine 1: Sequential vs. Parallel Reasoning

Over current years, a rising physique of labor has explored this concept throughout artificial settings (e.g., the Countdown recreation (Katz, Kokel and Sreedharan, 2025)), real-world math issues, and common reasoning duties.

From Fastened Parallelism to Adaptive Management

Present approaches present that parallel reasoning will help, however most of them nonetheless resolve the parallel construction outdoors the mannequin reasonably than letting the mannequin select it.

Easy fork-and-join.

- Self-consistency/Majority Voting — independently pattern a number of full reasoning traces, extract closing reply from every, and return the commonest one (Wang et al., 2023).

- Greatest-of-N (BoN) — just like self-consistency, however makes use of a skilled verifier to pick the perfect answer as an alternative of utilizing majority voting (Stiennon et al., 2022).

- Though easy to implement, these strategies usually incur redundant computation throughout branches since trajectories are sampled independently.

Heuristic-based structured search.

- Tree / Graph / Skeleton of Ideas — a household of structured decomposition strategies that explores a number of different “ideas” utilizing recognized search algorithms (BFS/DFS) and prunes through LLM-based analysis (Yao et al., 2023; Besta et al., 2024; Ning et al., 2024).

- Monte-Carlo Tree Search (MCTS) — estimates node values by sampling random rollouts and expands the search tree with Higher Confidence Sure (UCB) type exploration-exploitation (Xie et al., 2024; Zhang et al., 2024).

- These strategies enhance upon easy fork-and-join by decomposing duties into non-overlapping subtasks; nevertheless, they require prior data concerning the decomposition technique, which isn’t all the time recognized.

Current variants.

- ParaThinker — trains a mannequin to run in two fastened levels: first producing a number of reasoning threads in parallel, then synthesizing them. They introduce trainable management tokens (

- GroupThink — a number of parallel reasoning threads can see one another’s partial progress at token stage and adapt mid-generation. In contrast to prior concurrent strategies that function on impartial requests, GroupThink runs a single LLM producing a number of interdependent reasoning trajectories concurrently (Hsu et al., 2025).

- Hogwild! Inference — a number of parallel reasoning threads share KV cache and resolve how you can decompose duties with out an express coordination protocol. Employees generate concurrently right into a shared consideration cache utilizing RoPE to sew collectively particular person KV blocks in several orders with out recomputation (Rodionov et al., 2025).

Determine 2: Numerous Methods for Parallel Reasoning

The strategies above share a standard limitation: the choice to parallelize, the extent of parallelization, and the search technique are imposed on the mannequin, no matter whether or not the issue truly advantages from it. Nevertheless, completely different issues want completely different ranges of parallelization, and that’s one thing crucial to the effectiveness of parallelization. For instance, a framework that applies the identical parallel construction to “What’s 25+42?” and “What’s the smallest planar area in which you’ll be able to constantly rotate a unit-length line section by 180°?” is losing compute on the previous and doubtless utilizing the mistaken decomposition technique for the latter. Within the approaches described above, the mannequin will not be taught this adaptive conduct. A pure query arises: What if the mannequin may resolve for itself when to parallelize, what number of threads to spawn, and how you can coordinate them based mostly on the issue at hand?

Adaptive Parallel Reasoning (APR) solutions this query by making parallelization a part of the mannequin’s generated management movement. Formally outlined, adaptivity refers back to the mannequin’s potential to dynamically allocate compute between parallel and serial operations at inference time. In different phrases, a mannequin with adaptive parallel reasoning (APR) functionality is taught to coordinate its management movement — when to generate sequences sequentially vs. in parallel.

It’s necessary to notice that the idea of adaptive parallel reasoning was launched by the work Studying Adaptive Parallel Reasoning with Language Fashions (Pan et al., 2025), however is a paradigm reasonably than a selected technique. All through this put up, APR refers back to the paradigm, whereas “the APR technique” denotes the particular instantiation from Pan et al. (2025).

This shift issues for 3 causes. In comparison with Tree-of-Ideas, APR doesn’t want domain-specific heuristics for decomposition. Throughout RL, the mannequin learns common decomposition methods from trial and error. Actually, fashions uncover helpful parallelization patterns, corresponding to operating the following step together with the self-verification of a earlier step, or hedging a major method with a backup one, in an emergent method that may be troublesome to hand-design (Yao et al., 2023; Wu et al., 2025; Zheng et al., 2025).

In comparison with BoN, APR avoids redundant computation. APR fashions have management over what every parallel thread will do earlier than branching out. Due to this fact, APR can be taught to provide a set of distinctive, non-overlapping subtasks earlier than assigning them to impartial threads (Wang et al., 2023; Stiennon et al., 2022; Pan et al., 2025; Yang et al., 2025).

In comparison with non-adaptive approaches, APR can select to not parallelize. Adaptive fashions can alter the extent of parallelization to match the complexity of the issue towards the complexity and overhead of parallelization (Lian et al., 2025).

In follow, that is applied by having the mannequin output particular tokens that management when to cause in parallel versus sequentially. Beneath is a condensed ThreadWeaver-style hint: two outlines and two paths below a

Determine 3: Instance of an Adaptive Parallel Reasoning Trajectory from ThreadWeaver, manually condensed for ease of illustration.

Determine 4: Particular Tokens Variants throughout Adaptive Parallel Reasoning Papers

Inference Programs for Adaptive Parallelism

How will we truly execute parallel branches? We take inspiration from laptop programs, and particularly, multithreading and multiprocessing. Most of this work might be considered as leveraging a fork-join design.

At inference time, we’re successfully asking the mannequin to carry out a map-reduce operation:

- Fork the issue into subtasks/threads, course of them concurrently

- Be a part of them right into a closing reply

Determine 5: Fork-join Inference Design

Particularly, the mannequin will encounter an inventory of subtasks. It is going to then prefill every of the subtasks and ship them off as impartial requests for the inference engine to course of. These threads then decode concurrently till they hit an finish token or exceed max size. This course of blocks till all threads end decoding after which aggregates the outcomes. That is frequent throughout numerous adaptive parallel reasoning approaches. Nevertheless, one problem arises throughout aggregation: the content material generated in branches can’t be simply aggregated on the KV cache stage. It is because tokens in impartial threads begin at similar place IDs, leading to encoding overlap and non-standard conduct when merging KV cache again collectively. Equally, since impartial threads don’t attend to one another, their concatenated KV cache leads to a non-causal consideration sample, which the bottom mannequin has not seen throughout coaching.

To handle this problem, the sphere splits into two colleges of thought on how you can execute the aggregation course of, outlined by whether or not they modify the inference engine or work round it.

Multiverse modifies the inference engine to reuse KV cache throughout the be a part of. Earlier than taking a deeper look into Multiverse (Yang et al., 2025)’s reminiscence administration, let’s first perceive how KV cache is dealt with up till the “be a part of” section. Discover how every of the impartial threads share the prefix sequence, i.e., the record of subtasks. With out optimization, every thread must prefill and recompute the KV cache for the prefix sequence. Nevertheless, this redundancy might be averted with SGLang’s RadixAttention (Sheng et al., 2023), which organizes a number of requests right into a radix tree, a trie (prefix tree) with sequences of components of various lengths as an alternative of single components. This fashion, the one new KV cache entries are these from impartial thread era.

Determine 6: RadixAttention’s KV Cache Administration Technique

Now, if every part went properly, all of the impartial threads have come again from the inference engine. Our purpose is now to determine how you can synthesize them again right into a single sequence to proceed decoding for subsequent steps. It seems, we will reuse the KV cache of those impartial threads throughout the synthesis stage. Particularly, Multiverse (Yang et al., 2025), Parallel-R1 (Zheng et al., 2025), and NPR (Wu et al., 2025) modify the inference engine to repeat over the KV cache generated by every thread and edits the web page desk in order that it stitches collectively non-contiguous reminiscence blocks right into a single KV cache sequence. This avoids the redundant computation of a second prefill and reuses present KV cache as a lot as potential. Nevertheless, this has a number of main limitations.

First, this method requires modifying the inference engine to carry out non-standard reminiscence dealing with, which may end up in surprising behaviors. Particularly, for the reason that synthesis request references KV cache from earlier requests, it creates fragility within the system and the opportunity of unhealthy pointers. One other request can are available and evict the referenced KV cache earlier than the synthesis request completes, requiring it to halt and set off a re-prefilling of the earlier thread request. This drawback has led the Multiverse researchers (Yang et al., 2025) to restrict the batch dimension that the inference engine can deal with, which restricts throughput.

Determine 7: KV Cache “Stitching” Throughout Multiverse Inference

Second, this method modifies how fashions see the sequence, which creates a distributional shift that fashions aren’t pretrained on, due to this fact requiring extra in depth coaching to align conduct. Particularly, once we sew collectively KV cache this fashion, we create a sequence with non-standard place encoding. Throughout independent-thread era, all threads began on the similar place index and attended to the prior subtasks, NOT one another. So when the threads merge again, the ensuing KV cache has a non-standard positional encoding and doesn’t use causal consideration. Due to this fact, this method requires in depth coaching to align the mannequin to this new conduct. To handle this, Multiverse (Yang et al., 2025) and associated works apply a modified consideration masks throughout coaching to stop impartial threads from attending to one another, aligning the coaching and inference behaviors.

Determine 8: Multiverse’s Consideration Masks

With these points arising from non-standard KV cache administration, can we strive an method with out engine modifications?

ThreadWeaver retains the inference engine unchanged and strikes orchestration to the consumer. ThreadWeaver (Lian et al., 2025) treats parallel inference purely as a client-side drawback. The “Fork” course of is sort of similar to Multiverse’s, however the be a part of section handles reminiscence very in another way because it does NOT modify engine internals. As a substitute, the consumer concatenates all textual content outputs from impartial branches into one contiguous sequence. Then, the engine performs a second prefill to generate the KV cache for the conclusion era step. Whereas this introduces computational redundancy that Multiverse tries to keep away from, the price of prefill is considerably decrease than decoding. As well as, this doesn’t require particular consideration dealing with throughout inference, because the second prefill makes use of causal consideration (threads see one another), making it simpler to adapt sequential autoregressive fashions for this job.

Determine 9: ThreadWeaver’s Prefill and Decode Technique

How ought to we practice a mannequin to be taught this conduct? Naively, for every parallel trajectory, we will break it down into a number of sequential items following our inference sample. As an illustration, we’d practice the mannequin to output the subtasks given immediate, particular person threads given immediate+subtask project, and conclusion given immediate+subtasks+corresponding threads. Nevertheless, this appears redundant and never compute environment friendly. Can we do higher? Seems, sure. As in ThreadWeaver (Lian et al., 2025), we will arrange a parallel trajectory right into a prefix-tree (trie), flatten it right into a single sequence, and apply an ancestor-only consideration masks throughout coaching (not inference!).

Determine 10: Constructing the Prefix-tree and Flattening right into a single coaching sequence

Particularly, we apply masking and place IDs to imitate the inference conduct, such that every thread is barely conditioned on the immediate+subtasks, with out ever attending to sibling threads or the ultimate conclusion.

The engine-agnostic design makes adoption straightforward because you don’t want to determine a separate internet hosting technique and may leverage present {hardware} infra. It additionally will get higher as present inference engines get higher. What’s extra, with an engine-agnostic technique, we will serve a hybrid mannequin that switches between sequential and parallel pondering modes simply.

Coaching Fashions to Use Parallelism

As soon as the inference path exists, the following drawback is educating a mannequin to make use of it. Demonstrations are wanted as a result of the mannequin should be taught to output particular tokens that orchestrate management movement. We discovered the instruction-following capabilities of base fashions inadequate for producing parallel threads.

An fascinating query right here is: does SFT coaching induce a basic reasoning functionality for parallel execution that was beforehand absent, or does it merely align the mannequin’s present pre-trained capabilities to a selected control-flow token syntax. Typical knowledge is SFT teaches new data; however opposite to frequent perception, some papers—notably Parallel-R1 (Zheng et al., 2025) and NPR (Wu et al., 2025)—argue that their SFT demonstrations merely induce format following (i.e., how you can construction parallel requests). We depart this as future work.

Determine 11: Sources of Parallelization Demonstration Information

Demonstrations train the syntax of parallel management movement, however they don’t totally resolve the inducement drawback. In a super world, we solely have to reward the result accuracy, and the parallelization sample emerges naturally provided that it learns to output particular tokens by way of SFT, just like the emergence of lengthy CoT. Nevertheless, researchers (Zheng et al., 2025) noticed that this isn’t sufficient, and we do the truth is want parallelization incentives. The query then turns into, how will we inform when the mannequin is parallelizing successfully?

Construction-only rewards are too straightforward to recreation. Naively, we can provide a reward for the variety of threads spawned. However fashions can spawn many brief, ineffective threads to hack the reward. Okay, that doesn’t work. How a few binary reward for merely utilizing parallel construction appropriately? This partially solves the difficulty of fashions spamming new threads, however fashions nonetheless be taught to spawn threads after they don’t have to. The authors of Parallel-R1 (Zheng et al., 2025) launched an alternating-schedule, solely rewarding parallel construction 20% of the time, which efficiently elevated using parallel construction (13.6% → 63%), however had little affect on total accuracy.

With this structure-only method, we may be drifting away from our authentic purpose of accelerating accuracy and decreasing latency… How can we optimize for the Pareto frontier straight? Accuracy is straightforward — we simply take a look at the result. How about latency?

Effectivity rewards want to trace the crucial path. In sequential-only trajectories, we will measure latency based mostly on the overall variety of tokens generated. To increase this to parallel trajectories, we will deal with the crucial path, or the longest sequence of tokens which can be causally dependent, as this straight determines our end-to-end era time (i.e., wall-clock time). For example, when there are two

Determine 12: Essential Path Size Illustration

The purpose is to attenuate the size of the crucial path. Concurrently, we’d nonetheless just like the mannequin to be spending tokens exploring threads in parallel. To mix the 2 goals, we will deal with making the crucial path a smaller fraction of the overall tokens spent. Authors of ThreadWeaver (Lian et al., 2025) framed the parallelization reward as $1 – L_{mathrm{crucial}} / L_{mathrm{complete}}$, which is 0 for a sequential trajectory, and will increase linearly because the crucial path will get smaller in comparison with the overall tokens generated.

Parallel effectivity ought to be gated by correctness. Intuitively, when a number of trajectories are appropriate we should always assign extra reward to the trajectories which can be extra environment friendly at parallelization. However how about when they’re all incorrect? Ought to we assign any reward in any respect? Most likely not.

To formalize this, $R = R_{mathrm{correctness}} + R_{mathrm{parallel}}$. Assuming binary final result correctness, this may be written as $R = mathbf{1}(textual content{Correctness}) + mathbf{1}(textual content{Correctness}) instances (textual content{some parallelization metric})$. This fashion, a mannequin solely will get a parallelization reward when it solutions appropriately, since we don’t need to pose parallelization constraints on the mannequin if it couldn’t reply the query appropriately.

Determine 13: Variations in Reward Designs Throughout Adaptive Parallel Reasoning Works

Analysis and Open Questions

When all is alleged and completed, how properly do these adaptive parallel strategies truly carry out? Properly…it is a onerous query, as they differ in mannequin selection and metrics. The mannequin choice depends upon the coaching technique, SFT drawback issue, and sequence size. When operating SFT on troublesome datasets like s1k, which comprises graduate-level math and science issues, researchers selected a big base mannequin (Qwen2.5 32B for Multiverse (Yang et al., 2025)) to seize the advanced reasoning construction behind the answer trajectories. When operating RL, researchers selected a small, non-CoT, instruct mannequin (4B, 8B) as a consequence of compute value constraints.

Determine 14: Distinction in Mannequin Alternative Throughout Adaptive Parallel Reasoning Papers

Every paper additionally presents a barely completely different interpretation about how adaptive parallel reasoning contributes to the analysis subject. They optimize for various theoretical goals, so that they use barely completely different units of metrics:

- Multiverse and ThreadWeaver (Yang et al., 2025; Lian et al., 2025) intention to ship sequential-AR-model-level accuracy at sooner speeds. Multiverse reveals that APR fashions can obtain larger accuracy below the identical fastened context window, whereas ThreadWeaver reveals that the APR mannequin achieves shorter end-to-end token latency (crucial path size) whereas getting comparable accuracy.

- NPR (Wu et al., 2025) treats sequential fallback as a failure mode and optimizes for 100% Real Parallelism Charge, measured because the ratio of parallel tokens to complete tokens.

- Parallel-R1 (Zheng et al., 2025) doesn’t deal with end-to-end latency and as an alternative optimizes for exploration variety, presenting APR as a type of mid-training exploration scaffold that gives a efficiency enhance after RL.

Open Questions

Whereas Adaptive Parallel Reasoning represents a promising step towards extra environment friendly inference-time scaling, important open questions stay.

As famous above, Parallel-R1 (Zheng et al., 2025) presents APR as a type of mid-training exploration scaffold reasonably than a primarily inference-time method. This invitations a extra basic query: Does parallelization at inference-time constantly enhance accuracy, or is it primarily useful as a training-time exploration scaffold? Parallel-R1 means that the range induced by parallel construction throughout RL could matter greater than the parallelization itself at take a look at time.

A associated concern is stability. There’s additionally a persistent tendency for fashions to break down again to sequential reasoning when parallelization rewards are relaxed. Parallel-R1 authors confirmed that eradicating parallelization reward after 200 steps leads to the mannequin reverting to sequential conduct. Is that this a coaching stability problem, a reward sign design problem, or proof that parallel construction genuinely conflicts with how autoregressive pretraining shapes the mannequin’s prior?

Past whether or not APR works, deployment introduces its personal questions. Can we design coaching strategies that account for out there compute finances at inference time, so parallelization selections are hardware-aware reasonably than purely problem-driven?

Lastly, the parallel buildings thought-about above are primarily flat. What if we enable parallelization depth > 1? Recursive language fashions (RLMs; Zhang, Kraska and Khattab, 2026) successfully handle lengthy context and present promising inference-time scaling capabilities. How properly do RLMs carry out when skilled with end-to-end RL that incentivizes adaptive parallelization?

Acknowledgements

We thank Nicholas Tomlin and Alane Suhr for offering us with useful suggestions. We thank Christopher Park, Karl Vilhelmsson, Nyx Iskandar, Georgia Zhou, Kaival Shah, and Jyoti Rani for his or her insightful solutions. We thank Vijay Kethana, Jaewon Chang, Cameron Jordan, Syrielle Montariol, Erran Li, and Anya Ji for his or her useful discussions. We thank Jiayi Pan, Xiuyu Li, and Alex Zhang for his or her constructive correspondences about Adaptive Parallel Reasoning and Recursive Language Fashions.