Hapag-Lloyd is one of the world’s leading liner shipping companies, operating a modern fleet of 313 container ships with a total transport capacity of 2.5 million TEU (Twenty-foot Equivalent Unit, a standard unit of measurement for cargo capacity in container shipping). The company maintains a container capacity of 3.7 million TEU, which includes one of the industry’s largest and most modern fleets of reefer containers. With roughly 14,000 employees in the Liner Shipping Segment and more than 400 offices spread across 140 countries, Hapag-Lloyd maintains a strong global presence. Through 133 liner services worldwide, we facilitate reliable connections between more than 600 ports across the continents.

The company’s Digital Customer Experience and Engineering team, distributed between Hamburg and Gdańsk, drives digital innovation by developing and maintaining customer-facing web and mobile products.

Over the past years, the Digital Customer Experience and Engineering team has evolved from a delivery-focused channel into a true digital product driver, with strong customer focus, engineering excellence, and measurable business impact. We take end-to-end ownership of our digital products, combining customer-centric innovation with engineering craft to directly support growth and business outcomes. Building on a modern, independently owned tech stack and a high level of engineering maturity, we’re committed to staying at the forefront of technology. Now, we’re taking the next step by moving toward becoming AI-native, investing heavily in artificial intelligence as a core capability. This journey is about amplifying powerful engineering with AI to build smarter products, faster innovation, and greater customer value.

Understanding user impact

Until now, our customer feedback analysis process had largely been manual and reactive. Especially ahead of review ceremonies, manually analyzing customer feedback could take hours, sometimes days, when hundreds of ratings and comments needed to be reviewed. Every two weeks, Product Managers exported customer feedback data as CSV files, read through large volumes of comments, and manually categorized sentiment and themes. Although this work was valuable and deeply connected to product decisions, it was also repetitive, time-consuming, and difficult to scale, limiting flexibility whenever faster or deeper insights were needed.

With our generative AI solution, we fundamentally changed this approach. Instead of manually aggregating and interpreting feedback, we now automate the entire workflow: collecting customer comments, extracting sentiment, identifying themes, and surfacing actionable insights. Product Managers and teams can focus less on operational analysis and more on strategy, innovation, and creating exceptional user experiences.

In this post, we walk you through our generative AI-powered feedback analysis solution built using Amazon Bedrock, Elasticsearch, and open-source frameworks like LangChain and LangGraph. Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models from leading AI companies such as AI21 Labs, Anthropic, Cohere, DeepSeek, Luma, Meta, Mistral AI, and Amazon through a single API, along with a broad set of capabilities you need to build generative AI applications with security, privacy, and responsible AI. With this solution, you can automatically ingest customer comments, generate rich summaries, and deliver targeted insights. This allows our product teams to make faster, smarter decisions and drive continuous improvement.

We cover the architecture and implementation of this solution, demonstrating how using generative AI foundations, such as orchestration, data management, security, and privacy, allowed us to rapidly build a scalable, production-ready feedback processing pipeline.

Solution overview

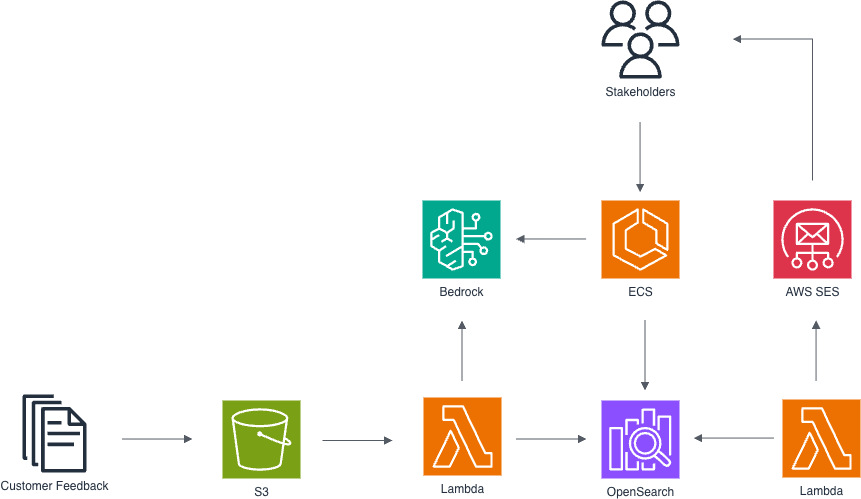

AWS architecture for automated feedback processing and analysis, using Lambda functions for data ingestion from Amazon S3, Amazon Bedrock for AI-powered insights accessed by stakeholders via Amazon ECS, and Elasticsearch for indexing and querying feedback data, with email notifications via SES.

The solution is built on an AWS architecture designed to address these challenges through scalability, maintainability, and security. It’s deployed using AWS CloudFormation.

- Continuous & Quarterly Feedback Collection

- Our web and mobile applications serve hundreds of thousands of customers every month.

- Users can leave a rating plus text comments, helping us understand user experience and improve services.

- Daily Feedback Ingestion & Processing

- An AWS Lambda function runs once per day to fetch the new feedback entries.

- We use Amazon Bedrock to classify sentiment (positive, negative, mixed, or neutral) for each open comment, streamlining downstream analysis.

- Processed records are indexed in Amazon OpenSearch Service, serving both as our full-text search engine and vector database.

- Interactive Feedback Exploration via OpenSearch Service

- Stakeholders can access real-time feedback insights through OpenSearch Dashboards, giving them a bird’s-eye view of user sentiment, ratings, and trends over time.

- Starting with high-level visualizations, such as sentiment distribution, rating scores, and feedback volume, users can drill down into specific applications, features, or even individual comments.

- Dashboards support filtering by time period, comment sentiment, product version, and more, enabling targeted root cause analysis.

- For example, a Product Manager can visualize how sentiment around a recent app update changed week over week, or zoom into negative comments mentioning a specific feature.

- AI-Powered Internal Chatbot

- Our stakeholder-facing chatbot queries the OpenSearch index as its knowledge base.

- We use Amazon Bedrock Guardrails to enforce safety and reliability and make sure responses align with our brand and compliance standards.

- Product managers and support teams can ask natural-language questions, for example, “What pain points do customers mention most often?” and receive instant, context-rich answers.

- Biweekly Insights Report

- Every two weeks, a second Lambda function aggregates and analyzes the latest feedback trends.

- It generates a concise report with key metrics, highlights, and sentiment breakdowns.

- The report is automatically delivered to our Product Managers and Product Owners, feeding directly into sprint planning and roadmap discussions.
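To make the dashboard drill-down more concrete, the following Python sketch builds an OpenSearch query body that filters feedback by time period, sentiment, and product version, optionally matches a feature name in the comment text, and aggregates sentiment per week. The field names (`created_at`, `sentiment`, `product_version`, `comment`) and the helper `build_feedback_query` are assumptions for illustration, not the actual index schema.

```python
from datetime import date


def build_feedback_query(start, end, sentiment=None, product_version=None, text=None):
    """Build an OpenSearch query body for a dashboard-style drill-down."""
    # Always restrict results to the requested time window.
    filters = [
        {"range": {"created_at": {"gte": start.isoformat(), "lte": end.isoformat()}}}
    ]
    if sentiment:
        filters.append({"term": {"sentiment": sentiment}})
    if product_version:
        filters.append({"term": {"product_version": product_version}})

    bool_query = {"filter": filters}
    if text:
        # Full-text match on the free-text comment, for example a feature name.
        bool_query["must"] = [{"match": {"comment": text}}]

    return {
        "size": 20,
        "query": {"bool": bool_query},
        "aggs": {
            # Weekly sentiment breakdown, useful for before/after comparisons.
            "per_week": {
                "date_histogram": {"field": "created_at", "calendar_interval": "week"},
                "aggs": {"by_sentiment": {"terms": {"field": "sentiment"}}},
            }
        },
    }


# Negative comments from January 2025 that mention "preview".
query = build_feedback_query(
    date(2025, 1, 1), date(2025, 1, 31), sentiment="negative", text="preview"
)
```

The resulting dictionary could be passed as the request body of a search call against the feedback index. Filters go in the `filter` clause because they do not need relevance scoring.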

Generative AI Orchestration

Orchestration is a core foundation of our solution, because generative AI workflows often involve multiple steps that must be coordinated. In our pipeline, data ingestion and processing steps, such as sentiment analysis, embedding generation, and indexing, are orchestrated using LangChain, which provides modular, reusable components for calling models, transforming data, and integrating with external systems like Amazon OpenSearch Service. For our internal chatbot, we rely on LangGraph to implement a multi-agent architecture. Each assistant is defined declaratively in LangGraph, encapsulating its own logic and tools. This design makes assistants flexible and composable: instead of rigid step-by-step flows, we use an agent-based approach where an LLM selects the right tools and actions dynamically to answer user queries. This gives product managers and support teams a natural, interactive way to explore feedback and related operational insights.
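The agent-based idea can be illustrated without any framework. The sketch below is plain Python, not LangGraph code: it describes two hypothetical tools in the `toolConfig` shape used by the Amazon Bedrock Converse API and executes whichever tools the model requests in its `toolUse` blocks. The tool names, their stub bodies, and the helper functions are assumptions for illustration only.

```python
def search_feedback(query: str) -> dict:
    """Hypothetical tool: a real one would query the feedback index."""
    return {"query": query, "hits": []}


def rating_summary(application: str) -> dict:
    """Hypothetical tool: a real one would aggregate ratings."""
    return {"application": application, "average_rating": None}


TOOLS = {"search_feedback": search_feedback, "rating_summary": rating_summary}


def tool_config() -> dict:
    """Describe the tools in the shape the Converse API expects as `toolConfig`."""
    specs = {
        "search_feedback": ("Search customer comments.", "query"),
        "rating_summary": ("Summarize ratings for one application.", "application"),
    }
    return {"tools": [
        {"toolSpec": {
            "name": name,
            "description": description,
            "inputSchema": {"json": {
                "type": "object",
                "properties": {arg: {"type": "string"}},
                "required": [arg],
            }},
        }}
        for name, (description, arg) in specs.items()
    ]}


def run_requested_tools(assistant_message: dict) -> dict:
    """Execute every tool the model asked for and build the follow-up user message."""
    results = []
    for block in assistant_message["content"]:
        if "toolUse" not in block:
            continue  # plain text blocks need no action
        call = block["toolUse"]
        output = TOOLS[call["name"]](**call["input"])
        results.append({"toolResult": {
            "toolUseId": call["toolUseId"],
            "content": [{"json": output}],
        }})
    return {"role": "user", "content": results}


# Example assistant turn in which the model chose the search tool.
message = {"role": "assistant", "content": [
    {"text": "Let me look that up."},
    {"toolUse": {"toolUseId": "t-1", "name": "search_feedback",
                 "input": {"query": "login"}}},
]}
reply = run_requested_tools(message)
```

In a full loop, `reply` would be appended to the conversation and sent back to the model until it stops requesting tools. A framework such as LangGraph manages that loop and the state between steps.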

Integration with Amazon Bedrock models is straightforward using LangChain’s native support. For example, our AI-powered internal chatbot uses the Claude Sonnet 4.6 model via Amazon Bedrock. We chose Claude Sonnet 4.6 because it offers frontier performance across coding and agentic workflows. The model excels in multi-turn conversational exchanges and agentic workflows, making it ideal for our internal chatbot, which requires reliable performance across single and multi-turn interactions with stakeholders. With its precise workflow management capabilities and ability to serve in both lead agent and subagent roles, Claude Sonnet 4.6 delivers the consistent conversational quality our product managers and support teams need when exploring feedback insights at scale. Additionally, we use a geographic Cross-Region Inference Service (CRIS) endpoint to seamlessly manage unplanned traffic bursts by distributing compute across multiple EU AWS Regions. This cross-Region capability keeps our feedback processing pipeline resilient during peak usage periods while maintaining consistent performance for our global stakeholder base. The model is configured with guardrails applied directly through the LangChain configuration.
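As a rough sketch of what attaching a guardrail to a model call involves, the following Python builds the arguments for the Bedrock Runtime `converse` operation directly, rather than through the LangChain wrapper described above. The helper names, the inference settings, and all identifiers passed in are assumptions. The model or inference profile ID is deliberately left as a parameter.

```python
def build_converse_request(model_id, guardrail_id, guardrail_version, question):
    """Assemble keyword arguments for the Bedrock Runtime `converse` call."""
    return {
        # For cross-Region inference, pass an inference profile ID here
        # instead of a plain model ID.
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": question}]}],
        # Illustrative values, not the settings used in production.
        "inferenceConfig": {"maxTokens": 1024, "temperature": 0.0},
        "guardrailConfig": {
            "guardrailIdentifier": guardrail_id,
            "guardrailVersion": guardrail_version,
            "trace": "enabled",
        },
    }


def ask(client, request):
    """Send the request and return (answer_text, blocked_by_guardrail)."""
    response = client.converse(**request)
    blocks = response["output"]["message"]["content"]
    text = "".join(block.get("text", "") for block in blocks)
    return text, response["stopReason"] == "guardrail_intervened"


# Usage with a real client (requires boto3 and AWS credentials):
#   import boto3
#   client = boto3.client("bedrock-runtime")
#   request = build_converse_request(
#       "<inference-profile-id>", "<guardrail-id>", "1",
#       "What pain points do customers mention most often?")
#   answer, blocked = ask(client, request)
```

Keeping request construction separate from the network call makes the guardrail settings easy to inspect and test without AWS access.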

Data Management

An AWS Lambda function runs once per day to fetch the new feedback entries from the feedback repository into Amazon S3, after which the data is categorized with semantic detection through Amazon Bedrock. The data is then indexed in Amazon OpenSearch Service, which serves as both our full-text search engine and vector database.
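The classification step can be sketched as follows. This is a minimal illustration, assuming the model is asked to reply with a small JSON object. The prompt wording, the fallback to `neutral` on unparseable output, and the document field names are assumptions rather than the production implementation.

```python
import json

SENTIMENTS = ("positive", "negative", "mixed", "neutral")

PROMPT = (
    "Classify the sentiment of the customer comment below as one of: "
    + ", ".join(SENTIMENTS)
    + '. Answer with JSON only, for example {"sentiment": "neutral"}.\n\nComment: '
)


def parse_sentiment(model_text: str) -> str:
    """Extract a valid label from the model output, falling back to 'neutral'."""
    try:
        label = str(json.loads(model_text).get("sentiment", "")).lower()
    except (json.JSONDecodeError, AttributeError):
        # Not JSON at all, or JSON that is not an object.
        return "neutral"
    return label if label in SENTIMENTS else "neutral"


def classify(client, model_id: str, comment: str) -> str:
    """Ask a Bedrock model (via the Converse API) for the sentiment of one comment."""
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": PROMPT + comment}]}],
        inferenceConfig={"maxTokens": 50, "temperature": 0.0},
    )
    return parse_sentiment(response["output"]["message"]["content"][0]["text"])


def to_document(entry: dict, sentiment: str, embedding: list) -> dict:
    """Shape one feedback entry for indexing (field names are assumptions)."""
    return {
        "comment": entry["comment"],
        "rating": entry["rating"],
        "sentiment": sentiment,
        "embedding": embedding,
    }
```

Validating the label against a fixed set keeps unexpected model output out of the index, so downstream filters and dashboards only ever see the four known values.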

Responsible AI

To use the solution responsibly, we implement safeguards using Amazon Bedrock Guardrails. This allows us to attach guardrails to an AI interaction, enforce content moderation policies, and make sure responses align with our brand and compliance standards.

Using AWS CloudFormation, we define guardrail policies as infrastructure as code, with configurations that help block harmful content.

Guardrails as Code: CloudFormation
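A guardrail definition of this kind might look as follows. The sketch assembles a CloudFormation template in Python containing a single `AWS::Bedrock::Guardrail` resource and serializes it to JSON. The resource name, guardrail name, blocked-message texts, and chosen filter strengths are illustrative assumptions, not the policies actually deployed.

```python
import json


def guardrail_template(name: str) -> dict:
    """Return a CloudFormation template with one AWS::Bedrock::Guardrail resource."""
    harmful = ["HATE", "INSULTS", "SEXUAL", "VIOLENCE", "MISCONDUCT"]
    filters = [
        {"Type": category, "InputStrength": "HIGH", "OutputStrength": "HIGH"}
        for category in harmful
    ]
    # Prompt-attack detection applies to input only, so output strength is NONE.
    filters.append(
        {"Type": "PROMPT_ATTACK", "InputStrength": "HIGH", "OutputStrength": "NONE"}
    )
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Resources": {
            "FeedbackGuardrail": {
                "Type": "AWS::Bedrock::Guardrail",
                "Properties": {
                    "Name": name,
                    "BlockedInputMessaging": "Sorry, I cannot help with that request.",
                    "BlockedOutputsMessaging": "Sorry, I cannot provide that answer.",
                    "ContentPolicyConfig": {"FiltersConfig": filters},
                },
            }
        },
        "Outputs": {
            "GuardrailId": {
                "Value": {"Fn::GetAtt": ["FeedbackGuardrail", "GuardrailId"]}
            }
        },
    }


template = guardrail_template("feedback-chatbot-guardrail")
template_json = json.dumps(template, indent=2)
```

Writing `template_json` to a file yields a template that can be deployed like any other CloudFormation stack, so guardrail changes go through the same review and versioning as the rest of the infrastructure.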

Programmatic Input Validation

We also use Amazon Bedrock Guardrails independently to validate raw user input before passing it to the LLM, helping prevent prompt injection and other misuse.
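One way to perform such a check is the Bedrock Runtime `apply_guardrail` operation, which evaluates text against a guardrail without invoking a model. The sketch below is a minimal illustration. The helper names, the refusal message, and the `call_llm` callable are assumptions.

```python
def is_input_allowed(client, guardrail_id: str, guardrail_version: str,
                     user_text: str) -> bool:
    """Return True when the guardrail does not intervene on the raw user input."""
    response = client.apply_guardrail(
        guardrailIdentifier=guardrail_id,
        guardrailVersion=guardrail_version,
        source="INPUT",  # evaluate the text as user input, not model output
        content=[{"text": {"text": user_text}}],
    )
    return response["action"] != "GUARDRAIL_INTERVENED"


def answer_safely(client, guardrail_id, guardrail_version, user_text, call_llm):
    """Forward input to the model (any callable) only after it passes validation."""
    if not is_input_allowed(client, guardrail_id, guardrail_version, user_text):
        return "Sorry, I cannot help with that request."
    return call_llm(user_text)


# Usage with a real client (requires boto3 and AWS credentials):
#   import boto3
#   client = boto3.client("bedrock-runtime")
#   answer_safely(client, "<guardrail-id>", "1", "user question", my_llm_call)
```

Because the check runs before the model is called, rejected input never reaches the LLM and incurs no model invocation.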

With this setup, we have created a safer, scalable, and explainable pipeline that puts generative AI to work, responsibly and effectively, across our product feedback lifecycle.

Monitoring

We monitor the components of the application using Amazon CloudWatch, which collects raw data and processes it into readable, near real-time metrics. We enabled model invocation logging to collect invocation logs, model input data, and model output data for the invocations, which captures the full request data, response data, and metadata associated with the calls. Amazon Bedrock also integrates with AWS CloudTrail, which captures API calls for Amazon Bedrock as events. This generates insights that you can use to optimize the applications further, such as improving response latency or reducing costs.
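Model invocation logging is an account-level setting on the Amazon Bedrock control plane. As a hedged sketch, the Python below builds a logging configuration that delivers text request and response data to a CloudWatch Logs group and applies it with `put_model_invocation_logging_configuration`. The helper names, the choice to log text data only, and the log group and role values are assumptions.

```python
def invocation_logging_config(log_group_name: str, role_arn: str) -> dict:
    """Build a logging configuration that sends invocation logs to CloudWatch Logs."""
    return {
        "cloudWatchConfig": {
            "logGroupName": log_group_name,
            # IAM role that Amazon Bedrock assumes to write to the log group.
            "roleArn": role_arn,
        },
        # Assumption: only text prompts and completions are logged here.
        "textDataDeliveryEnabled": True,
        "imageDataDeliveryEnabled": False,
        "embeddingDataDeliveryEnabled": False,
    }


def enable_invocation_logging(bedrock_client, log_group_name: str, role_arn: str) -> None:
    """Apply the configuration using the Bedrock control-plane client."""
    bedrock_client.put_model_invocation_logging_configuration(
        loggingConfig=invocation_logging_config(log_group_name, role_arn)
    )


# Usage with a real client (requires boto3 and AWS credentials).
# Note that this is the "bedrock" client, not "bedrock-runtime":
#   import boto3
#   enable_invocation_logging(
#       boto3.client("bedrock"), "<log-group-name>", "<logging-role-arn>")
```

Once enabled, the logged requests and responses can be queried alongside the CloudWatch metrics to investigate latency or cost outliers.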

Next Steps

The solution processes over 15,000 feedback items per month with an accuracy of 95% for sentiment classification on a labeled test dataset. Instead of spending hours reviewing raw feedback, teams can now receive clear, structured summaries in seconds that highlight the most important topics and recurring signals. This helps them move from information to action much faster, making decisions within days rather than weeks. Over time, the reports helped us understand not only when sentiment improved, but also why it didn’t. By continuously monitoring customer feedback, we can react quickly to early signals, adjust decisions, and correct course when needed. In several areas, actions taken based on these insights have already resulted in more positive comments and a noticeable reduction in negative feedback.

A strong example is the “Preview” functionality in Shipping Instructions. This feature was prioritized directly in response to a high volume of negative user feedback highlighting the lack of a preview capability. After its release, AI-driven reports allowed us to track user reactions in detail. Feedback related specifically to this feature showed that the previously frequent requests for a preview capability had been effectively resolved, demonstrating that the core user need had been addressed. At the same time, broader feedback continued to surface other areas for improvement across the application.

AI insights also guided future feature planning and prioritization. Based on user comments, we created new OpenSearch Service-based dashboards that help teams quickly verify and analyze issues reported by users. Another example is the ability to upload shipment data via Excel files, a repeatedly requested feature highlighted by AI feedback. This functionality is now fully available and is expected to significantly reduce manual effort, particularly for large shipments. During review sessions, stakeholders can now see top positive and negative comments in real time, alongside AI-generated recommendations, creating a far more informed and productive discussion.

This feedback analysis solution is one example of how we’re applying generative AI across our processes, and it marks the beginning, not the end, of our AI-native journey. Under our AI-Native Umbrella Program, which serves as a single source of truth for AI adoption, our next focus is to establish a shared, robust AI foundation with Amazon Bedrock. By providing standardized infrastructure, security, and guardrails, we aim to enable every role in the department, including engineering, product and delivery (PM, PO, SM), UX/design, and operations/support, to create their own AI “spaces” safely and independently while having access to best-in-class foundation models. This setup is designed to lower the barrier to experimentation, streamline discovery, and encourage hands-on exploration of generative AI use cases in day-to-day work. In doing so, we help teams move faster from ideas to impact, while maintaining consistency, accountability, and scalability across the AI initiatives.

If you want to scale your generative AI applications, you can get started by reading Architect a mature generative AI foundation on AWS, which dives deeper into the various foundational components that help accelerate the end-to-end generative AI application lifecycle.

About the authors