Amazon’s Finance Expertise (FinTech) groups construct and function techniques for Amazon groups to handle regulatory inquiries in compliance with completely different jurisdictions. These groups course of regulatory inquiries from authorities, every presenting completely different necessities, doc codecs, and complexity ranges.

Processing these regulatory inquiries entails reviewing documentation, extracting related data, retrieving supporting information from a number of techniques inside Amazon’s infrastructure, and compiling responses inside regulatory timeframes. As inquiry frequency and enterprise complexity grew, Amazon wanted a extra scalable method.

On this submit, we show how Amazon FinTech groups are utilizing Amazon Bedrock and different AWS providers to construct a scalable AI utility to remodel how regulatory inquiries are dealt with. Every crew utilizing this answer creates and maintains its personal devoted data base, populated with that crew’s particular paperwork and reference supplies.

Challenges

The size and complexity of managing regulatory inquiries offered a number of interconnected challenges:

Information fragmentation and retrieval complexity

Regulatory inquiries require synthesizing data from hundreds of historic paperwork. These paperwork exist in numerous codecs (PDF, PPT, Phrase, CSV) and comprise domain-specific terminology. Groups wanted a option to rapidly find related precedents and supporting data throughout this huge corpus whereas sustaining accuracy and regulatory compliance.

Conversational context and state administration

Regulatory inquiries require multi-turn conversations the place context from earlier interactions is important for producing correct responses. Sustaining conversational state throughout classes and monitoring response evolution as crew members refine solutions by way of iterative interactions presents important complexity.

Observability and steady enchancment

With generative AI techniques, understanding why a specific response was generated is as vital because the response itself. Groups required complete visibility into the retrieval course of, mannequin selections, and person interactions to determine areas for enchancment and preserve compliance with accountable AI rules. For instance, groups should detect when the mannequin hallucinates data that isn’t current in supply paperwork, or catch when the system retrieves outdated compliance tips that would result in regulatory violations. AI techniques expertise accuracy drift over time as fashions, prompts, and the doc corpus change, requiring steady monitoring.

Answer overview

To handle these challenges, Amazon FinTech crew constructed an clever regulatory response automation system utilizing Amazon Bedrock, AWS Lambda, and supporting AWS providers. The answer implements Retrieval Augmented Technology (RAG) with Amazon Bedrock Information Bases and Amazon OpenSearch Serverless for vector storage, enabling data retrieval from hundreds of historic paperwork. Actual-time chat interactions powered by Claude Sonnet 4.5 by way of the Converse Stream API, mixed with Amazon DynamoDB for dialog historical past administration, present contextually-aware multi-turn conversations. Complete observability by way of OpenTelemetry and self-hosted Langfuse ensures steady monitoring and enchancment of the AI system’s efficiency. The system doesn’t cache massive language mannequin (LLM) responses or intermediate outcomes as a result of regulatory inquiries are extremely contextual and are vulnerable to a low cache hit price.

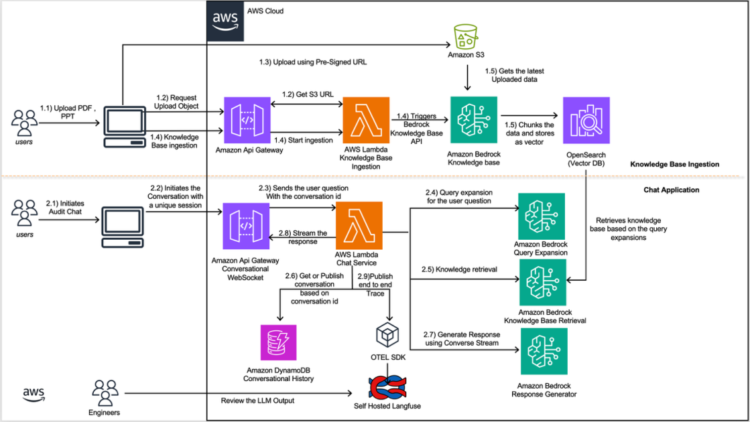

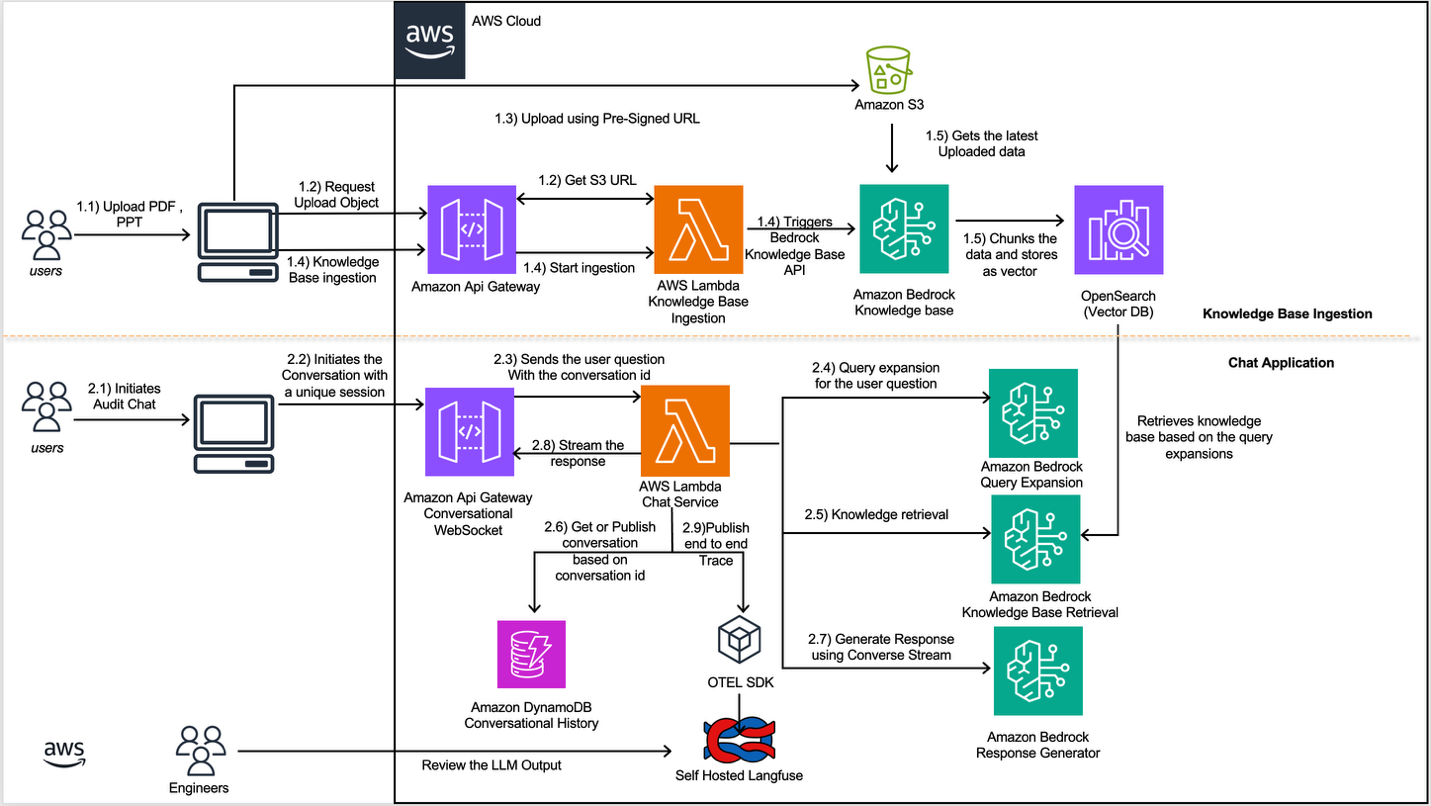

The next diagram exhibits how you need to use Amazon Bedrock Information Bases in a workflow, alongside Converse API and different instruments, to supply crucial data for regulatory inquiries:

Information base ingestion stream

The data base ingestion stream offers an automatic doc processing pipeline that initiates after the person uploads a doc. Its job is to embed the doc’s information into an Amazon Bedrock Information Base. Right here is the stream:

You should utilize the data base ingestion workflow to add paperwork in bulk and rework them into searchable vector embeddings by way of an automatic pipeline. The next detailed stream is illustrated within the earlier determine.

- Doc Add by Person: Customers add paperwork by way of the shopper utility.

- Pre-Signed URL Technology: The shopper utility sends a request to Amazon API Gateway, which invokes the data base ingestion AWS Lambda operate to generate a pre-signed S3 URL.

- Doc Add: The shopper utility makes use of the generated pre-signed URL to add the doc.

- Ingestion Set off and Knowledge Processing: After the doc is efficiently uploaded to Amazon Easy Storage Service (Amazon S3), the shopper utility triggers the Amazon API Gateway to provoke the doc processing AWS Lambda, which handles format conversion and manages the concurrent ingestion of paperwork. We don’t must pre-process the photographs, charts, and tables in these paperwork as a result of the Amazon Bedrock Information Base is configured with Amazon Bedrock Knowledge Automation (BDA) to successfully extract this multimodal content material. The AWS Lambda operate then calls the Amazon Bedrock Information Bases.

- Vector Storage: The Amazon Bedrock Information Base chunks the doc content material utilizing a hierarchical chunking technique, generates embeddings utilizing Amazon Titan Textual content Embeddings, and shops the ensuing vectors in OpenSearch Serverless. Hierarchical chunking creates nested parent-child relationships that mirror the sectioned construction of economic paperwork. This technique works effectively for structured and complicated paperwork as a result of it indexes small chunks for exact retrieval whereas returning bigger mum or dad chunks to supply adequate context for coherent responses.

Constructing an automatic ingestion pipeline addresses the core problem of data fragmentation by effectively processing hundreds of historic paperwork throughout a number of codecs whereas optimizing content material indexing for related AI responses. This parallelized method permits the system to scale successfully, accommodating the rising year-over-year regulatory inquiry exercise whereas sustaining constant processing efficiency throughout massive doc volumes.

Chat Utility

The Chat Utility offers a real-time dialog interface powered by AWS serverless structure, enabling pure language interactions with the system. We selected to stream responses to clients to allow them to start studying the AI response sooner in real-time, implementing this functionality by way of WebSocket connections. By these WebSocket connections and the Claude Sonnet 4.5 mannequin, it delivers contextually related responses whereas sustaining dialog state in DynamoDB. The workflow operates as follows:

- Provoke Chat Dialog: Customers provoke or open an present chat session by way of the shopper utility.

- WebSocket Connection: The appliance makes use of WebSockets to ascertain a persistent, bi-directional reference to Amazon API Gateway.

- Message Submission: The appliance posts the person questions by way of the WebSocket connection which is propagated to the Chat service AWS Lambda operate.

- Question Enhancement: The Chat Service AWS Lambda operate makes use of the Claude 3.5 Haiku mannequin with a question growth technique to generate a number of variations of the person’s query.

- Information Retrieval: The Chat Service Lambda invokes the Amazon Bedrock Information Bases Retrieve API for every expanded question. The API performs vector similarity searches in opposition to the underlying OpenSearch Serverless index and returns probably the most related doc chunks together with their supply metadata and relevance scores.

- Context Meeting: The Chat Service AWS Lambda operate retrieves dialog historical past from Amazon DynamoDB (for present conversations, based mostly on that particular dialog ID) and combines it with the retrieved data base outcomes and the person’s query.

- Response Technology: The Chat Service AWS Lambda operate makes use of the Converse Stream API with Claude Sonnet 4.5 and a response generator immediate to supply a contextually related reply based mostly on the assembled context.

- Person Engagement: The Chat Service AWS Lambda operate streams the generated response again to the shopper utility in Markdown format by way of the WebSocket connection and shops all of the dialog within the Conversational Historical past Desk by Amazon DynamoDb.

- Observability: All through the method, the Chat Service publishes end-to-end traces to a self-hosted Langfuse occasion utilizing the OpenTelemetry (OTEL) SDK. This captures detailed telemetry information together with latency metrics, token utilization, immediate templates, and mannequin responses.

Multi-turn conversational expertise

Regulatory inquiry discussions typically progress by way of a number of exchanges as groups refine responses and reference further information sources. To assist this iterative course of, the Amazon FinTech crew applied a multi-turn conversational workflow utilizing Amazon API Gateway (WebSocket APIs), AWS Lambda, and Amazon DynamoDB, built-in with the Amazon Bedrock ConverseStream API for low-latency, context-aware dialogue. Every chat session is securely authenticated by way of Amazon Cognito and assigned a singular dialog ID. DynamoDB shops messages in chronological order to protect context throughout classes, so customers can resume prior discussions seamlessly and preserve continuity.

When a person submits a question, the system sanitizes inputs to stop immediate injection assaults. After sanitization, the system classifies intent and determines whether or not retrieval from the Amazon Bedrock Information Base is required. This willpower is made by way of an LLM name that classifies the person question as both conversational or data intensive. For advanced, knowledge-intensive questions, the workflow employs a question growth technique that addresses the prevalent use of acronyms and abbreviated questions by customers. This layer generates as much as 5 question variations utilizing Claude 3.5 Haiku, then makes parallel Retrieve API calls to the Information Base, retrieving related outcomes utilizing OpenSearch vector similarity search. To keep up efficiency at scale, the workflow implements parallel processing for these retrieval calls utilizing multi-threading. This optimization lowered retrieval latency from 10 seconds (sequential processing) to beneath 2 seconds, enabling responsive conversations. The retrieved data—mixed with current dialog historical past—is handed to Claude Sonnet 4.5 by way of the ConverseStream API augmented with Amazon Bedrock Guardrails, that implement delicate data filters to routinely detect and take away PII and monetary information from each inputs and outputs. That is crucial for safeguarding regulatory documentation. When immediate injection makes an attempt are detected, the system responds with “Sorry, the mannequin can’t reply that query,” maintaning safe and compliant interactions whereas sustaining conversational fluency.

This structure delivers continuity, transparency, and scalability. Customers obtain real-time, streaming responses with standing updates all through the retrieval and era phases, bettering engagement and lowering latency. Persistent logs in DynamoDB present an immutable audit path for compliance evaluate, whereas the serverless and event-driven design scales routinely to assist concurrent classes. Collectively, these capabilities allow Amazon FinTech crew to conduct advanced, iterative conversations—producing contextually related, safe, and regulatory-compliant responses powered by Amazon Bedrock.

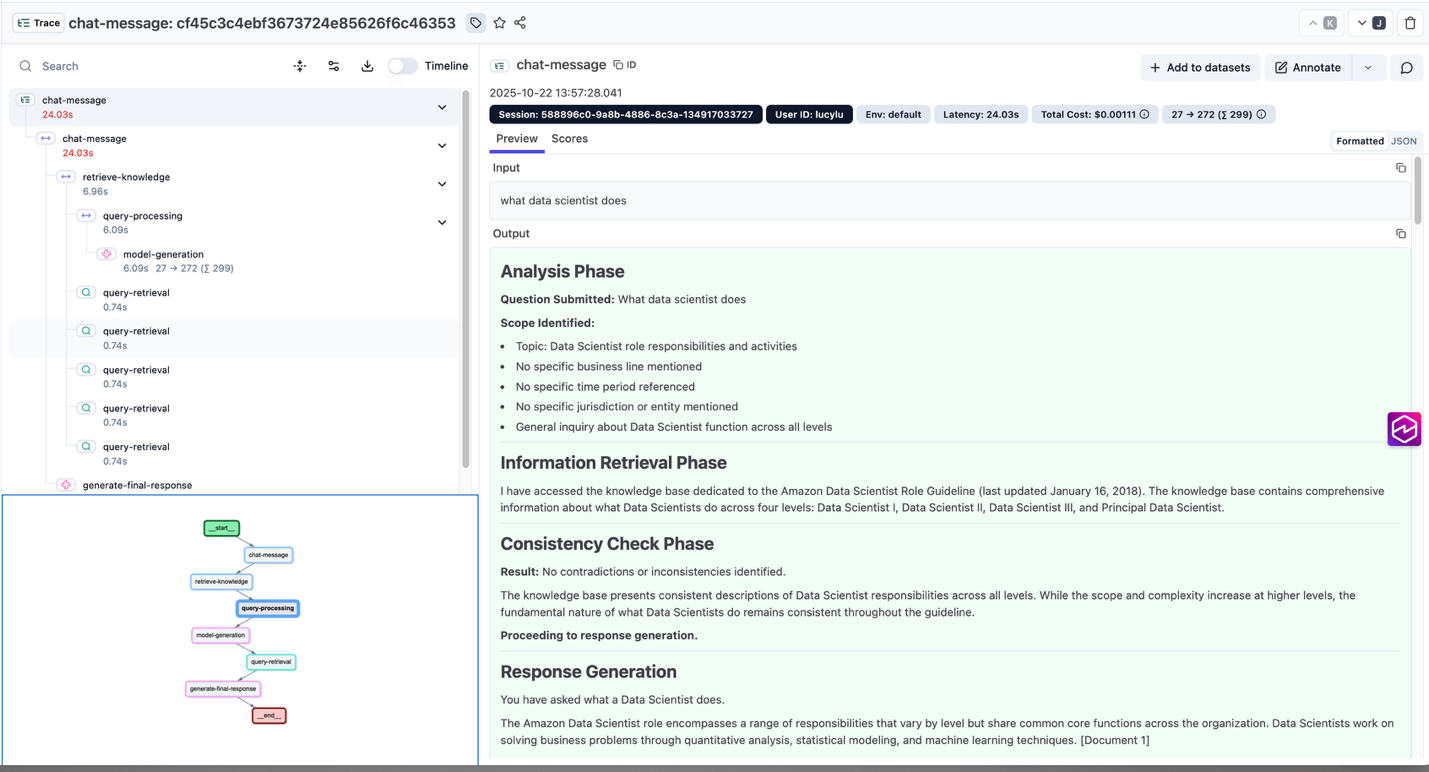

Observability

Observability performs a crucial function in understanding and bettering AI-driven workflows. To realize full visibility into the regulatory inquiry response system, the Chat Service AWS Lambda built-in OpenTelemetry (OTEL) with a self-hosted Langfuse occasion to seize detailed, end-to-end traces of every interplay. This setup offers engineers and utilized scientists with fine-grained telemetry on how prompts are processed, data is retrieved, and responses are generated. This allows almost steady refinement of the system’s efficiency and accuracy. The choice to make use of OTEL over the native Langfuse SDK offers vendor-neutral flexibility, permitting telemetry information to be routed to a number of observability backends and tailored to evolving monitoring necessities.

At runtime, every stage of the Chat Service AWS Lambda is manually instrumented utilizing the OTEL Java SDK to file latency, token utilization, mannequin selections, and immediate metadata in OTEL Generative AI semantic commonplace. Spans are revealed to Langfuse in close to actual time, giving the crew a clear view of how the Amazon Bedrock ConverseStream API, Information Base retrieval, and Claude Sonnet 4.5 work together inside a single request. The detailed telemetry permits the crew to determine efficiency bottlenecks, optimize immediate methods, and improve retrieval precision whereas sustaining accountable AI practices.

This observability framework maintains belief and accountability within the system’s conduct. Engineers can correlate person actions with mannequin outcomes, hint information lineage throughout a number of providers, and fine-tune configurations with out disrupting operations. By combining OpenTelemetry’s interoperability with Langfuse’s visualization and analytics, Amazon FinTech crew positive factors a scalable, extensible basis for evaluating generative AI techniques at scale—turning each interplay into actionable perception for steady enchancment.

The next screenshot illustrates an end-to-end hint captured in Langfuse, showcasing how the observability answer captures the whole workflow—from question growth and data retrieval to mannequin prompts, responses, and latency metrics. It additionally highlights supply doc citations, providing a clear view of how contextual data flows by way of the system throughout response era

Reference: Finish-to-Finish Hint Posted in Langfuse

Conclusion

On this submit, you noticed how Amazon FinTech crew constructed a scalable AI answer utilizing Amazon Bedrock, designed to assist regulatory inquiries by automating data retrieval, conversational workflows, and response era. By combining a doc ingestion pipeline, multi-turn stateful conversations, and detailed observability by way of OpenTelemetry and Langfuse, the structure empowers groups to deal with regulatory inquiries in ruled, traceable and compliant method.

As a result of your complete stack is constructed on AWS serverless providers, it provides the operational scalability, safety, and elasticity required for enterprise-grade deployment. Whether or not you’re coping with authorized compliance, regulatory inquiries, or high-volume inner data workflows, this sample provides a sensible basis which you can tailor and prolong to your corporation area.

In the event you’re able to modernize your knowledge-intensive processes with generative AI, discover the Amazon Bedrock documentation to find how one can start constructing your individual safe, ruled, and scalable AI-powered workflows.

Concerning the authors