This post is co-written by Shawn Tsai from TrendMicro.

Delivering relevant, context-aware responses is essential for customer satisfaction. For enterprise-grade AI chatbots, understanding not only the current query but also the organizational context behind it is key. Company-wise memory in Amazon Bedrock, powered by Amazon Neptune and Mem0, provides AI agents with persistent, company-specific context, enabling them to learn, adapt, and respond intelligently across multiple interactions. TrendMicro, one of the largest antivirus software companies in the world, developed the Trend’s Companion chatbot so that its customers can explore information through natural, conversational interactions (learn more).

TrendMicro aimed to enhance its AI chatbot service to deliver personalized, context-aware support for enterprise customers. The chatbot needed to retain conversation history for continuity, reference company-specific knowledge at scale, and ensure that memory remained accurate, secure, and up to date. The challenge lies in integrating long-term memory for organizational knowledge with short-term memory for ongoing conversations, while supporting company-wide knowledge sharing. In collaboration with the AWS team, including the AWS Generative AI Innovation Center, TrendMicro addressed this challenge using Amazon Neptune, Amazon OpenSearch Service, and Amazon Bedrock, as we describe in this blog post.

Solution overview

TrendMicro implemented company-wise memory in Amazon Bedrock by combining multiple services. Amazon Neptune stores a company-specific knowledge graph, representing organizational relationships, processes, and data to enable precise and structured retrieval. Mem0 manages short-term conversational memory for immediate context and long-term memory for persistent knowledge across sessions. Amazon Bedrock orchestrates the AI agent workflows, integrating with both Neptune and Mem0 to retrieve and apply contextual knowledge during inference. This architecture allows the chatbot to recall relevant history, retrieve structured company knowledge, and respond with tailored, context-rich answers, helping to significantly improve the user experience.

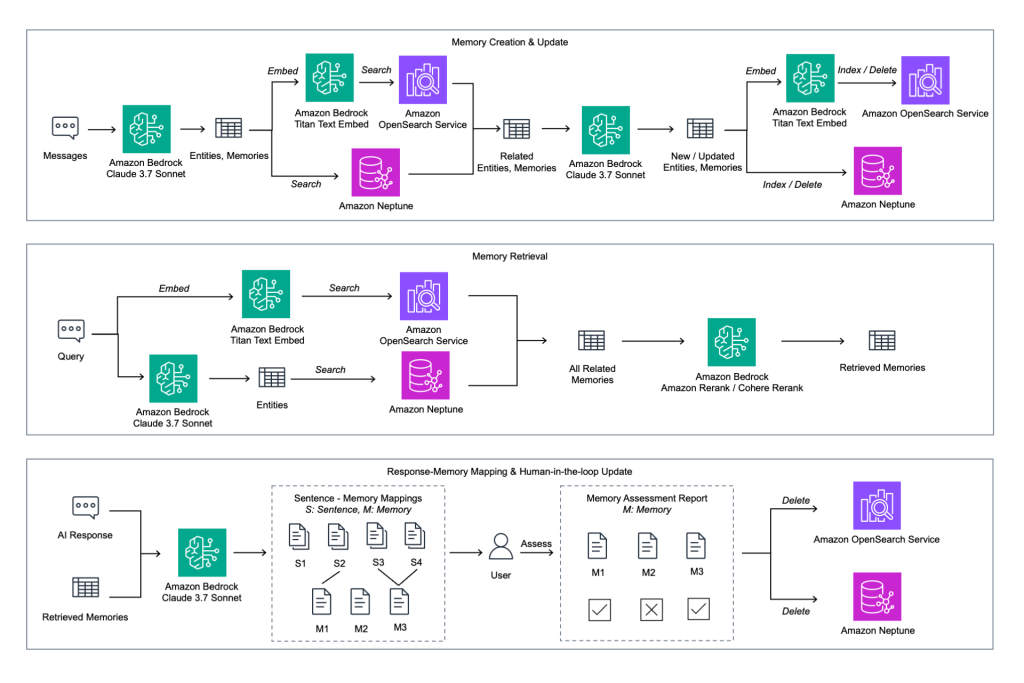

Memory creation and update

The architecture begins with capturing user messages and extracting entities, relationships, and potential memories using the Claude model on Amazon Bedrock. These are then embedded with the Amazon Titan Text Embeddings model on Amazon Bedrock and searched against both Amazon OpenSearch Service and Amazon Neptune. Relevant entities and memories are retrieved and updated by the model before being re-embedded and indexed back into OpenSearch Service and Neptune. This closed-loop process ensures that entity-related memories can be continuously refreshed and that the knowledge graph in Neptune stays consistent with conversational insights.
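
The closed loop described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the implementation used by TrendMicro: `MemoryStore`, `extract_triples`, and `update_memory` are hypothetical names, a dictionary and a set stand in for OpenSearch Service and Neptune, a line-based parser stands in for the Claude extraction step, and the embedding step is replaced by an exact lookup on subject and relation.

```python
from dataclasses import dataclass, field

Triple = tuple[str, str, str]  # (subject, relation, object)


@dataclass
class MemoryStore:
    """In-memory stand-in (assumption) for the vector index and the graph."""
    memories: dict[tuple[str, str], str] = field(default_factory=dict)
    triples: set[Triple] = field(default_factory=set)


def extract_triples(message: str) -> list[Triple]:
    """Toy extractor (assumption): reads 'subject | relation | object' lines.
    The post describes a Claude model on Amazon Bedrock doing this step."""
    found = []
    for line in message.splitlines():
        parts = [p.strip() for p in line.split("|")]
        if len(parts) == 3 and all(parts):
            found.append((parts[0], parts[1], parts[2]))
    return found


def update_memory(store: MemoryStore, message: str) -> list[Triple]:
    """Extract facts, find related stored facts, replace them, and write back."""
    written = []
    for subj, rel, obj in extract_triples(message):
        # Retrieve stored triples about the same subject and relation.
        stale = {t for t in store.triples if t[0] == subj and t[1] == rel}
        # Toy update policy (assumption): the newest statement wins.
        store.triples -= stale
        store.triples.add((subj, rel, obj))
        # Refresh the memory text so both stores stay consistent.
        store.memories[(subj, rel)] = f"{subj} {rel} {obj}"
        written.append((subj, rel, obj))
    return written


store = MemoryStore()
update_memory(store, "Acme | uses | Product A")
update_memory(store, "Acme | uses | Product B")
print(sorted(store.triples))
```

The second message replaces the first fact instead of piling up next to it, which is the property the closed loop is meant to provide. A real update policy would likely be decided by the model rather than by a fixed "newest wins" rule.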

Memory retrieval

When handling user queries, the system applies the same embedding pipeline with Bedrock Titan to search across both vector embeddings in OpenSearch Service and entity triples in Neptune. The relevant memories are then reranked using Amazon Bedrock Rerank or Cohere Rerank models so that the most contextually accurate information is delivered. This dual retrieval strategy provides both semantic flexibility from OpenSearch Service and structured precision from Neptune, enabling the chatbot to deliver highly relevant, context-aware answers.
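
A small sketch may help show how the two retrieval paths combine before reranking. Everything here is an assumption for illustration: `embed` is a toy bag-of-words vector standing in for a Titan embedding, `vector_search` and `graph_search` stand in for queries against OpenSearch Service and Neptune, and the rerank step reuses cosine similarity in place of a hosted rerank model.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words vector (assumption); stands in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def vector_search(query: str, memories: list[str], k: int = 2) -> list[str]:
    """Semantic path: top-k memories with a non-zero similarity to the query."""
    q = embed(query)
    hits = [(cosine(q, embed(m)), m) for m in memories]
    hits = [(score, m) for score, m in hits if score > 0]
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return [m for _, m in hits[:k]]


def graph_search(query: str, triples: list[tuple[str, str, str]]) -> list[str]:
    """Structured path: triples whose single-word subject or object is named in the query."""
    words = set(embed(query))
    return [f"{s} {r} {o}" for s, r, o in triples
            if s.lower() in words or o.lower() in words]


def retrieve(query: str, memories: list[str],
             triples: list[tuple[str, str, str]], k: int = 3) -> list[str]:
    """Merge both result sets, drop duplicates, then rerank against the query."""
    merged = vector_search(query, memories) + graph_search(query, triples)
    candidates = list(dict.fromkeys(merged))
    q = embed(query)
    # Toy reranker (assumption): cosine similarity as the relevance score.
    return sorted(candidates, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


memories = ["Kublai was a Mongol ruler", "The weather was mild"]
triples = [("Ilkhans", "acknowledged", "Kublai"), ("Acme", "uses", "Neptune")]
results = retrieve("Who acknowledged Kublai as ruler?", memories, triples)
print(results)
```

In this toy run the graph triple ranks above the free-text memory because it shares more of the query's terms relative to its length, and the unrelated memory and triple are never returned.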

Response-memory mapping and human-in-the-loop feedback

For each AI response, the system maps sentences to the specific memories referenced, producing a memory analysis report. Users are then given the opportunity to approve or reject these mappings. Approved memories remain part of the knowledge base, while rejected ones are removed from both OpenSearch Service and Neptune, so that only validated and trusted knowledge persists. This human-in-the-loop mechanism strengthens trust, helps continuously improve memory accuracy, and gives enterprise customers direct influence over the refinement of their AI’s knowledge.
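
The mapping and feedback steps can be illustrated with a short sketch. The function names `map_sentences` and `apply_feedback`, the word-overlap matching rule, and the `min_overlap` threshold are all assumptions made for this example; the post does not say how the real system decides which memory a sentence relies on, and here a dictionary stands in for both OpenSearch Service and Neptune.

```python
import re


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def map_sentences(response: str, memories: dict[str, str],
                  min_overlap: int = 3) -> dict[str, list[str]]:
    """Build a report of {sentence: [memory_id, ...]} using word overlap (assumption)."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", response) if s.strip()]
    report = {}
    for sentence in sentences:
        words = tokens(sentence)
        report[sentence] = [
            memory_id for memory_id, text in memories.items()
            if len(words & tokens(text)) >= min_overlap
        ]
    return report


def apply_feedback(memories: dict[str, str], decisions: dict[str, bool]) -> dict[str, str]:
    """Keep approved and unreviewed memories; drop the ones a user rejected."""
    return {memory_id: text for memory_id, text in memories.items()
            if decisions.get(memory_id, True)}


memories = {
    "m1": "Ilkhans acknowledged Kublai",
    "m2": "Kublai founded the Yuan dynasty",
}
response = ("Kublai was acknowledged by the Ilkhans as ruler. "
            "He also founded the Yuan dynasty.")
report = map_sentences(response, memories)
kept = apply_feedback(memories, {"m2": False})  # the user rejects m2
print(report)
print(kept)
```

Keeping the report keyed by sentence makes it straightforward to show each mapping to a reviewer, and treating unreviewed memories as kept is one possible default; a stricter deployment might require explicit approval instead.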

Amazon Neptune in action

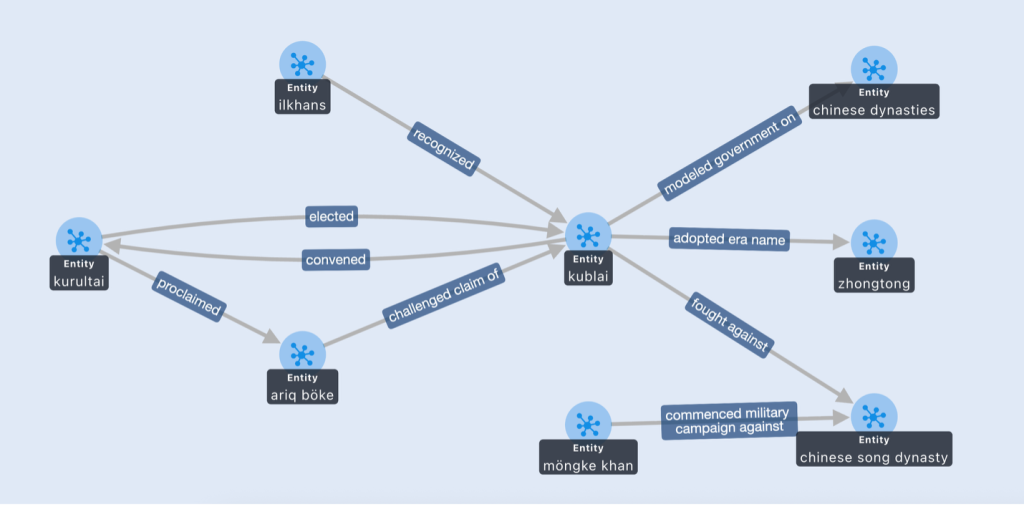

To illustrate how Amazon Neptune enriches chatbot memory, consider a customer asking, “Who acknowledged Kublai as ruler?” Without the knowledge graph, the AI might return a vague response such as: “Kublai was a Mongol ruler who gained recognition from different groups.” This kind of answer is generic and lacks precision.

When the same question is asked but the Neptune entity graph is queried and the results are placed into the large language model’s (LLM) context window, the model can ground its reasoning in structured triples like (Ilkhans, acknowledged, Kublai). The chatbot can then answer more accurately: “According to the organizational knowledge base, Kublai was acknowledged by the Ilkhans as ruler.” This before-and-after example demonstrates how structured entity relationships in Neptune allow the model to produce answers that are both contextually relevant and verifiable.
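
As a rough illustration of placing graph results into the context window, the sketch below formats retrieved triples into a prompt. The `build_prompt` helper and the prompt wording are hypothetical; the post does not show the actual prompt template.

```python
def build_prompt(question: str, triples: list[tuple[str, str, str]]) -> str:
    """Prepend retrieved triples to the question so the model can ground its answer."""
    if not triples:
        # No graph context available: fall back to the bare question.
        return f"Question: {question}"
    facts = "\n".join(f"({s}, {r}, {o})" for s, r, o in triples)
    return (
        "Facts from the organizational knowledge base:\n"
        f"{facts}\n\n"
        f"Question: {question}\n"
        "Answer using only the facts above and cite the fact you relied on."
    )


prompt = build_prompt(
    "Who acknowledged Kublai as ruler?",
    [("Ilkhans", "acknowledged", "Kublai")],
)
print(prompt)
```

Keeping the triples in their explicit (subject, relation, object) form lets a reviewer trace an answer back to the exact fact it relied on.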

Conclusion and next steps

As described in the AWS Trend Micro case study, Trend Micro uses AWS to help deliver more secure, scalable, and intelligent customer experiences. Building on this foundation, Trend Micro combines Amazon Bedrock, Amazon Neptune, Amazon OpenSearch Service, and Mem0 to create an AI chatbot with persistent, organization-specific memory that delivers intelligent, context-aware conversations at scale. By integrating graph-based knowledge with generative AI, Trend Micro expects to improve answer quality, delivering clearer and more accurate responses while establishing a foundation for AI systems that continuously adapt to evolving organizational knowledge. This work remains under evaluation and tuning to further enhance the end-user experience.

Looking ahead, TrendMicro is exploring future enhancements such as broader graph coverage, additional update pipelines, and multilingual support. For readers who want to dive deeper, we recommend exploring the GitHub sample implementation, which includes the source code we implemented, and the Amazon Neptune Documentation for further technical details and inspiration.

About the authors