This post was co-authored with Neevash Ramdial, Technical Advertising chief at Stream.

Building production-grade voice agents that feel natural and responsive is a complex engineering challenge. You must orchestrate speech-to-speech models, manage low-latency audio streaming, and handle the connection lifecycle. You also need to deliver consistent experiences across web, mobile, and desktop applications.

In this post, you learn how to combine Stream’s Vision Agents open-source framework with Amazon Bedrock and Amazon Nova 2 Sonic to build real-time voice agents that can be production-ready in minutes. You’ll learn how the integration works under the hood, walk through code examples, and explore advanced capabilities like function calling, automated reconnection, and multilingual voice support.

The problem

Building voice-enabled AI applications requires orchestrating multiple complex systems that must work together reliably. You face the challenge of managing real-time audio streaming infrastructure while simultaneously integrating speech recognition, language models, and text-to-speech services. Each of these has its own latency characteristics and failure modes. A typical voice interaction involves capturing audio from the user’s microphone, streaming it to a speech-to-text service, processing the transcript through a language model, generating a response, converting that response back to speech, and delivering it to the user. All of this must happen within a window of a few hundred milliseconds to feel natural. Delays in this pipeline can break the conversational flow and frustrate users.

Beyond the core AI pipeline, production voice applications must handle the messy realities of real-world deployment: unreliable network connections, browser compatibility issues, session timeouts, and graceful degradation when services become unavailable. You often spend more time building reconnection logic, managing WebRTC connections, and handling edge cases than on the actual AI capabilities. This infrastructure burden means teams either invest months building custom solutions or settle for limited off-the-shelf products that don’t meet their specific needs. Vision Agents abstracts the infrastructure complexity while providing the flexibility to customize the AI experience.

Solution overview

The solution brings together three key components:

- Amazon Nova 2 Sonic: a speech-to-speech foundation model available through Amazon Bedrock that provides real-time bidirectional audio streaming, native turn detection, and function calling capabilities. Nova 2 Sonic handles the complete speech-to-speech pipeline, accepting audio input and producing audio output. This avoids the need for separate STT and TTS services.

- Stream’s Vision Agents: an open-source Python framework for building real-time voice and video AI agents. It provides a plugin-based architecture with 25+ integrations, production deployment tooling, and client SDKs for React, iOS, Android, Flutter, and React Native. The system is designed with flexibility at its core. You can use Stream’s global edge network for efficient performance or integrate your preferred real-time communication (RTC) provider. Vision Agents handles provider-specific specifications through a clean decorator-based interface, enabling use cases like customer support agents, workflow automation, and API-driven actions with minimal boilerplate code. With Vision Agents, you can build AI applications using an open-source framework, third-party model providers, and telephony services.

- Stream’s Edge Network: a globally distributed edge network that typically delivers sub-500 ms join times and under 30 ms audio latency, providing the real-time transport layer between clients and your agent backend.

Together, these components create a complete stack: Stream handles the real-time media transport and client-side experience, Amazon Nova 2 Sonic provides the AI intelligence, and Vision Agents provides the glue code that ties them together.

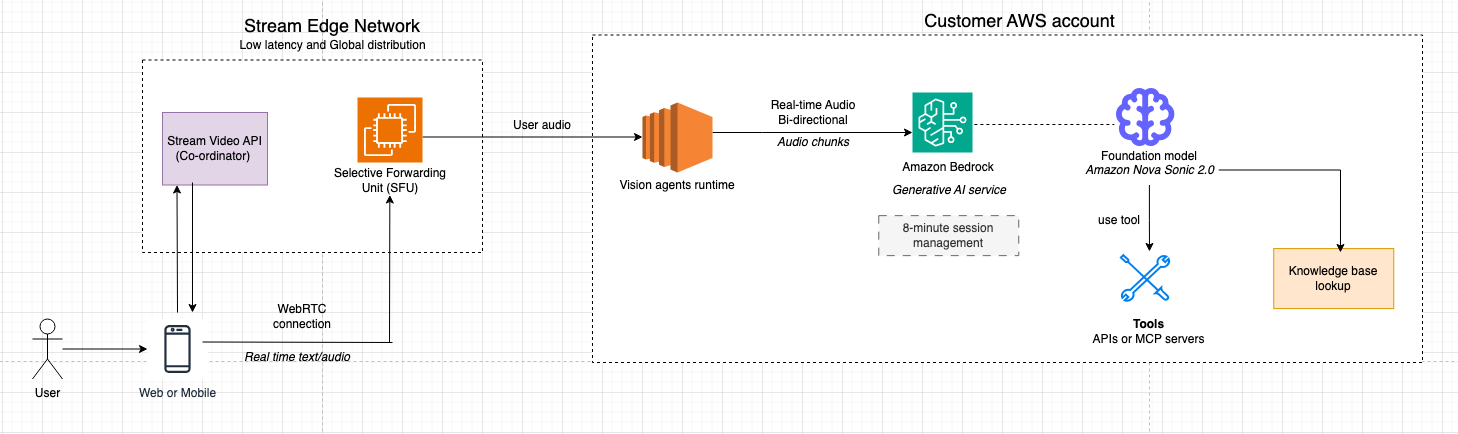

Architecture overview

The system is designed around a clean separation of concerns: Stream’s infrastructure handles the real-time media transport and client connectivity, while Amazon Nova Sonic runs in the customer’s own AWS account and provides the AI intelligence. This separation helps keep sensitive data and business logic within the customer’s control, while Stream’s globally distributed edge network delivers the low-latency media experience users expect.

Stream’s edge network acts as the media broker between end-user devices and the Vision Agent worker processes. When a user speaks, audio is captured, encrypted, and transmitted as RTP over UDP to the nearest Stream SFU (Selective Forwarding Unit). The SFU terminates the WebRTC connection, handles NAT traversal and bandwidth estimation, and forwards audio tracks to the Vision Agent worker as if it were another call participant. This means the agent integrates naturally into the call model. The agent is another peer, receiving and sending audio through the same infrastructure used by human participants.

Audio data flows bidirectionally through the system: incoming speech from the user is decoded to raw PCM by the Vision Agent worker and streamed to Amazon Nova Sonic via the Bedrock real-time API; response audio frames from Nova Sonic are re-encoded, packetized as RTP, and delivered back through the SFU to the user device. End-to-end latency is typically under 500 milliseconds. Voice activity detection (VAD) runs in the worker to detect speech boundaries and barge-in events, while echo cancellation in the browser helps prevent the agent’s own output from re-triggering the VAD loop.
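To make the idea of detecting speech boundaries on raw PCM more concrete, here is a minimal sketch of an energy-threshold detector. It is a simplified stand-in and not the Silero VAD model that the plugin installs. The function names, the threshold value, and the hangover length are all assumptions chosen for illustration.

```python
import math
import struct
from typing import Iterable, Iterator, Tuple


def frame_rms(frame: bytes) -> float:
    """Root-mean-square amplitude of a frame of 16-bit little-endian mono PCM."""
    count = len(frame) // 2
    if count == 0:
        return 0.0
    samples = struct.unpack(f"<{count}h", frame[: count * 2])
    return math.sqrt(sum(s * s for s in samples) / count)


def speech_events(
    frames: Iterable[bytes],
    threshold: float = 500.0,   # hypothetical energy threshold
    hangover_frames: int = 3,   # quiet frames tolerated before ending speech
) -> Iterator[Tuple[str, int]]:
    """Yield ("speech_start", i) and ("speech_end", i) at detected boundaries."""
    speaking = False
    quiet_run = 0
    for index, frame in enumerate(frames):
        if frame_rms(frame) >= threshold:
            quiet_run = 0
            if not speaking:
                speaking = True
                yield ("speech_start", index)
        elif speaking:
            quiet_run += 1
            if quiet_run >= hangover_frames:
                speaking = False
                yield ("speech_end", index)
```

In a pipeline like the one described here, a `speech_start` that arrives while the agent is still talking is the kind of signal that would be treated as a barge-in.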

Account boundaries

- Customer AWS account
  - Business logic and orchestration (agent policies, tools, data access).
  - Amazon Bedrock integration to access Amazon Nova models.

- Stream AWS account
  - Global WebRTC/SFU media plane, TURN/STUN, and signaling.
  - Vision Agent runtime (worker processes) that terminate WebRTC as bot peers and bridge to the customer’s Amazon Bedrock integration.

End-to-end media flow

1. User joins from web or mobile
   - The app embeds Stream’s audio client SDK, requests mic (and optionally camera), and joins a call type configured for AI participation.
   - Media is sent as RTP over UDP for predictable low latency and head-of-line-free delivery.

2. Regional SFU termination
   - A Stream SFU node in the closest region terminates the user’s WebRTC connection, handling bandwidth estimation, simulcast, and NAT traversal.
   - The SFU forwards the relevant audio tracks to the Vision Agent worker as if it were another participant.

3. Vision Agent worker
   - A dedicated Vision Agent worker process holds the PeerConnection state for that session.
   - It decodes audio to raw PCM and streams PCM frames to Amazon Bedrock, which forwards them to Amazon Nova 2 Sonic as a real-time session in the customer’s AWS account.

4. Amazon Nova 2 Sonic integration with Vision Agents through Amazon Bedrock
   - Amazon Nova 2 Sonic detects speech boundaries and performs speech-to-speech modeling (understanding, reasoning, and TTS) with optional tool calls into customer systems (RDS, APIs, knowledge bases).
   - It gracefully handles barge-in and maintains full conversational context so that the conversation stays natural and coherent.

5. Streaming response back to the user
   - As Amazon Nova Sonic produces response audio frames, the Vision Agent worker:
     - Slices and wraps them in RTP with monotonically increasing timestamps to avoid gaps and drift.
     - Sends RTP packets through the same WebRTC session via the SFU. The browser’s WebRTC stack decodes and plays audio with sub-500 ms latency.

6. Barge-in, transcripts, and side data
   - Echo cancellation in the browser helps prevent the agent’s own output from retriggering VAD.
   - When the user interrupts, new speech triggers an interrupt signal over an RTCDataChannel, causing the worker to stop forwarding Amazon Nova Sonic output and reset its local buffer.
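The response-streaming and barge-in steps can be sketched in a few lines. The sketch below slices buffered PCM into fixed-duration frames, stamps each with a timestamp that advances by the number of samples per frame, and drops unsent audio on an interrupt. It is an illustration only: it builds no real RTP headers and does no codec encoding, the `Packet` and `Packetizer` names are hypothetical, and the 20 ms frame duration is an assumption (the 24 kHz rate is the output format mentioned in this post).

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Packet:
    sequence: int
    timestamp: int   # in sample-clock units, as RTP timestamps are
    payload: bytes


@dataclass
class Packetizer:
    sample_rate: int = 24_000    # output rate mentioned in this post
    frame_ms: int = 20           # assumed packet duration
    bytes_per_sample: int = 2    # 16-bit mono PCM
    _buffer: bytearray = field(default_factory=bytearray, init=False)
    _sequence: int = field(default=0, init=False)
    _timestamp: int = field(default=0, init=False)

    @property
    def samples_per_frame(self) -> int:
        return self.sample_rate * self.frame_ms // 1000

    def push(self, pcm: bytes) -> List[Packet]:
        """Buffer PCM and emit every complete frame as a packet."""
        self._buffer.extend(pcm)
        frame_bytes = self.samples_per_frame * self.bytes_per_sample
        packets = []
        while len(self._buffer) >= frame_bytes:
            payload = bytes(self._buffer[:frame_bytes])
            del self._buffer[:frame_bytes]
            packets.append(Packet(self._sequence, self._timestamp, payload))
            # RTP uses a 16-bit sequence number and a 32-bit timestamp.
            self._sequence = (self._sequence + 1) % 2**16
            self._timestamp = (self._timestamp + self.samples_per_frame) % 2**32
        return packets

    def interrupt(self) -> int:
        """Barge-in: drop audio not yet sent and return the bytes dropped."""
        dropped = len(self._buffer)
        self._buffer.clear()
        return dropped
```

Because the timestamp keeps advancing across an interrupt instead of restarting, the receiver sees one continuous media clock even when buffered audio is discarded.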

This architecture might seem complex, but Vision Agents abstracts much of this complexity. Let’s see what the actual code looks like.

Prerequisites

Before getting started, make sure you have the following:

- AWS credentials configured through environment variables, an IAM role, or an AWS Command Line Interface (AWS CLI) profile. For production environments, use IAM roles attached to your compute resources instead of long-term credentials. For local development, use AWS CLI profiles (`aws configure`) or AWS SSO. Don’t commit .env files containing credentials to version control.

- Stream account with an Audio API key and secret (the account is expected to include 333,000 participant minutes per month at no additional cost).

- Python 3.12 or later installed.

- uv package manager installed (`pip install uv`).

- Vision Agents installed (`uv add vision-agents`).

Getting started

Step 1: Create a new project directory and install Vision Agents with the AWS plugin

```bash
mkdir voice-agent
cd voice-agent
uv init
uv add "vision-agents[getstream,aws]" python-dotenv
```

The vision-agents[aws] extra installs the Amazon Bedrock plugin along with its dependencies, including boto3, aws-sdk-bedrock-runtime, and Silero VAD for voice activity detection.

Step 2: Configure environment variables

Create a .env file in your project root to manage your configuration. For AWS credentials, we recommend pointing to your AWS_PROFILE in this file so the application can access your credentials when interacting with AWS resources. We don’t recommend storing your AWS access keys directly in this file.

For Stream API credentials, you should use a secrets management tool such as HashiCorp Vault or AWS Secrets Manager, but security considerations aren’t within the scope of this post.

```
# Stream API credentials
STREAM_API_KEY=test/getstream/api_key
STREAM_API_SECRET=test/getstream/api_secret

# AWS credentials
AWS_PROFILE=your_aws_profile_name
AWS_REGION=us-east-1
```

Vision Agents automatically discovers these environment variables at startup, so you don’t need to pass them explicitly to each client.
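Because these variables are read implicitly at startup, a missing value can surface later as a confusing connection error. One option is a small fail-fast check before launching the agent. The sketch below is not part of Vision Agents; the helper names are hypothetical, and treating `AWS_PROFILE` as optional (for example when an IAM role supplies credentials) is an assumption.

```python
import os
from typing import Iterable, List, Mapping

# Variable names taken from the .env example in this post.
REQUIRED_VARS = ("STREAM_API_KEY", "STREAM_API_SECRET", "AWS_REGION")


def missing_vars(
    env: Mapping[str, str], required: Iterable[str] = REQUIRED_VARS
) -> List[str]:
    """Return the required variable names that are unset or blank."""
    return [name for name in required if not env.get(name, "").strip()]


def check_environment(env: Mapping[str, str] = os.environ) -> None:
    """Raise a clear error before the agent starts if configuration is incomplete."""
    missing = missing_vars(env)
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
```

Calling `check_environment()` right after `load_dotenv()` would report every missing name in a single message.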

Step 3: Build your first voice agent

Create a main.py file with the following code:

```python
import asyncio

from dotenv import load_dotenv
from vision_agents.core import Agent, User, Runner
from vision_agents.core.agents import AgentLauncher
from vision_agents.plugins import aws, getstream

load_dotenv()


async def create_agent(**kwargs) -> Agent:
    agent = Agent(
        edge=getstream.Edge(),
        agent_user=User(name="Helpful Assistant", id="agent"),
        instructions="You are a helpful voice assistant. Be concise and friendly.",
        llm=aws.Realtime(
            model="amazon.nova-2-sonic-v1:0",
            region_name="us-east-1",
            voice_id="matthew",
        ),
    )
    return agent


async def join_call(agent: Agent, call_type: str, call_id: str, **kwargs) -> None:
    call = await agent.create_call(call_type, call_id)
    async with agent.join(call):
        # Give the session a moment to settle before the first prompt
        await asyncio.sleep(2)
        await agent.llm.simple_response(
            text="Greet the user warmly and ask how you can help."
        )
        await agent.finish()  # Run until the call ends


if __name__ == "__main__":
    Runner(AgentLauncher(create_agent=create_agent, join_call=join_call)).cli()
```

Step 4: Run the voice agent

Run the agent:

```bash
uv run main.py run
```

In fewer than 30 lines of code, you have a fully functional, real-time voice agent powered by Amazon Nova Sonic, accessible from a Stream client SDK.

Understanding the Amazon Bedrock integration

Let’s take a closer look at how the aws.Realtime plugin works under the hood.

Bidirectional streaming with Amazon Nova 2 Sonic

Amazon Nova 2 Sonic uses an event-driven bidirectional streaming API. Instead of using a request-response pattern, this approach allows nearly continuous audio to flow in both directions simultaneously. The Vision Agents AWS plugin manages this complexity through a structured event sequence:

- Session initialization: A `sessionStart` event is sent with inference configuration (temperature, max tokens, top-p).

- Prompt setup: A `promptStart` event configures the audio output format (24 kHz PCM), voice selection, and tool definitions.

- System instructions: System instructions are sent as a text content block with the `SYSTEM` role.

- Audio streaming: Microphone audio frames (~32 ms each) are streamed as `audioInput` events.

- Response streaming: Nova Sonic streams back `audioOutput` events with the generated speech.

- Session teardown: `promptEnd` and `sessionEnd` events cleanly close the connection.

Each content block follows a three-part pattern: contentStart → content payload → contentEnd. This hierarchical structure allows the model to maintain proper context throughout the interaction.
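As a rough illustration of that three-part pattern, the sketch below builds the events for a system-instruction text block. The helper name is hypothetical and the payloads are deliberately simplified: field names such as `promptName`, `contentName`, and `textInput` are assumptions here, and the real schema carries additional configuration fields, so treat the Amazon Nova documentation as the reference.

```python
import json
import uuid
from typing import Any, Dict, List


def system_text_block(prompt_name: str, text: str) -> List[Dict[str, Any]]:
    """Build contentStart -> payload -> contentEnd events for a system prompt."""
    content_name = str(uuid.uuid4())  # one identifier ties the three events together
    ids = {"promptName": prompt_name, "contentName": content_name}
    return [
        {"event": {"contentStart": {**ids, "type": "TEXT", "role": "SYSTEM"}}},
        {"event": {"textInput": {**ids, "content": text}}},
        {"event": {"contentEnd": dict(ids)}},
    ]


if __name__ == "__main__":
    for event in system_text_block("prompt-1", "Be concise and friendly."):
        print(json.dumps(event))
```

Sharing one content identifier across the opening, payload, and closing events is what lets the receiver group them into a single block.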

Here’s what the session start event looks like in the plugin:

```python
def _create_session_start_event(self) -> Dict[str, Any]:
    return {
        "event": {
            "sessionStart": {
                "inferenceConfiguration": {
                    "maxTokens": 1024,
                    "topP": 0.9,
                    "temperature": 0.7,
                }
            },
            "turnDetectionConfiguration": {
                "endpointingSensitivity": "MEDIUM"
            },
        }
    }
```

Adding function calling

One of the key capabilities of Amazon Nova 2 Sonic is native function calling during real-time conversations. This allows your voice agent to perform actions like querying databases, calling APIs, and triggering workflows while maintaining a natural spoken conversation.

Use the @llm.register_function decorator to define functions the model can call:

```python
import asyncio
from typing import Any, Dict

from dotenv import load_dotenv
from vision_agents.core import Agent, User, Runner
from vision_agents.core.agents import AgentLauncher
from vision_agents.plugins import aws, getstream

load_dotenv()


async def create_agent(**kwargs) -> Agent:
    agent = Agent(
        edge=getstream.Edge(),
        agent_user=User(name="Weather Assistant", id="agent"),
        instructions="""You are a helpful weather assistant. When users ask
        about weather, use the get_weather function to fetch current conditions.
        You can also help with simple calculations.""",
        llm=aws.Realtime(
            model="amazon.nova-2-sonic-v1:0",
            region_name="us-east-1",
        ),
    )

    @agent.llm.register_function(
        name="get_weather",
        description="Get the current weather for a given city"
    )
    async def get_weather(location: str) -> Dict[str, Any]:
        # In production, call a real weather API
        return {
            "city": location,
            "temperature": 72,
            "condition": "Sunny",
            "humidity": "45%"
        }

    @agent.llm.register_function(
        name="calculate",
        description="Perform a mathematical calculation"
    )
    def calculate(operation: str, a: float, b: float) -> dict:
        operations = {
            "add": lambda x, y: x + y,
            "subtract": lambda x, y: x - y,
            "multiply": lambda x, y: x * y,
            "divide": lambda x, y: x / y if y != 0 else None,
        }
        # Unknown operations and division by zero both yield None
        result = operations.get(operation, lambda x, y: None)(a, b)
        return {"operation": operation, "a": a, "b": b, "result": result}

    return agent


async def join_call(agent: Agent, call_type: str, call_id: str, **kwargs) -> None:
    await agent.create_user()
    call = await agent.create_call(call_type, call_id)
    async with agent.join(call):
        await asyncio.sleep(2)
        await agent.llm.simple_response(
            text="Greet the user and let them know you can check the weather."
        )
        await agent.finish()


if __name__ == "__main__":
    Runner(AgentLauncher(create_agent=create_agent, join_call=join_call)).cli()
```

How function calling works with Amazon Nova 2 Sonic

When the model decides to invoke a function, the following sequence occurs:

- Nova 2 Sonic emits a `toolUse` event containing the function name and arguments.

- The Vision Agents plugin intercepts this event, deserializes the arguments, and runs the registered Python function.

- The result is sent back to Nova via a `toolResult` event, wrapped in the usual `contentStart` → `toolResult` → `contentEnd` pattern.

- Nova 2 Sonic incorporates the function result into its response and continues the spoken conversation naturally.

You can build complex, multi-step workflows with this approach. For example, a voice agent could look up a customer record, check inventory, and place an order, all within a single natural conversation.
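The intercept, deserialize, and run steps can be pictured with a small dispatcher. This is a sketch and not the plugin’s actual implementation: `ToolRegistry` is a hypothetical class, and the `toolName`, `toolUseId`, and `content` keys are assumptions about the shape of the tool-use payload.

```python
import asyncio
import inspect
import json
from typing import Any, Callable, Dict


class ToolRegistry:
    """Maps tool names to Python callables (sync or async)."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}

    def register(self, name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
        def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
            self._tools[name] = fn
            return fn
        return decorator

    async def handle_tool_use(self, tool_use: Dict[str, Any]) -> Dict[str, Any]:
        """Run the named tool and return a toolResult-style payload."""
        try:
            # Arguments are assumed to arrive as a JSON string.
            args = json.loads(tool_use.get("content") or "{}")
            result = self._tools[tool_use["toolName"]](**args)
            if inspect.isawaitable(result):
                result = await result
            body = {"status": "success", "result": result}
        except Exception as exc:
            # Report failures to the model instead of crashing the session.
            body = {"status": "error", "message": str(exc)}
        return {"toolUseId": tool_use["toolUseId"], "content": json.dumps(body)}
```

Returning an error payload instead of raising gives the model a chance to apologize or retry in speech, which tends to matter more in a live call than in a text chat.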

Using the standard LLM with Amazon Bedrock

Beyond real-time speech-to-speech, the AWS plugin also provides a standard LLM integration via aws.LLM. This is useful for custom pipeline architectures where you want to pair an Amazon Bedrock model with separate STT and TTS providers:

```python
from vision_agents.core import Agent, User
from vision_agents.plugins import aws, getstream, cartesia, deepgram, smart_turn

agent = Agent(
    edge=getstream.Edge(),
    agent_user=User(name="Custom Pipeline Agent"),
    instructions="Be helpful and concise.",
    llm=aws.LLM(
        model="anthropic.claude-3-haiku-20240307-v1:0",
        region_name="us-east-1"
    ),
    tts=cartesia.TTS(),
    stt=deepgram.STT(),
    turn_detection=smart_turn.TurnDetection(
        buffer_duration=2.0,
        confidence_threshold=0.5
    ),
)
```

The standard LLM supports streaming responses through converse_stream(), full conversation history management, vision inputs for models like Claude, and multi-round tool calling with up to 3 rounds of function execution per request.
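A bounded tool-calling loop of this kind might look like the sketch below. It is a generic illustration and does not use the Bedrock API: the `model` argument is any callable that returns either a text reply or a tool request, and the message dictionaries are a hypothetical format invented for this example.

```python
from typing import Any, Callable, Dict, List

MAX_TOOL_ROUNDS = 3  # the per-request limit mentioned in this post

Message = Dict[str, Any]


def run_with_tools(
    model: Callable[[List[Message]], Message],
    tools: Dict[str, Callable[..., Any]],
    user_text: str,
    max_rounds: int = MAX_TOOL_ROUNDS,
) -> str:
    """Call the model, executing requested tools for at most max_rounds rounds."""
    messages: List[Message] = [{"role": "user", "text": user_text}]
    for _ in range(max_rounds):
        reply = model(messages)
        call = reply.get("tool_call")
        if call is None:
            return reply["text"]  # plain answer: no more tools needed
        result = tools[call["name"]](**call["args"])
        messages.append({"role": "tool", "name": call["name"], "result": result})
    # Rounds exhausted: ask once more and accept whatever text comes back.
    return model(messages).get("text", "")
```

Capping the rounds keeps a misbehaving model from looping on tool calls indefinitely, at the cost of occasionally cutting a long workflow short.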

Text-to-speech with Amazon Polly

For custom pipeline architectures, the AWS plugin also includes an Amazon Polly TTS integration. This is useful when you’re using a non-realtime LLM (like Claude on Amazon Bedrock or another provider) and need high-quality voice synthesis:

```python
from vision_agents.plugins import aws

tts = aws.TTS(
    region_name="us-east-1",
    voice_id="Joanna",
    engine="neural",  # 'standard' or 'neural'
    language_code="en-US"
)
```

Amazon Polly TTS supports both standard and neural engines, SSML input for fine-grained speech control, and multiple languages and voices. The neural engine produces more natural-sounding speech, making it a strong choice when you’re building a custom STT → LLM → TTS pipeline on AWS infrastructure.
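For the SSML input mentioned above, a small helper can wrap model output in markup before synthesis. The sketch below uses only the standard library; `to_ssml` is a hypothetical helper, and how SSML is passed to the `aws.TTS` plugin is not shown in this post, so that wiring is left out.

```python
from xml.sax.saxutils import escape


def to_ssml(text: str, pause_ms: int = 0, rate: str = "medium") -> str:
    """Wrap plain text in SSML with a speaking rate and an optional trailing pause."""
    # Escape &, <, and > so model output cannot break the markup.
    body = f'<prosody rate="{rate}">{escape(text)}</prosody>'
    if pause_ms > 0:
        body += f'<break time="{pause_ms}ms"/>'
    return f"<speak>{body}</speak>"


if __name__ == "__main__":
    print(to_ssml("Your order ships tomorrow.", pause_ms=300))
```

Escaping matters here because LLM output often contains characters such as `&` that would otherwise make the SSML document invalid.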

Clean up resources

To delete the Stream call and terminate running Vision Agent processes:

```bash
uv run main.py stop
```

Important: Amazon Bedrock charges apply for all API calls to Amazon Nova 2 Sonic. You can run the cleanup command to terminate sessions and avoid ongoing charges. Active sessions may continue to incur costs until explicitly terminated.

Use cases

With the technical foundation in place, it’s worth exploring where these capabilities translate into meaningful real-world impact. The combination of low-latency voice, conversation management, and tool integration opens up a wide range of applications across industries where natural spoken interaction can replace or augment traditional interfaces.

Use case 1: Voice interfaces for no-screen and low-attention environments

Vision Agents combined with Amazon Nova 2 Sonic is well suited to environments where users cannot reliably interact with a screen, such as driving, field service, logistics, healthcare, or on-site operations. In these contexts, voice becomes the primary interface, not a convenience feature.

- With Amazon Nova 2 Sonic, you get real-time, speech-to-speech interactions with low latency and natural turn-taking, allowing users to speak freely, interrupt responses, and correct themselves without breaking the flow.

- Vision Agents manages conversation state and task logic across turns, translating spoken input into structured actions like retrieving the next job assignment, updating task status, logging notes, or requesting human assistance.

Because the agent maintains context throughout the interaction, users can issue follow-up commands or clarifications without repeating information.

For example, a delivery driver can ask, “What’s my next stop?”, receive spoken directions, say “Mark the last delivery as complete,” and then follow up with “Call dispatch,” all without touching a screen, while the agent updates backend systems in real time.

Use case 2: High-volume inbound phone support at scale

Vision Agents combined with Amazon Nova 2 Sonic is designed for handling large volumes of inbound support calls where human agents become a bottleneck. This use case is fundamentally about scale: reducing queue times, deflecting repetitive requests, and reserving human agents for cases that require their involvement.

- With Amazon Nova 2 Sonic, callers can have low-latency, real-time speech-to-speech conversations and explain issues naturally instead of navigating scripted IVR trees.

- Vision Agents orchestrates intent detection, conversation state, and backend integrations, such as order systems, account records, or ticketing services, so common requests can be resolved automatically within the call.

When an issue falls outside predefined confidence thresholds or requires manual intervention, the agent escalates to a human with structured context attached, so callers don’t need to repeat themselves.

During peak hours, hundreds of customers might call asking about delivery delays. Instead of waiting in a queue, callers are immediately answered by a voice agent that checks order status, explains the delay, offers next steps, and only routes to a live agent if an exception is detected.

This turns the phone system from a queue-based cost center into a continuous, first-line resolution layer.
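The escalation step can be sketched as a small decision function that either lets the agent continue or produces a structured handoff record. Everything in this example is hypothetical: the `CallState` fields, the 0.6 threshold, and the five-line transcript window are assumptions for illustration and are not part of Vision Agents.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class CallState:
    caller_id: str
    intent: str
    confidence: float                     # hypothetical score in [0, 1]
    transcript: List[str] = field(default_factory=list)
    facts: Dict[str, Any] = field(default_factory=dict)


def escalation_payload(
    state: CallState, threshold: float = 0.6
) -> Optional[Dict[str, Any]]:
    """Return handoff context when confidence is too low, otherwise None."""
    if state.confidence >= threshold:
        return None  # the agent keeps handling the call
    return {
        "caller_id": state.caller_id,
        "intent": state.intent,
        "confidence": state.confidence,
        "facts": dict(state.facts),
        "recent_transcript": state.transcript[-5:],  # last few turns only
    }
```

Passing the collected facts and recent turns along with the transfer is what spares the caller from starting over with the human agent.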

Conclusion

This post walked through how to build real-time voice agents using Stream’s Vision Agents framework and Amazon Bedrock with Amazon Nova 2 Sonic. We covered the architecture, the bidirectional streaming protocol, automated reconnection handling, function calling, multilingual support, and production deployment.

The combination of Stream’s low-latency edge network and Amazon Nova Sonic’s native speech-to-speech capabilities provides a strong foundation for building voice AI applications. The Vision Agents framework abstracts the complex orchestration of connection lifecycle management, audio encoding, VAD-aware reconnection, and tool execution, so you can focus on your agent’s logic and user experience.

If you’re ready to explore further, we encourage you to try extending your agent with custom functions for your specific use case or explore the multilingual capabilities for global applications. The Vision Agents repository at https://github.com/GetStream/Vision-Agents is a good place to start. You’ll find additional examples, plugin documentation, and community discussions. For deeper integration details, the AWS plugin documentation is available at https://visionagents.ai/integrations/aws-bedrock, and the Amazon Nova 2 Sonic documentation in the AWS Nova User Guide provides a comprehensive reference for the bidirectional streaming API. You can sign up for a Stream developer account at https://getstream.io/ and start building today at no additional cost.

About the authors