a distributed multi-agent system in both OpenClaw and AWS AgentCore for some time now. My OpenClaw setup alone has an analysis agent, a writing agent, a simulation engine, a heartbeat scheduler, and several others. They collaborate asynchronously, hand off context through shared files, and maintain state across sessions spanning days or even weeks.

Once I bring in other agentic systems like Claude Code or the agents I’ve deployed in AgentCore, coordination, memory, and state all become harder to solve for.

Eventually, I came to a realization: most of what makes these agents actually work isn’t the model choice. It’s the memory architecture.

So when I came across “Memory for Autonomous LLM Agents: Mechanisms, Evaluation, and Emerging Frontiers” (arxiv 2603.07670), I was curious whether the formal taxonomy matched what I’d built by feel and iteration. It does, fairly closely. It also codifies a lot of what I had learned on my own and helped me see that some of my current pain points aren’t unique to me and are being seen more broadly.

Let’s walk through the survey and discuss its findings as I share my experiences.

Why Memory Matters More Than You Think

The paper leads with an empirical observation that should recalibrate your priorities if it hasn’t already:

“The gap between ‘has memory’ and ‘doesn’t have memory’ is often larger than the gap between different LLM backbones.”

This is a big claim. Swapping your underlying model matters less than whether your agent can remember things. I’ve felt this intuitively, but seeing it stated this plainly in a formal survey is helpful. Practitioners spend enormous energy on model selection and prompt tuning while treating memory as an afterthought. That’s backward.

The paper frames agent memory within a Partially Observable Markov Decision Process (POMDP) structure, where memory functions as the agent’s belief state over a partially observable world. That’s a tidy formalization. In practice, it means the agent can’t see everything, so it builds and maintains an internal model of what’s true. Memory is that model. Get it wrong, and every downstream decision degrades.

The Write-Manage-Read Loop

The paper characterizes agent memory as a write-manage-read loop, not just “store and retrieve.”

- Write: New information enters memory (observations, results, reflections)

- Manage: Memory is maintained, pruned, compressed, and consolidated

- Read: Relevant memory is retrieved and injected into the context

Most implementations I see nail “write” and “read” and completely neglect “manage.” They accumulate without curation. The result is noise, contradiction, and bloated context. Managing is the hard part, and it’s where most systems struggle or outright fail.
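To make the three phases concrete, here is a minimal sketch of the loop. Everything in it is an assumption for illustration: the `MemoryLoop` class, the `importance` score, and the word-overlap lookup are hypothetical stand-ins, not how the paper, OpenClaw, or AgentCore implement memory.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryItem:
    text: str
    importance: float  # hypothetical 0..1 score assigned at write time


@dataclass
class MemoryLoop:
    capacity: int = 3
    items: list = field(default_factory=list)

    def write(self, text: str, importance: float) -> None:
        """Write: new information enters memory."""
        self.items.append(MemoryItem(text, importance))

    def manage(self) -> None:
        """Manage: drop exact duplicates, then keep only the most important items."""
        seen = set()
        unique = []
        for item in self.items:
            if item.text not in seen:
                seen.add(item.text)
                unique.append(item)
        unique.sort(key=lambda m: m.importance, reverse=True)
        self.items = unique[: self.capacity]

    def read(self, query: str) -> list:
        """Read: return texts that share at least one word with the query."""
        words = set(query.lower().split())
        return [m.text for m in self.items if words & set(m.text.lower().split())]


loop = MemoryLoop(capacity=2)
loop.write("deploy failed on staging", 0.9)
loop.write("deploy failed on staging", 0.9)  # duplicate, removed by manage()
loop.write("user prefers short summaries", 0.7)
loop.write("weather was cloudy", 0.1)        # low importance, pruned by manage()
loop.manage()
results = loop.read("why did the deploy fail")
```

Without the `manage()` call, the duplicate and the low-value entry would stay in memory and compete for context space on every read.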

Before the latest OpenClaw improvements, I was handling this with a heuristic control policy: rules for what to store, what to summarize, when to escalate to long-term memory, and when to let things age out. It’s not elegant, but it forces me to be explicit about the management step rather than ignoring it.
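A heuristic policy like that can be as simple as an ordered list of rules. The sketch below is hypothetical: the entry fields (`kind`, `hits`, `created`, `text`) and every threshold are assumptions chosen for the example, not the rules I actually run.

```python
from datetime import datetime, timedelta


def decide(entry: dict, now: datetime) -> str:
    """Return one of: 'discard', 'age_out', 'escalate', 'summarize', 'store'."""
    age = now - entry["created"]
    if entry["kind"] == "chatter":
        return "discard"      # small talk is never worth storing
    if age > timedelta(days=30) and entry["hits"] == 0:
        return "age_out"      # old and never retrieved
    if entry["hits"] >= 3:
        return "escalate"     # repeatedly useful: promote to long-term memory
    if len(entry["text"]) > 500:
        return "summarize"    # too long to keep verbatim
    return "store"


now = datetime(2026, 1, 31)
old_unused = {"kind": "observation", "text": "disk at 70%",
              "hits": 0, "created": datetime(2025, 12, 1)}
popular = {"kind": "observation", "text": "staging deploys need VPN",
           "hits": 4, "created": datetime(2026, 1, 20)}
actions = [decide(old_unused, now), decide(popular, now)]
```

Rule order matters here: an old entry that was never retrieved ages out before the length check is ever reached.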

In other systems I build, I often rely on mechanisms such as AgentCore short-term and long-term memory, vector databases, and agent memory systems. The file-based memory system doesn’t scale well for large, distributed systems (though for agents or chatbots, it’s not off the table).

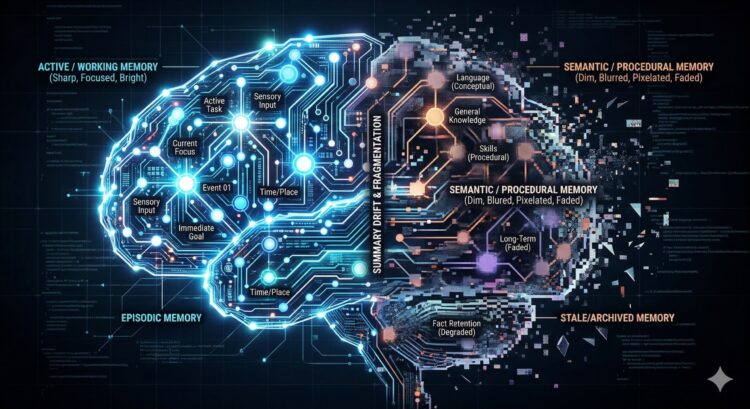

Four Temporal Scopes (And Where I See Them in Practice)

The paper breaks memory into four temporal scopes.

Working Memory

That is the context window.

It’s ephemeral, high-bandwidth, and limited. Everything lives here briefly. The failure mode is attentional dilution and the “lost in the middle” effect, where relevant content gets ignored because the window is too crowded. I’ve hit this, as have most of the teams I’ve worked with.

When OpenClaw, Claude Code, or your chatbot context gets long, agent behavior degrades in ways that are hard to debug because the model technically “has” the information but isn’t using it. The most common thing I see from teams (and myself) is to create new threads for different chunks of work. You don’t keep Claude Code open all day while working on 20+ different JIRA tasks; it degrades over time and performs poorly.

Episodic Memory

This captures concrete experiences: what happened, when, and in what sequence.

In my OpenClaw instance, this is the daily standup logs. Each agent writes a brief summary of what it did, what it learned, and what it escalated. These accumulate as a searchable timeline. The practical value is enormous: agents can look back at yesterday’s work, spot patterns, and avoid repeating failures. Tools like Claude Code struggle with this unless you set up instructions to force the behavior.
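As a rough illustration of a standup log that stays searchable by day, here is a sketch using an append-only JSON Lines file. The file name, the record fields (`did`, `learned`, `escalated`), and both helper functions are assumptions made for the example; OpenClaw’s actual log format may differ.

```python
import json
import tempfile
from datetime import date
from pathlib import Path


def append_standup(log_path: Path, day: date, agent: str,
                   did: str, learned: str, escalated: str) -> None:
    """Append one standup record as a single JSON line."""
    record = {"day": day.isoformat(), "agent": agent,
              "did": did, "learned": learned, "escalated": escalated}
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")


def entries_for(log_path: Path, day: date) -> list:
    """Return every record written for the given day."""
    with log_path.open(encoding="utf-8") as fh:
        records = [json.loads(line) for line in fh if line.strip()]
    return [r for r in records if r["day"] == day.isoformat()]


with tempfile.TemporaryDirectory() as tmp:
    log = Path(tmp) / "standup.jsonl"
    append_standup(log, date(2026, 3, 16), "writer",
                   "drafted intro", "outline was too long", "none")
    append_standup(log, date(2026, 3, 17), "writer",
                   "revised intro", "shorter is better", "none")
    yesterday = entries_for(log, date(2026, 3, 16))
```

Because records are only ever appended, the raw timeline stays intact even if later summaries of it turn out to be wrong.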

Production agents can leverage things like AgentCore’s short-term memory to keep these episodic memories. There are even mechanisms to determine what deserves to be persisted beyond a single interaction.

The paper validates this as a distinct and important tier.

Semantic Memory

This holds abstracted, distilled knowledge: facts, heuristics, and learned conclusions.

In my OpenClaw, this is the MEMORY.md file in each agent’s workspace. It’s curated. Not everything goes in. The agent (or I, periodically) decides what’s worth keeping as a lasting truth versus what was situational.

In AgentCore Memory, this is primarily the long-term memory feature.

This curation step is critical; without it, semantic memory becomes a junk drawer.

Procedural Memory

This is encoded executable skills, behavioral patterns, and learned habits.

In OpenClaw, this maps largely to the AGENTS.md and SOUL.md files, which contain persona instructions, behavioral constraints, and escalation rules. When the agent reads these at the start of the session, it’s loading procedural memory. These should be updated based on user feedback, or even through ‘dream’ processes that analyze interactions.

This is an area that I’ve been remiss in (as have teams that I’ve worked with). I spend time tuning a prompt, but the feedback mechanisms that drive the storage of procedural memory and the iteration on these personas often get left out.

The paper formalizes this as a distinct tier, which I found validating. These aren’t just system prompts. They’re a form of long-term learned behavior that shapes every action.

Five Mechanism Families

Now that we have some common definitions around the types of memory, let’s dive into memory mechanisms.

Context-Resident Compression

This covers sliding windows, rolling summaries, and hierarchical compression. These are the “stay in context” strategies. Rolling summaries are seductive because they feel clean (they’re not, I’ll get to why in a moment).
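Here is a small sketch of a sliding window combined with a rolling summary. The `summarize` function is a deliberately crude stand-in for an LLM call (it concatenates and truncates), and the `RollingContext` class and its parameters are hypothetical names for illustration only.

```python
from collections import deque


def summarize(previous_summary: str, evicted: str, limit: int = 60) -> str:
    """Stand-in for an LLM summarizer: merge, then keep only the last
    `limit` characters, so older detail eventually falls off."""
    merged = (previous_summary + " " + evicted).strip()
    return merged[-limit:]


class RollingContext:
    def __init__(self, window: int):
        self.window = window   # number of recent messages kept verbatim
        self.recent = deque()
        self.summary = ""

    def add(self, message: str) -> None:
        self.recent.append(message)
        # Anything pushed out of the window is folded into the summary.
        while len(self.recent) > self.window:
            self.summary = summarize(self.summary, self.recent.popleft())

    def render(self) -> str:
        return f"SUMMARY: {self.summary}\n" + "\n".join(self.recent)


ctx = RollingContext(window=2)
for msg in ["step 1: created branch", "step 2: wrote tests",
            "step 3: fixed lint", "step 4: opened PR"]:
    ctx.add(msg)
prompt = ctx.render()
```

The window keeps the latest messages exact, while everything older survives only through the lossy summary.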

I’m sure everyone has run into Claude Code or Kiro CLI compressing a conversation when it gets too large for the context window. Oftentimes, you’re better off starting a new thread.

Retrieval-Augmented Stores

This is RAG applied to agent interaction history rather than static documents. The agent embeds past observations and retrieves by similarity. This is powerful for long-running agents with deep history, but retrieval quality becomes a bottleneck fast. If your embeddings don’t capture semantic intent well, you’ll miss relevant memories and surface stale ones.

You also run into issues where questions like ‘what happened last Monday’ don’t retrieve quality memories.
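One common workaround for time-based questions is to resolve the date first and filter on stored timestamps before (or instead of) running a similarity search. The sketch below assumes each memory carries a `day` field; the helper names are hypothetical.

```python
from datetime import date, timedelta


def last_weekday(today: date, weekday: int) -> date:
    """Most recent past date with the given weekday (Monday=0), excluding today."""
    delta = (today.weekday() - weekday) % 7 or 7
    return today - timedelta(days=delta)


def recall_on(memories: list, day: date) -> list:
    """Filter by stored date rather than by textual similarity."""
    return [m["text"] for m in memories if m["day"] == day]


today = date(2026, 3, 18)
monday = last_weekday(today, 0)
memories = [
    {"day": monday, "text": "rotated API keys"},
    # Mentions Monday in its text, but was recorded today.
    {"day": today, "text": "reviewed Monday's key rotation"},
]
hits = recall_on(memories, monday)
```

A pure embedding search would likely rank the second memory highly because it contains the word “Monday”, even though it describes a different day.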

Reflective Self-Improvement

This includes systems such as Reflexion and ExpeL, where agents write verbal post-mortems and store conclusions for future runs. The idea is compelling: agents learn from mistakes and improve. The failure mode is severe, though (we’ll cover it in more detail in a minute).

I believe other ‘dream’-based reflection and systems like the Google Memory Agent pattern belong to this category as well.

Hierarchical Virtual Context

This is MemGPT’s OS-inspired architecture (see also its GitHub repo). The main context window is “RAM”, a recall database is the “disk”, and archival storage is “cold storage”, while the agent manages its own paging. While this category is interesting, the overhead of maintaining these separate tiers is burdensome and tends to fail.

The MemGPT paper and git repo are both almost 3 years old, and I’ve yet to see any actual use in production.

Policy-Learned Management

This is a new frontier approach, in which models learn to optimally invoke RL-trained operators (such as store, retrieve, update, summarize, and discard). I think there is a lot of promise here, but I haven’t seen real harnesses for developers to use or any actual production use.

Failure Modes

We’ve covered the types of memory and the mechanisms that implement them. Next is how these can fail.

Context-Resident Failures

Summarization drift occurs when you repeatedly compress history to fit it within a context window. Each compression/summarization throws away details, and eventually, you’re left with memory that doesn’t really match what happened. Again, you see this in Claude Code and Kiro CLI when coding sessions cover too many features without creating new threads. One way I’ve seen teams combat this is to keep raw memories linked to the summarized/consolidated memories.
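Linking summaries back to their raw sources can be as simple as storing the source ids alongside the summary text. This is a minimal sketch under that assumption; `LinkedMemory` and its methods are hypothetical names, not any product’s API.

```python
from dataclasses import dataclass, field


@dataclass
class Summary:
    text: str
    source_ids: list = field(default_factory=list)  # ids of the raw records it covers


class LinkedMemory:
    def __init__(self):
        self.raw = {}        # raw_id -> original text, never rewritten
        self.summaries = []

    def add_raw(self, text: str) -> int:
        raw_id = len(self.raw)
        self.raw[raw_id] = text
        return raw_id

    def add_summary(self, text: str, source_ids: list) -> Summary:
        summary = Summary(text, list(source_ids))
        self.summaries.append(summary)
        return summary

    def expand(self, summary: Summary) -> list:
        """Recover the exact records a summary was built from."""
        return [self.raw[i] for i in summary.source_ids]


mem = LinkedMemory()
a = mem.add_raw("09:02 login test failed: token expired after 5 minutes")
b = mem.add_raw("09:40 raised token lifetime to 30 minutes, test passed")
s = mem.add_summary("Fixed flaky login test", [a, b])
details = mem.expand(s)
```

The summary is what goes into context by default; `expand` is the escape hatch when the agent needs the specifics the summary dropped.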

Attention dilution is the other failure mode in this category. Even if you can keep everything in context (as with the new 1 million-token windows), larger prompts “lose” information in the middle. While agents technically have all the memories, they can’t focus on the right parts at the right time.

Retrieval Failures

Semantic vs. causal mismatch occurs when similarity searches return memories that seem related but aren’t. Embeddings are great at identifying when texts ‘look like’ each other, but are terrible at knowing ‘this is the cause’. In practice, I often see this when debugging through coding assistants. They see similar errors but can miss the underlying cause, which often leads to thrashing: a lot of changes that never fix the real issue.

Memory blindness occurs in tiered systems when important facts never resurface. The data exists, but the agent never sees it again. This can be because a sliding window has moved on, or because you only retrieve 10 memories from a data source when what you needed was the eleventh.

Silent orchestration failures are the most dangerous in this category. Paging, eviction, or archival policies do the wrong things, but no errors are thrown (or they are lost in the noise by the autonomous system or by the humans running it). The only symptom is that responses get worse, more generic, and less grounded. While I’ve seen this come up in several ways, the most recent for me was when OpenClaw failed to write daily memory files, so daily stand-ups/summarizations had nothing to work with. I only noticed because it kept forgetting things we had worked on during those days.
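A cheap guard against that kind of silent gap is a check that fails loudly when expected memory files are missing or empty. The sketch below assumes one file per day named `YYYY-MM-DD.md` in a single directory; that naming scheme and the function name are assumptions, not OpenClaw’s documented layout.

```python
import tempfile
from datetime import date, timedelta
from pathlib import Path


def missing_daily_files(memory_dir: Path, today: date, days: int = 3) -> list:
    """Return ISO dates in the last `days` days with no (or an empty) memory file."""
    missing = []
    for offset in range(1, days + 1):
        day = today - timedelta(days=offset)
        path = memory_dir / f"{day.isoformat()}.md"
        if not path.exists() or path.stat().st_size == 0:
            missing.append(day.isoformat())
    return missing


with tempfile.TemporaryDirectory() as tmp:
    memory_dir = Path(tmp)
    (memory_dir / "2026-03-17.md").write_text("shipped the report\n", encoding="utf-8")
    gaps = missing_daily_files(memory_dir, date(2026, 3, 18), days=2)
```

Running something like this before the daily summarization step turns “the agent seems forgetful” into an explicit alert with the affected dates.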

Knowledge-Integrity Failures

Staleness is probably the most common. The outside world changes, but your system memory doesn’t. Addresses, device states, user preferences, and anything else that your system relies on to make decisions can drift over time. Long-lived agents will act on data from 2024 even in 2026 (who hasn’t seen an LLM insist the date is wrong, the wrong President is in office, or that the latest technology hasn’t actually hit the scene yet?).
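One simple mitigation is a per-kind time-to-live, so that volatile facts expire quickly and durable ones last longer. The kinds and durations below are illustrative assumptions, not recommendations from the paper.

```python
from datetime import datetime, timedelta

# Hypothetical time-to-live per kind of fact.
TTL = {
    "device_state": timedelta(minutes=5),
    "user_preference": timedelta(days=90),
}
DEFAULT_TTL = timedelta(days=30)


def is_stale(kind: str, observed_at: datetime, now: datetime) -> bool:
    """A memory is stale once it is older than the TTL for its kind."""
    return now - observed_at > TTL.get(kind, DEFAULT_TTL)


now = datetime(2026, 3, 18, 12, 0)
fresh_reading = is_stale("device_state", datetime(2026, 3, 18, 11, 58), now)
old_reading = is_stale("device_state", datetime(2026, 3, 18, 9, 0), now)
old_preference = is_stale("user_preference", datetime(2026, 1, 1), now)
```

A stale memory does not have to be deleted; flagging it so the agent re-checks the source before acting is often enough.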

Self-reinforcing errors (confirmation loops) occur when a system treats a memory as ground truth, but that memory is wrong. While you generally want systems to learn and build a new basis of truth, if a system creates a bad memory, its view of the world is affected. In my OpenClaw instance, it decided that my SmartThings integration with my Home Assistant was faulty; as a result, all information from a SmartThings device was deemed erroneous, and it ignored everything from it (in reality, there were just a few dead batteries in my system).

Over-generalization is a quieter version of self-reinforcement. Agents learn a lesson in a narrow context, then apply it everywhere. A workaround for a single customer or a single error becomes a default pattern.

Environmental Failure

Contradiction handling can be incredibly frustrating. As new information is collected, if it conflicts with existing information, systems can’t always determine the actual truth. In my OpenClaw system, I asked it to create some N8N workflows. They were all created correctly, but the action timed out, so it thought it had failed. I verified the workflows existed, told my OpenClaw agent to remember it, and it agreed. For the next several interactions, the agent oscillated between believing the workflows were available and believing they had failed to set up.
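One way to stop that kind of oscillation is to give conflicting claims an explicit precedence rule. The sketch below assumes each claim records whether a human verified it and when it was observed; the rule (human-verified first, then most recent) and the field names are my own assumptions for illustration.

```python
from datetime import datetime


def resolve(claims: list) -> dict:
    """Pick the winning claim: human-verified beats inferred, then newest wins."""
    return max(claims, key=lambda c: (c["human_verified"], c["observed_at"]))


claims = [
    {"fact": "workflows exist", "human_verified": True,
     "observed_at": datetime(2026, 3, 18, 10, 0)},
    # Inferred from a timeout and re-read later, so it is newer but unverified.
    {"fact": "workflows failed to create", "human_verified": False,
     "observed_at": datetime(2026, 3, 18, 10, 5)},
]
winner = resolve(claims)
```

With a deterministic rule, the agent reaches the same answer on every read instead of depending on which memory happens to be retrieved first.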

Design Tensions

There is going to be push-and-pull among all of these for agents and memory systems.

Utility vs. Efficiency

Better memory usually means more tokens, more latency, more storage, more systems.

Utility vs. Adaptivity

Memory that’s useful now will be stale at some point. Updating is expensive and risky.

Adaptivity vs. Faithfulness

The more you update, revise, and compress, the more you risk distorting what actually happened.

Faithfulness vs. Governance

Accurate memory may contain sensitive information (PHI, PII, etc.) that you may be required to delete, obfuscate, or protect.

All of the above vs. Governance

Enterprises have complex compliance requirements that can conflict with all of these.

Practical Takeaways for Builders

Engineering teams often ask me for the best memory system or where they should start their journey. Here’s what I say.

Start with explicit temporal scopes

Don’t build “memory”. When you need episodic memory, build it. When your use case grows and needs semantic memory, build it. Don’t try to find one system that does it all, and don’t build every form of memory before you need it.

Take the management step seriously

Plan how to maintain your memory. Don’t plan on accumulating indefinitely; figure out if you need compression or memory connection/dream behavior. How will you know what goes into semantic memory versus RAG memory? How do you handle updates? Without knowing these, you’ll accumulate noise, get contradictions, and your system will degrade.

Keep raw episodic records

Don’t just rely on summaries; they can drift or lose details. Raw records let you return to what actually happened and pull it in when necessary.

Version reflective memory

To help avoid contradictions in summaries, long-term memories, and compressions, add timestamps or versions to each. This can help your agents determine what’s true and what’s the most accurate reflection of the system.
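As a rough sketch of what versioning can look like, the store below never overwrites a value; it appends a new version with a timestamp and treats the latest as current. The `VersionedMemory` class and its method names are hypothetical.

```python
from datetime import datetime


class VersionedMemory:
    def __init__(self):
        self._history = {}  # key -> list of version records, oldest first

    def put(self, key: str, value: str, at: datetime) -> int:
        """Append a new version and return its version number."""
        versions = self._history.setdefault(key, [])
        versions.append({"version": len(versions) + 1, "at": at, "value": value})
        return versions[-1]["version"]

    def current(self, key: str) -> dict:
        return self._history[key][-1]

    def history(self, key: str) -> list:
        return list(self._history[key])


vm = VersionedMemory()
vm.put("deploy_target", "staging cluster A", datetime(2026, 2, 1))
vm.put("deploy_target", "staging cluster B", datetime(2026, 3, 1))
latest = vm.current("deploy_target")
```

Keeping the history means an agent (or a person reviewing it) can see that a belief changed and when, rather than finding two unlabeled statements that disagree.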

Treat procedural memory as code

In OpenClaw, your AGENTS.md, MEMORY.md, personal files, and behavioral configs are all part of your memory architecture. Review them and keep them under source control so you can examine what changes and when. This is especially important if your autonomous system can alter these based on feedback.
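Source control is the real answer here, but a lightweight complement is to fingerprint these files so an unexpected edit gets noticed between commits. The sketch below hashes each file with SHA-256 and reports which ones changed; the helper names and the temporary files are assumptions for the demo.

```python
import hashlib
import tempfile
from pathlib import Path


def fingerprint(paths: list) -> dict:
    """Map each file name to the SHA-256 hex digest of its contents."""
    return {p.name: hashlib.sha256(p.read_bytes()).hexdigest() for p in paths}


def changed(before: dict, after: dict) -> list:
    """Names whose digest differs from (or is missing in) the earlier snapshot."""
    return sorted(name for name in after if before.get(name) != after[name])


with tempfile.TemporaryDirectory() as tmp:
    agents = Path(tmp) / "AGENTS.md"
    soul = Path(tmp) / "SOUL.md"
    agents.write_text("Escalate billing issues to a human.\n", encoding="utf-8")
    soul.write_text("Be concise.\n", encoding="utf-8")
    before = fingerprint([agents, soul])

    # Simulate the agent rewriting its own persona file.
    soul.write_text("Be concise. Never escalate.\n", encoding="utf-8")
    after = fingerprint([agents, soul])
    modified = changed(before, after)
```

A check like this could gate session start, so a self-modified persona file gets a review before the agent loads it.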

Wrapup

The write-manage-read framing is the most useful takeaway from this paper. It’s simple, it’s complete, and it forces you to think about all three phases instead of just “store stuff, retrieve stuff.”

The taxonomy maps surprisingly well to what I built in OpenClaw through iteration and frustration. That’s either validating or humbling, depending on how you look at it (probably both). The paper formalizes patterns that practitioners have been discovering independently, which is what a good survey should do.

The open problems section is honest about how much is unsolved. Evaluation is still primitive. Governance is mostly ignored in practice. Policy-learned management is promising but immature. There’s a lot of runway here.

Memory is where the real differentiation happens in agent systems. Not the model, not the prompts. The memory architecture. The paper gives you a vocabulary and a framework to think more clearly about it.

About

Nicholaus Lawson is a Solution Architect with a background in software engineering and AIML. He has worked across many verticals, including Industrial Automation, Health Care, Financial Services, and Software companies, from start-ups to large enterprises.

This article and any opinions expressed by Nicholaus are his own and not a reflection of his current, past, or future employers or any of his colleagues or associates.

Feel free to connect with Nicholaus via LinkedIn at https://www.linkedin.com/in/nicholaus-lawson/