of data governance

Data governance is the structured, ongoing process of managing a company’s data to ensure its availability, usability, integrity, and security. It involves establishing a framework of roles, policies, standards, and metrics that control how data is created, used, stored, and protected throughout its lifecycle.

Data governance emerged as a formal practice in the early 2000s, when the focus was basic security and access control, usually housed within the IT department. Sparked by financial crises and data breaches, early data governance frameworks were mostly about “checking boxes” for GDPR and data stewardship to mitigate risks. Fast forward to 2025: with the rise of agentic AI, data governance is now embedded into workflows, focusing on AI readiness, data quality and real-time lineage. By 2026, the “grace periods” for many European regulations will be ending, making this year “a year of reckoning” for data strategy.

EU Laws you must know

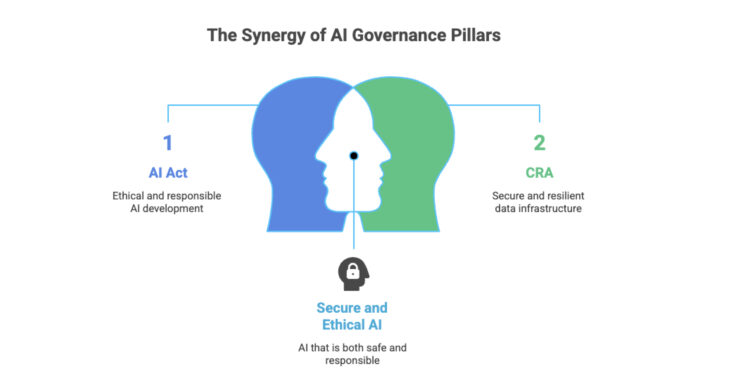

In 2026, European companies can no longer afford to take governance lightly. With the full implementation of the EU AI Act, the Cyber Resilience Act (CRA) and the Data Act, the cost of “messy data” has shifted from a performance tax to a legal liability.

The EU AI Act (The Quality & Ethics Mandate)

While the EU AI Act entered into force in 2024, August 2026 is the critical deadline for most “High-Risk” AI systems and for General Purpose AI (GPAI) transparency rules. For “High-Risk” AI systems, Article 10 of the Act requires:

- Data Provenance: You must prove where your training data came from.

- Bias Mitigation: Active monitoring for “representative” and “error-free” datasets.

- Traceability: A technical “paper trail” of how data influenced a model’s decision.

By 2026, a documentation trail is mandatory, and AI-generated content must be marked and labelled. If an auditor knocks, you should be able to trace a decision back to the exact training data and the bias-mitigation steps taken at the time.
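To make the provenance and traceability requirements more concrete, here is a minimal Python sketch of an in-memory provenance log that links each model version to a SHA-256 fingerprint of the exact dataset bytes it was trained on, together with the recorded bias-mitigation steps. The names `DatasetRecord`, `ProvenanceLog` and `mitigation_steps` are hypothetical; the AI Act does not prescribe a record format, and a real system would persist these entries in a tamper-evident store rather than in memory.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Dict, List


@dataclass
class DatasetRecord:
    """Hypothetical provenance entry for one version of a training dataset."""
    name: str
    source: str                      # where the data came from (system, vendor, ...)
    content_sha256: str              # fingerprint of the exact bytes used for training
    mitigation_steps: List[str] = field(default_factory=list)
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def fingerprint(data: bytes) -> str:
    """Hash the raw dataset bytes so an audit can prove which version was used."""
    return hashlib.sha256(data).hexdigest()


class ProvenanceLog:
    """In-memory log linking model versions to the dataset versions behind them."""

    def __init__(self) -> None:
        self._datasets: Dict[str, DatasetRecord] = {}
        self._models: Dict[str, str] = {}  # model version -> dataset fingerprint

    def register_dataset(self, record: DatasetRecord) -> None:
        self._datasets[record.content_sha256] = record

    def register_model(self, model_version: str, dataset_sha256: str) -> None:
        # Refuse to record a model trained on data that has no provenance entry.
        if dataset_sha256 not in self._datasets:
            raise KeyError(f"no provenance record for dataset {dataset_sha256}")
        self._models[model_version] = dataset_sha256

    def trace(self, model_version: str) -> dict:
        """Return the provenance of the data behind a given model version."""
        record = self._datasets[self._models[model_version]]
        return {"model_version": model_version, **asdict(record)}


if __name__ == "__main__":
    raw = b"age,income,label\n34,52000,1\n51,61000,0\n"
    log = ProvenanceLog()
    record = DatasetRecord(
        name="loan-applications-v3",
        source="core banking export",
        content_sha256=fingerprint(raw),
        mitigation_steps=["reweighted under-represented age groups"],
    )
    log.register_dataset(record)
    log.register_model("credit-model-1.4", record.content_sha256)
    print(json.dumps(log.trace("credit-model-1.4"), indent=2))
```

Hashing the bytes rather than storing a file path means the trail still proves which data was used even if the file is later moved or overwritten.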

The Cyber Resilience Act (CRA)

While the AI Act governs the intelligence, the CRA governs the vessel. By 2027, any digital product in the EU must bear the CE mark, proving it meets strict cybersecurity requirements. Manufacturers of digital products must actively report exploited vulnerabilities to ENISA within 24 hours. Companies should have a Software Bill of Materials (SBOM): a live, governed inventory of every open-source software component in their stack. For data governance, this means:

- Secure Data Lifecycles: Data cannot be governed if the software handling it is vulnerable.

- Vulnerability Disclosure: Companies must now govern their data pipelines with the same security rigour as their financial transactions.
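For the SBOM point, a minimal sketch, assuming the inventory is exported in the CycloneDX JSON format (one common SBOM standard; SPDX is another), shows how a pipeline could read the component list and flag entries that match an advisory list. The advisory set is a hard-coded, illustrative stand-in for a real vulnerability feed, and the function names are hypothetical.

```python
import json
from typing import Dict, List, Set, Tuple

# A trimmed CycloneDX-style SBOM; real ones are generated by build tooling.
SBOM_JSON = """
{
  "bomFormat": "CycloneDX",
  "specVersion": "1.5",
  "components": [
    {"type": "library", "name": "log4j-core", "version": "2.14.1",
     "purl": "pkg:maven/org.apache.logging.log4j/log4j-core@2.14.1"},
    {"type": "library", "name": "requests", "version": "2.31.0",
     "purl": "pkg:pypi/requests@2.31.0"}
  ]
}
"""

# Hypothetical advisory list of (name, version) pairs known to be exploited.
# In practice this would be fed from a vulnerability database, not hard-coded.
KNOWN_EXPLOITED: Set[Tuple[str, str]] = {("log4j-core", "2.14.1")}


def load_components(sbom_text: str) -> List[Dict[str, str]]:
    """Parse the SBOM and return its component entries."""
    sbom = json.loads(sbom_text)
    if sbom.get("bomFormat") != "CycloneDX":
        raise ValueError("expected a CycloneDX SBOM")
    return sbom.get("components", [])


def flag_exploited(components: List[Dict[str, str]],
                   advisories: Set[Tuple[str, str]]) -> List[str]:
    """Return 'name@version' for every component that matches an advisory."""
    return [
        f"{c['name']}@{c.get('version', '?')}"
        for c in components
        if (c["name"], c.get("version")) in advisories
    ]


if __name__ == "__main__":
    components = load_components(SBOM_JSON)
    print(f"{len(components)} components in inventory")
    for hit in flag_exploited(components, KNOWN_EXPLOITED):
        print("needs triage:", hit)
```

Running such a check on every build, rather than once a year, is what turns the SBOM into the “live” inventory described above.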

The Data Act (The End of Data Silos)

Often overshadowed by the AI Act, the Data Act (already applicable since September 2025) is perhaps more disruptive.

- The Right to Portability: It grants users (both B2B and B2C) the right to access and share data generated by their use of connected products.

- Pivot Strategy: Companies can no longer treat “usage data” as their exclusive asset. Your 2026 data strategy must include Data-Sharing-by-Design: you need to build APIs that let your customers pull their data out and hand it to a competitor, on fair and non-discriminatory terms.
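A minimal sketch of Data-Sharing-by-Design, assuming usage events are already stored per user: the export returns the same machine-readable payload whether the requester is the user or a third party the user has authorised. `UsageEvent`, `PortabilityService` and the `usage-export/1` schema tag are hypothetical; the Data Act does not mandate a specific API shape, and a real service would add authentication, pagination and a transport such as HTTPS.

```python
import json
from dataclasses import asdict, dataclass
from typing import Iterable, Optional, Set, Tuple


@dataclass(frozen=True)
class UsageEvent:
    """One reading generated by a customer's use of a connected product."""
    device_id: str
    user_id: str
    metric: str
    value: float
    timestamp: str


class PortabilityService:
    """Hypothetical export service: users pull their data or send it to a third party."""

    def __init__(self, events: Iterable[UsageEvent]) -> None:
        self._events = list(events)
        # (user_id, recipient) pairs the user has explicitly authorised.
        self._grants: Set[Tuple[str, str]] = set()

    def authorise(self, user_id: str, recipient: str) -> None:
        self._grants.add((user_id, recipient))

    def export(self, user_id: str, recipient: Optional[str] = None) -> str:
        """Return the user's data as JSON, for the user or an authorised recipient."""
        if recipient is not None and (user_id, recipient) not in self._grants:
            raise PermissionError(f"{user_id} has not authorised sharing with {recipient}")
        records = [asdict(e) for e in self._events if e.user_id == user_id]
        # Same schema and content whoever the recipient is: no degraded export for rivals.
        return json.dumps(
            {"schema": "usage-export/1", "user_id": user_id, "records": records},
            sort_keys=True,
        )


if __name__ == "__main__":
    service = PortabilityService([
        UsageEvent("thermo-1", "u42", "temperature_c", 21.5, "2026-01-10T08:00:00Z"),
        UsageEvent("thermo-1", "u42", "temperature_c", 22.0, "2026-01-10T09:00:00Z"),
        UsageEvent("thermo-7", "u99", "temperature_c", 19.0, "2026-01-10T08:00:00Z"),
    ])
    service.authorise("u42", "competitor-energy-app")
    print(service.export("u42", recipient="competitor-energy-app"))
```

Keeping one export path for every recipient is a simple way to make the “non-discriminatory” part structural rather than a policy promise.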

The 2026 Pivot: From “Check-box” to “By Design”

The traditional “check-box” approach was fine when governance was an annual audit. Companies must now move from reactive data cleanup to proactive technical architecture: in 2026, governance must be embedded “By Design”. Below are three technological shifts driving this change:

- From Passive Catalogs to Active Metadata: We already know that high-risk AI systems must provide “logging of activity to ensure traceability”. In practice, this requires an active metadata platform. These systems use AI to monitor the data stack in real time. If a training dataset is updated, the metadata system instantly alerts downstream AI models and logs the change for future audits, creating a “paper trail”.

- Universal Semantic Layer (or “Single Version of Truth”): Companies are adopting a universal semantic layer, a middleware layer that sits between your data (Snowflake, Databricks, etc.) and your AI agents. Your AI chatbot cannot give one answer and your financial report another; every application should use the same business logic. Vendors like Snowflake (through Horizon Catalog) and Databricks (through Unity Catalog) are offering built-in governance to their customers rather than a bolt-on layer.

- Zero ETL and “Secure Data Flow”: The CRA demands that digital products be secure throughout their lifecycle, which means no more brittle, hand-coded ETL pipelines. Zero-ETL architectures aim to reduce the “data footprint” by minimising the number of times sensitive data is copied, since manual ingestion scripts are often the weakest links where data gets leaked or corrupted. Open table formats (like Iceberg) allow different tools to work on the same data without duplication.
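The active-metadata idea can be sketched as a lineage graph that reacts to change events: when a dataset is updated, every transitively dependent asset (feature tables, models, dashboards) is notified and the change is appended to an audit log. This is a minimal in-memory Python sketch; the class, the asset names and the `notify` callback are hypothetical, and commercial catalogs expose lineage through their own APIs, which are not modelled here.

```python
from collections import defaultdict, deque
from datetime import datetime, timezone
from typing import Callable, Dict, List, Set


class ActiveMetadata:
    """Hypothetical lineage store that reacts to dataset changes."""

    def __init__(self) -> None:
        self._downstream: Dict[str, Set[str]] = defaultdict(set)
        self.audit_log: List[dict] = []

    def add_lineage(self, upstream: str, downstream: str) -> None:
        """Record that `downstream` is built from `upstream`."""
        self._downstream[upstream].add(downstream)

    def impacted_by(self, asset: str) -> Set[str]:
        """Breadth-first walk over lineage edges to find every transitive consumer."""
        seen: Set[str] = set()
        queue = deque([asset])
        while queue:
            node = queue.popleft()
            for child in self._downstream.get(node, ()):
                if child not in seen:
                    seen.add(child)
                    queue.append(child)
        return seen

    def dataset_updated(self, asset: str, new_version: str,
                        notify: Callable[[str, str], None]) -> List[str]:
        """Alert every downstream asset and append an audit entry for the change."""
        affected = sorted(self.impacted_by(asset))
        for target in affected:
            notify(target, f"{asset} updated to {new_version}")
        self.audit_log.append({
            "asset": asset,
            "version": new_version,
            "affected": affected,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        return affected


if __name__ == "__main__":
    meta = ActiveMetadata()
    meta.add_lineage("raw.transactions", "features.customer_risk")
    meta.add_lineage("features.customer_risk", "model.credit_scoring")
    meta.add_lineage("features.customer_risk", "dashboard.risk_overview")
    meta.dataset_updated("raw.transactions", "2026-03-01",
                         lambda target, msg: print(f"[alert -> {target}] {msg}"))
    print(meta.audit_log[-1]["affected"])
```

The `seen` set keeps the walk finite even if the lineage graph accidentally contains a cycle.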

How AI Agents Are Taking On the Governance Burden

One of the most exciting shifts in 2026 is that we are finally using AI to solve the problems AI created. We are moving from Static BI (where you look at a chart) to Agentic BI (where an agent monitors the data and acts on it). In the old world, a Data Steward manually checked for biases or quality errors. In 2026, autonomous agents (with human oversight) operate as silent sentinels within your data stack. Below are some use cases that can already be implemented:

- Autonomous Metadata Generation: Agents scan newly ingested data, automatically tagging it for sensitivity (GDPR), provenance (AI Act), and quality. They “read” the data so humans don’t have to.

- Real-Time Bias Filtering: As data flows into a high-risk AI model, an agentic layer performs a “pre-flight check”, flagging representation gaps or historical biases before they can influence the model’s training.

- Automated Audit Trails: When a regulator asks for proof of “Human Oversight”, an agent can instantly compile a record of every decision made, every log captured, and every manual override performed over the last 12 months.
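One narrow piece of the “pre-flight check” can be sketched deterministically: compare each group’s share of an incoming batch with a reference distribution and hold the batch if any group drifts beyond a tolerance. This covers representation gaps only (historical bias baked into labels needs other tests), and it assumes the reference shares and the 5-point tolerance are supplied by the governance team; the function names are hypothetical. An agent could call a check like this as a tool rather than judging representativeness itself.

```python
from collections import Counter
from typing import Dict, Sequence, Tuple


def representation_gaps(groups: Sequence[str],
                        reference_shares: Dict[str, float],
                        tolerance: float = 0.05) -> Dict[str, float]:
    """Return observed-minus-expected share for each group outside the tolerance."""
    if not groups:
        raise ValueError("empty batch")
    counts = Counter(groups)
    total = len(groups)
    gaps: Dict[str, float] = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total  # missing groups count as 0%
        if abs(observed - expected) > tolerance:
            gaps[group] = round(observed - expected, 4)
    return gaps


def preflight_check(groups: Sequence[str],
                    reference_shares: Dict[str, float],
                    tolerance: float = 0.05) -> Tuple[bool, Dict[str, float]]:
    """Pass only if no group is over- or under-represented beyond the tolerance."""
    gaps = representation_gaps(groups, reference_shares, tolerance)
    return (not gaps, gaps)


if __name__ == "__main__":
    batch = ["18-30"] * 55 + ["31-50"] * 38 + ["51+"] * 7
    reference = {"18-30": 0.30, "31-50": 0.40, "51+": 0.30}
    ok, gaps = preflight_check(batch, reference)
    print("pass" if ok else f"hold for human review: {gaps}")
```

A failed check routes the batch to a person instead of silently dropping or reweighting data, which keeps the human oversight described above in the loop.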

You can automate the data, but you cannot automate accountability. In 2026, the human role shifts from doing the work to auditing the agents that do the work.

Trust, Regulation, and the Human Element

Organizations no longer view the regulations as burdens. Instead, they are using compliance to demonstrate transparency and build trust with their customers, boards and investors. While AI excels at speed, pattern recognition, and processing vast amounts of data, human oversight is essential to provide context, ethical reasoning, empathy, and accountability. For high-risk use cases (such as recruitment, credit scoring, and diagnostic tools), the AI Act requires effective human oversight, which rules out fully autonomous “black box” decision-making. The “Human-in-the-Loop” is a required architectural component: at any point, a human should be able to stop or override an AI decision. For this to be effective, employees must be “AI literate”, i.e. they must understand how to spot a “hallucination”, how to protect sensitive data from leaking into public LLMs, and how to use AI tools responsibly.
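The human-in-the-loop requirement can be illustrated with a small gate: a high-risk model’s output is held until a named reviewer approves or overrides it, a kill switch stops new outputs entirely, and every action is logged (the kind of log an agent could later compile for an auditor). This is a minimal Python sketch assuming decisions can wait for review; `HumanOversightGate` and its methods are hypothetical, and the AI Act sets out oversight obligations rather than any specific implementation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Optional, Tuple


class Review(Enum):
    APPROVE = "approve"
    OVERRIDE = "override"


@dataclass
class Decision:
    case_id: str
    model_output: str
    final_output: Optional[str] = None   # set only after a human has reviewed it
    decided_by: Optional[str] = None


class HumanOversightGate:
    """Hypothetical gate: high-risk model outputs take effect only after human review."""

    def __init__(self) -> None:
        self.halted = False
        self.pending: Dict[str, Decision] = {}
        self.log: List[Tuple[str, str, str]] = []  # (case_id, actor, action)

    def submit(self, case_id: str, model_output: str) -> Decision:
        if self.halted:
            raise RuntimeError("system halted by a human operator")
        decision = Decision(case_id, model_output)
        self.pending[case_id] = decision
        return decision

    def review(self, case_id: str, reviewer: str, action: Review,
               replacement: Optional[str] = None) -> Decision:
        # Validate before removing the case, so a bad request leaves it queued.
        if action is Review.OVERRIDE and replacement is None:
            raise ValueError("an override needs a replacement outcome")
        decision = self.pending.pop(case_id)
        decision.final_output = (
            replacement if action is Review.OVERRIDE else decision.model_output
        )
        decision.decided_by = reviewer
        self.log.append((case_id, reviewer, action.value))
        return decision

    def kill_switch(self, operator: str) -> None:
        """Stop accepting new model outputs; pending cases stay queued for humans."""
        self.halted = True
        self.log.append(("*", operator, "halt"))


if __name__ == "__main__":
    gate = HumanOversightGate()
    gate.submit("application-17", "reject")
    print(gate.review("application-17", "credit.officer", Review.OVERRIDE, "approve"))
    gate.kill_switch("risk.lead")
    print(gate.log)
```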

There is also a new role emerging in 2026: the AI Compliance Officer (AICO). Their job is to ensure that AI systems adhere to legal, ethical, and regulatory standards, mitigating risks such as bias and privacy violations. These roles are no longer the “police” at the end of the process; they sit in the Product Design phase, ensuring that “Ethics-by-Design” is baked in before the first line of code is even written.

Conclusion

By the time the EU AI Act reaches its full enforcement milestones in August 2026, the divide between the “data-mature” and the “data-exposed” will be insurmountable. Don’t wait for auditors to knock on your door. To understand where your organization stands today, ask your leadership team these four “Hard Truth” questions:

- Traceability: If a regulator asked for the exact training data used in your most critical AI model three months ago, could you produce an automated audit trail in under an hour?

- Resilience: Do you have a live Software Bill of Materials (SBOM) that identifies every open-source component touching your data pipelines right now?

- Sovereignty: Does your data live in a stack where you hold the encryption keys, or is your compliance at the mercy of a non-EU hyperscaler’s terms of service?

- Literacy: Do your frontline staff know how to identify an AI “hallucination”, or are they treating agentic outputs as absolute truth?

The time to pivot is now. Start by unifying your metadata and establishing a Universal Semantic Layer. By simplifying your architecture today, you build the “Sovereign Fortress” that will let you innovate with confidence tomorrow.
