Organizations in Thailand, Malaysia, Singapore, Indonesia, and Taiwan can now access Anthropic Claude Opus 4.6, Sonnet 4.6, and Claude Haiku 4.5 through Global cross-Region inference (CRIS) on Amazon Bedrock, which delivers foundation models through a globally distributed inference architecture designed for scale. Global CRIS offers three key advantages: higher quotas, cost efficiency, and intelligent request routing to inference capacity across AWS commercial Regions. Together, these enable AI use cases such as chatbots, autonomous coding agents, and financial analysis systems.

In this post, we're excited to announce the availability of Global CRIS for customers in Thailand, Malaysia, Singapore, Indonesia, and Taiwan. We walk through the technical implementation steps, cover quota management, and share best practices for production deployments to help you maximize the value of your AI inference workloads.

Global cross-Region inference

CRIS is a powerful Amazon Bedrock capability that organizations can use to seamlessly distribute inference processing across multiple AWS Regions. This capability helps you achieve higher throughput while building at scale, helping to keep your generative AI applications responsive and reliable even under heavy load.

You access CRIS through inference profiles, which operate on two key concepts:

- Source Region – The Region from which you make the API request

- Destination Region – A Region to which Amazon Bedrock can route the request for inference

CRIS operates over the secure AWS network with end-to-end encryption for data both in transit and at rest. When you submit an inference request from a source Region, CRIS intelligently routes the request to one of the destination Regions configured for the inference profile. The request travels over the AWS global network managed by Amazon Bedrock, and responses are returned to your application in the source Region.

The key distinction is that while inference processing (the transient computation) might occur in another Region, data at rest, including logs, knowledge bases, and stored configurations, remains exclusively within your source Region. Amazon Bedrock provides two types of cross-Region inference profiles: Geographic CRIS (which routes within a specific geography such as US, EU, APAC, Australia, or Japan) and Global CRIS (which routes to supported commercial Regions worldwide). Customers in Thailand, Malaysia, Singapore, Taiwan, and Indonesia can now access Claude Opus 4.6, Sonnet 4.6, and Haiku 4.5 through Global CRIS, which routes requests across Regions for higher throughput and built-in resilience during traffic spikes.

Why Global CRIS for Thailand, Malaysia, Singapore, Taiwan, and Indonesia

As organizations shift from conversational AI assistants to autonomous agents that plan, execute, and coordinate complex workflows, production AI deployments require more resilient and scalable infrastructure. Global CRIS delivers Claude Opus 4.6, Sonnet 4.6, and Haiku 4.5 through a high-availability architecture designed to meet the demands of this shift to production-scale autonomous systems. As autonomous agents increasingly handle merchant operations, coordinate logistics networks, and automate financial workflows for customers in Thailand, Malaysia, Singapore, Taiwan, and Indonesia, infrastructure reliability directly affects the continuity of these autonomous decision-making systems. Global CRIS routes inference requests across additional inference capacity in AWS Regions worldwide, reducing the likelihood that your applications experience throttling during traffic spikes. This routing capability delivers built-in resilience, allowing your agentic applications to maintain operational continuity even as demand patterns shift.

Source Region configuration in Thailand, Malaysia, Singapore, Taiwan, and Indonesia

At launch, customers in Thailand, Malaysia, Singapore, Taiwan, and Indonesia can call Global CRIS profiles from the following source Regions:

| Source Region | AWS commercial Region code | Availability | Global CRIS routing |
| --- | --- | --- | --- |
| Asia Pacific (Singapore) | ap-southeast-1 | Available now | Routes to more than 20 supported AWS commercial Regions globally |
| Asia Pacific (Jakarta) | ap-southeast-3 | Available now | Routes to more than 20 supported AWS commercial Regions globally |
| Asia Pacific (Taipei) | ap-east-2 | Available now | Routes to more than 20 supported AWS commercial Regions globally |
| Asia Pacific (Thailand) | ap-southeast-7 | Available now | Routes to more than 20 supported AWS commercial Regions globally |
| Asia Pacific (Malaysia) | ap-southeast-5 | Available now | Routes to more than 20 supported AWS commercial Regions globally |

Once invoked, Global CRIS manages the routing of requests to any supported commercial AWS Region behind the scenes.

Prerequisites

Before using Global CRIS, you need to configure IAM permissions that enable cross-Region routing for your inference requests.

Configure IAM permissions

Before you can invoke Claude models through Global CRIS, you must configure IAM permissions that account for the cross-Region routing architecture. This section walks through the policy structure and explains why three separate statements are required.

Complete the following steps to configure IAM permissions for Global CRIS. The IAM policy grants permission to invoke Claude models through Global CRIS. The policy requires three statements because CRIS routes requests across Regions: you call the inference profile in your source Region (for example, Singapore or Jakarta), which then invokes the foundation model in whichever destination Region CRIS selects. The third statement uses "aws:RequestedRegion": "unspecified" to grant the necessary permissions for Global CRIS to route your requests across Regions.
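The three-statement structure described above can be sketched as a small Python helper that builds the policy document. This is an illustration under stated assumptions, not an official policy template: the helper name, the Sid values, the placeholder account ID, and the use of the bedrock:InferenceProfileArn condition key are assumptions, so validate the generated JSON against the Amazon Bedrock documentation before attaching it to a role.

```python
import json


def build_global_cris_policy(account_id, source_region, model_id):
    """Build an IAM policy dict with the three statements Global CRIS needs.

    Hypothetical helper for illustration; Sid names are arbitrary.
    """
    profile_arn = (
        f"arn:aws:bedrock:{source_region}:{account_id}:"
        f"inference-profile/global.{model_id}"
    )

    def allow(sid, resource, condition):
        return {
            "Sid": sid,
            "Effect": "Allow",
            "Action": "bedrock:InvokeModel",
            "Resource": resource,
            "Condition": {"StringEquals": condition},
        }

    return {
        "Version": "2012-10-17",
        "Statement": [
            # 1. Call the global inference profile in the source Region.
            allow(
                "InvokeGlobalInferenceProfile",
                profile_arn,
                {"aws:RequestedRegion": source_region},
            ),
            # 2. Invoke the foundation model in the source Region,
            #    but only through that inference profile.
            allow(
                "InvokeModelInSourceRegion",
                f"arn:aws:bedrock:{source_region}::foundation-model/{model_id}",
                {
                    "aws:RequestedRegion": source_region,
                    "bedrock:InferenceProfileArn": profile_arn,
                },
            ),
            # 3. Let Global CRIS route to any destination Region. The
            #    Region-less ARN and "unspecified" cover global routing.
            allow(
                "InvokeModelViaGlobalRouting",
                f"arn:aws:bedrock:::foundation-model/{model_id}",
                {
                    "aws:RequestedRegion": "unspecified",
                    "bedrock:InferenceProfileArn": profile_arn,
                },
            ),
        ],
    }


# Placeholder account ID and the Opus 4.6 base model ID from this post.
policy = build_global_cris_policy(
    "111122223333", "ap-southeast-1", "anthropic.claude-opus-4-6-v1"
)
print(json.dumps(policy, indent=2))
```

Generating the policy from a function keeps the source Region and model ID in one place, which makes it harder for the three statements to drift apart when you switch Regions or models.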

To call from Jakarta, replace the Singapore Region code (ap-southeast-1) in the policy with the Jakarta Region code (ap-southeast-3).

It's important to note that if your organization's service control policies (SCPs) deny access to unspecified Regions, Global CRIS will not function. We recommend validating your SCP configuration before deploying production workloads that depend on global routing.

If your organization restricts AWS API calls to specific Regions, make sure your SCP includes "unspecified" in the permitted Regions list. The following example shows how to configure an SCP that permits Global CRIS routing. Add your source Region for Global CRIS (for example, Singapore ap-southeast-1 or Jakarta ap-southeast-3) along with any other Regions your organization uses:
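As a rough sketch, a Region-restricting SCP that still permits Global CRIS could be generated as follows. The helper name and Sid are hypothetical, and scoping the deny to bedrock:* is an assumption made to keep the example small. Real Region-restriction SCPs usually cover more services and exempt global ones.

```python
import json


def build_region_scp(allowed_regions):
    """Build an SCP dict that denies Bedrock calls outside approved Regions.

    "unspecified" is always added so Global CRIS routing is not blocked.
    Hypothetical helper for illustration only.
    """
    regions = sorted(set(allowed_regions) | {"unspecified"})
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyBedrockOutsideApprovedRegions",
                "Effect": "Deny",
                # Assumption: limited to Bedrock actions for this sketch.
                "Action": "bedrock:*",
                "Resource": "*",
                "Condition": {
                    "StringNotEquals": {"aws:RequestedRegion": regions}
                },
            }
        ],
    }


scp = build_region_scp(["ap-southeast-1", "ap-southeast-3"])
print(json.dumps(scp, indent=2))
```

Because the helper always merges "unspecified" into the list, a caller cannot accidentally produce an SCP that blocks global routing.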

With IAM permissions configured, you can start invoking Claude models through Global CRIS using inference profiles and the Converse API.

Use cross-Region inference profiles

Global inference profiles are identified by the global. prefix in their model identifier, a naming convention that you can use to distinguish global routing profiles from Regional or single-Region model IDs. Use these inference profile IDs when making API calls instead of the standard model IDs:

| Model | Base model ID | Global inference profile ID |
| --- | --- | --- |
| Claude Sonnet 4.6 | anthropic.claude-sonnet-4-6 | global.anthropic.claude-sonnet-4-6 |
| Claude Opus 4.6 | anthropic.claude-opus-4-6-v1 | global.anthropic.claude-opus-4-6-v1 |
| Claude Sonnet 4.5 | anthropic.claude-sonnet-4-5-20250929-v1:0 | global.anthropic.claude-sonnet-4-5-20250929-v1:0 |
| Claude Haiku 4.5 | anthropic.claude-haiku-4-5-20251001-v1:0 | global.anthropic.claude-haiku-4-5-20251001-v1:0 |

Both the InvokeModel and Converse APIs support cross-Region inference profiles. We recommend using the Converse API because it provides a simplified interface and a consistent request/response format across different foundation models, so you can switch between models without rewriting integration code.

Make your first API call

Getting started with Global CRIS requires only a few changes to your existing application code. The following code snippet demonstrates how to invoke Claude Opus 4.6 using Global CRIS in Python with the boto3 SDK:
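A minimal sketch of such a call might look like the following. It assumes boto3 is installed, credentials are configured, and the source Region is Singapore. The helper names (build_converse_request, ask_claude, make_client) are illustrative and not part of the SDK. The only Global CRIS-specific part is passing the global inference profile ID as modelId.

```python
GLOBAL_OPUS_PROFILE = "global.anthropic.claude-opus-4-6-v1"


def build_converse_request(prompt, model_id=GLOBAL_OPUS_PROFILE, max_tokens=512):
    """Build keyword arguments for the bedrock-runtime Converse call."""
    return {
        # The global inference profile ID replaces the base model ID.
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def ask_claude(client, prompt, model_id=GLOBAL_OPUS_PROFILE, max_tokens=512):
    """Send one prompt and return the concatenated text of the reply."""
    response = client.converse(
        **build_converse_request(prompt, model_id, max_tokens)
    )
    blocks = response["output"]["message"]["content"]
    # Keep only text blocks; other block types are skipped.
    return "".join(block["text"] for block in blocks if "text" in block)


def make_client(region="ap-southeast-1"):
    """Create a Bedrock runtime client in the source Region."""
    import boto3  # imported here so the helpers above work without AWS access

    return boto3.client("bedrock-runtime", region_name=region)


# Example usage (requires AWS credentials and the IAM policy from this post):
#   client = make_client("ap-southeast-1")
#   print(ask_claude(client, "Summarize cross-Region inference in one sentence."))
```

The client is still created in your source Region. Global CRIS decides where the request is processed, so no destination Region appears anywhere in the code.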

If this is your first time working with a cross-Region capability, you might expect that routing requests to multiple Regions would complicate your monitoring setup. With Global CRIS, that's not the case. Your Amazon CloudWatch metrics, CloudWatch logs, and AWS CloudTrail audit logs stay in your source Region, even when inference requests are processed elsewhere. Your existing dashboards, alarms, and audit trail continue to work exactly as they do today.

For more information on the Converse API and available parameters, see the Amazon Bedrock API Reference. Building on this foundation, let's explore quota management strategies to make sure your deployment can scale with demand.

Quota management

As your application scales from prototype to production, understanding and managing service quotas becomes critical for maintaining consistent performance. This section covers how quotas work, how to monitor your usage, and how to request increases when needed.

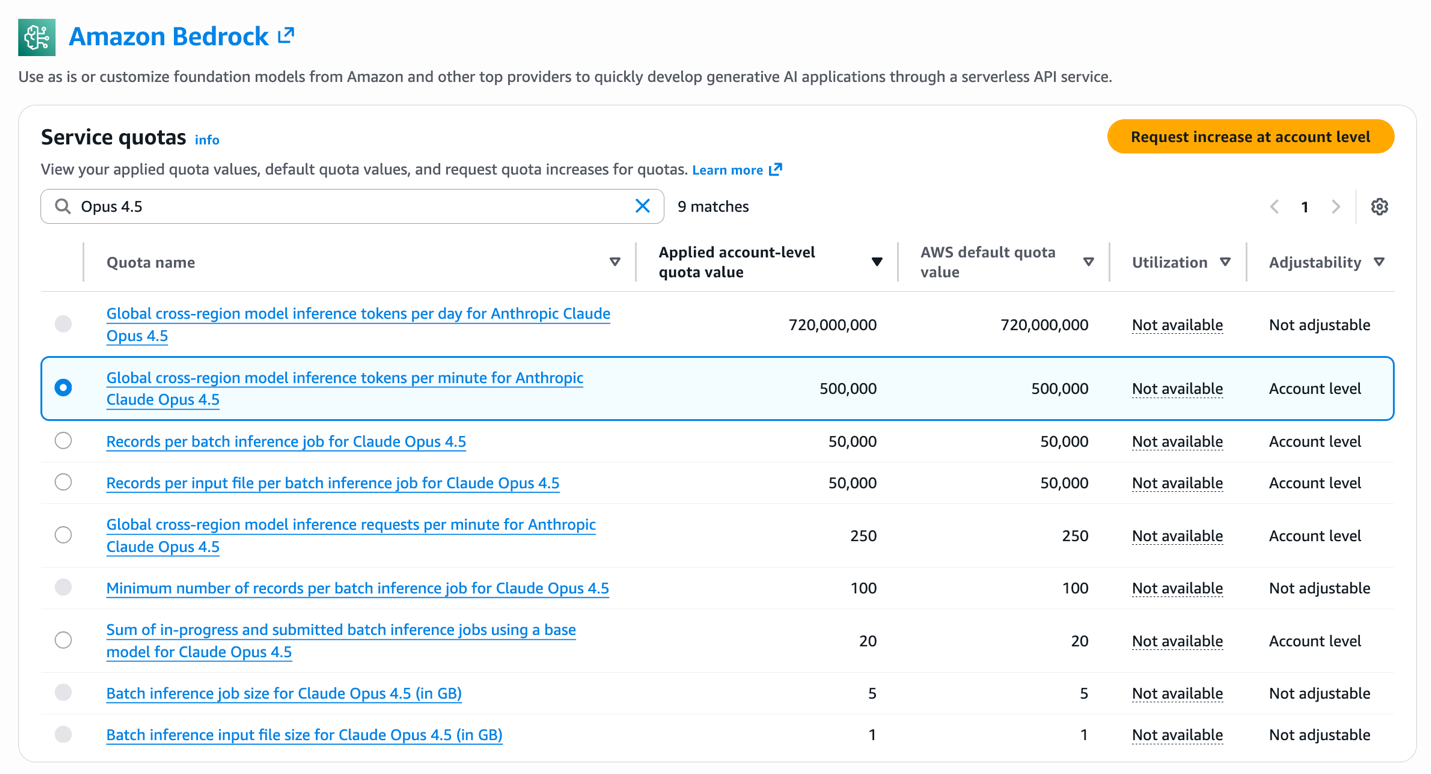

The preceding figure shows the Amazon Bedrock Service Quotas page in the AWS console, where you can view your applied account-level quota values for Global CRIS inference profiles.

Understanding quotas and planning for scale

Understanding quotas and planning for scale is the first step in making sure your Global CRIS deployment can handle production traffic without throttling. Amazon Bedrock enforces service quotas to facilitate fair resource allocation and system stability. This consideration becomes critical as your application scales from prototype to production. For Global CRIS, quotas are measured in two dimensions, each serving a distinct purpose in capacity management:

- Tokens per minute (TPM) – The maximum number of tokens (input + output) that can be processed per minute

- Requests per minute (RPM) – The maximum number of inference requests that can be made per minute

Default quotas vary by model and are allocated per source Region. You can view your current quotas in the AWS Service Quotas console by navigating to the Amazon Bedrock service quotas in your source Region (for example, Singapore or Jakarta).

Note that Amazon Bedrock uses a token burndown rate that weighs output tokens more heavily than input tokens when calculating quota consumption. The burndown rate is 5:1, meaning that each output token consumes five times as much quota as an input token, because generating tokens requires more computation than processing input.

Quota consumption = Input tokens + (Output tokens × 5)

For example, if your request uses 10,000 input tokens and generates 5,000 output tokens:

Total quota consumption = 10,000 + (5,000 × 5) = 35,000 tokens

The request consumes 35,000 tokens against your TPM quota for throttling purposes. When planning capacity requirements and requesting quota increases, you need to account for this burndown rate in your calculations. If your application processes requests with this same token pattern at 100 requests per minute, the total quota consumption would be 3,500,000 TPM (100 requests × 35,000 tokens per request). When working with your AWS account manager on quota increase requests, provide your expected request volume, average input tokens per request, and average output tokens per request so they can calculate the appropriate quota allocation using this burndown multiplier.
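The burndown arithmetic above is easy to capture in a small calculator for capacity planning. The function names below are illustrative. The 5:1 multiplier and the example figures come from this post.

```python
OUTPUT_BURNDOWN = 5  # each output token counts five times toward the TPM quota


def quota_consumption(input_tokens, output_tokens, burndown=OUTPUT_BURNDOWN):
    """Quota tokens consumed by one request under the burndown rate."""
    return input_tokens + output_tokens * burndown


def required_tpm(requests_per_minute, avg_input_tokens, avg_output_tokens):
    """TPM quota needed to sustain a steady request rate."""
    per_request = quota_consumption(avg_input_tokens, avg_output_tokens)
    return requests_per_minute * per_request


print(quota_consumption(10_000, 5_000))  # 35000
print(required_tpm(100, 10_000, 5_000))  # 3500000
```

Running the same calculation with your own peak request rate and average token counts gives the figure to quote in a quota increase request.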

Managing quotas effectively

We recommend setting up CloudWatch alarms at 70–80% quota utilization so that you can request increases before hitting throttling limits. The CloudWatch metrics InputTokenCount and OutputTokenCount track your consumption in real time, while a spike in the InvocationClientErrors metric indicates throttling, providing an early warning signal for capacity planning. For detailed guidance on available metrics and how to configure monitoring for your Bedrock workloads, refer to Monitoring the performance of Amazon Bedrock.
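One way to wire up such an alarm is with CloudWatch metric math that applies the 5:1 burndown to the two token metrics. The sketch below only builds the arguments for put_metric_alarm. The helper name, alarm name, 75% threshold, three-period evaluation, and the use of the ModelId dimension with an inference profile ID are assumptions to verify against the metrics you actually see in your account.

```python
def build_tpm_alarm(model_id, tpm_quota, utilization=0.75, output_weight=5):
    """Build put_metric_alarm arguments for burndown-weighted token usage."""

    def token_metric(metric_id, metric_name):
        return {
            "Id": metric_id,
            "MetricStat": {
                "Metric": {
                    "Namespace": "AWS/Bedrock",
                    "MetricName": metric_name,
                    # Assumption: usage is reported under this dimension value.
                    "Dimensions": [{"Name": "ModelId", "Value": model_id}],
                },
                "Period": 60,  # one-minute buckets to match a TPM quota
                "Stat": "Sum",
            },
            "ReturnData": False,
        }

    return {
        "AlarmName": f"bedrock-tpm-{int(utilization * 100)}pct-{model_id}",
        "ComparisonOperator": "GreaterThanOrEqualToThreshold",
        "EvaluationPeriods": 3,
        "Threshold": tpm_quota * utilization,
        "TreatMissingData": "notBreaching",
        "Metrics": [
            token_metric("tokens_in", "InputTokenCount"),
            token_metric("tokens_out", "OutputTokenCount"),
            {
                "Id": "weighted",
                "Expression": f"tokens_in + {output_weight} * tokens_out",
                "Label": "Burndown-weighted tokens per minute",
                "ReturnData": True,
            },
        ],
    }


alarm = build_tpm_alarm("global.anthropic.claude-haiku-4-5-20251001-v1:0", 1_000_000)
# To create the alarm in your source Region (requires AWS credentials):
#   import boto3
#   boto3.client("cloudwatch", region_name="ap-southeast-1").put_metric_alarm(**alarm)
```

Alarming on the weighted expression rather than on raw token counts means the threshold lines up with how the quota is actually consumed.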

For non-time-sensitive workloads, Claude Haiku 4.5 supports batch inference at 50% cost savings. Batch requests are processed asynchronously within 24 hours and don't count toward your real-time TPM quota.

Requesting quota increases

Consider the following factors when determining whether you need quota increases: workload scale (requests per minute during peak traffic), output token ratio (high output generation consumes quota faster), and growth projections (account for 6–12 months of scaling needs). If your workload requires quotas beyond the default limits, you can request increases through the AWS Service Quotas console.

Complete the following steps to request quota increases through the AWS Service Quotas console:

- Sign in to the AWS Management Console and open AWS Service Quotas in your source Region.

- Navigate to AWS services and select Amazon Bedrock.

- Search for Global cross-Region model inference tokens per minute for your specific model.

- Select the quota and choose Request increase at account level.

- Enter your desired quota value with justification for the increase.

- Submit the request for AWS review.

Plan ahead when requesting quota increases to help ensure capacity is available before your launch or scaling events. For large-scale deployments or time-sensitive launches, we recommend working with your AWS account team to help ensure appropriate capacity planning and expedited review. With quota management strategies in place, let's explore how to choose between Opus 4.6, Sonnet 4.6, and Haiku 4.5 for your specific use cases.

Migrating from Claude 3.x to Claude 4.5 / 4.6

The migration from Claude 3.x to Claude 4.5 / 4.6 represents a substantial technological leap for organizations using Opus, Sonnet, or Haiku versions. Claude's hybrid reasoning architecture introduces significant improvements in tool integration, memory management, and context processing capabilities.

For more technical implementation guidance, see the AWS blog post Migrate from Anthropic's Claude Sonnet 3.x to Claude Sonnet 4.x on Amazon Bedrock, which provides essential best practices that are also valid for the migration to the new Claude Sonnet 4.6 model. Additionally, Anthropic's migration documentation offers model-specific optimization strategies and considerations for transitioning to Claude 4.5 / 4.6 models.

Best practices

Consider the following optimization techniques to maximize performance and minimize costs for your workloads:

1. Prompt caching for repeated context

Prompt caching delivers up to 90% cost reduction on cached tokens and up to 85% latency improvement for workloads that repeatedly use the same context. Cache system prompts exceeding 500 tokens, documentation content, few-shot examples, and tool definitions. Structure prompts with static content first, followed by dynamic queries. See Prompt caching for faster model inference in the Amazon Bedrock User Guide for implementation details.
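To illustrate the "static content first" structure, the following sketch builds a Converse request that places a cache checkpoint after the system prompt, so the static prefix can be reused across calls while the user query changes. The helper name and default values are illustrative. Check the prompt caching documentation for the minimum cacheable token count of your model.

```python
GLOBAL_HAIKU_PROFILE = "global.anthropic.claude-haiku-4-5-20251001-v1:0"


def build_cached_request(static_context, user_query, model_id=GLOBAL_HAIKU_PROFILE):
    """Build Converse arguments with a cache checkpoint after static content."""
    return {
        "modelId": model_id,
        "system": [
            # Static, reusable content goes first...
            {"text": static_context},
            # ...followed by a checkpoint that marks the end of the cached prefix.
            {"cachePoint": {"type": "default"}},
        ],
        # The dynamic query comes after the checkpoint, so it is not cached.
        "messages": [{"role": "user", "content": [{"text": user_query}]}],
        "inferenceConfig": {"maxTokens": 512},
    }


request = build_cached_request(
    "You are a support assistant. <long product documentation here>",
    "How do I reset my password?",
)
# Pass to a bedrock-runtime client: client.converse(**request)
```

Keeping the checkpoint at a fixed position and varying only the message content is what lets repeated calls hit the cache.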

2. Model selection strategy

Consider task complexity, latency requirements, cost constraints, and accuracy needs when choosing between models. We recommend Claude Opus 4.6 for the most complex tasks requiring frontier intelligence, such as complex multi-step reasoning, sophisticated autonomous agents, and precision-critical analysis. Claude Sonnet 4.6 is well suited for complex problems requiring agent planning and execution. Claude Haiku 4.5 delivers near-frontier performance at lower cost, making it optimal for high-volume operations and latency-sensitive experiences. For multi-agent architectures, consider using Opus 4.6 or Sonnet 4.6 as the orchestrator and Haiku 4.5 for parallel execution workers.

3. Adaptive and extended thinking for complex tasks

Claude Opus 4.6 supports adaptive thinking, an evolution of extended thinking that gives Claude the freedom to think if and when it determines reasoning is needed. You can guide how much thinking Claude allocates using the effort parameter, optimizing both performance and speed. Sonnet 4.6 and Haiku 4.5 support extended thinking, where the model generates intermediate reasoning steps through problem decomposition, self-correction, and exploring multiple solution paths. These thinking capabilities deliver accuracy improvements on complex reasoning tasks, so enable them selectively where the accuracy improvements justify the additional quota usage.

4. Load testing for quota validation

Run load tests before production launch to measure actual quota consumption under peak traffic. Configure your test client with adaptive retry mode (Config(retries={'mode': 'adaptive'})) to handle throttling during the test, use tools like Locust or boto3 with threading to simulate concurrent requests, and monitor the CloudWatch metrics during your load test to observe TPM and RPM consumption patterns. A test with 20 concurrent threads making continuous requests will quickly reveal whether your quota allocation matches your expected load.
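A small thread-based harness along these lines could look like the following. It is a sketch rather than a full load-testing tool. The function names, the max_attempts value, and the fixed request count are assumptions, and the weighted total reuses the 5:1 burndown rate described earlier. Note that each real request incurs cost.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def make_client(region="ap-southeast-1"):
    """Create a Bedrock runtime client that backs off when throttled."""
    import boto3  # imported lazily so the harness can be tested offline
    from botocore.config import Config

    config = Config(retries={"mode": "adaptive", "max_attempts": 10})
    return boto3.client("bedrock-runtime", region_name=region, config=config)


def converse_once(client, model_id, prompt):
    """Send one request and return (input_tokens, output_tokens)."""
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": 256},
    )
    usage = response["usage"]
    return usage["inputTokens"], usage["outputTokens"]


def run_load_test(send_request, total_requests=100, threads=20):
    """Call send_request() concurrently and summarize token consumption.

    send_request must take no arguments and return an
    (input_tokens, output_tokens) tuple.
    """
    started = time.monotonic()
    with ThreadPoolExecutor(max_workers=threads) as pool:
        futures = [pool.submit(send_request) for _ in range(total_requests)]
        usages = [future.result() for future in futures]
    input_tokens = sum(usage[0] for usage in usages)
    output_tokens = sum(usage[1] for usage in usages)
    return {
        "requests": len(usages),
        "input_tokens": input_tokens,
        "output_tokens": output_tokens,
        # Burndown-weighted total: output tokens count five times.
        "quota_tokens": input_tokens + 5 * output_tokens,
        "elapsed_seconds": time.monotonic() - started,
    }


# Example usage (requires AWS credentials):
#   client = make_client()
#   model = "global.anthropic.claude-haiku-4-5-20251001-v1:0"
#   print(run_load_test(lambda: converse_once(client, model, "Hello")))
```

Dividing quota_tokens by the elapsed minutes gives an observed TPM figure to compare against your applied quota and the CloudWatch metrics.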

Summary and next steps

Global cross-Region inference on Amazon Bedrock delivers Claude Opus 4.6, Sonnet 4.6, and Haiku 4.5 models to organizations in Thailand, Malaysia, Singapore, Taiwan, and Indonesia with two key advantages: cost savings compared to Regional profiles, and intelligent routing across more than 20 AWS Regions for maximum availability and scale.

This infrastructure enables production AI applications across Southeast Asia, from real-time customer service to financial analysis and autonomous coding assistants. Claude Opus 4.6 provides intelligence for the most demanding enterprise workloads, Sonnet 4.6 delivers balanced performance for daily production use cases, and Haiku 4.5 enables cost-efficient high-volume operations. For multi-agent architectures, combine these models to optimize for both quality and economics.

We encourage you to get started today with Global cross-Region inference in your applications. Complete the following steps to begin:

- Sign in to the Amazon Bedrock console in any of the source Regions listed above, for example Singapore (ap-southeast-1) or Jakarta (ap-southeast-3).

- Configure IAM permissions using the policy template provided in this post.

- Make your first API call using the global inference profile ID.

- Implement prompt caching for cost savings on repeated context.


About the Authors