I watched our production system fail spectacularly. Not a code bug, not an infrastructure error, but simply a misunderstanding of the optimization objectives of our AI system. We had built what we thought was a fancy document analysis pipeline with retrieval-augmented generation (RAG), vector embeddings, semantic search, and fine-tuned reranking. When we demonstrated the system, it answered questions about our client’s regulatory documents very convincingly. But in production, the system answered questions completely out of context.

The revelation hit me during a post-mortem meeting: we weren’t managing information retrieval; we were managing context distribution. And we were terrible at it.

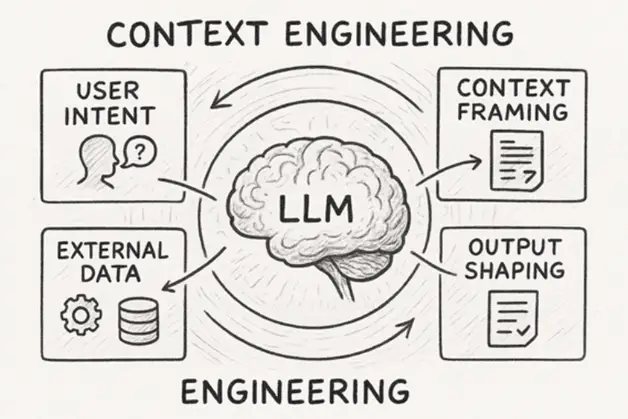

This failure taught me something that has become increasingly clear across the AI industry: context isn’t just another input parameter to optimize. Rather, it’s the central currency that determines whether an AI system delivers real value or remains a costly sideshow. Unlike traditional software engineering, in which we optimize for speed, memory, or throughput, context engineering requires us to treat information as humans do: layered, interdependent, and reliant on situational awareness.

The Context Crisis in Modern AI Systems

Before we look into potential solutions, it’s important to identify why context has become such a critical choke point. It isn’t primarily a technical problem. It’s more of a design and philosophical issue.

Most AI systems implemented today treat context as a fixed-size buffer that is filled with pertinent information ahead of processing. This worked well enough for the early implementations of chatbots and question-answering systems. However, with the increasing sophistication of AI applications and their incorporation into workflows, the buffer-based approach has proved deeply inadequate.

Let’s take a typical enterprise RAG system as an example. What happens when a user submits a question? The system performs the following actions:

- Converts the query into embeddings

- Searches a vector database for similar content

- Retrieves the top-k most similar documents

- Stuffs everything into the context window

- Generates an answer

This flow rests on the hypothesis that proximity in an embedding space can be treated as contextual relevance, an assumption that in practice fails not just occasionally, but consistently.
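The five steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the system described in this article: `embed` is a toy bag-of-words stand-in for a real embedding model, a plain Python list stands in for the vector database, and `generate` is a hypothetical placeholder for a model call.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: bag-of-words counts (stand-in for a real embedding model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve_top_k(question: str, documents: list, k: int = 2) -> list:
    """Steps 1-3: embed the question, score every document, keep the top k."""
    q = embed(question)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, documents: list, k: int = 2) -> str:
    """Step 4: stuff the retrieved text into the context window as-is."""
    context = "\n".join(retrieve_top_k(question, documents, k))
    return f"Context:\n{context}\n\nQuestion: {question}"


def generate(prompt: str) -> str:
    """Step 5: hypothetical placeholder for a language-model call."""
    return f"[answer based on {len(prompt)} prompt characters]"
```

Nothing in this flow knows who is asking, what was asked before, or whether a retrieved document is still current, which is the gap the rest of this article addresses.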

The more fundamental flaw is the view of context as static. In human conversation, context is flexible: it shifts and evolves as you move through a conversation or a workflow. For example, if you were to ask a colleague about “the Johnson report,” they don’t just scan their memory for documents containing those words. They interpret the request in light of what you’re working on and which project it belongs to.

From Retrieval to Context Orchestration

The shift from thinking about retrieval to thinking about context orchestration represents a fundamental change in how we architect AI systems. Instead of asking “What information is most similar to this query?” we need to ask “What combination of information, delivered in what sequence, will enable the most effective decision-making?”

Author-generated image using AI

This distinction matters because context isn’t additive; rather, it’s compositional. Throwing more documents into a context window doesn’t improve performance in a linear fashion. In many cases, it actually degrades performance due to what some researchers call “attention dilution.” The model’s attention spreads too thin and, as a result, its focus on important details weakens.

This is something I experienced firsthand when developing a document analysis system. Our earliest versions would fetch every applicable case, statute, and regulation for every single query. While the results covered every possible angle, they were completely devoid of utility. Picture a decision-maker overwhelmed by a flood of relevant information being read out to them.

The moment of insight came when we began to think of context as a narrative structure instead of a mere information dump. Legal reasoning works in a systematic way: articulate the facts, determine the applicable legal principles, apply them to the facts, and anticipate counterarguments.

| Aspect | RAG | Context Engineering |
| --- | --- | --- |
| Focus | Retrieval + Generation | Full lifecycle: Retrieve, Process, Manage |
| Memory Handling | Stateless | Hierarchical (short/long-term) |
| Tool Integration | Basic (optional) | Native (TIR, agents) |
| Scalability | Good for Q&A | Excellent for agents, multi-turn |
| Common Tools | FAISS, Pinecone | LangGraph, MemGPT, GraphRAG |
| Example Use Case | Document search | Autonomous coding assistant |

The Architecture of Context Engineering

Effective context engineering requires us to think about three distinct but interconnected layers: information selection, information organization, and context evolution.

Information Selection: Beyond Semantic Similarity

The first layer focuses on developing more advanced methods for deciding what belongs in the context. Traditional RAG systems place far too much emphasis on embedding similarity. This approach overlooks a key question: what information is missing, and how would that missing information contribute to understanding?

In my experience, the most useful selection strategies combine several different approaches.

Relevance cascading begins with broad semantic similarity and then applies progressively more specific filters. For instance, in the regulatory compliance system, we first select semantically relevant documents, then filter for documents from the relevant regulatory jurisdiction, then prioritize documents from the most recent regulatory period, and finally rank by recent citation frequency.
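The cascade described above can be sketched as a sequence of filters followed by a sort. This is a minimal illustration, not the production system: the `Doc` fields, the thresholds, and the `relevance_cascade` name are all hypothetical, and similarity scores are assumed to be precomputed.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    title: str
    similarity: float       # assumed precomputed semantic similarity in [0, 1]
    jurisdiction: str
    year: int
    recent_citations: int


def relevance_cascade(docs, jurisdiction, current_year,
                      min_similarity=0.5, recent_years=3, limit=5):
    """Apply progressively narrower stages; all thresholds are illustrative."""
    # Stage 1: broad semantic similarity.
    candidates = [d for d in docs if d.similarity >= min_similarity]
    # Stage 2: keep only the relevant regulatory jurisdiction.
    candidates = [d for d in candidates if d.jurisdiction == jurisdiction]

    # Stages 3 and 4: recent-period documents first, then by citation count.
    def priority(d):
        is_recent = (current_year - d.year) <= recent_years
        return (is_recent, d.recent_citations)

    return sorted(candidates, key=priority, reverse=True)[:limit]
```

Ordering the stages from cheap and broad to narrow and specific keeps the later, more opinionated ranking steps working on a small candidate set.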

Temporal context weighting acknowledges that the relevance of information changes over time. A regulation from five years ago may be semantically linked to contemporary issues. However, if the regulation is outdated, incorporating it into the context would be contextually inaccurate. We can implement decay functions that automatically downweight outdated information unless it is explicitly tagged as foundational or precedential.
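One way to express such a decay function is an exponential half-life, sketched below. The half-life of three years, the `foundational` flag, and the function names are assumptions chosen for illustration; the right decay curve depends on the domain.

```python
def temporal_weight(age_years: float, half_life_years: float = 3.0,
                    foundational: bool = False) -> float:
    """Weight in (0, 1]: halves every `half_life_years` unless exempt."""
    if foundational:
        # Foundational or precedential material is never downweighted.
        return 1.0
    return 0.5 ** (age_years / half_life_years)


def weighted_score(similarity: float, age_years: float, **kwargs) -> float:
    """Combine semantic similarity with the temporal weight."""
    return similarity * temporal_weight(age_years, **kwargs)
```

With these illustrative settings, a six-year-old document with similarity 0.9 scores below a current document with similarity 0.7, unless the older one is tagged as foundational.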

User context integration goes beyond the immediate query to consider the user’s role, current projects, and historical interaction patterns. When a compliance officer asks about data retention requirements, the system should prioritize different information than when a software engineer asks the same question, even if the semantic content is identical.
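A simple way to illustrate this is a role-aware rerank, in which the same candidates are ordered differently depending on who is asking. The roles, tags, and boost values below are hypothetical, and a real system would likely also draw on project and interaction history.

```python
# Hypothetical per-role boosts keyed by document tag.
ROLE_BOOSTS = {
    "compliance_officer": {"legal": 0.3, "policy": 0.2},
    "software_engineer": {"implementation": 0.3, "api": 0.2},
}


def rerank_for_user(candidates, role):
    """candidates: list of (title, similarity, tags). Returns titles, best first."""
    boosts = ROLE_BOOSTS.get(role, {})

    def score(candidate):
        _, similarity, tags = candidate
        return similarity + sum(boosts.get(tag, 0.0) for tag in tags)

    return [c[0] for c in sorted(candidates, key=score, reverse=True)]


candidates = [
    ("Retention statute summary", 0.80, {"legal"}),
    ("Database TTL configuration guide", 0.82, {"implementation"}),
]
for_officer = rerank_for_user(candidates, "compliance_officer")
for_engineer = rerank_for_user(candidates, "software_engineer")
```

Here `for_officer` puts the statute summary first while `for_engineer` puts the configuration guide first, even though the query and the similarity scores are identical.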

Information Organization: The Grammar of Context

Once we’ve extracted the relevant information, how we represent it in the context window is crucial. This is where typical RAG systems fall short: they treat the context window as an unstructured bucket rather than a thoughtfully assembled narrative.

Organizing context effectively also requires understanding the process known to cognitive scientists as “information chunking.” Human working memory can hold roughly seven discrete pieces of information at once. Beyond that, our understanding falls off precipitously. The same is true for AI systems, not because their cognitive shortcomings are identical, but because their training leads them to imitate human-like reasoning.

In practice, this means developing context templates that mirror how experts in a domain naturally organize information. For financial analysis, this might mean starting with market context, then moving to company-specific information, then to the specific metric or event being analyzed. For medical diagnosis, it might mean patient history, followed by current symptoms, followed by relevant medical literature.

But here’s where it gets interesting: the optimal organization pattern isn’t fixed. It should adapt based on the complexity and type of query. Simple factual questions can handle more loosely organized context, while complex analytical tasks require more structured information hierarchies.
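A context template can be as simple as an ordered list of sections per domain. The sketch below uses the two orderings mentioned above; the section keys, the `assemble_context` name, and the `structured` switch are assumptions made for illustration.

```python
# Section orderings taken from the examples in the text; key names are hypothetical.
TEMPLATES = {
    "financial_analysis": ["market_context", "company_information", "metric_or_event"],
    "medical_diagnosis": ["patient_history", "current_symptoms", "medical_literature"],
}


def assemble_context(domain, snippets, structured=True):
    """snippets maps section name -> list of strings; output follows template order."""
    order = TEMPLATES[domain]
    if not structured:
        # Simple factual queries: loose concatenation, still in template order.
        return "\n".join(s for section in order for s in snippets.get(section, []))
    parts = []
    for section in order:
        items = snippets.get(section, [])
        if items:  # skip empty sections rather than emitting bare headings
            heading = section.replace("_", " ").title()
            parts.append(f"## {heading}\n" + "\n".join(f"- {i}" for i in items))
    return "\n\n".join(parts)
```

The `structured` flag is a crude stand-in for the idea that organization should adapt to the query: a router could set it to `False` for simple lookups and `True` for analytical tasks.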

Context Evolution: Making AI Systems Conversational

The third layer, context evolution, is the most challenging but also the most important one. The majority of existing systems consider each interaction to be independent; therefore, they recreate the context from zero for each query. Yet effective human communication requires preserving and evolving shared context throughout a conversation or workflow.

Architecting a system whose context evolves is another matter, however: what changes is how we manage state. We’re not merely maintaining data state; we’re also maintaining understanding state.

This “context memory,” a structured representation of what the system has learned in past interactions, became part of our Document Response system. When a user asks a follow-up question, the system doesn’t treat the new query as if it exists in isolation.

It considers how the new query relates to the previously established context, what assumptions can be carried forward, and what new information needs to be integrated.
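A minimal sketch of such a memory is shown below. It is not the implementation from our system: the `ContextMemory` class, its fields, and the rule that a topic change drops earlier assumptions are all illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ContextMemory:
    """Hypothetical structured record of what a session has established so far."""
    topic: Optional[str] = None
    assumptions: Dict[str, str] = field(default_factory=dict)
    turns: List[str] = field(default_factory=list)

    def update(self, query: str, topic: Optional[str] = None, **new_assumptions: str) -> None:
        if topic and topic != self.topic:
            # Topic shift: earlier assumptions no longer carry forward.
            self.topic = topic
            self.assumptions = {}
        self.assumptions.update(new_assumptions)
        self.turns.append(query)

    def contextualize(self, query: str) -> str:
        """Prefix a follow-up query with the context established so far."""
        if self.topic is None:
            return query
        carried = [f"topic={self.topic}"]
        carried += [f"{k}={v}" for k, v in self.assumptions.items()]
        return f"[{'; '.join(carried)}] {query}"
```

After a first turn about EU data retention, a follow-up such as "What about backups?" would be expanded with the carried topic and jurisdiction before retrieval, instead of being searched in isolation.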

This approach has profound implications for user experience. Instead of having to re-establish context with every interaction, users can build on earlier conversations, ask follow-up questions that assume shared understanding, and engage in the kind of iterative exploration that characterizes effective human-AI collaboration.

The Economics of Context: Why Efficiency Matters

The cost of processing context is proportional to the compute it consumes, and complex AI applications that handle context inefficiently may soon become cost-prohibitive to maintain.

Do the math: if your context window holds 8,000 tokens and you handle some 1,000 queries per day, you’re consuming 8 million tokens per day for context alone. At current pricing, the cost of context inefficiency can easily dwarf the cost of the generation task itself.
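The arithmetic above is easy to parameterize. In the sketch below, the token and query counts come from the example in the text, while `price_per_million_tokens` is a placeholder to fill in from your provider’s price list, not a real quote.

```python
def daily_context_tokens(context_tokens_per_query: int, queries_per_day: int) -> int:
    """Tokens spent on context alone, before any generation."""
    return context_tokens_per_query * queries_per_day


def daily_context_cost(tokens: int, price_per_million_tokens: float) -> float:
    """Cost of those tokens at a given (placeholder) input price."""
    return tokens / 1_000_000 * price_per_million_tokens


baseline = daily_context_tokens(8_000, 1_000)   # 8,000,000 tokens per day
trimmed = daily_context_tokens(3_000, 1_000)    # hypothetical tighter context
tokens_saved = baseline - trimmed
```

Whatever the unit price turns out to be, the saving scales linearly with the number of context tokens removed per query.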

But the economics extend beyond the direct costs of computation. Poor context management directly causes slower response times and thus a worse user experience and lower system usage. It also increases the likelihood of repeated errors, which has downstream costs in user confidence and in the manual patches created to fix issues.

The most successful AI implementations I’ve observed treat context as a constrained resource that requires careful optimization. They implement context budgeting: explicit allocation of context space to different types of information based on query characteristics. They use context compression techniques to maximize information density. And they implement context caching strategies to avoid recomputing frequently used information.
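Context budgeting can be sketched as a per-query-type split of the window, followed by trimming each category to its share. The categories, percentages, and whitespace-based token count below are assumptions for illustration; a real system would use the model’s own tokenizer.

```python
# Hypothetical budget profiles: percentage of the window per information type.
BUDGET_PROFILES = {
    "factual": {"retrieved_documents": 70, "conversation_history": 20, "user_profile": 10},
    "analytical": {"retrieved_documents": 50, "conversation_history": 30, "user_profile": 20},
}


def allocate_budget(query_type: str, total_tokens: int) -> dict:
    """Split the context window according to the profile for this query type."""
    profile = BUDGET_PROFILES[query_type]
    return {name: total_tokens * pct // 100 for name, pct in profile.items()}


def fit_to_budget(snippets, max_tokens, count_tokens=lambda s: len(s.split())):
    """Keep snippets in priority order until the budget would be exceeded."""
    kept, used = [], 0
    for snippet in snippets:
        cost = count_tokens(snippet)
        if used + cost > max_tokens:
            break
        kept.append(snippet)
        used += cost
    return kept
```

Because `fit_to_budget` stops at the first snippet that does not fit, the input should already be sorted by priority, for example by one of the selection strategies described earlier.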

Measuring Context Effectiveness

One of the challenges in context engineering is developing metrics that actually correlate with system effectiveness. Traditional information retrieval metrics like precision and recall are necessary but not sufficient. They measure whether we’re retrieving relevant information, but they don’t measure whether we’re providing useful context.

In our implementations, we’ve found that the most predictive metrics are often behavioral rather than accuracy-based. Context effectiveness correlates strongly with user engagement patterns: how often users ask follow-up questions, how frequently they act on system recommendations, and how often they return to use the system for similar tasks.

We’ve also implemented what we call “context efficiency metrics,” which measure how much value we’re extracting per token of context consumed. High-performing context systems consistently provide actionable insights with minimal information overhead.
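One possible form of such a metric is sketched below. It assumes, purely for illustration, that "value" is approximated by whether the user acted on the answer; the definition of value and the per-1,000-token scaling are assumptions, not the exact metric we use.

```python
def context_efficiency(interactions) -> float:
    """Acted-on answers per 1,000 context tokens.

    interactions: iterable of (context_tokens, acted_on) pairs, where
    acted_on is a hypothetical behavioral signal (True/False).
    """
    interactions = list(interactions)
    total_tokens = sum(tokens for tokens, _ in interactions)
    useful = sum(1 for _, acted_on in interactions if acted_on)
    return useful * 1000 / total_tokens if total_tokens else 0.0


session = [(2000, True), (2000, False), (1000, True)]
score = context_efficiency(session)
```

Tracking this number over time makes it visible when a change adds context tokens without adding answers that users actually act on.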

Perhaps most importantly, we measure context evolution effectiveness by tracking how system performance improves within conversational sessions. Effective context engineering should lead to better answers as conversations progress, as the system builds a more sophisticated understanding of user needs and situational requirements.

The Tools and Techniques of Context Engineering

Developing effective context engineering requires both new tools and new ways of thinking about old tools. New tools are released every month, but the strategies that ultimately work in production seem to follow familiar patterns:

Context routers make decisions dynamically based on characteristics of the query. Instead of applying a fixed retrieval strategy, they assess aspects of the query such as intent, complexity, and situational considerations, and use them to choose how information is selected and organized.
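A router can start out as a handful of heuristics. The sketch below is deliberately simple and makes several assumptions: the cue words, the strategy names, and the `top_k` values are hypothetical, and a production router might use a trained classifier rather than keyword matching.

```python
from dataclasses import dataclass

# Hypothetical cue words that suggest an analytical question.
ANALYTICAL_CUES = {"compare", "why", "analyze", "impact", "evaluate"}


@dataclass(frozen=True)
class Route:
    strategy: str
    top_k: int
    structured: bool


def route_query(query: str, has_history: bool = False) -> Route:
    """Pick a retrieval and organization strategy from simple query features."""
    words = [w.strip("?.,!") for w in query.lower().split()]
    if any(w in ANALYTICAL_CUES for w in words):
        # Complex analysis: retrieve more and organize it hierarchically.
        return Route("multi_stage", top_k=10, structured=True)
    if has_history and len(words) <= 5:
        # Short follow-up: lean on what the session already established.
        return Route("reuse_session_context", top_k=2, structured=False)
    return Route("direct_lookup", top_k=3, structured=False)
```

The value of the pattern lies less in the specific rules than in having a single place where query characteristics are turned into retrieval decisions.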

Context compressors borrow from information theory to pack as much information density as possible into a context window. These are not merely text summarization tools; they are systems that retain the most contextually rich information while reducing noise and redundancy.
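The redundancy-reduction part of compression can be illustrated with a simple near-duplicate filter. Note that this sketch swaps in word-level Jaccard overlap as a stand-in technique; it is not an information-theoretic compressor, and the `max_overlap` threshold is an arbitrary assumption.

```python
def _tokens(text: str) -> set:
    return set(text.lower().split())


def jaccard(a: str, b: str) -> float:
    """Word-set overlap between two snippets, from 0.0 to 1.0."""
    ta, tb = _tokens(a), _tokens(b)
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


def drop_redundant(snippets, max_overlap: float = 0.6):
    """Keep a snippet only if it is not too similar to one already kept."""
    kept = []
    for snippet in snippets:
        if all(jaccard(snippet, k) <= max_overlap for k in kept):
            kept.append(snippet)
    return kept


snippets = [
    "Records must be kept for five years",
    "Records must be kept for five years minimum",
    "Backups are encrypted at rest",
]
compressed = drop_redundant(snippets)
```

Here the second snippet is dropped because it repeats the first almost word for word, while the unrelated third snippet is kept.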

Context state managers build structured representations of conversational state and workflow state, so that AI systems learn over time rather than starting anew with each interaction.

Context engineering requires thinking about AI systems as partners in ongoing conversations rather than oracle systems that respond to isolated queries. This changes how we design interfaces, how we structure data, and how we measure success.

Looking Forward: Context as Competitive Advantage

As AI functionality becomes more standardized, context engineering is becoming the differentiator.

The AI applications that stand out may not employ more advanced model architectures or more complex algorithms. Rather, they extract greater value and reliability from existing capabilities through better context engineering.

The implications run deeper than any specific implementation, reaching into organizational strategy. Companies that treat context engineering as a core competency within their strategy will outperform competitors who simply emphasize model capabilities and neglect their information architectures, user workflows, and domain-specific reasoning patterns.

A new survey analyzing over 1,400 AI papers has found something quite interesting: we’ve been thinking about AI context completely wrong. While everyone’s been obsessing over bigger models and longer context windows, researchers found that our AIs are already very good at understanding complex information; they just suck at using it properly. The real bottleneck isn’t model intelligence; it’s how we feed information to these systems.

Conclusion

The failure that started this exploration taught me that building effective AI systems isn’t primarily about having the best models or the most sophisticated algorithms. It’s about understanding and engineering the flow of information in ways that enable effective decision-making.

Context engineering is becoming the differentiator for AI systems that provide real value, as opposed to those that remain interesting demos.

The future of AI is not about creating systems that understand everything; it’s about creating systems that accurately understand what to pay attention to, when to pay attention, and how that attention can be converted into action and insight.