This post is cowritten by David Stewart and Matthew Persons from Oumi.

Fine-tuning open source large language models (LLMs) often stalls between experimentation and production. Training configurations, artifact management, and scalable deployment each require different tools, which creates friction when moving from rapid experimentation to secure, enterprise-grade environments.

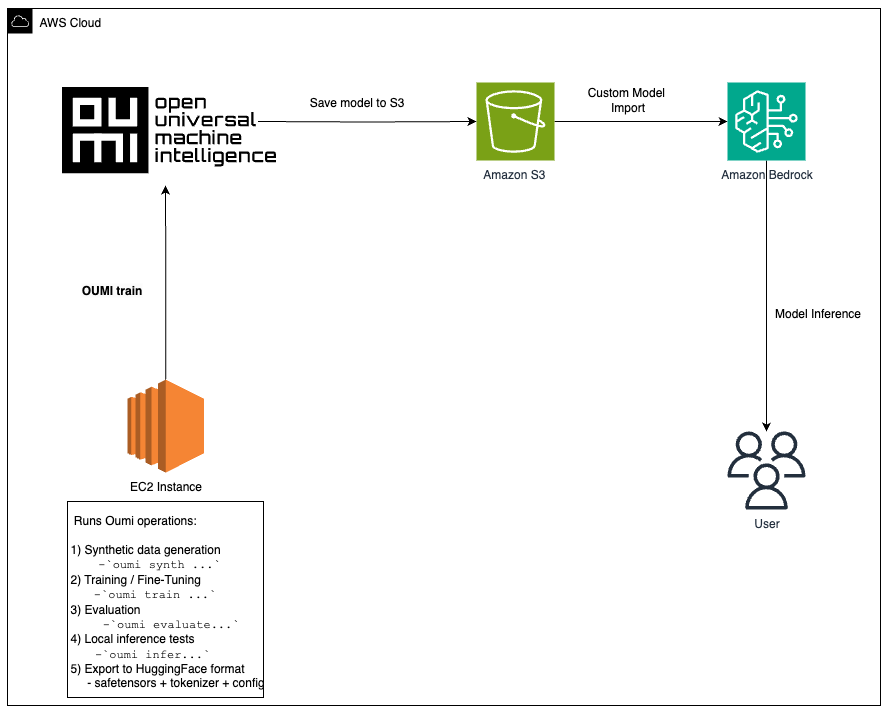

In this post, we show how to fine-tune a Llama model using Oumi on Amazon EC2 (with the option to create synthetic data using Oumi), store artifacts in Amazon S3, and deploy to Amazon Bedrock using Custom Model Import for managed inference. Although we use EC2 in this walkthrough, you can also run fine-tuning on other compute services such as Amazon SageMaker or Amazon Elastic Kubernetes Service, depending on your needs.

Benefits of Oumi and Amazon Bedrock

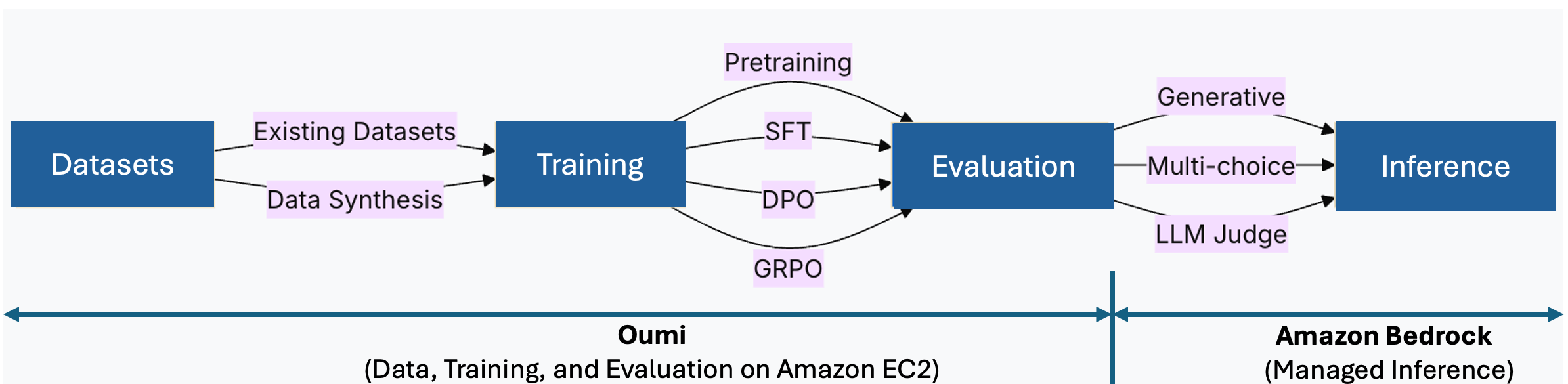

Oumi is an open source system that streamlines the foundation model lifecycle, from data preparation and training to evaluation. Instead of assembling separate tools for each stage, you define a single configuration and reuse it across runs.

Key benefits for this workflow:

- Recipe-driven training: Define your configuration once and reuse it across experiments, reducing boilerplate and improving reproducibility

- Flexible fine-tuning: Choose full fine-tuning or parameter-efficient methods such as LoRA, based on your constraints

- Integrated evaluation: Score checkpoints using benchmarks or LLM-as-a-judge without extra tooling

- Data synthesis: Generate task-specific datasets when production data is limited

Amazon Bedrock complements this by providing managed, serverless inference. After fine-tuning with Oumi, you import your model through Custom Model Import in three steps: upload to S3, create the import job, and invoke. There is no inference infrastructure to manage. The following architecture diagram shows how these components work together.

Figure 1: Oumi manages data, training, and evaluation on EC2. Amazon Bedrock provides managed inference through Custom Model Import.
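The companion scripts wrap these steps, but it can help to see the underlying AWS CLI calls for the import step. The following is a minimal sketch that assumes AWS CLI v2 with permissions for `bedrock:CreateModelImportJob` and `bedrock:GetModelImportJob`. The function names and the default 30-second polling interval are our own choices, not part of the companion repository.

```bash
#!/usr/bin/env bash
# Sketch: create a Custom Model Import job and wait for it to finish.
# Function names and the polling interval are illustrative assumptions.

start_import_job() {
  # $1 = job name, $2 = imported model name, $3 = S3 URI of the model files, $4 = IAM role ARN
  # Prints the ARN of the new import job.
  aws bedrock create-model-import-job \
    --job-name "$1" \
    --imported-model-name "$2" \
    --role-arn "$4" \
    --model-data-source "{\"s3DataSource\":{\"s3Uri\":\"$3\"}}" \
    --query jobArn --output text
}

wait_for_import_job() {
  # $1 = job ARN, $2 = seconds between polls (default 30)
  # Prints the imported model ARN on success; returns 1 if the job fails.
  local job_arn=$1 interval=${2:-30} status
  while true; do
    status=$(aws bedrock get-model-import-job --job-identifier "$job_arn" \
      --query status --output text) || return 1
    case $status in
      Completed)
        aws bedrock get-model-import-job --job-identifier "$job_arn" \
          --query importedModelArn --output text
        return 0 ;;
      Failed)
        echo "import job failed: $job_arn" >&2
        return 1 ;;
      *)
        sleep "$interval" ;;  # still InProgress
    esac
  done
}
```

For example, run `job=$(start_import_job my-import-job my-fine-tuned-llama "s3://$S3_BUCKET/$S3_PREFIX/" "$BEDROCK_ROLE_ARN")` and then `MODEL_ARN=$(wait_for_import_job "$job")`.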

Solution overview

This workflow consists of three stages:

- Fine-tune with Oumi on EC2: Launch a GPU-optimized instance (for example, g5.12xlarge or p4d.24xlarge), install Oumi, and run training with your configuration. For larger models, Oumi supports distributed training with Fully Sharded Data Parallel (FSDP), DeepSpeed, and Distributed Data Parallel (DDP) strategies across multi-GPU or multi-node setups.

- Store artifacts on S3: Upload model weights, checkpoints, and logs for durable storage.

- Deploy to Amazon Bedrock: Create a Custom Model Import job pointing to your S3 artifacts. Amazon Bedrock provisions the inference infrastructure automatically, and client applications call the imported model through the Amazon Bedrock Runtime APIs.
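You can decide whether a run needs the distributed launcher from the number of visible GPUs. The following is a minimal sketch with two assumptions: that the `oumi train -c` and `oumi distributed torchrun -m oumi train -c` invocations from the Oumi documentation apply to your version, and that the FSDP, DeepSpeed, or DDP settings live in the training config itself. `gpu_count` and `launch_training` are hypothetical helpers.

```bash
#!/usr/bin/env bash
# Sketch: single-process training on one GPU, distributed launch on several.
# Assumes the oumi CLI forms described above; helper names are hypothetical.

gpu_count() {
  # nvidia-smi -L prints one "GPU <n>: ..." line per visible GPU
  nvidia-smi -L 2>/dev/null | grep -c '^GPU '
}

launch_training() {
  # $1 = path to the Oumi training config
  local config=$1 gpus
  gpus=$(gpu_count)
  if [ "${gpus:-0}" -gt 1 ]; then
    # Multi-GPU: let Oumi's torchrun wrapper start one worker per GPU
    oumi distributed torchrun -m oumi train -c "$config"
  else
    oumi train -c "$config"
  fi
}
```

On a g6.12xlarge, which has four NVIDIA L4 GPUs, `launch_training configs/oumi-config.yaml` would take the distributed branch.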

This architecture addresses common challenges in moving fine-tuned models to production:

Technical implementation

Let’s walk through a hands-on workflow using the meta-llama/Llama-3.2-1B-Instruct model as an example. We selected this model because it pairs well with fine-tuning on an AWS g6.12xlarge EC2 instance, but the same workflow applies to many other open source models (larger models might require larger instances or distributed training across instances). For more information, see the Oumi model fine-tuning recipes and the model architectures supported by Amazon Bedrock Custom Model Import.

Prerequisites

To complete this walkthrough, you need:

Set up AWS resources

- Clone this repository on your local machine:

git clone https://github.com/aws-samples/sample-oumi-fine-tuning-bedrock-cmi.git

cd sample-oumi-fine-tuning-bedrock-cmi

- Run the setup script to create IAM roles and an S3 bucket, and to launch a GPU-optimized EC2 instance:

./scripts/setup-aws-env.sh [--dry-run]

The script prompts for your AWS Region, S3 bucket name, EC2 key pair name, and security group ID, then creates all required resources. Defaults: g6.12xlarge instance, Deep Learning Base AMI with Single CUDA (Amazon Linux 2023), and 100 GB gp3 storage. Note: If you don't have permissions to create IAM roles or launch EC2 instances, share this repository with your IT administrator and ask them to complete this section to set up your AWS environment.

- After the instance is running, the script outputs the SSH command and the Amazon Bedrock import role ARN (needed in Step 5). SSH into the instance and continue with Step 1.

See iam/README.md for IAM policy details, scoping guidance, and validation steps.
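The setup script prompts for a Region, key pair, and security group, and a few read-only AWS CLI calls can confirm those values before any resources are created. The following is a minimal sketch. `preflight` is a hypothetical helper, not part of the companion repository, and it only checks that the resources exist, not that your IAM permissions are sufficient.

```bash
#!/usr/bin/env bash
# Sketch: read-only checks for the values the setup script prompts for.

preflight() {
  # $1 = Region, $2 = EC2 key pair name, $3 = security group ID
  local region=$1 key=$2 sg=$3 ok=0
  aws sts get-caller-identity --query Arn --output text >/dev/null \
    || { echo "no valid AWS credentials" >&2; ok=1; }
  aws ec2 describe-key-pairs --region "$region" --key-names "$key" >/dev/null \
    || { echo "key pair not found: $key" >&2; ok=1; }
  aws ec2 describe-security-groups --region "$region" --group-ids "$sg" >/dev/null \
    || { echo "security group not found: $sg" >&2; ok=1; }
  return "$ok"  # 0 only if every check passed
}
```

For example, `preflight us-west-2 my-key-pair sg-0123456789abcdef0` returns non-zero and reports each failing check on stderr.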

Step 1: Set up the EC2 environment

Complete the following steps to set up the EC2 environment.

- On the EC2 instance (Amazon Linux 2023), update the system and install base dependencies:

sudo yum update -y

sudo yum install python3 python3-pip git -y

- Clone the companion repository:

git clone https://github.com/aws-samples/sample-oumi-fine-tuning-bedrock-cmi.git

cd sample-oumi-fine-tuning-bedrock-cmi

- Configure environment variables (replace the values with the Region and bucket name you used with the setup script):

export AWS_REGION=us-west-2

export S3_BUCKET=your-bucket-name

export S3_PREFIX=your-s3-prefix

aws configure set default.region "$AWS_REGION"

- Run the setup script to create a Python virtual environment, install Oumi, validate GPU availability, and configure Hugging Face authentication. See setup-environment.sh for options.

./scripts/setup-environment.sh

source .venv/bin/activate

- Authenticate with Hugging Face to access gated model weights. Generate an access token at huggingface.co/settings/tokens, then run:

hf auth login

Step 2: Configure training
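Before configuring training, confirm that the variables exported in Step 1 are set in your current shell, for example after reconnecting over SSH. The later upload, import, and cleanup commands depend on them. The following is a minimal sketch; `require_env` is a hypothetical helper, not part of the companion repository.

```bash
#!/usr/bin/env bash
# Sketch: fail fast if any required environment variable is unset or empty.

require_env() {
  local name missing=0
  for name in "$@"; do
    # ${!name} expands the variable whose name is stored in $name
    if [ -z "${!name}" ]; then
      echo "missing environment variable: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Typical use before training and deployment:
# require_env AWS_REGION S3_BUCKET S3_PREFIX BEDROCK_ROLE_ARN
```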

The default dataset is tatsu-lab/alpaca, configured in configs/oumi-config.yaml. Oumi downloads it automatically during training, so no manual download is required. To use a different dataset, update the dataset_name parameter in configs/oumi-config.yaml. See the Oumi dataset docs for supported formats.
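If you replace tatsu-lab/alpaca with your own data, JSON Lines is a convenient format to validate before a long run. The following sketch writes a one-record file and checks that every line parses as JSON. The chat-style `messages` layout and the helper names are our assumptions. Confirm the exact record format, and the matching dataset_name value, in the Oumi dataset docs.

```bash
#!/usr/bin/env bash
# Sketch: write a tiny chat-style JSONL dataset and validate it line by line.
# The record layout is an assumption; check the Oumi dataset docs.

write_sample_dataset() {
  # $1 = output path
  cat > "$1" <<'EOF'
{"messages": [{"role": "user", "content": "What is the capital of France?"}, {"role": "assistant", "content": "The capital of France is Paris."}]}
EOF
}

validate_jsonl() {
  # python3 -m json.tool --json-lines (Python 3.8+) exits non-zero on the first invalid line
  python3 -m json.tool --json-lines "$1" >/dev/null
}
```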

[Optional] Generate synthetic training data with Oumi:

To generate synthetic data using Amazon Bedrock as the inference backend, replace the model_name placeholder in configs/synthesis-config.yaml with an Amazon Bedrock model ID you have access to (for example, anthropic.claude-sonnet-4-6). See the Oumi data synthesis docs for details. Then run:

oumi synth -c configs/synthesis-config.yaml

Step 3: Fine-tune the model

Fine-tune the model using Oumi’s built-in training recipe for Llama-3.2-1B-Instruct:

./scripts/fine-tune.sh --config configs/oumi-config.yaml --output-dir models/final [--dry-run]

To customize hyperparameters, edit oumi-config.yaml.

Note: If you generated synthetic data in Step 2, update the dataset path in the config before training.
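Custom Model Import reads model files in Hugging Face format from S3, so checking the output directory before uploading can save a failed import job. The following is a minimal sketch. It assumes the required files are `.safetensors` weights plus `config.json`, `tokenizer_config.json`, and `tokenizer.json`, which is our reading of the requirements for Llama-family models. Confirm the list for your architecture in the Custom Model Import documentation.

```bash
#!/usr/bin/env bash
# Sketch: pre-upload check of a fine-tuned model directory for Custom Model Import.
# The required-file list is an assumption; confirm it in the Bedrock documentation.

check_model_dir() {
  # $1 = model directory, for example models/final
  local dir=$1 missing=0 f
  for f in config.json tokenizer_config.json tokenizer.json; do
    if [ ! -f "$dir/$f" ]; then
      echo "missing: $dir/$f" >&2
      missing=1
    fi
  done
  # Require at least one weights file in safetensors format
  local weights=("$dir"/*.safetensors)
  if [ ! -e "${weights[0]}" ]; then
    echo "no .safetensors weights found in $dir" >&2
    missing=1
  fi
  return "$missing"
}
```

Run `check_model_dir models/final` before uploading. A non-zero return code comes with a list of the missing files on stderr.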

Monitor GPU utilization with nvidia-smi or the Amazon CloudWatch agent. For long-running jobs, configure Amazon EC2 automatic instance recovery to handle instance interruptions.
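`nvidia-smi` can print per-GPU utilization as CSV, which makes it easy to spot a GPU that sits idle during a multi-GPU run. The following is a minimal sketch using the standard `--query-gpu` and `--format=csv,noheader,nounits` options; the function names are ours.

```bash
#!/usr/bin/env bash
# Sketch: summarize per-GPU utilization from one nvidia-smi sample.

gpu_util_summary() {
  # Reads "index, utilization.gpu, memory.used" rows (no header, no units) from stdin
  awk -F', *' '
    { printf "GPU %s: %s%% util, %s MiB used\n", $1, $2, $3; total += $2; n++ }
    END { if (n) printf "average: %.1f%%\n", total / n }'
}

sample_gpus() {
  nvidia-smi --query-gpu=index,utilization.gpu,memory.used \
    --format=csv,noheader,nounits | gpu_util_summary
}
```

Calling `sample_gpus` every few minutes while training runs is usually enough to catch a stalled or unused GPU.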

Step 4: Evaluate the model (Optional)

You can evaluate the fine-tuned model using standard benchmarks:

oumi evaluate -c configs/evaluation-config.yaml

The evaluation config specifies the model path and benchmark tasks (for example, MMLU). To customize, edit evaluation-config.yaml. For LLM-as-a-judge approaches and additional benchmarks, see Oumi’s evaluation guide.
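A fine-tuned model's score is easier to interpret next to the base model's score on the same tasks. The following is a minimal sketch that runs one evaluation config against several models. It assumes the Oumi CLI accepts a dotted `--model.model_name` override; verify this against the Oumi CLI docs for your version. `compare_models` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Sketch: evaluate several models with the same Oumi evaluation config.
# Assumes `oumi evaluate` accepts a --model.model_name override.

compare_models() {
  # $1 = evaluation config; remaining args = model names or local model paths
  local config=$1 model
  shift
  for model in "$@"; do
    echo "== evaluating $model"
    oumi evaluate -c "$config" --model.model_name "$model" || return 1
  done
}

# compare_models configs/evaluation-config.yaml meta-llama/Llama-3.2-1B-Instruct models/final
```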

Step 5: Deploy to Amazon Bedrock

Complete the following steps to deploy the model to Amazon Bedrock:

- Set BEDROCK_ROLE_ARN to the Amazon Bedrock import role ARN that the setup script printed. Then upload the model artifacts to S3 and import the model to Amazon Bedrock:

./scripts/upload-to-s3.sh --bucket $S3_BUCKET --source models/final --prefix $S3_PREFIX

./scripts/import-to-bedrock.sh --model-name my-fine-tuned-llama --s3-uri s3://$S3_BUCKET/$S3_PREFIX --role-arn $BEDROCK_ROLE_ARN --wait

- The import script outputs the model ARN on completion. Set MODEL_ARN to this value (format: arn:aws:bedrock:<region>:<account-id>:imported-model/<model-id>).

- Invoke the model on Amazon Bedrock:

./scripts/invoke-model.sh --model-id $MODEL_ARN --prompt "Translate this text to French: What is the capital of France?"

- Amazon Bedrock creates a managed inference environment automatically. For IAM role setup, see bedrock-import-role.json.

- Enable S3 versioning on the bucket to support rollback of model revisions. For SSE-KMS encryption and bucket policy hardening, see the security scripts in the companion repository.
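The invoke script hides the request format. If you call `aws bedrock-runtime invoke-model` directly, an instruct model generally behaves best when the prompt follows its chat template. The following is a minimal sketch that assumes the Llama 3 chat template and the `prompt`, `max_gen_len`, and `temperature` body fields. Check the Custom Model Import documentation for the request schema your imported model accepts. The helper names are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: build a Llama 3 chat-formatted request body and invoke an imported model.
# Body field names are assumptions; verify them for your model architecture.

json_escape() {
  # Escape backslashes, double quotes, and newlines (not a full JSON encoder)
  local s=$1
  s=${s//\\/'\\'}
  s=${s//\"/'\"'}
  s=${s//$'\n'/'\n'}
  printf '%s' "$s"
}

build_llama_body() {
  # $1 = user message, $2 = max_gen_len (default 256), $3 = temperature (default 0.5)
  local msg
  msg=$(json_escape "$1")
  printf '{"prompt": "<|begin_of_text|><|start_header_id|>user<|end_header_id|>\\n\\n%s<|eot_id|><|start_header_id|>assistant<|end_header_id|>\\n\\n", "max_gen_len": %d, "temperature": %s}' \
    "$msg" "${2:-256}" "${3:-0.5}"
}

invoke_imported() {
  # $1 = imported model ARN, $2 = user message, $3 = output file for the raw response
  aws bedrock-runtime invoke-model \
    --model-id "$1" \
    --content-type application/json \
    --cli-binary-format raw-in-base64-out \
    --body "$(build_llama_body "$2")" \
    "$3"
}
```

For example, `invoke_imported "$MODEL_ARN" "What is the capital of France?" response.json` writes the raw JSON response to response.json. Because `json_escape` only handles backslashes, double quotes, and newlines, a tool such as jq is more robust for arbitrary input.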

Step 6: Clean up

To avoid ongoing charges, remove the resources created during this walkthrough:
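Two cautions before you run the cleanup commands:

- INSTANCE_ID is not set by any earlier step, so copy it from the EC2 console.
- An empty S3_PREFIX turns the recursive delete into a delete of the whole bucket.

The following guarded sketch addresses both; its helper names are hypothetical. Also, if you enabled S3 versioning in Step 5, `aws s3 rm` only adds delete markers. Earlier object versions remain and keep incurring storage charges until you remove them, for example with a lifecycle rule.

```bash
#!/usr/bin/env bash
# Sketch: guarded versions of the S3 and EC2 cleanup commands.

cleanup_s3_prefix() {
  # $1 = bucket, $2 = prefix; refuses to run without a non-empty prefix
  local bucket=$1 prefix=${2%/}
  if [ -z "$bucket" ] || [ -z "$prefix" ]; then
    echo "refusing to delete: bucket and non-empty prefix are required" >&2
    return 1
  fi
  aws s3 rm "s3://$bucket/$prefix/" --recursive
}

terminate_instance() {
  # $1 = instance ID; waits until EC2 reports the instance as terminated
  [ -n "$1" ] || { echo "instance ID required" >&2; return 1; }
  aws ec2 terminate-instances --instance-ids "$1" >/dev/null &&
    aws ec2 wait instance-terminated --instance-ids "$1"
}
```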

aws ec2 terminate-instances --instance-ids $INSTANCE_ID

aws s3 rm s3://$S3_BUCKET/$S3_PREFIX/ --recursive

aws bedrock delete-imported-model --model-identifier $MODEL_ARN

Conclusion

In this post, you learned how to fine-tune a Llama-3.2-1B-Instruct model using Oumi on EC2 and deploy it with Amazon Bedrock Custom Model Import. This approach gives you full control over fine-tuning with your own data while using managed inference in Amazon Bedrock.

The companion sample-oumi-fine-tuning-bedrock-cmi repository provides scripts, configurations, and IAM policies to get started. Clone it, swap in your dataset, and deploy a custom model to Amazon Bedrock.

To get started, explore the resources below and start building your own fine-tuning-to-deployment pipeline on Oumi and AWS. Happy building!

Learn more

Acknowledgement

Special thanks to Pronoy Chopra and Jon Turdiev for their contributions.

About the authors