Nemotron 3 Super is now available as a fully managed and serverless model on Amazon Bedrock, joining the Nemotron Nano models that are already available within the Amazon Bedrock environment.

With NVIDIA Nemotron open models on Amazon Bedrock, you can accelerate innovation and deliver tangible business value without managing infrastructure complexities. You can power your generative AI applications with Nemotron through the fully managed inference of Amazon Bedrock, using its extensive features and tooling.

This post explores the technical characteristics of the Nemotron 3 Super model and discusses potential application use cases. It also provides technical guidance to get started using this model for your generative AI applications within the Amazon Bedrock environment.

About Nemotron 3 Super

Nemotron 3 Super is a hybrid Mixture of Experts (MoE) model with leading compute efficiency and accuracy for multi-agent applications and for specialized agentic AI systems. The model is released with open weights, datasets, and recipes so developers can customize, improve, and deploy the model on their infrastructure for enhanced privacy and security.

Model overview:

- Architecture:

  - MoE with Hybrid Transformer-Mamba architecture.

  - Supports a token budget for providing improved accuracy with minimal reasoning token generation.

- Accuracy:

  - Highest throughput efficiency in its size class and up to 5x over the previous Nemotron Super model.

  - Leading accuracy for reasoning and agentic tasks among leading open models and up to 2x higher accuracy over the previous version.

  - Achieves high accuracy across leading benchmarks, including AIME 2025, Terminal-Bench, SWE-Bench Verified and Multilingual, and RULER.

  - Multi-environment RL training gave the model leading accuracy across 10+ environments with NVIDIA NeMo.

- Model size: 120B parameters with 12B active parameters

- Context length: up to 256K tokens

- Model input: Text

- Model output: Text

- Languages: English, French, German, Italian, Japanese, Spanish, and Chinese

Latent MoE

Nemotron 3 Super uses latent MoE, where experts operate on a shared latent representation before outputs are projected back to token space. This approach allows the model to call on 4x more experts at the same inference cost, enabling better specialization around subtle semantic structures, domain abstractions, or multi-hop reasoning patterns.
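To make the data flow concrete, here is a minimal toy sketch of the idea in pure Python: project a token vector down to a smaller latent space, route to the top-k experts there, mix their outputs, and project back up. Everything in it is an illustrative assumption rather than the actual Nemotron 3 Super design: the class name `ToyLatentMoE`, the dimensions, the random linear experts, and the choice to run the router on the latent vector.

```python
import math
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def random_matrix(rows, cols, rng):
    """Small random weights; a stand-in for trained parameters."""
    scale = 1.0 / math.sqrt(cols)
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class ToyLatentMoE:
    """Hypothetical latent-MoE layer: experts live in a smaller latent space."""

    def __init__(self, d_model=16, d_latent=4, n_experts=8, top_k=2, seed=0):
        rng = random.Random(seed)
        self.top_k = top_k
        self.down = random_matrix(d_latent, d_model, rng)    # token space -> latent
        self.up = random_matrix(d_model, d_latent, rng)      # latent -> token space
        self.router = random_matrix(n_experts, d_latent, rng)
        # Each expert is a single linear map in latent space (an assumption).
        self.experts = [random_matrix(d_latent, d_latent, rng)
                        for _ in range(n_experts)]

    def forward(self, token):
        latent = matvec(self.down, token)
        gate = softmax(matvec(self.router, latent))
        # Keep only the top-k experts and renormalize their gate weights.
        chosen = sorted(range(len(gate)), key=gate.__getitem__,
                        reverse=True)[:self.top_k]
        norm = sum(gate[i] for i in chosen)
        mixed = [0.0] * len(latent)
        for i in chosen:
            expert_out = matvec(self.experts[i], latent)
            weight = gate[i] / norm
            mixed = [m + weight * o for m, o in zip(mixed, expert_out)]
        # Project the mixed latent output back to token space.
        return matvec(self.up, mixed), chosen


layer = ToyLatentMoE()
output, chosen = layer.forward([1.0] * 16)
print(len(output), chosen)
```

In this toy, each expert holds a 4x4 weight matrix instead of a 16x16 one, which illustrates why a fixed compute budget can cover more experts when they operate in a smaller space. The 4x figure quoted above comes from the source, not from this sketch.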

Multi-token prediction (MTP)

MTP enables the model to predict multiple future tokens in a single forward pass, significantly increasing throughput for long reasoning sequences and structured outputs. For planning, trajectory generation, extended chain-of-thought, or code generation, MTP reduces latency and improves agent responsiveness.
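The throughput effect can be illustrated with a toy decoding loop that counts forward passes. This is only a sketch under simplifying assumptions: `toy_model` is a hypothetical stand-in that "predicts" by counting upward, and every predicted token is accepted, whereas a real system may verify and reject some of them.

```python
def toy_model(prefix, n_heads):
    """Stand-in for one forward pass: returns the next n_heads tokens.

    The 'language' here is just counting upward from the last token.
    """
    start = prefix[-1] + 1
    return [start + i for i in range(n_heads)]


def generate(prompt, n_new, n_heads):
    """Generate n_new tokens, taking up to n_heads tokens per forward pass."""
    tokens = list(prompt)
    passes = 0
    while len(tokens) - len(prompt) < n_new:
        remaining = n_new - (len(tokens) - len(prompt))
        predicted = toy_model(tokens, n_heads)
        tokens.extend(predicted[:remaining])  # assumes every token is accepted
        passes += 1
    return tokens, passes


single, single_passes = generate([0], n_new=12, n_heads=1)
multi, multi_passes = generate([0], n_new=12, n_heads=4)
print(single_passes, multi_passes)  # 12 3
```

Both runs produce the same 12 new tokens, but the four-token variant needs a quarter of the forward passes in this idealized setting.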

To learn more about Nemotron 3 Super’s architecture and how it’s trained, see Introducing Nemotron 3 Super: an Open Hybrid Mamba Transformer MoE for Agentic Reasoning.

NVIDIA Nemotron 3 Super use cases

Nemotron 3 Super helps power various use cases for different industries. Some of the use cases include:

- Software development: Assist with tasks like code summarization.

- Finance: Accelerate loan processing by extracting data, analyzing income patterns, and detecting fraudulent operations, which can help reduce cycle times and risk.

- Cybersecurity: Can be used to triage issues, perform in-depth malware analysis, and proactively hunt for security threats.

- Search: Can help understand user intent to activate the right agents.

- Retail: Can help optimize inventory management and enhance in-store service with real-time, personalized product recommendations and support.

- Multi-agent workflows: Orchestrates task-specific agents (planning, tool use, verification, and domain execution) to automate complex, end-to-end business processes.

Get started with NVIDIA Nemotron 3 Super in Amazon Bedrock

Complete the following steps to test NVIDIA Nemotron 3 Super in Amazon Bedrock:

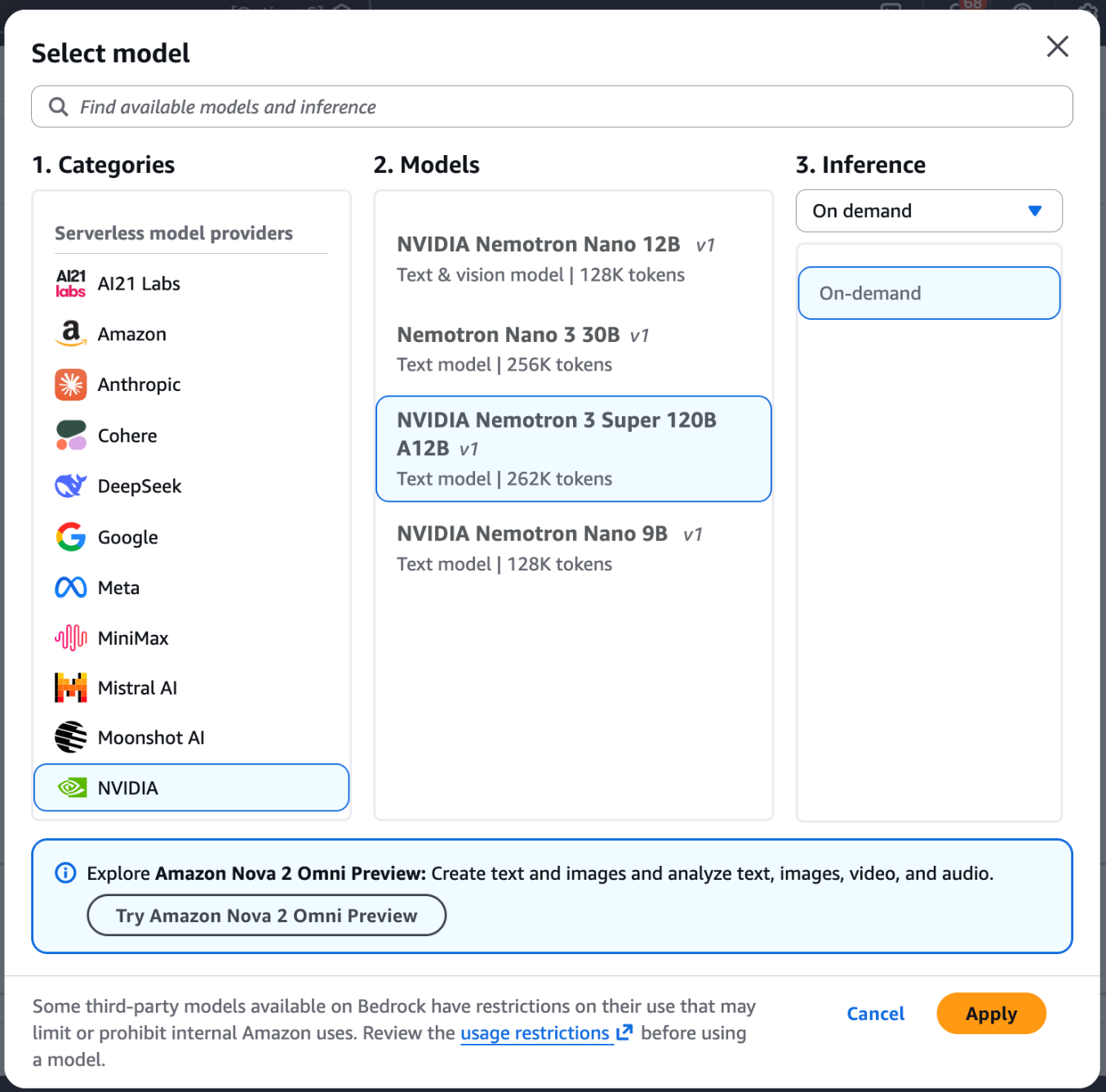

- Navigate to the Amazon Bedrock console and select Chat/Text playground from the left menu (under the Test section).

- Choose Select model in the upper-left corner of the playground.

- Choose NVIDIA from the category list, then select NVIDIA Nemotron 3 Super.

- Choose Apply to load the model.

After completing the previous steps, you can test the model immediately. To truly showcase Nemotron 3 Super’s capability, we’ll move beyond simple syntax and task it with a complex engineering challenge. High-reasoning models excel at “system-level” thinking where they must balance architectural trade-offs, concurrency, and distributed state management.

Let’s use the following prompt to design a globally distributed service:

"Design a distributed rate-limiting service in Python that must support 100,000 requests per second across multiple geographic regions.

1. Provide a high-level architectural strategy (e.g., Token Bucket vs. Fixed Window) and justify your choice for a global scale.
2. Write a thread-safe implementation using Redis as the backing store.
3. Address the 'race condition' problem when multiple instances update the same counter.
4. Include a pytest suite that simulates network latency between the app and Redis."

This prompt requires the model to operate as a senior distributed-systems engineer: reasoning about trade-offs, producing thread-safe code, anticipating failure modes, and validating everything with realistic tests, all in a single coherent response.

Utilizing the AWS CLI and SDKs

You can access the model programmatically using the model ID nvidia.nemotron-super-3-120b. The model supports both the InvokeModel and Converse APIs through the AWS Command Line Interface (AWS CLI) and the AWS SDK. It also supports the Amazon Bedrock OpenAI SDK-compatible API.

Run the following command to invoke the model directly from your terminal using the AWS CLI and the InvokeModel API:

If you want to invoke the model through the AWS SDK for Python (Boto3), use the following script to send a prompt to the model, in this case by using the Converse API:
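A minimal sketch of such a script, assuming Boto3 is installed and AWS credentials with Bedrock access are configured. The Region (`us-west-2`), the inference settings, and the helper names `build_converse_request`, `extract_text`, and `ask` are illustrative assumptions; only the model ID comes from this post.

```python
MODEL_ID = "nvidia.nemotron-super-3-120b"  # model ID given in this post
REGION = "us-west-2"  # assumption: use a Region where the model is offered


def build_converse_request(prompt, max_tokens=1024, temperature=0.2):
    """Build keyword arguments for the Converse API (values are illustrative)."""
    return {
        "modelId": MODEL_ID,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }


def extract_text(response):
    """Join the text blocks of a Converse response, skipping non-text blocks."""
    blocks = response["output"]["message"]["content"]
    return "".join(block["text"] for block in blocks if "text" in block)


def ask(prompt, region=REGION):
    """Send one prompt through the Converse API and return the reply text."""
    import boto3  # imported here so the helpers above work without the SDK

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_request(prompt))
    return extract_text(response)
```

Calling `ask("Design a distributed rate-limiting service in Python ...")` sends the prompt and returns the model's text reply. `extract_text` filters on the `text` key because a reasoning model may return other content block types alongside the answer.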

To invoke the model through the Amazon Bedrock OpenAI-compatible ChatCompletions endpoint, you can proceed as follows using the OpenAI SDK:
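A minimal sketch, assuming the `openai` Python package is installed and an Amazon Bedrock API key is stored in the `AWS_BEARER_TOKEN_BEDROCK` environment variable. The endpoint URL pattern and the Region below are assumptions that should be checked against the Amazon Bedrock documentation for this model; the helper names are illustrative.

```python
import os

MODEL_ID = "nvidia.nemotron-super-3-120b"  # model ID given in this post


def bedrock_openai_base_url(region):
    """Assumed URL pattern for the Bedrock OpenAI-compatible endpoint."""
    return f"https://bedrock-runtime.{region}.amazonaws.com/openai/v1"


def build_chat_request(prompt):
    """Build keyword arguments for a Chat Completions call."""
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }


def ask(prompt, region="us-west-2"):  # Region is an assumption
    """Send one prompt through the OpenAI SDK and return the reply text."""
    from openai import OpenAI  # imported here so the helpers work without it

    client = OpenAI(
        base_url=bedrock_openai_base_url(region),
        api_key=os.environ["AWS_BEARER_TOKEN_BEDROCK"],  # Bedrock API key
    )
    completion = client.chat.completions.create(**build_chat_request(prompt))
    return completion.choices[0].message.content
```

Because only the base URL and API key differ from a standard OpenAI SDK setup, existing Chat Completions code can usually be pointed at the model by changing those two values and the model name.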

Conclusion

In this post, we showed you how to get started with NVIDIA Nemotron 3 Super on Amazon Bedrock for building the next generation of agentic AI applications. By combining the model’s advanced Hybrid Transformer-Mamba architecture and Latent MoE with the fully managed, serverless infrastructure of Amazon Bedrock, organizations can now deploy high-reasoning, efficient applications at scale without the heavy lifting of backend management. Ready to see what this model can do for your specific workflow?

- Try it now: Head over to the Amazon Bedrock console to experiment with NVIDIA Nemotron 3 Super in the model playground.

- Build: Explore the AWS SDK to integrate Nemotron 3 Super into your existing generative AI pipelines.

About the authors