Agentic AI isn’t a feature you turn on. It’s a shift in how work is defined, who does it, and how decisions get made.

Most enterprises learn this the hard way. They launch pilots that stall the moment they hit real processes, systems, and governance. The pattern repeats: vague use cases, prototypes that can’t survive messy data, autonomy outpacing controls, compliance blocking launch dates, datasets too weak for autonomous decisions. Beneath all of it lies the same root problem: no one agreed on what success looks like.

The AWS Generative AI Innovation Center has helped 1,000+ customers move AI into production, delivering hundreds of thousands in documented productivity gains. Our cross-functional teams of scientists, strategists, and machine learning specialists work side-by-side with customers from ideation through deployment. Increasingly, that work involves agents.

In this post, we share guidance for leaders across the C-suite: CTOs, CISOs, CDOs, and Chief Data Science/AI officers, as well as business owners and compliance leads. Our core observation: when agentic AI works, it looks less like magic software and more like a well-run team, where each agent has a clear job, a supervisor, a playbook, and a way to improve over time.

This is Part I of a two-part series. Here we establish the foundation: why the value gap is mostly an execution problem, and what makes work truly agent-shaped. Part II will speak directly to each C-suite persona, in the language of their responsibilities.

The shared problem as an enterprise

The value gap is mostly about how you work

If you sit in an executive meeting and ask, “Are we investing enough in AI?”, the answer is almost always yes. If you then ask, “Which specific workflows are materially better today because of AI agents, and how do we know?”, the room gets quiet.

What sits between those two answers isn’t a missing foundation model or a missing vendor. It’s a missing operating model. In organizations where agents create visible value, three things tend to be true:

- The work is defined in painful detail. People can describe, step-by-step, what arrives, what happens, and what “done” means. They can also describe what happens when things go wrong.

- Autonomy is bounded. Agents are given clear authority limits, explicit escalation rules, and surfaces where humans can see and override decisions.

- Improvement is a habit, not a project. There’s a regular cadence where teams look at how agents behaved last week, where they helped, where they caused friction, and what to change next.

Where these things are missing, the same symptoms appear: impressive proofs of concept that don’t leave the lab, pilots that quietly die after a few months, and leaders who stop asking, “What can we do next?” and start asking, “Why are we spending so much on this?”
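To make “autonomy is bounded” concrete, here is a minimal sketch of an authority limit with an explicit escalation rule. Everything in it is an assumption for illustration: the `AuthorityLimit` fields, the `decide` function, the refund action, and the thresholds are hypothetical, not part of any AWS service or a prescribed design.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"    # the agent acts on its own
    ESCALATE = "escalate"  # the agent hands off to a human reviewer


@dataclass(frozen=True)
class AuthorityLimit:
    """Hypothetical per-action limit; field names are illustrative."""
    action: str
    max_amount: float      # largest value the agent may approve alone
    min_confidence: float  # below this confidence, always escalate


def decide(limit: AuthorityLimit, amount: float, confidence: float) -> Decision:
    """Apply the escalation rules before the agent is allowed to act."""
    if amount > limit.max_amount:
        return Decision.ESCALATE
    if confidence < limit.min_confidence:
        return Decision.ESCALATE
    return Decision.EXECUTE


# Illustrative values only; real limits come from the business owner.
refund_limit = AuthorityLimit(action="issue_refund", max_amount=100.0, min_confidence=0.8)
print(decide(refund_limit, amount=40.0, confidence=0.95))   # within both limits
print(decide(refund_limit, amount=250.0, confidence=0.95))  # exceeds the amount limit
```

The point of writing the limit down as data is that humans can read, review, and change it without touching the agent itself.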

What makes work agent-shaped

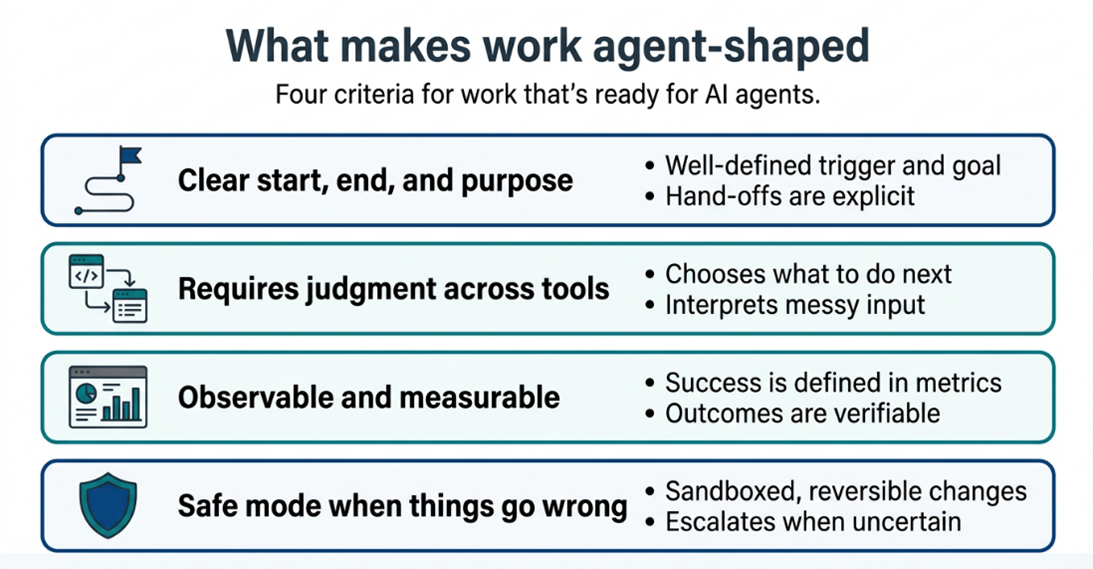

Most organizations start with the question, “Where can we use an agent?” A better starting point is, “Where is the work already structured like a job an agent could do?” In practice, that means four things.

First, the work has a clear start, end, and purpose. A claim arrives. An invoice appears. A support ticket is opened. The agent can recognize when it has enough information to begin, what goal it’s working toward, and when the task is complete or needs to be handed off. This is more than just a trigger and a finish line. The agent needs to understand the intent behind the work well enough to handle reasonable variations without being explicitly told what to do for each one. If your team can’t articulate what “done well” looks like for a given task, including how to handle exceptions and edge cases, the work isn’t yet ready for an agent.

Second, the work requires judgment across tools. The agent doesn’t follow a fixed script. It reasons about what information it needs, decides which systems to query, interprets what it finds, and determines the right action based on context. The difference from traditional automation is that the path isn’t hard-coded: the agent adapts its approach, handles variations, and knows when a situation falls outside its competence. But agents act through tools, and those tools must exist before the agent does. Your systems need well-defined, secure, and reliable interfaces that an agent can call to read data, write updates, trigger transactions, or send communications. If the process today is humans reasoning in email and spreadsheets, you have both process design and tooling work to do before you have a viable agent use case.

Third, success is observable and measurable. Someone who doesn’t work on the team can look at the output and say, “This is correct,” or “This needs fixing” without reading minds. That might mean checking whether a ticket was resolved on time, whether a form is complete and consistent, whether a transaction balances, or whether a customer received the response they needed. But observability goes beyond spot-checking outputs. You need to see how the agent arrived at its answer: what data it used, what tools it called, what options it considered, and why it chose one over another. If you can’t evaluate the reasoning, you can’t improve the agent, and you can’t defend its decisions when something goes wrong.
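One way to picture this kind of observability is a structured record written for every decision. The sketch below is an assumption for illustration only: `DecisionTrace`, `ToolCall`, their fields, and the sample ticket are hypothetical names, not a standard schema. The record captures the data used, the tools called, the options considered, and the reason for the choice.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class ToolCall:
    tool: str            # name of the interface the agent called
    arguments: dict      # what it asked for
    result_summary: str  # short description of what came back


@dataclass
class DecisionTrace:
    """Hypothetical record of a single agent decision."""
    task_id: str
    inputs_used: list[str]
    tool_calls: list[ToolCall] = field(default_factory=list)
    options_considered: list[str] = field(default_factory=list)
    chosen_option: str = ""
    rationale: str = ""

    def is_reviewable(self) -> bool:
        """True when an outside reviewer has enough to evaluate the reasoning."""
        return bool(
            self.inputs_used
            and self.options_considered
            and self.chosen_option in self.options_considered
            and self.rationale
        )

    def to_json(self) -> str:
        """Serialize the trace so it can be stored and audited later."""
        return json.dumps(asdict(self), indent=2)


trace = DecisionTrace(
    task_id="ticket-001",
    inputs_used=["ticket body", "customer plan tier"],
    tool_calls=[ToolCall("lookup_order", {"order_id": "A1"}, "order found, shipped")],
    options_considered=["route_to_billing", "route_to_shipping"],
    chosen_option="route_to_shipping",
    rationale="Ticket mentions a delayed delivery and the order is marked shipped.",
)
print(trace.is_reviewable())
print(trace.to_json())
```

A trace that fails `is_reviewable` is itself a signal: the agent produced an answer that no one can evaluate or defend.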

Fourth, the work has a safe failure mode. The best early agent candidates are tasks where errors are caught quickly, corrected cheaply, and don’t create irreversible harm. If an agent misclassifies a support ticket, it can be rerouted. If it drafts an incorrect response, a human can edit it before it’s sent. But if an agent approves a payment, executes a trade, or sends a legally binding communication, the cost of being wrong is fundamentally different. Start with work where actions are reversible or where the agent’s output is a recommendation that a human acts on. As trust, controls, and evaluation mature, you earn the right to move into higher-stakes work where the agent closes the loop on its own.
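The reversibility rule can be sketched as a simple routing check. This is a hedged illustration under stated assumptions: the `Action` type, the `route` function, and the `allow_irreversible` flag are hypothetical names, and whether a given action counts as reversible is a business judgment, not something code can determine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    reversible: bool  # can the effect be undone quickly and cheaply?


def route(action: Action, allow_irreversible: bool = False) -> str:
    """Decide how an action is handled.

    "execute"   -> the agent closes the loop on its own
    "recommend" -> the agent drafts the action and a human carries it out
    """
    if action.reversible or allow_irreversible:
        return "execute"
    return "recommend"


reroute_ticket = Action("reroute_ticket", reversible=True)
approve_payment = Action("approve_payment", reversible=False)

print(route(reroute_ticket))   # reversible, so the agent may act
print(route(approve_payment))  # irreversible, so a human acts on the recommendation
```

The `allow_irreversible` flag stands in for the right that is earned over time: it stays off until trust, controls, and evaluation have matured for that specific workflow.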

When these four elements are present, you have something that can become a job for an agent. When they’re missing, the conversation drifts back into vague labels like assistant, copilot, or automation that mean different things to every person in the room.

Call to Action

Ready to Close the Execution Gap?

The patterns described in Part I aren’t theoretical. They show up in organizations of every size, across every industry. The good news: the gap between where you are and where you want to be isn’t a technology gap. It’s an execution gap, and execution gaps are solvable.

Here are three things you can do this week:

- Name the work, not the wish. Pick one workflow in your organization that has a clear start, a clear end, and a measurable definition of “done.” That’s your first candidate for an agent.

- Ask the hard question in the room. At your next leadership meeting, don’t ask, “Are we investing enough in AI?” Ask, “Which specific workflows are materially better today because of AI agents, and how do we know?” The silence that follows is your roadmap.

- Start the job description. Before any technology decision, write down what the agent would do, what tools it would need, what success looks like, and what happens when it fails. If you can’t fill in that page, you’re not ready to build, and that’s valuable information.
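The job description in the third step can be treated as a page with four required sections. The sketch below is an illustrative assumption, not a template from the Innovation Center: `AgentJobDescription`, its field names, and `missing_sections` are hypothetical. It shows how an incomplete page becomes visible immediately.

```python
from dataclasses import dataclass, fields


@dataclass
class AgentJobDescription:
    """Hypothetical one-page job description for an agent."""
    what_it_does: str = ""
    tools_needed: str = ""
    success_looks_like: str = ""
    when_it_fails: str = ""


def missing_sections(job: AgentJobDescription) -> list[str]:
    """Return the names of sections that are still blank."""
    return [f.name for f in fields(job) if not getattr(job, f.name).strip()]


draft = AgentJobDescription(
    what_it_does="Classify and route inbound support tickets.",
    tools_needed="Ticketing system read and update interface.",
)

gaps = missing_sections(draft)
if gaps:
    print("Not ready to build. Still missing:", ", ".join(gaps))
else:
    print("Job description is complete.")
```

An empty result does not mean the agent will work. It only means the team has written down answers that can now be challenged.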

Coming up in Part II: Guidance by Persona

Knowing that agentic AI is an execution problem is one thing. Knowing your role in solving it is another.

In Part II, we speak directly to the leaders who have to make this work in practice: the line-of-business owner who needs agents tied to KPIs, the CTO deciding between ten one-off agents or a platform for 100, the CISO who must treat agents like colleagues rather than code, the CDO who needs to make data boring in the best possible way, the Chief AI Officer for whom evaluation is the product, and the compliance leader who must design for audits before they happen.

Each persona. Each responsibility. Each concrete move.

Partner with the Generative AI Innovation Center

You don’t have to navigate this journey alone. Whether you’re planning your first agentic pilot or scaling to an enterprise-wide capability, reach out to the Generative AI Innovation Center team to start a conversation grounded in your workflows, your data, and your business outcomes.

About the authors