This submit was written with Martyna Shallenberg and Brode Mccrady from Myriad Genetics.

Healthcare organizations face challenges in processing and managing excessive volumes of advanced medical documentation whereas sustaining high quality in affected person care. These organizations want options to course of paperwork successfully to satisfy rising calls for. Myriad Genetics, a supplier of genetic testing and precision drugs options serving healthcare suppliers and sufferers worldwide, addresses this problem.

Myriad’s Income Engineering Division processes 1000’s of healthcare paperwork every day throughout Girls’s Well being, Oncology, and Psychological Well being divisions. The corporate classifies incoming paperwork into lessons corresponding to Check Request Varieties, Lab Outcomes, Scientific Notes, and Insurance coverage to automate Prior Authorization workflows. The system routes these paperwork to applicable exterior distributors for processing primarily based on their recognized doc class. They manually carry out Key Info Extraction (KIE) together with insurance coverage particulars, affected person data, and take a look at outcomes to find out Medicare eligibility and help downstream processes.

As doc volumes elevated, Myriad confronted challenges with its present system. The automated doc classification answer labored however was expensive and time-consuming. Info extraction remained handbook attributable to complexity. To handle excessive prices and gradual processing, Myriad wanted a greater answer.

This submit explores how Myriad Genetics partnered with the AWS Generative AI Innovation Heart (GenAIIC) to rework their healthcare doc processing pipeline utilizing Amazon Bedrock and Amazon Nova basis fashions. We element the challenges with their present answer, and the way generative AI lowered prices and improved processing pace.

We study the technical implementation utilizing AWS’s open supply GenAI Clever Doc Processing (GenAI IDP) Accelerator answer, the optimization methods used for doc classification and key data extraction, and the measurable enterprise impression on Myriad’s prior authorization workflows. We cowl how we used immediate engineering strategies, mannequin choice methods, and architectural selections to construct a scalable answer that processes advanced medical paperwork with excessive accuracy whereas decreasing operational prices.

Doc processing bottlenecks limiting healthcare operations

Myriad Genetics’ every day operations depend upon effectively processing advanced medical paperwork containing important data for affected person care workflows and regulatory compliance. Their present answer mixed Amazon Textract for Optical Character Recognition (OCR) with Amazon Comprehend for doc classification.

Regardless of 94% classification accuracy, this answer had operational challenges:

- Operational prices: 3 cents per web page leading to $15,000 month-to-month bills per enterprise unit

- Classification latency: 8.5 minutes per doc, delaying downstream prior authorization workflows

Info extraction was completely handbook, requiring contextual understanding to distinguish important scientific distinctions (like “is metastatic” versus “isn’t metastatic”) and to find data like insurance coverage numbers and affected person data throughout various doc codecs. This processing burden was substantial, with Girls’s Well being customer support requiring as much as 10 full-time workers contributing 78 hours every day within the Girls’s Well being enterprise unit alone.

Myriad wanted an answer to:

- Scale back doc classification prices whereas sustaining or bettering accuracy

- Speed up doc processing to remove workflow bottlenecks

- Automate data extraction for medical paperwork

- Scale throughout a number of enterprise items and doc varieties

Amazon Bedrock and generative AI

Fashionable massive language fashions (LLMs) course of advanced healthcare paperwork with excessive accuracy attributable to pre-training on huge textual content corpora. This pre-training allows LLMs to know language patterns and doc constructions with out characteristic engineering or massive labeled datasets. Amazon Bedrock is a completely managed service that gives a broad vary of high-performing LLMs from main AI corporations. It gives the safety, privateness, and accountable AI capabilities that healthcare organizations require when processing delicate medical data. For this answer, we used Amazon’s latest basis fashions:

- Amazon Nova Professional: An economical, low-latency mannequin very best for doc classification

- Amazon Nova Premier: A sophisticated mannequin with reasoning capabilities for data extraction

Answer overview

We applied an answer with Myriad utilizing AWS’s open supply GenAI IDP Accelerator. The accelerator gives a scalable, serverless structure that converts unstructured paperwork into structured information. The accelerator processes a number of paperwork in parallel by configurable concurrency limits with out overwhelming downstream companies. Its built-in analysis framework lets customers present anticipated output by the person interface (UI) and consider generated outcomes to iteratively customise configuration and enhance accuracy.

The accelerator presents 1-click deployment with a selection of pre-built patterns optimized for various workloads with completely different configurability, price, and accuracy necessities:

- Sample 1 – Makes use of Amazon Bedrock Information Automation, a completely managed service that gives wealthy out-of-the-box options, ease of use, and easy per-page pricing. This sample is advisable for many use instances.

- Sample 2 – Makes use of Amazon Textract and Amazon Bedrock with Amazon Nova, Anthropic’s Claude, or customized fine-tuned Amazon Nova fashions. This sample is right for advanced paperwork requiring customized logic.

- Sample 3 – Makes use of Amazon Textract, Amazon SageMaker with a fine-tuned mannequin for classification, and Amazon Bedrock for extraction. This sample is right for paperwork requiring specialised classification.

Sample 2 proved most fitted for this undertaking, assembly the important requirement of low price whereas providing flexibility to optimize accuracy by immediate engineering and LLM choice. This sample presents a no-code configuration – customise doc varieties, extraction fields, and processing logic by configuration, editable within the net UI.

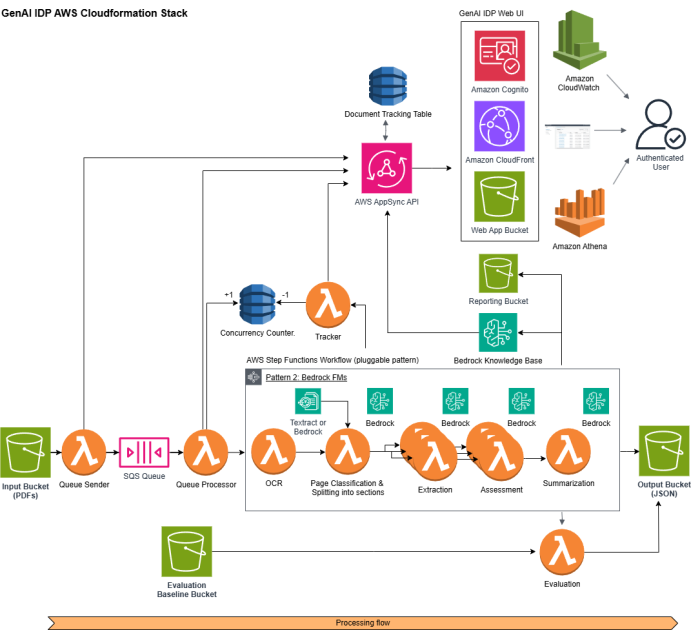

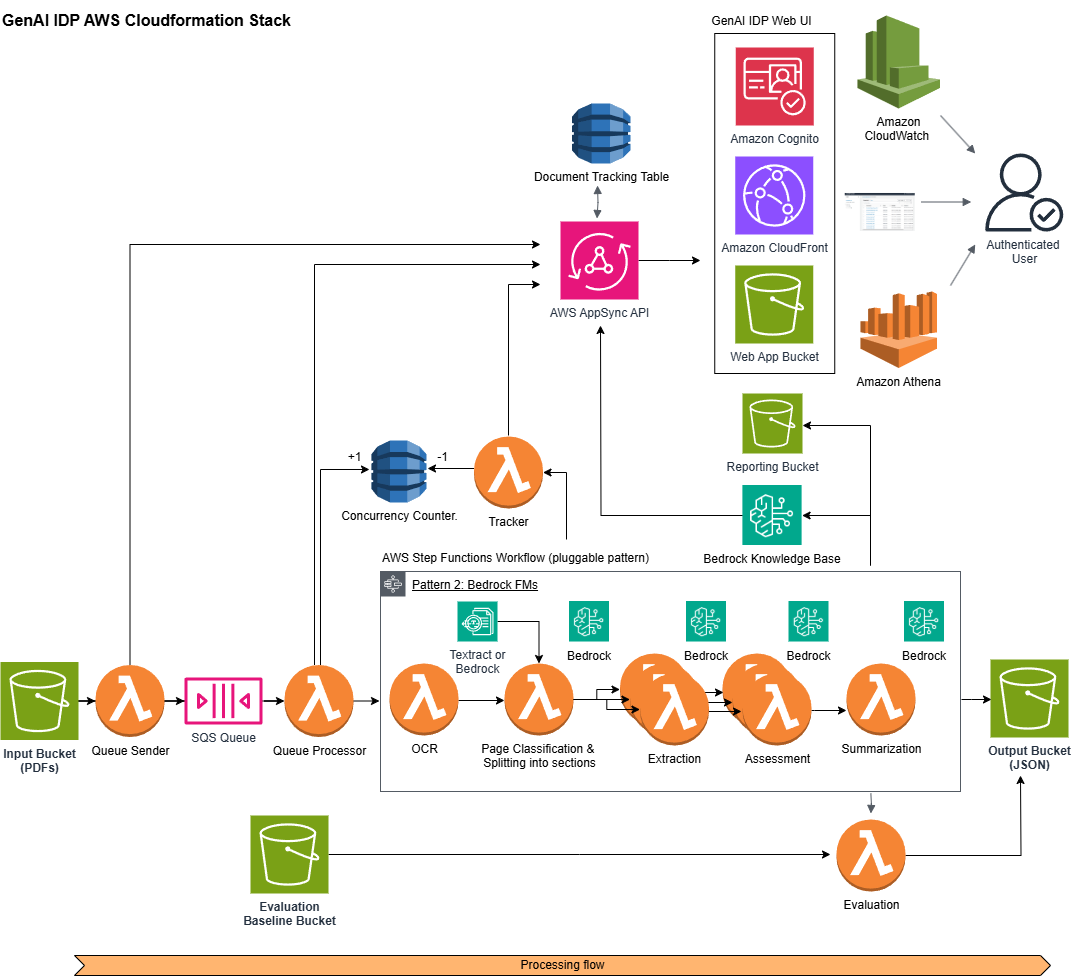

We personalized the definitions of doc lessons, key attributes and their definitions per doc class, LLM selection, LLM hyperparameters, and classification and extraction LLM prompts through Sample 2’s config file. In manufacturing, Myriad built-in this answer into their present event-driven structure. The next diagram illustrates the manufacturing pipeline:

- Doc Ingestion: Incoming order occasions set off doc retrieval from supply doc administration methods, with cache optimization for beforehand processed paperwork.

- Concurrency Administration: DynamoDB tracked concurrent AWS Step Perform jobs whereas Amazon Easy Queue Service (SQS) queues information exceeding concurrency limits for doc processing.

- Textual content Extraction: Amazon Textract extracted textual content, format data, tables and varieties from the normalized paperwork.

- Classification: The configured LLM analyzed the extracted content material primarily based on the personalized doc classification immediate supplied within the config file and classifies paperwork into applicable classes.

- Key Info Extraction: The configured LLM extracted medical data utilizing extraction immediate supplied within the config file.

- Structured Output: The pipeline formatted the ends in a structured method and delivered to Myriad’s Authorization System through RESTful operations.

Doc classification with generative AI

Whereas Myriad’s present answer achieved 94% accuracy, misclassifications occurred attributable to structural similarities, overlapping content material, and shared formatting patterns throughout doc varieties. This semantic ambiguity made it tough to tell apart between related paperwork. We guided Myriad on immediate optimization strategies that used LLM’s contextual understanding capabilities. This strategy moved past sample matching to allow semantic evaluation of doc context and goal, figuring out distinguishing options that human specialists acknowledge however earlier automated methods missed.

AI-driven immediate engineering for doc classification

We developed class definitions with distinguishing traits between related doc varieties. To establish these differentiators, we supplied doc samples from every class to Anthropic Claude Sonnet 3.7 on Amazon Bedrock with mannequin reasoning enabled (a characteristic that permits the mannequin to exhibit its step-by-step evaluation course of). The mannequin recognized distinguishing options between related doc lessons, which Myriad’s material specialists refined and integrated into the GenAI IDP Accelerator’s Sample 2 config file for doc classification prompts.

Format-based classification methods

We used doc construction and formatting as key differentiators to tell apart between related doc varieties that shared comparable content material however differed in construction. We enabled the classification fashions to acknowledge format-specific traits corresponding to format constructions, discipline preparations, and visible components, permitting the system to distinguish between paperwork that textual content material alone can’t distinguish. For instance, lab experiences and take a look at outcomes each comprise affected person data and medical information, however lab experiences show numerical values in tabular format whereas take a look at outcomes observe a story format. We instructed the LLM: “Lab experiences comprise numerical outcomes organized in tables with reference ranges and items. Check outcomes current findings in paragraph format with scientific interpretations.”

Implementing destructive prompting for enhanced accuracy

We applied destructive prompting strategies to resolve confusion between related paperwork by explicitly instructing the mannequin what classifications to keep away from. This strategy added exclusionary language to classification prompts, specifying traits that shouldn’t be related to every doc sort. Initially, the system often misclassified Check Request Varieties (TRFs) as Check Outcomes attributable to confusion between affected person medical historical past and lab measurements. Including a destructive immediate like “These varieties comprise affected person medical historical past. DO NOT confuse them with take a look at outcomes which comprise present/latest lab measurements” to the TRF definition improved the classification accuracy by 4%. By offering express steering on widespread misclassification patterns, the system prevented typical errors and confusion between related doc varieties.

Mannequin choice for price and efficiency optimization

Mannequin choice drives optimum cost-performance at scale, so we performed complete benchmarking utilizing the GenAI IDP Accelerator’s analysis framework. We examined 4 basis fashions—Amazon Nova Lite, Amazon Nova Professional, Amazon Nova Premier, and Anthropic Claude Sonnet 3.7—utilizing 1,200 healthcare paperwork throughout three doc lessons: Check Request Varieties, Lab Outcomes, and Insurance coverage. We assessed every mannequin utilizing three important metrics: classification accuracy, processing latency, and price per doc. The accelerator’s price monitoring enabled direct comparability of operational bills throughout completely different mannequin configurations, making certain efficiency enhancements translate into measurable enterprise worth at scale.

The analysis outcomes demonstrated that Amazon Nova Professional achieved optimum steadiness for Myriad’s use case. We transitioned from Myriad’s Amazon Comprehend implementation to Amazon Nova Professional with optimized prompts for doc classification, reaching vital enhancements: classification accuracy elevated from 94% to 98%, processing prices decreased by 77%, and processing pace improved by 80%—decreasing classification time from 8.5 minutes to 1.5 minutes per doc.

Automating Key Info Extraction with generative AI

Myriad’s data extraction was handbook, requiring as much as 10 full-time workers contributing 78 hours every day within the Girls’s Well being unit alone, which created operational bottlenecks and scalability constraints. Automating healthcare KIE introduced challenges: checkbox fields required distinguishing between marking types (checkmarks, X’s, handwritten marks); paperwork contained ambiguous visible components like overlapping marks or content material spanning a number of fields; extraction wanted contextual understanding to distinguish scientific distinctions and find data throughout various doc codecs. We labored with Myriad to develop an automatic KIE answer, implementing the next optimization strategies to handle extraction complexity.

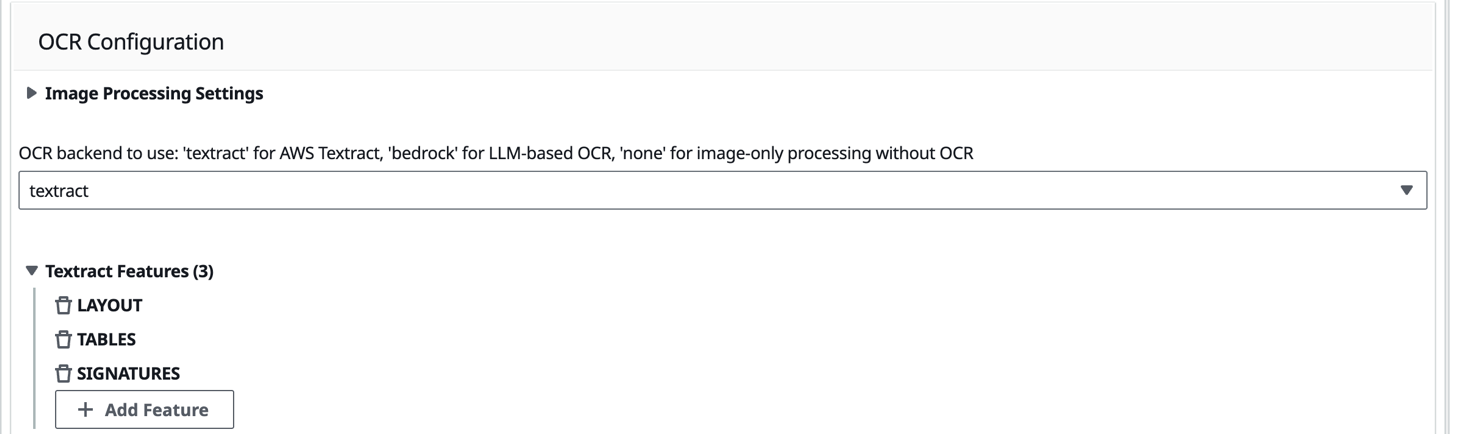

Enhanced OCR configuration for checkbox recognition

To handle checkbox identification challenges, we enabled Amazon Textract’s specialised TABLES and FORMS options on the GenAI IDP Accelerator portal as proven within the following picture, to enhance OCR discrimination between chosen and unselected checkbox components. These options enhanced the system’s capacity to detect and interpret marking types present in medical varieties.

We enhanced accuracy by incorporating visible cues into the extraction prompts. We up to date the prompts with directions corresponding to “search for seen marks in or across the small sq. packing containers (✓, x, or handwritten marks)” to information the language mannequin in figuring out checkbox alternatives. This mixture of enhanced OCR capabilities and focused prompting improved checkbox extraction in medical varieties.

Visible context studying by few-shot examples

Configuring Textract and bettering prompts alone couldn’t deal with advanced visible components successfully. We applied a multimodal strategy that despatched each doc photos and extracted textual content from Textract to the inspiration mannequin, enabling simultaneous evaluation of visible format and textual content material for correct extraction selections. We applied few-shot studying by offering instance doc photos paired with their anticipated extraction outputs to information the mannequin’s understanding of assorted kind layouts and marking types. A number of doc picture examples with their appropriate extraction patterns create prolonged LLM prompts. We leveraged the GenAI IDP Accelerator’s built-in integration with Amazon Bedrock’s immediate caching characteristic to cut back prices and latency. Immediate caching shops prolonged few-shot examples in reminiscence for five minutes—when processing a number of related paperwork inside that timeframe, Bedrock reuses cached examples as an alternative of reprocessing them, decreasing each price and processing time.

Chain of thought reasoning for advanced extraction

Whereas this multimodal strategy improved extraction accuracy, we nonetheless confronted challenges with overlapping and ambiguous tick marks in advanced kind layouts. To carry out effectively in ambiguous and complicated conditions, we used Amazon Nova Premier and applied Chain of Thought reasoning to have the mannequin suppose by extraction selections step-by-step utilizing pondering tags. For instance:

Moreover, we included reasoning explanations within the few-shot examples, demonstrating how we reached conclusions in ambiguous instances. This strategy enabled the mannequin to work by advanced visible proof and contextual clues earlier than making remaining determinations, bettering efficiency with ambiguous tick marks.

Testing throughout 32 doc samples with various complexity ranges through the GenAI IDP Accelerator revealed that Amazon Textract with Structure, TABLES, and FORMS options enabled, paired with Amazon Nova Premier’s superior reasoning capabilities and the inclusion of few-shot examples, delivered one of the best outcomes. The answer achieved 90% accuracy (identical as human evaluator baseline accuracy) whereas processing paperwork in roughly 1.3 minutes every.

Outcomes and enterprise impression

By way of our new answer, we delivered measurable enhancements that met the enterprise targets established on the undertaking outset:

Doc classification efficiency:

- We elevated accuracy from 94% to 98% by immediate optimization strategies for Amazon Nova Professional, together with AI-driven immediate engineering, document-format primarily based classification methods, and destructive prompting.

- We lowered classification prices by 77% (from 3.1 to 0.7 cents per web page) by migrating from Amazon Comprehend to Amazon Nova Professional with optimized prompts.

- We lowered classification time by 80% (from 8.5 to 1.5 minutes per doc) by selecting Amazon Nova Professional to supply a low-latency and cost-effective answer.

New automated Key Info Extraction efficiency:

- We achieved 90% extraction accuracy (identical because the baseline handbook course of): Delivered by a mixture of Amazon Textract’s doc evaluation capabilities, visible context studying by few-shot examples and Amazon Nova Premier’s reasoning for advanced information interpretation.

- We achieved processing prices of 9 cents per web page and processing time of 1.3 minutes per doc in comparison with handbook baseline requiring as much as 10 full-time workers working 78 hours every day per enterprise unit.

Enterprise impression and rollout

Myriad has deliberate a phased rollout starting with doc classification. They plan to launch our new classification answer within the Girls’s Well being enterprise unit, adopted by Oncology and Psychological Well being divisions. On account of our work, Myriad will understand as much as $132K in annual financial savings of their doc classification prices. The answer reduces every prior authorization submission time by 2 minutes—specialists now full orders in 4 minutes as an alternative of six minutes attributable to sooner entry to tagged paperwork. This enchancment saves 300 hours month-to-month throughout 9,000 prior authorizations in Girls’s Well being alone, equal to 50 hours per prior authorization specialist.

These measurable enhancements have remodeled Myriad’s operations, as their engineering management confirms:

“Partnering with the GenAIIC emigrate our Clever Doc Processing answer from AWS Comprehend to Bedrock has been a transformative step ahead. By bettering each efficiency and accuracy, the answer is projected to ship financial savings of greater than $10,000 monthly. The staff’s shut collaboration with Myriad’s inner engineering staff delivered a high-quality, scalable answer, whereas their deep experience in superior language fashions has elevated our capabilities. This has been a superb instance of how innovation and partnership can drive measurable enterprise impression.”

– Martyna Shallenberg, Senior Director of Software program Engineering, Myriad Genetics

Conclusion

The AWS GenAI IDP Accelerator enabled Myriad’s fast implementation, offering a versatile framework that lowered improvement time. Healthcare organizations want tailor-made options—the accelerator delivers in depth customization capabilities that permit customers adapt options to particular doc varieties and workflows with out requiring in depth code adjustments or frequent redeployment throughout improvement. Our strategy demonstrates the facility of strategic immediate engineering and mannequin choice. We achieved excessive accuracy in a specialised area by specializing in immediate design, together with destructive prompting and visible cues. We optimized each price and efficiency by deciding on Amazon Nova Professional for classification and Nova Premier for advanced extraction—matching the proper mannequin to every particular process.

Discover the answer for your self

Organizations trying to enhance their doc processing workflows can expertise these advantages firsthand. The open supply GenAI IDP Accelerator that powered Myriad’s transformation is obtainable to deploy and take a look at in your surroundings. The accelerator’s simple setup course of lets customers shortly consider how generative AI can remodel doc processing challenges.

When you’ve explored the accelerator and seen its potential impression in your workflows, attain out to the AWS GenAIIC staff to discover how the GenAI IDP Accelerator might be personalized and optimized to your particular use case. This hands-on strategy ensures you can also make knowledgeable selections about implementing clever doc processing in your group.

In regards to the authors

Priyashree Roy is a Information Scientist II on the AWS Generative AI Innovation Heart, the place she applies her experience in machine studying and generative AI to develop modern options for strategic AWS prospects. She brings a rigorous scientific strategy to advanced enterprise challenges, knowledgeable by her PhD in experimental particle physics from Florida State College and postdoctoral analysis on the College of Michigan.

Priyashree Roy is a Information Scientist II on the AWS Generative AI Innovation Heart, the place she applies her experience in machine studying and generative AI to develop modern options for strategic AWS prospects. She brings a rigorous scientific strategy to advanced enterprise challenges, knowledgeable by her PhD in experimental particle physics from Florida State College and postdoctoral analysis on the College of Michigan.

Mofijul Islam is an Utilized Scientist II and Tech Lead on the AWS Generative AI Innovation Heart, the place he helps prospects deal with customer-centric analysis and enterprise challenges utilizing generative AI, massive language fashions (LLM), multi-agent studying, code era, and multimodal studying. He holds a PhD in machine studying from the College of Virginia, the place his work centered on multimodal machine studying, multilingual pure language processing (NLP), and multitask studying. His analysis has been revealed in top-tier conferences like NeurIPS, Worldwide Convention on Studying Representations (ICLR), Empirical Strategies in Pure Language Processing (EMNLP), Society for Synthetic Intelligence and Statistics (AISTATS), and Affiliation for the Development of Synthetic Intelligence (AAAI), in addition to Institute of Electrical and Electronics Engineers (IEEE) and Affiliation for Computing Equipment (ACM) Transactions.

Mofijul Islam is an Utilized Scientist II and Tech Lead on the AWS Generative AI Innovation Heart, the place he helps prospects deal with customer-centric analysis and enterprise challenges utilizing generative AI, massive language fashions (LLM), multi-agent studying, code era, and multimodal studying. He holds a PhD in machine studying from the College of Virginia, the place his work centered on multimodal machine studying, multilingual pure language processing (NLP), and multitask studying. His analysis has been revealed in top-tier conferences like NeurIPS, Worldwide Convention on Studying Representations (ICLR), Empirical Strategies in Pure Language Processing (EMNLP), Society for Synthetic Intelligence and Statistics (AISTATS), and Affiliation for the Development of Synthetic Intelligence (AAAI), in addition to Institute of Electrical and Electronics Engineers (IEEE) and Affiliation for Computing Equipment (ACM) Transactions.

Nivedha Balakrishnan is a Deep Studying Architect II on the AWS Generative AI Innovation Heart, the place she helps prospects design and deploy generative AI purposes to unravel advanced enterprise challenges. Her experience spans massive language fashions (LLMs), multimodal studying, and AI-driven automation. She holds a Grasp’s in Utilized Information Science from San Jose State College and a Grasp’s in Biomedical Engineering from Linköping College, Sweden. Her earlier analysis centered on AI for drug discovery and healthcare purposes, bridging life sciences with machine studying.

Nivedha Balakrishnan is a Deep Studying Architect II on the AWS Generative AI Innovation Heart, the place she helps prospects design and deploy generative AI purposes to unravel advanced enterprise challenges. Her experience spans massive language fashions (LLMs), multimodal studying, and AI-driven automation. She holds a Grasp’s in Utilized Information Science from San Jose State College and a Grasp’s in Biomedical Engineering from Linköping College, Sweden. Her earlier analysis centered on AI for drug discovery and healthcare purposes, bridging life sciences with machine studying.

Martyna Shallenberg is a Senior Director of Software program Engineering at Myriad Genetics, the place she leads cross-functional groups in constructing AI-driven enterprise options that remodel income cycle operations and healthcare supply. With a novel background spanning genomics, molecular diagnostics, and software program engineering, she has scaled modern platforms starting from Clever Doc Processing (IDP) to modular LIMS options. Martyna can also be the Founder & President of BioHive’s HealthTech Hub, fostering cross-domain collaboration to speed up precision drugs and healthcare innovation.

Martyna Shallenberg is a Senior Director of Software program Engineering at Myriad Genetics, the place she leads cross-functional groups in constructing AI-driven enterprise options that remodel income cycle operations and healthcare supply. With a novel background spanning genomics, molecular diagnostics, and software program engineering, she has scaled modern platforms starting from Clever Doc Processing (IDP) to modular LIMS options. Martyna can also be the Founder & President of BioHive’s HealthTech Hub, fostering cross-domain collaboration to speed up precision drugs and healthcare innovation.

Brode Mccrady is a Software program Engineering Supervisor at Myriad Genetics, the place he leads initiatives in AI, income methods, and clever doc processing. With over a decade of expertise in enterprise intelligence and strategic analytics, Brode brings deep experience in translating advanced enterprise wants into scalable technical options. He holds a level in Economics, which informs his data-driven strategy to problem-solving and enterprise technique.

Brode Mccrady is a Software program Engineering Supervisor at Myriad Genetics, the place he leads initiatives in AI, income methods, and clever doc processing. With over a decade of expertise in enterprise intelligence and strategic analytics, Brode brings deep experience in translating advanced enterprise wants into scalable technical options. He holds a level in Economics, which informs his data-driven strategy to problem-solving and enterprise technique.

Randheer Gehlot is a Principal Buyer Options Supervisor at AWS who focuses on healthcare and life sciences transformation. With a deep deal with AI/ML purposes in healthcare, he helps enterprises design and implement environment friendly cloud options that handle actual enterprise challenges. His work includes partnering with organizations to modernize their infrastructure, allow innovation, and speed up their cloud adoption journey whereas making certain sensible, sustainable outcomes.

Randheer Gehlot is a Principal Buyer Options Supervisor at AWS who focuses on healthcare and life sciences transformation. With a deep deal with AI/ML purposes in healthcare, he helps enterprises design and implement environment friendly cloud options that handle actual enterprise challenges. His work includes partnering with organizations to modernize their infrastructure, allow innovation, and speed up their cloud adoption journey whereas making certain sensible, sustainable outcomes.

Acknowledgements

We wish to thank Bob Strahan, Kurt Mason, Akhil Nooney and Taylor Jensen for his or her vital contributions, strategic selections and steering all through.