This post is cowritten by Mike Koźmiński from Beekeeper.

Large language models (LLMs) are evolving rapidly, making it difficult for organizations to select the best model for each specific use case, optimize prompts for quality and cost, adapt to changing model capabilities, and personalize responses for different users.

Choosing the “right” LLM and prompt isn’t a one-time decision; it shifts as models, prices, and requirements change. System prompts are becoming larger (for example, the Anthropic system prompt) and more complex. Many mid-sized companies don’t have the resources to quickly evaluate and improve them. To address this issue, Beekeeper built an Amazon Bedrock-powered system that continuously evaluates model+prompt candidates, ranks them on a live leaderboard, and routes each request to the current best choice for that use case.

Beekeeper: Connecting and empowering the frontline workforce

Beekeeper offers a comprehensive digital workplace system specifically designed for frontline workforce operations. The company provides a mobile-first communication and productivity solution that connects non-desk workers with each other and headquarters, enabling organizations to streamline operations, boost employee engagement, and manage tasks efficiently. Their system features robust integration capabilities with existing enterprise systems (human resources, scheduling, payroll), while targeting industries with large deskless workforces such as hospitality, manufacturing, retail, healthcare, and transportation. At its core, Beekeeper addresses the traditional disconnect between frontline employees and their organizations by providing accessible digital tools that enhance communication, operational efficiency, and workforce retention, all delivered through a cloud-based SaaS system with mobile apps, administrative dashboards, and enterprise-grade security features.

Beekeeper’s solution: A dynamic evaluation system

Beekeeper solved this challenge with an automated system that continuously tests different model and prompt combinations, ranks options based on quality, cost, and speed, incorporates user feedback to personalize responses, and automatically routes requests to the current best option. Quality is scored with a small synthetic test set and validated in production with user feedback (thumbs up/down and comments). By incorporating prompt mutation, Beekeeper created an organic system that evolves over time. The result is a continuously optimizing setup that balances quality, latency, and cost, and adapts automatically when the landscape changes.

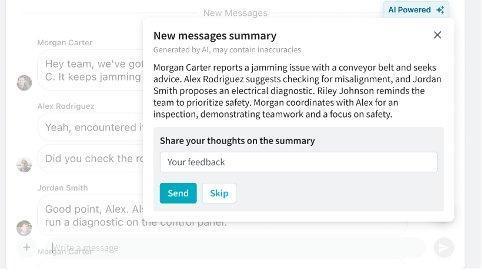

Real-world example: Chat summarization

Beekeeper’s Frontline Success Platform unifies communication for deskless workers across industries. One practical application of their LLM system is chat summarization. When a user returns to their shift, they might find a chat with many unread messages. Instead of reading everything, they can request a summary. The system generates a concise overview with action items tailored to the user’s needs. Users can then provide feedback to improve future summaries. This seemingly simple feature relies on sophisticated technology behind the scenes. The system must understand conversation context, identify important points, recognize action items, and present information concisely, all while adapting to user preferences.

Solution overview

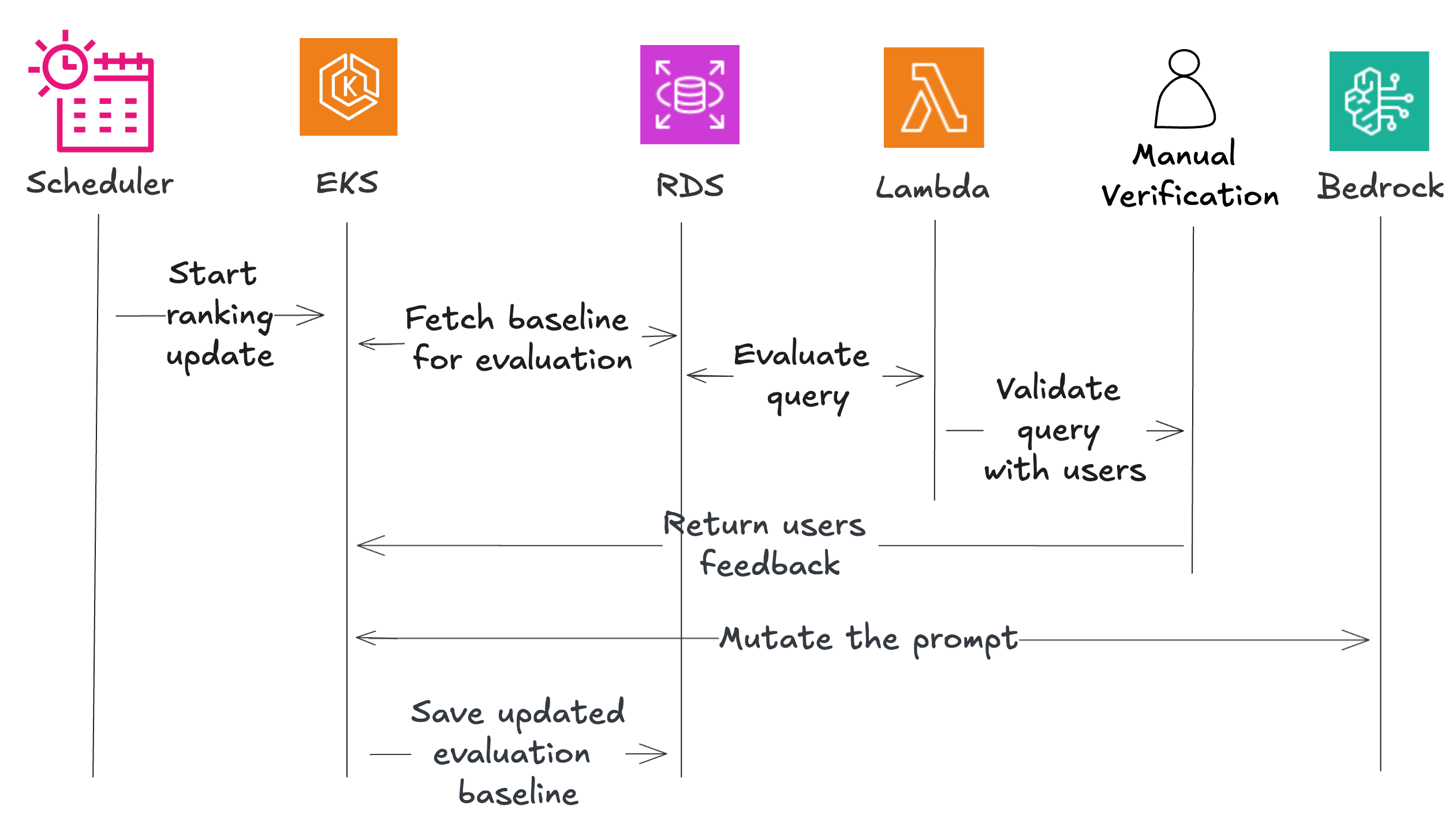

Beekeeper’s solution consists of two main phases: building a baseline leaderboard and personalizing with user feedback.

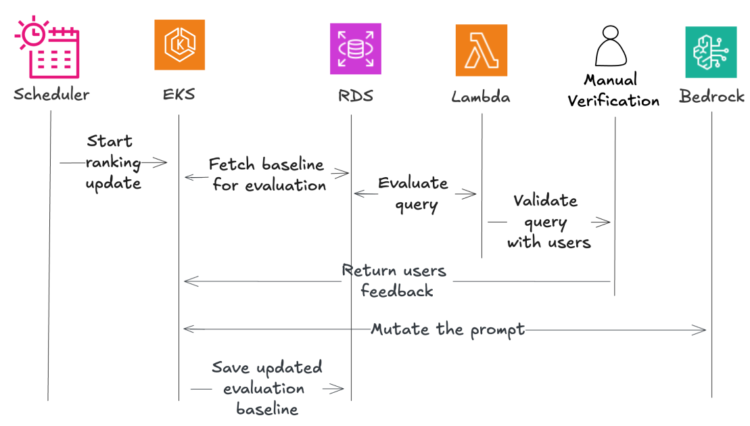

The system uses several AWS components, including Amazon EventBridge for scheduling, Amazon Elastic Kubernetes Service (Amazon EKS) for orchestration, AWS Lambda for evaluation functions, Amazon Relational Database Service (Amazon RDS) for data storage, and Amazon Mechanical Turk for manual validation.

The workflow begins with a synthetic rank creator that establishes baseline performance. A scheduler triggers the coordinator, which fetches test data and sends it to evaluators. These evaluators test each model/prompt pair and return results, with a portion sent for manual validation. The system mutates promising prompts to create variations, evaluates these again, and saves the best performers. When user feedback arrives, the system incorporates it through a second phase. The coordinator fetches ranked model/prompt pairs and sends them with user feedback to a mutator, which returns personalized prompts. A drift detector makes sure these personalized versions don’t stray too far from quality standards, and validated prompts are saved for specific users.

Constructing the baseline leaderboard

To kick-start the optimization journey, Beekeeper engineers selected various models and provided them with domain-specific human-written prompts. The tech team tested these prompts using LLM-generated examples to make sure they were error-free. A solid baseline is crucial here. This foundation helps them refine their approach when incorporating feedback from real users.

In the following sections, we dive into their success metrics, which guide their refinement of prompts and help create an optimal user experience.

Evaluation criteria for baseline

The quality of summaries generated by model/prompt pairs is measured using both quantitative and qualitative metrics, including the following:

- Compression ratio – Measures summary length relative to the original text, rewarding adherence to target lengths and penalizing excessive length.

- Presence of action items – Makes sure user-specific action items are clearly identified.

- Lack of hallucinations – Validates factual accuracy and consistency.

- Vector comparison – Assesses semantic similarity to human-generated perfect results.

In the following sections, we walk through each of the evaluation criteria and how they’re implemented.

Compression ratio

The compression ratio evaluates the length of the summarized text compared to the original one and its adherence to a target length: it rewards compression ratios close to the target and penalizes texts that deviate from the target length. The corresponding score, between 0 and 100, is computed programmatically in Python.
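A minimal sketch of how such a score could be computed is shown here. The word-based length measure, the `target_ratio` and `tolerance` defaults, the symmetric linear penalty, and the function name are all illustrative assumptions, not Beekeeper's actual values or implementation.

```python
def compression_score(original: str, summary: str,
                      target_ratio: float = 0.2,
                      tolerance: float = 0.2) -> float:
    """Return a score in [0, 100] for how close the summary is to the target length.

    Assumptions (hypothetical): length is counted in whitespace-separated words,
    and the score falls linearly from 100 at the target ratio to 0 once the
    ratio deviates from the target by `tolerance` or more.
    """
    original_len = len(original.split())
    if original_len == 0:
        return 0.0  # nothing to compress, so no meaningful ratio
    ratio = len(summary.split()) / original_len
    deviation = abs(ratio - target_ratio)
    return max(0.0, 100.0 * (1.0 - deviation / tolerance))
```

With these defaults, a 20-word summary of a 100-word chat scores 100, and a summary as long as the original scores 0.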

Presence of action items related to the user

To check whether the summary contains all the action items related to the users, Beekeeper relies on a comparison to the ground truth. For the ground truth comparison, the expected output format requires a section labeled “Action items:” followed by bullet points, and regular expressions in Python are used to extract the action item list.

They include this additional extraction step to make sure the data is formatted in a way that the LLM can easily process. The extracted list is sent to an LLM with the request to check whether it’s correct or not. A +1 score is assigned for each action item correctly assigned, and a -1 is applied in case of a false positive. After that, scores are normalized so that summaries are neither penalized nor rewarded for having more or fewer action items.
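The extraction and scoring steps could look roughly like the following sketch. The regular expressions, the accepted bullet characters, and the choice to normalize by dividing by the number of items are assumptions for illustration. In the real pipeline the per-item verdicts come from an LLM; here they are passed in as booleans.

```python
import re

# Assumed format: a line reading "Action items:" followed by bullet lines.
ACTION_HEADER = re.compile(r"^[ \t]*Action items:[ \t]*$", re.IGNORECASE | re.MULTILINE)
BULLET = re.compile(r"^\s*[-*•]\s+(.*\S)\s*$")


def extract_action_items(summary: str) -> list:
    """Return the bullet texts that follow the 'Action items:' header."""
    header = ACTION_HEADER.search(summary)
    if header is None:
        return []
    items = []
    for line in summary[header.end():].splitlines():
        if not line.strip():
            if items:
                break  # a blank line after the bullets ends the section
            continue   # skip blank lines between header and first bullet
        bullet = BULLET.match(line)
        if bullet is None:
            break      # first non-bullet line ends the section
        items.append(bullet.group(1))
    return items


def normalized_action_score(verdicts: list) -> float:
    """+1 per correct item, -1 per false positive, divided by the item count.

    `verdicts` holds one boolean per extracted item (True = judged correct).
    Dividing by the count is an assumed normalization that keeps the score
    in [-1, 1] regardless of how many items a summary lists.
    """
    if not verdicts:
        return 0.0
    return sum(1 if ok else -1 for ok in verdicts) / len(verdicts)
```

Normalizing by count means a summary with two correct items and one with five correct items both score 1.0, which matches the goal of not rewarding longer lists.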

Lack of hallucinations

To evaluate hallucinations, Beekeeper uses two approaches: cross-LLM evaluation and manual validation.

In the cross-LLM evaluation, a summary created by LLM A (for example, Mistral Large) is passed to the evaluator component, together with the prompt and the initial input. The evaluator submits this text to LLM B (for example, Anthropic’s Claude), asking if the facts from the summary match the raw context. An LLM of a different family is used for this evaluation. Amazon Bedrock makes this exercise particularly simple through the Converse API: users can select different LLMs by changing the model identifier string.
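As a rough illustration of the cross-LLM check, the following sketch sends a summary and its source text to a judge model through the Converse API. The judge instructions, the PASS/FAIL convention, and the function name are hypothetical. For brevity the sketch passes only the source text and the summary, not the original prompt. The client is expected to be a Bedrock Runtime client, created for example with `boto3.client("bedrock-runtime")`.

```python
from typing import Any

# Hypothetical judge instructions; the wording Beekeeper uses is not published.
JUDGE_INSTRUCTIONS = (
    "You are checking a chat summary for hallucinations. "
    "Answer with exactly one word: PASS if every fact in the summary is "
    "supported by the source text, FAIL otherwise."
)


def judge_summary(client: Any, judge_model_id: str,
                  source_text: str, summary: str) -> int:
    """Return 1 if the judge model reports no hallucination, -1 otherwise."""
    response = client.converse(
        modelId=judge_model_id,  # switching judge models only changes this string
        system=[{"text": JUDGE_INSTRUCTIONS}],
        messages=[{
            "role": "user",
            "content": [{"text": f"Source:\n{source_text}\n\nSummary:\n{summary}"}],
        }],
        inferenceConfig={"temperature": 0.0, "maxTokens": 10},
    )
    verdict = response["output"]["message"]["content"][0]["text"].strip().upper()
    return 1 if verdict.startswith("PASS") else -1
```

Because the model is selected by `judge_model_id` alone, the same function can be reused to pair any summarizing model with a judge from a different family.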

Another important point is the presence of manual verification on a small set of evaluations at Beekeeper, to avoid cases of double hallucination. They assign a score of 1 if no hallucination was detected and -1 if any is detected. For the whole pipeline, they use the same heuristic of 7% manual evaluation (details discussed further along in this post).

Vector comparison

As an additional evaluation method, semantic similarity is used for data with available ground truth information. The embedding models are chosen from the MTEB Leaderboard (a multi-task and multi-language comparison of embedding models), favoring large vector dimensionality to maximize the amount of information stored inside the vector. Beekeeper uses as its baseline Qwen3, a model providing a dimensionality of 4096 and supporting 16-bit quantization for fast computation. Further embedding models are also used directly from Amazon Bedrock. After computing the embedding vectors for both the ground truth answer and the one generated by a given model/prompt pair, cosine similarity is used to compute the similarity between them.
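Cosine similarity itself is a standard formula: the dot product of the two vectors divided by the product of their norms. A dependency-free sketch is shown here. Returning 0.0 for a zero vector is an assumed convention, and a production pipeline would more likely use a vectorized library.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors, in [-1, 1]."""
    if len(a) != len(b):
        raise ValueError("embedding vectors must have the same dimensionality")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # assumed convention: a zero vector is similar to nothing
    return dot / (norm_a * norm_b)
```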

Evaluation baseline

The evaluation baseline of each model/prompt pair is performed by collecting the generated output for a set of fixed, predefined queries that are manually annotated with ground truth outputs containing the “true answers” (in this case, the ideal summaries from in-house and public datasets). As mentioned before, this set is created from a public dataset as well as handcrafted examples that better represent a customer’s domain. The scores are evaluated automatically based on the metrics described earlier (compression, lack of hallucinations, presence of action items, and vector comparison) to build a baseline version of the leaderboard.
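The post does not say how the four metrics are combined into a single ranking. The following sketch shows one possible aggregation, assuming each metric is rescaled to [0, 1] and weighted equally. The `PairScores` type, the rescaling, and the equal weights are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PairScores:
    """Averaged metric values for one model/prompt pair (hypothetical layout)."""
    pair_id: str
    compression: float    # 0..100
    action_items: float   # -1..1
    hallucination: float  # -1..1 (mean of the +1/-1 verdicts)
    similarity: float     # -1..1 (cosine similarity)


def overall_score(scores: PairScores) -> float:
    """Rescale each metric to [0, 1] and average them with equal weights."""
    parts = [
        scores.compression / 100.0,
        (scores.action_items + 1.0) / 2.0,
        (scores.hallucination + 1.0) / 2.0,
        (scores.similarity + 1.0) / 2.0,
    ]
    return sum(parts) / len(parts)


def build_leaderboard(all_scores: list) -> list:
    """Return the pairs sorted from best to worst overall score."""
    return sorted(all_scores, key=overall_score, reverse=True)
```

In practice the weights would likely be tuned per use case, for example by weighting hallucinations more heavily than length.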

Manual evaluations

For additional validation, Beekeeper manually reviews a scientifically determined sample of evaluations using Amazon Mechanical Turk. This sample size is calculated using Cochran’s formula to support statistical significance.
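Cochran's formula gives the sample size as n0 = z² · p · (1 − p) / e², optionally adjusted for a finite population N as n = n0 / (1 + (n0 − 1) / N). The following sketch implements both forms. The default confidence level (z = 1.96, about 95%), expected proportion, and margin of error are common textbook values, not figures taken from Beekeeper.

```python
import math
from typing import Optional


def cochran_sample_size(population: Optional[int] = None,
                        z: float = 1.96,
                        p: float = 0.5,
                        margin: float = 0.05) -> int:
    """Sample size from Cochran's formula, rounded up.

    z: z-score of the confidence level, p: expected proportion,
    margin: acceptable margin of error. When `population` is given,
    the finite population correction is applied.
    """
    n0 = (z ** 2) * p * (1.0 - p) / (margin ** 2)
    if population is not None:
        n0 = n0 / (1.0 + (n0 - 1.0) / population)
    return math.ceil(n0)


print(cochran_sample_size())                 # 385
print(cochran_sample_size(population=1000))  # 278
```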

Amazon Mechanical Turk enables businesses to harness human intelligence for tasks computers can’t perform effectively. This crowdsourcing marketplace connects users with a global, on-demand workforce to complete microtasks like data labeling, content moderation, and research validation, helping to scale operations without sacrificing quality or increasing overhead. As mentioned earlier, Beekeeper employs human feedback to verify that the automated LLM-based rating system is working correctly. Based on their prior assumptions, they know what percentage of responses should be classified as containing hallucinations. If the number detected by human verification diverges by more than two percentage points from their estimations, they know that the automated process isn’t working properly and needs revision.

Now that Beekeeper has established their baseline, they can provide the best results to their customers. By constantly updating their models, they can bring new value in an automated fashion. Whenever their engineers have ideas for new prompt optimization, they can let the pipeline evaluate it against previous ones using baseline results. Beekeeper can take it further and embed user feedback, allowing for more customizable results. However, they don’t want user feedback to fully change the behavior of their model through prompt injection in feedback. In the following section, we examine the organic part of Beekeeper’s pipeline that embeds user preferences into responses without affecting other users.

Evaluation of user feedback

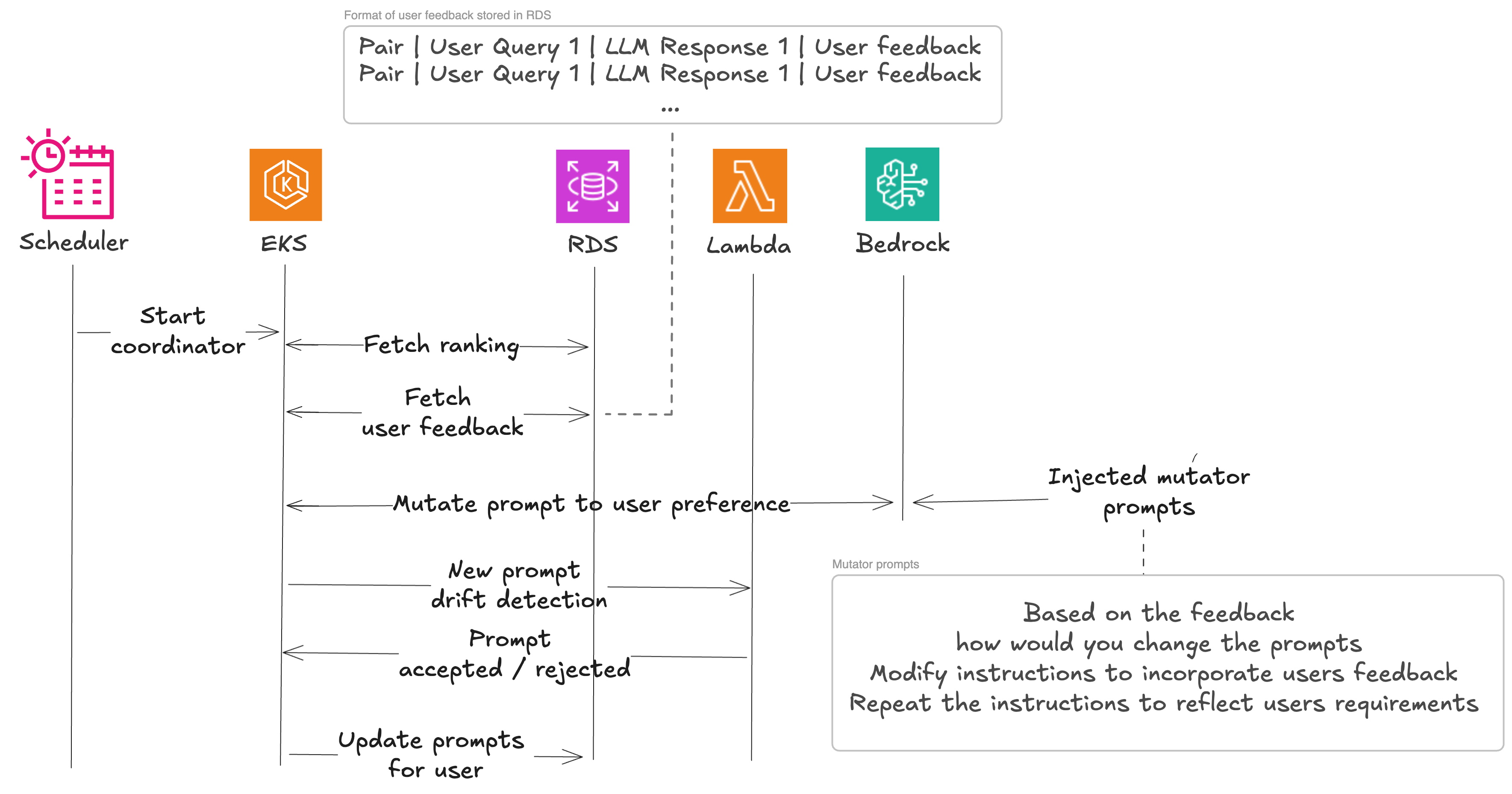

Now that Beekeeper has established their baseline using the ground truth set, they can start incorporating human feedback. This works according to the same principles as the previously described hallucination detection process. User feedback is pulled together with the input and the LLM response. These are passed to the LLM as questions in a fixed format.

They use this to check whether the feedback provided is still applicable after the prompt-model pair was updated. This is done to avoid feedback being applied multiple times: if a model change or mutation already solved the problem, there is no need to apply it again. This works as a baseline for incorporating user feedback. They’re now ready to start mutating the prompt.

The mutation process consists of reevaluating the user-generated dataset after prompt mutation until the output incorporates the user feedback. The baseline is then used to understand the differences and discard changes in case they undermine the model’s work.

The four best-performing model/prompt pairs chosen in the baseline evaluation (for mutated prompts) are further processed through a prompt mutation process, to check for residual improvement of the results. This is essential in an environment where even small modifications to a prompt can lead to dramatically different results when used in conjunction with user feedback.

The initial prompt is enriched with a prompt mutation, the received user feedback, a thinking style (a specific cognitive approach like “Make it creative” or “Think in steps” that guides how the LLM approaches the mutation task), and the user context, and is sent to the LLM to produce a mutated prompt. The mutated prompts are added to the list, evaluated, and the corresponding scores are incorporated into the leaderboard. Mutation prompts can also include user feedback when it is present.
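To make the enrichment step concrete, the following sketch assembles the text that would be sent to the LLM to request a mutated prompt. The section titles, their order, and the function name are hypothetical; only the list of ingredients (mutation instruction, thinking style, user context, optional user feedback, and the initial prompt) comes from the description above.

```python
from typing import Optional


def build_mutation_request(initial_prompt: str,
                           mutation_instruction: str,
                           thinking_style: str,
                           user_context: str,
                           user_feedback: Optional[str] = None) -> str:
    """Assemble the request asking an LLM to rewrite `initial_prompt`."""
    sections = [
        ("Mutation instruction", mutation_instruction),
        ("Thinking style", thinking_style),
        ("User context", user_context),
    ]
    if user_feedback:
        # Feedback is optional: baseline mutations run without it.
        sections.append(("User feedback", user_feedback))
    sections.append(("Prompt to rewrite", initial_prompt))
    return "\n\n".join(f"{title}:\n{body}" for title, body in sections)
```

The returned string would then be sent to the mutating model, and its answer stored as a new candidate prompt for evaluation.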

Examples of generated mutation prompts include:

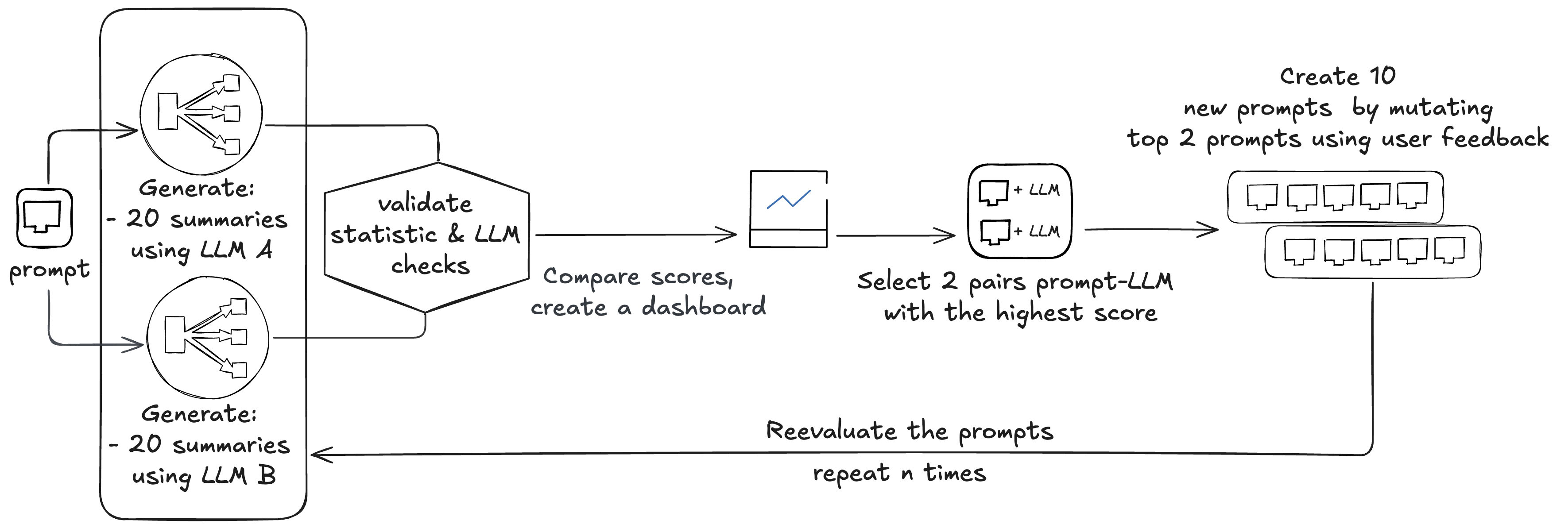

Solution example

The baseline evaluation process starts with eight pairs of prompts and associated models (Amazon Nova, Anthropic Claude 4 Sonnet, Meta Llama 3, and Mistral 8x7B). Beekeeper usually uses four base prompts and two models to start with. These prompts are used across all the models, but results are considered in pairs of prompt-models. Models are automatically updated as newer versions become available through Amazon Bedrock.

Beekeeper starts by evaluating the eight existing pairs:

- Each evaluation requires generating 20 summaries per pair (8 x 20 = 160)

- Each summary is checked by three static checks and two LLM checks (160 x 2 = 320)

In total, this creates 480 LLM calls. Scores are compared, creating a leaderboard, and two prompt-model pairs are selected. These two prompts are mutated using user feedback, creating 10 new prompts, which are again evaluated, creating 600 calls to the LLM (10 x 20 + 10 x 20 x 2 = 600).
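The call counts above follow from two constants, 20 summaries per pair and two LLM checks per summary. The arithmetic can be reproduced with a few lines of Python; the function and constant names are illustrative.

```python
SUMMARIES_PER_PAIR = 20
LLM_CHECKS_PER_SUMMARY = 2  # the three static checks make no LLM calls


def llm_calls(num_pairs: int) -> int:
    """LLM calls needed to evaluate `num_pairs` model/prompt pairs once."""
    generation_calls = num_pairs * SUMMARIES_PER_PAIR
    check_calls = generation_calls * LLM_CHECKS_PER_SUMMARY
    return generation_calls + check_calls


print(llm_calls(8))   # 480: baseline round with eight pairs
print(llm_calls(10))  # 600: one mutation round with ten new prompts
```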

This process can be run n times to perform more creative mutations; Beekeeper usually performs two cycles.

In total, this exercise performs tests on (8 + 10 + 10) x 2 model/prompt pairs. The whole process on average requires around 8,352,000 input tokens and around 1,620,000 output tokens, costing around $48.

Newly selected model/prompt pairs are used in production with ratios 1st: 50%, 2nd: 30%, and 3rd: 20%.

After deploying the new model/prompt pairs, Beekeeper gathers feedback from the users. This feedback is used to feed the mutator to create three new prompts. These prompts are sent for drift detection, which compares them to the baseline. In total, they create four LLM calls, costing around 4,800 input tokens and 500 output tokens.
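The 50/30/20 production split between the three top-ranked pairs can be approximated with weighted random selection per request. The following sketch uses `random.choices` from the Python standard library; the function name and the per-request sampling strategy are assumptions, since the post does not describe how the router enforces the ratios.

```python
import random
from typing import Optional, Sequence

# Traffic shares for the 1st, 2nd, and 3rd ranked model/prompt pairs.
TRAFFIC_SHARES = (0.5, 0.3, 0.2)


def choose_pair(top_three: Sequence[str],
                rng: Optional[random.Random] = None) -> str:
    """Pick the pair that serves one request, weighted by leaderboard rank."""
    if len(top_three) != len(TRAFFIC_SHARES):
        raise ValueError("expected exactly three ranked pairs")
    source = rng if rng is not None else random
    return source.choices(top_three, weights=TRAFFIC_SHARES, k=1)[0]
```

Sampling per request keeps the router stateless; the observed ratios converge to 50/30/20 as traffic grows rather than being exact for small request counts.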

Advantages

The key benefit of Beekeeper’s solution is its ability to rapidly evolve and adapt to user needs. With this approach, they can make initial estimations of which model/prompt pairs would be optimal candidates for each task, while controlling both cost and the quality of results. By combining the benefits of synthetic data with user feedback, the solution is suitable even for smaller engineering teams. Instead of focusing on generic prompts, Beekeeper prioritizes tailoring the prompt improvement process to meet the unique needs of each tenant. By doing so, they can refine prompts to be highly relevant and user-friendly. This approach allows users to develop their own style, which in turn enhances their experience as they provide feedback and see its impact. One of the side effects they observed is that certain groups of people prefer different styles of communication. By mapping these results to customer interactions, they aim to present a more tailored experience. This makes sure that feedback given by one user doesn’t impact another. Their preliminary results suggest 13–24% better ratings on responses when aggregated per tenant.

In summary, the proposed solution offers several notable benefits. It reduces manual labor by automating the LLM and prompt selection process, shortens the feedback cycle, enables the creation of user- or tenant-specific improvements, and provides the capacity to seamlessly integrate and estimate the performance of new models in the same manner as the previous ones.

Conclusion

Beekeeper’s automated leaderboard approach and human feedback loop system for dynamic LLM and prompt pair selection addresses the key challenges organizations face in navigating the rapidly evolving landscape of language models. By continuously evaluating and optimizing quality, size, speed, and cost, the solution helps customers use the best-performing model/prompt combinations for their specific use cases. Looking ahead, Beekeeper plans to further refine and expand the capabilities of this system, incorporating more advanced techniques for prompt engineering and evaluation. Additionally, the team is exploring ways to empower users to develop their own customized prompts, fostering a more personalized and engaging experience.

If your organization is exploring ways to optimize LLM selection and prompt engineering, there’s no need to start from scratch. Using AWS services like Amazon Bedrock for model access, AWS Lambda for lightweight evaluation, Amazon EKS for orchestration, and Amazon Mechanical Turk for human validation, you can build a pipeline that automatically evaluates, ranks, and evolves your prompts. Instead of manually updating prompts or re-benchmarking models, focus on creating a feedback-driven system that continuously improves results for your users. Start with a small set of models and prompts, define your evaluation metrics, and let the system scale as new models and use cases emerge.

About the authors

Mike (Michał) Koźmiński is a Zürich-based Principal Engineer at Beekeeper by LumApps, where he builds the foundations that make AI a first-class part of the product. With 10+ years spanning startups and enterprises, he focuses on translating new technology into reliable systems and real customer impact.

Magdalena Gargas is a Solutions Architect passionate about technology and solving customer challenges. At AWS, she works mostly with software companies, helping them innovate in the cloud.

Luca Perrozzi is a Solutions Architect at Amazon Web Services (AWS), based in Switzerland. He focuses on innovation topics at AWS, especially in the area of artificial intelligence. Luca holds a PhD in particle physics and has 15 years of hands-on experience as a research scientist and software engineer.

Simone Pomata is a Principal Solutions Architect at AWS. He has worked enthusiastically in the tech industry for more than 10 years. At AWS, he helps customers succeed in building new technologies every day.
