As organizations scale their AI workloads on Amazon Bedrock, understanding what’s driving spending becomes critical. Teams may need to perform chargebacks, investigate cost spikes, and guide optimization decisions, all of which require cost attribution at the workload level.

With Amazon Bedrock Projects, you can attribute inference costs to specific workloads and analyze them in AWS Cost Explorer and AWS Data Exports. In this post, you’ll learn how to set up Projects end-to-end, from designing a tagging strategy to analyzing costs.

How Amazon Bedrock Initiatives and price allocation work

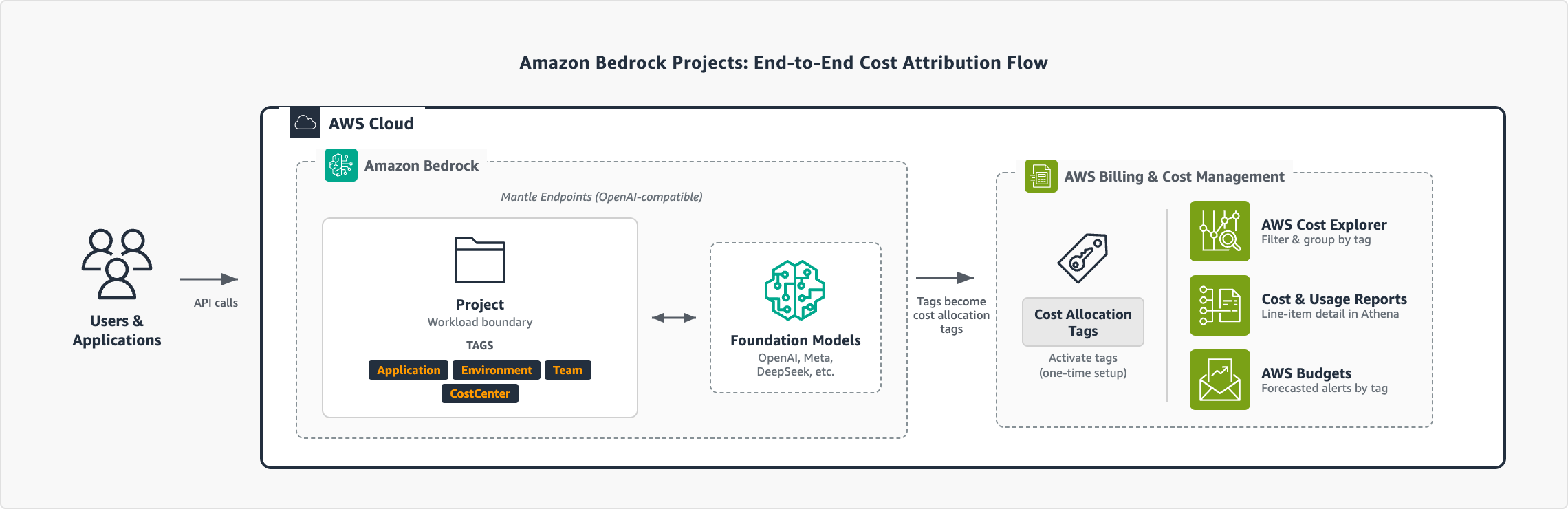

A challenge on Amazon Bedrock is a logical boundary that represents a workload, equivalent to an software, setting, or experiment. To attribute the price of a challenge, you connect useful resource tags and move the challenge ID in your API calls. You may then activate the price allocation tags in AWS Billing to filter, group, and analyze spend in AWS Value Explorer and AWS Information Exports.

The next diagram illustrates the end-to-end movement:

Determine 1: Finish-to-end price attribution movement with Amazon Bedrock Initiatives

Notes:

- Amazon Bedrock Projects support the OpenAI-compatible APIs: Responses API and Chat Completions API.

- Requests without a project ID are automatically associated with the default project in your AWS account.

Prerequisites

To follow along with the steps in this post, you need:

Define your tagging strategy

The tags that you attach to projects become the dimensions that you can filter and group by in your cost reports. We recommend that you plan these before creating your first project. A common approach is to tag by application, environment, team, and cost center:

| Tag key | Purpose | Example values |
| --- | --- | --- |
| Application | Which workload or service | CustomerChatbot, Experiments, DataAnalytics |
| Environment | Lifecycle stage | Production, Development, Staging, Research |
| Team | Ownership | CustomerExperience, PlatformEngineering, DataScience |
| CostCenter | Finance mapping | CC-1001, CC-2002, CC-3003 |

For more guidance on building a cost allocation strategy, see Best Practices for Tagging AWS Resources. With your tagging strategy defined, you’re ready to create projects and start attributing costs.
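
One way to keep a taxonomy like this consistent is to validate tags before they reach the Projects API. The following is a minimal sketch, not part of Amazon Bedrock: the `REQUIRED_TAG_KEYS` and `ALLOWED_ENVIRONMENTS` constants and the `validate_tags` helper are hypothetical names, and the rules they encode simply mirror the preceding table.

```python
# Hypothetical taxonomy rules that mirror the tagging table; adjust to your own policy.
REQUIRED_TAG_KEYS = {"Application", "Environment", "Team", "CostCenter"}
ALLOWED_ENVIRONMENTS = {"Production", "Development", "Staging", "Research"}


def validate_tags(tags: dict) -> list:
    """Return a list of human-readable problems; an empty list means the tags pass."""
    problems = []

    # Every project should carry the full set of reporting dimensions.
    for key in sorted(REQUIRED_TAG_KEYS - set(tags)):
        problems.append(f"missing required tag: {key}")

    # Tag keys are case-sensitive, so catch near-misses such as "team".
    canonical = {key.lower(): key for key in REQUIRED_TAG_KEYS}
    for key in tags:
        expected = canonical.get(key.lower())
        if expected and expected != key:
            problems.append(f"tag key {key!r} should be spelled {expected!r}")

    # Keep Environment values within the agreed lifecycle stages.
    environment = tags.get("Environment")
    if environment is not None and environment not in ALLOWED_ENVIRONMENTS:
        problems.append(f"unexpected Environment value: {environment}")

    return problems


# Extra keys such as "Owner" are allowed; only the required dimensions are checked.
print(validate_tags({"Application": "CustomerChatbot", "Environment": "Prod"}))
```

Running a check like this in a deployment pipeline catches misspelled keys before they split one reporting dimension into two.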

Create a project

With your tagging strategy and permissions in place, you can create your first project. Each project has its own set of cost allocation tags that flow into your billing data. The following example shows how to create a project using the Projects API.

First, install the required dependencies:

    $ pip3 install openai requests

Create a project with your tag taxonomy:

The OpenAI SDK uses the OPENAI_API_KEY environment variable. Set this to your Bedrock API key.

    import os

    import requests

    # Configuration
    BASE_URL = "https://bedrock-mantle..api.aws/v1"
    API_KEY = os.environ.get("OPENAI_API_KEY")  # Your Amazon Bedrock API key


    def create_project(name: str, tags: dict) -> dict:
        """Create a Bedrock project with cost allocation tags."""
        response = requests.post(
            f"{BASE_URL}/organization/projects",
            headers={
                "Authorization": f"Bearer {API_KEY}",
                "Content-Type": "application/json",
            },
            json={"name": name, "tags": tags},
            timeout=30,  # avoid hanging indefinitely on network problems
        )

        if response.status_code != 200:
            raise Exception(
                f"Failed to create project: {response.status_code} - {response.text}"
            )

        return response.json()


    # Create a production project with full tag taxonomy
    project = create_project(
        name="CustomerChatbot-Prod",
        tags={
            "Application": "CustomerChatbot",
            "Environment": "Production",
            "Team": "CustomerExperience",
            "CostCenter": "CC-1001",
            "Owner": "alice",
        },
    )

    print(f"Created project: {project['id']}")

The API returns the project details, including the project ID and ARN:

    {
      "id": "proj_123",
      "arn": "arn:aws:bedrock-mantle:::project/"
    }

Save the project ID. You’ll use it to associate inference requests in the next step. The ARN is used for IAM policy attachment if you need to restrict access to this project. Repeat this for each workload. The following table shows a sample project structure for an organization with three applications:

| Project name | Application | Environment | Team | Cost Center |
| --- | --- | --- | --- | --- |
| CustomerChatbot-Prod | CustomerChatbot | Production | CustomerExperience | CC-1001 |
| CustomerChatbot-Dev | CustomerChatbot | Development | CustomerExperience | CC-1001 |
| Experiments-Research | Experiments | Production | PlatformEngineering | CC-2002 |
| DataAnalytics-Prod | DataAnalytics | Production | DataScience | CC-3003 |

You can create up to 1,000 projects per AWS account to fit your organization’s needs.
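
When several workloads follow the same naming pattern, the project definitions can be generated from data rather than written by hand. The sketch below is one possible way to organize this, not an AWS-provided utility: `WORKLOADS` and `build_project_specs` are hypothetical names, and the `<Application>-<suffix>` naming convention is inferred from the sample table.

```python
# Rows from the sample table: (name suffix, Application, Environment, Team, CostCenter).
WORKLOADS = [
    ("Prod", "CustomerChatbot", "Production", "CustomerExperience", "CC-1001"),
    ("Dev", "CustomerChatbot", "Development", "CustomerExperience", "CC-1001"),
    ("Research", "Experiments", "Production", "PlatformEngineering", "CC-2002"),
    ("Prod", "DataAnalytics", "Production", "DataScience", "CC-3003"),
]


def build_project_specs(workloads):
    """Turn table rows into {"name": ..., "tags": ...} dictionaries, one per project."""
    specs = []
    seen_names = set()
    for suffix, application, environment, team, cost_center in workloads:
        name = f"{application}-{suffix}"
        # Duplicate names would make cost reports ambiguous, so reject them early.
        if name in seen_names:
            raise ValueError(f"duplicate project name: {name}")
        seen_names.add(name)
        specs.append(
            {
                "name": name,
                "tags": {
                    "Application": application,
                    "Environment": environment,
                    "Team": team,
                    "CostCenter": cost_center,
                },
            }
        )
    return specs


specs = build_project_specs(WORKLOADS)
for spec in specs:
    print(spec["name"], spec["tags"])
```

Each resulting dictionary has the same shape as the arguments of the `create_project` function shown earlier, so a loop could call `create_project(**spec)` for every workload.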

Associate inference requests with your project

With your projects created, you can associate inference requests by passing the project ID in your API calls. The following example uses the Responses API:

    from openai import OpenAI

    client = OpenAI(
        base_url="https://bedrock-mantle..api.aws/v1",
        project="",  # ID returned when you created the project
    )

    response = client.responses.create(
        model="openai.gpt-oss-120b",
        input="Summarize the key findings from our Q4 earnings report.",
    )

    print(response.output_text)

To maintain clean cost attribution, always specify a project ID in your API calls rather than relying on the default project.
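
One way to enforce this in application code is to resolve the project ID through a small lookup that fails loudly instead of silently falling back to the default project. This is a sketch under assumptions: the `PROJECT_IDS` mapping and the `resolve_project_id` helper are hypothetical, and `proj_123` is the placeholder ID from the sample response.

```python
# Hypothetical mapping from workload name to the project ID returned at creation time.
PROJECT_IDS = {
    "CustomerChatbot-Prod": "proj_123",
}


def resolve_project_id(workload: str, registry: dict) -> str:
    """Return the project ID for a workload, or raise if none is configured."""
    project_id = (registry.get(workload) or "").strip()
    if not project_id:
        # A request without a project ID is attributed to the default project,
        # so treat a missing or blank entry as a configuration error.
        raise LookupError(f"no project ID configured for workload {workload!r}")
    return project_id


print(resolve_project_id("CustomerChatbot-Prod", PROJECT_IDS))
```

The returned value can then be passed as the `project` argument when constructing the `OpenAI` client.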

Activate cost allocation tags

Before your project tags appear in cost reports, you must activate them as cost allocation tags in AWS Billing. This one-time setup connects your project tags to the billing pipeline. For more information about activating cost allocation tags, see the AWS Billing documentation.

It can take up to 24 hours for tags to propagate to AWS Cost Explorer and AWS Data Exports. Activate your tags immediately after creating your first project to avoid gaps in cost data.
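
Activation can also be scripted. The following sketch assumes that boto3 is installed, that your credentials are allowed to call the Cost Explorer `UpdateCostAllocationTagsStatus` operation, and that the operation accepts at most 20 tag entries per request (check the API reference for the current limit). The helper names `build_activation_batches` and `activate_tags` are hypothetical.

```python
def build_activation_batches(tag_keys, batch_size=20):
    """Split tag keys into request payloads for UpdateCostAllocationTagsStatus."""
    unique_keys = list(dict.fromkeys(tag_keys))  # de-duplicate while keeping order
    batches = []
    for start in range(0, len(unique_keys), batch_size):
        chunk = unique_keys[start:start + batch_size]
        batches.append([{"TagKey": key, "Status": "Active"} for key in chunk])
    return batches


def activate_tags(tag_keys):
    """Send the activation requests and return any per-tag errors that are reported."""
    import boto3  # imported here so the payload helper works without boto3 installed

    client = boto3.client("ce")  # Cost Explorer client
    errors = []
    for batch in build_activation_batches(tag_keys):
        response = client.update_cost_allocation_tags_status(
            CostAllocationTagsStatus=batch
        )
        errors.extend(response.get("Errors", []))
    return errors


# Preview the payload for the taxonomy used in this post (no AWS call is made here).
print(build_activation_batches(["Application", "Environment", "Team", "CostCenter"]))
```

Calling `activate_tags(["Application", "Environment", "Team", "CostCenter"])` would send the request; an empty return value means that no per-tag errors were reported.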

View challenge prices

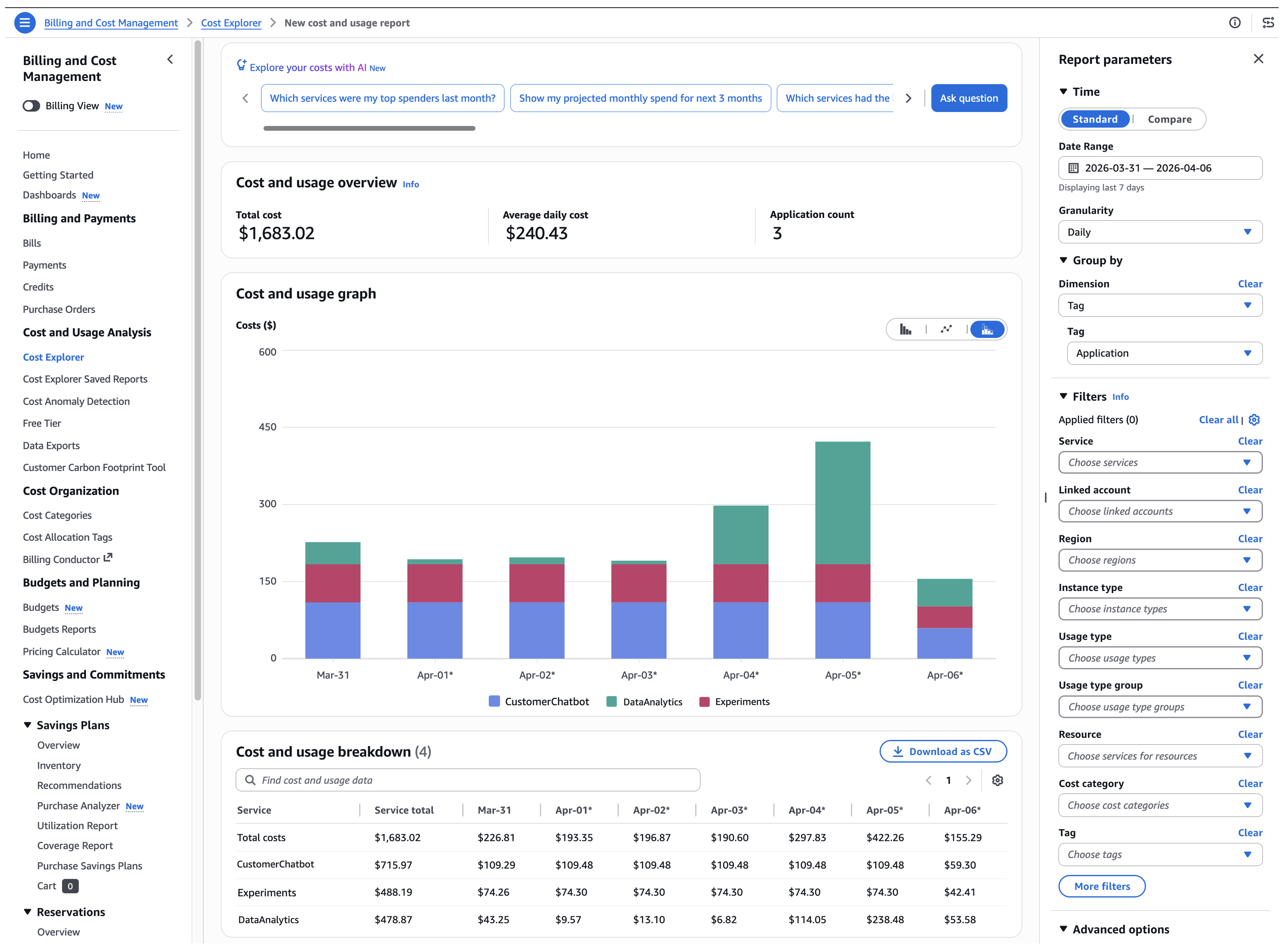

With initiatives created, inference requests tagged, and price allocation tags activated, you’ll be able to see precisely the place your Amazon Bedrock spend goes. Each dimension that you just outlined in your taxonomy is now obtainable as a filter or grouping in your AWS Billing price reviews.

AWS Value Explorer

AWS Value Explorer offers the quickest solution to visualize your prices by challenge. Full the next steps to evaluation your prices by challenge:

- Open the AWS Billing and Value Administration console and select Value Explorer.

- Within the Filters pane, broaden Service and choose Amazon Bedrock.

- Beneath Group by, choose Tag and select your tag key (for instance, Utility).

Determine 2: Value Explorer displaying each day Amazon Bedrock spending grouped by the Utility tag
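
The same view can be retrieved programmatically through the Cost Explorer `GetCostAndUsage` operation. The sketch below assumes that boto3 is installed and that the service appears in billing data under the name `Amazon Bedrock`, as it does in the console filter. The function names are hypothetical, and pagination through `NextPageToken` is omitted for brevity.

```python
def build_cost_query(start, end, tag_key="Application"):
    """Build GetCostAndUsage parameters: daily Bedrock cost grouped by one tag key."""
    return {
        "TimePeriod": {"Start": start, "End": end},  # YYYY-MM-DD; End is exclusive
        "Granularity": "DAILY",
        "Metrics": ["UnblendedCost"],
        "Filter": {"Dimensions": {"Key": "SERVICE", "Values": ["Amazon Bedrock"]}},
        "GroupBy": [{"Type": "TAG", "Key": tag_key}],
    }


def total_cost_by_tag(response):
    """Sum the daily amounts in a GetCostAndUsage response for each tag value."""
    totals = {}
    for day in response.get("ResultsByTime", []):
        for group in day.get("Groups", []):
            # Tag groups are keyed as "<TagKey>$<value>"; the value is empty
            # for spend that carries no such tag.
            _, _, value = group["Keys"][0].partition("$")
            label = value or "(untagged)"
            amount = float(group["Metrics"]["UnblendedCost"]["Amount"])
            totals[label] = totals.get(label, 0.0) + amount
    return totals


def fetch_cost_by_tag(start, end, tag_key="Application"):
    """Call Cost Explorer and return total cost per tag value (first page only)."""
    import boto3  # imported here so the helpers above work without boto3 installed

    client = boto3.client("ce")
    response = client.get_cost_and_usage(**build_cost_query(start, end, tag_key))
    return total_cost_by_tag(response)
```

Spend that lands under `(untagged)` is a useful signal: it may point to requests that were sent without a project ID or to tags that are not yet activated.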

For more ways to refine your view, see Analyzing your costs and usage with AWS Cost Explorer.

For more granular analysis and line-item detail with your project tags, see Creating Data Exports in the AWS Billing documentation.

Conclusion

With Amazon Bedrock Projects, you can attribute costs to individual workloads and track spending using the AWS tools that your organization already relies on. As your workloads scale, use the tagging strategy and cost visibility patterns covered in this post to maintain accountability across teams and applications.

For more information, see Amazon Bedrock Projects documentation and the AWS Cost Management User Guide.
