, the transfer from a conventional information warehouse to Knowledge Mesh feels much less like an evolution and extra like an id disaster.

At some point, every little thing works (possibly “works” is a stretch, however all people is aware of the lay of the land) The following day, a brand new CDO arrives with thrilling information: “We’re shifting to Knowledge Mesh.” And all of a sudden, years of rigorously designed pipelines, fashions, and conventions are questioned.

On this article, I wish to step away from idea and buzzwords and stroll by a sensible transition, from a centralised information “monolith” to a contract-driven Knowledge Mesh, utilizing a concrete instance: web site analytics.

The standardized information contract turns into the crucial enabler for this transition. By adhering to an open, structured contract specification, schema definitions, enterprise semantics, and high quality guidelines are expressed in a constant format that ETL and Knowledge High quality instruments can interpret instantly. As a result of the contract follows an ordinary, these exterior platforms can programmatically generate checks, implement validations, orchestrate transformations, and monitor information well being with out customized integrations.

The contract shifts from static documentation to an executable management layer that seamlessly integrates governance, transformation, and observability. The Knowledge Contract is de facto the glue that holds the integrity of the Knowledge Mesh.

Why conventional information warehousing turns into a monolith

When individuals hear “monolith”, they usually consider unhealthy structure. However most monolithic information platforms didn’t begin that manner, they developed into one.

A standard enterprise information warehouse usually has:

- One central crew chargeable for ingestion, modelling, high quality, and publishing

- One central structure with shared pipelines and shared patterns

- Tightly coupled parts, the place a change in a single mannequin can ripple in every single place

- Gradual change cycles, as a result of demand at all times exceeds capability

- Restricted area context, as modelers are sometimes far faraway from the enterprise

- Scaling ache, as extra information sources and use instances arrive

This isn’t incompetence, it’s a pure final result of centralisation and years of unintended penalties. Ultimately, the warehouse turns into the bottleneck.

What Knowledge Mesh truly modifications (and what it doesn’t)

Knowledge Mesh is commonly misunderstood as “no extra warehouse” or “everybody does their very own factor.”

In actuality, it’s a community shift, not essentially a expertise shift.

At its core, Knowledge Mesh is constructed on 4 pillars:

- Area possession

- Knowledge as a Product

- Self-serve information platform

- Federated governance

The important thing distinction is that as a substitute of 1 massive system owned by one crew, you get many small, linked information merchandise, owned by domains, and linked collectively by clear contracts.

And that is the place information contracts turn out to be the quiet hero of the story.

Knowledge contracts: the lacking stabiliser

Knowledge contracts borrow a well-recognized concept from software program engineering: API contracts, utilized to information.

They had been popularised within the Knowledge Mesh neighborhood between 2021 and 2023, with contributions from individuals and initiatives equivalent to:

- Andrew Jones, who launched the time period information contract extensively by blogs and talks and his ebook, which was printed in 20231

- Chad Sanderson (gable.ai)

- The Open Knowledge Contract Commonplace, which was launched by the Bitol venture

An information contract explicitly defines the settlement between a knowledge producer and a knowledge shopper.

The instance: web site analytics

Let’s floor this with a concrete state of affairs.

Think about an internet retailer, PlayNest, an internet toy retailer. The enterprise needs to analyse the person behaviour on our web site.

There are two principal departments which might be related to this train. Buyer Expertise, which is chargeable for the person journey on our web site; How the shopper feels when they’re searching our merchandise.

Then there’s the Advertising area, who make campaigns that take customers to our web site, and ideally make them keen on shopping for our product.

There’s a pure overlap between these two departments. The boundaries between domains are sometimes fuzzy.

On the operational degree, after we speak about web sites, you seize issues like:

- Guests

- Periods

- Occasions

- Units

- Browsers

- Merchandise

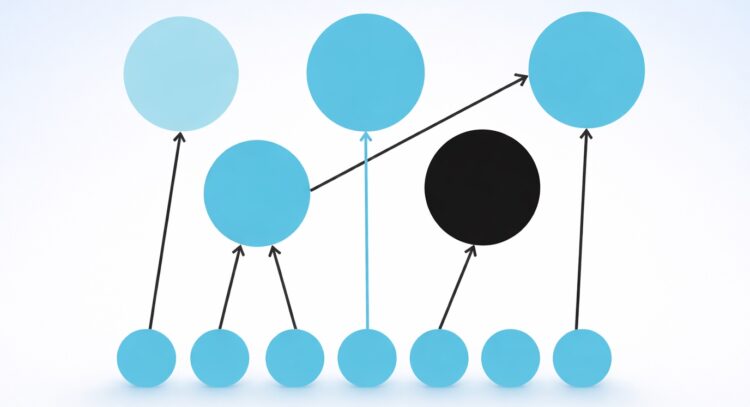

A conceptual mannequin for this instance might seem like this:

From a advertising perspective, nevertheless, no person needs uncooked occasions. They need:

- Advertising leads

- Funnel efficiency

- Marketing campaign effectiveness

- Deserted carts

- Which kind of merchandise individuals clicked on for retargeting and so on.

And from a buyer expertise perspective, they wish to know:

- Frustration scores

- Conversion metrics (For instance what number of customers created wishlists, which indicators they’re keen on sure merchandise, a sort of conversion from random person to person)

The centralised (pre-Mesh) strategy

I’ll use a Medallion framework for instance how this is able to be inbuilt a centralised lakehouse structure.

- Bronze: uncooked, immutable information from instruments like Google Analytics

- Silver: cleaned, standardized, source-agnostic fashions

- Gold: curated, business-aligned datasets (info, dimensions, marts)

Right here within the Bronze layer, the uncooked CSV or JSON objects are saved in, for instance, an Object retailer like S3 or Azure Blob. The central crew is chargeable for ingesting the info, ensuring the API specs are adopted and the ingestion pipelines are monitored.

Within the Silver layer, the central crew begins to scrub and rework the info. Maybe the info modeling chosen was Knowledge Vault and thus the info is standardised into particular information sorts, enterprise objects are recognized and sure comparable datasets are being conformed or loosely coupled.

Within the Gold layer, the actual end-user necessities are documented in story boards and the centralised IT groups implement the size and info required for the completely different domains’ analytical functions.

Let’s now reframe this instance, shifting from a centralised working mannequin to a decentralised, domain-owned strategy.

Web site analytics in a Knowledge Mesh

A typical Knowledge Mesh information mannequin might be depicted like this:

A Knowledge Product is owned by a Area, with a particular kind, and information is available in through enter ports and goes out through output ports. Every port is ruled by a knowledge contract.

As an organisation, in case you have chosen to go along with Knowledge Mesh you’ll continuously need to resolve between the next two approaches:

Do you organise your panorama with these re-usable constructing blocks the place logic is consolidated, OR:

Do you let all shoppers of the info merchandise resolve for themselves the best way to implement it, with the chance of duplication of logic?

Individuals have a look at this and so they inform me it’s apparent. In fact you need to select the primary possibility as it’s the higher apply, and I agree. Besides that in actuality the primary two questions that will probably be requested are:

- Who will personal the foundational Knowledge Product?

- Who can pay for it?

These are basic questions that usually hamper the momentum of Knowledge Mesh. As a result of you may both overengineer it (having a number of reusable elements, however in so doing hampering autonomy and escalate prices), or create a community of many little information merchandise that don’t communicate to one another. We wish to keep away from each of those extremes.

For the sake of our instance, let’s assume that as a substitute of each crew ingesting Google Analytics independently, we create a number of shared foundational merchandise, for instance Web site Consumer Behaviour and Merchandise.

These merchandise are owned by a particular area (in our instance it will likely be owned by Buyer Expertise), and they’re chargeable for exposing the info in commonplace output ports, which must be ruled by information contracts. The entire concept is that these merchandise needs to be reusable within the organisation similar to exterior information units are reusable by a standardised API sample. Downstream domains, like Advertising, then construct Shopper Knowledge Merchandise on high.

Web site Consumer Behaviour Foundational Knowledge Product

- Designed for reuse

- Steady, well-governed

- Typically constructed utilizing Knowledge Vault, 3NF, or comparable resilient fashions

- Optimised for change, not for dashboards

The 2 sources are handled as enter ports to the foundational information product.

The modelling methods used to construct the info product is once more open to the area to resolve however the motivation is for re-usability. Thus a extra versatile modelling method like Knowledge Vault I’ve usually seen getting used inside this context.

The output ports are then additionally designed for re-usability. For instance, right here you may mix the Knowledge Vault objects into an easier-to-consume format OR for extra technical shoppers you may merely expose the uncooked information vault tables. These will merely be logically break up into completely different output ports. You might additionally resolve to publish a separate output to be uncovered to LLM’s or autonomous brokers.

Advertising Lead Conversion Metrics Shopper Knowledge Product

- Designed for particular use instances

- Formed by the wants of the consuming area

- Typically dimensional or extremely aggregated

- Allowed (and anticipated) to duplicate logic if wanted

Right here I illustrate how we go for utilizing different foundational information merchandise as enter ports. Within the case of the Web site person behaviour we go for utilizing the normalised Snowflake tables (since we wish to hold constructing in Snowflake) and create a Knowledge Product that’s prepared for our particular consumption wants.

Our principal shoppers will probably be for analytics and dashboard constructing so choosing a Dimensional mannequin is smart. It’s optimised for one of these analytical querying inside a dashboard.

Zooming into Knowledge Contracts

The Knowledge Contract is de facto the glue that holds the integrity of the Knowledge Mesh. The Contract shouldn’t simply specify a few of the technical expectations but in addition the authorized and high quality necessities and something that the patron can be keen on.

The Bitol Open Knowledge Contract Commonplace2 got down to tackle a few of the gaps that existed with the seller particular contracts that had been out there in the marketplace. Particularly a shared, open commonplace for describing information contracts in a manner that’s human-readable, machine-readable, and tool-agnostic.

Why a lot concentrate on a shared commonplace?

- Shared language throughout domains

When each crew defines contracts in another way, federation turns into not possible.

A normal creates a frequent vocabulary for producers, shoppers, and platform groups.

- Device interoperability

An open commonplace permits information high quality instruments, orchestration frameworks, metadata platforms and CI/CD pipelines to all eat the identical contract definition, as a substitute of every requiring its personal configuration format.

- Contracts as dwelling artifacts

Contracts shouldn’t be static paperwork. With an ordinary, they are often versioned, validated routinely, examined in pipelines and in contrast over time. This strikes contracts from “documentation” to enforceable agreements.

- Avoiding vendor lock-in

Many distributors now assist information contracts, which is nice, however with out an open commonplace, switching instruments turns into costly.

The ODCS is a YAML template that features the next key parts:

- Fundamentals – Function, possession, area, and supposed shoppers

- Schema – Fields, sorts, constraints, and evolution guidelines

- Knowledge high quality expectations – Freshness, completeness, validity, thresholds

- Service-level agreements (SLAs) – Replace frequency, availability, latency

- Assist and communication channels – Who to contact when issues break

- Groups and roles – Producer, proprietor, steward duties

- Entry and infrastructure – How and the place the info is uncovered (tables, APIs, recordsdata)

- Customized area guidelines – Enterprise logic or semantics that buyers should perceive

Not each contract wants each part — however the construction issues, as a result of it makes expectations express and repeatable.

Knowledge Contracts enabling interoperability

In our instance now we have a knowledge contract on the enter port (Foundational information product) in addition to the output port (Shopper information product). You wish to implement these expectations as seamlessly as doable, simply as you’ll with any contract between two events. For the reason that contract follows a standardised, machine-readable format, now you can combine with third celebration ETL and information high quality instruments to implement these expectations.

Platforms equivalent to dbt, SQLMesh, Coalesce, Nice Expectations, Soda, and Monte Carlo can programmatically generate checks, implement validations, orchestrate transformations, and monitor information well being with out customized integrations. A few of these instruments have already introduced assist for the Open Knowledge Contract Commonplace.

LLMs, MCP servers and Knowledge Contracts

Through the use of standardised metadata, together with the info contracts, organisations can safely make use of LLMs and different agentic AI functions to work together with their crown jewels, the info.

So in our instance, let’s assume Peter from PlayNest needs to test what the highest most visited merchandise are:

That is sufficient context for the LLM to make use of the metadata to find out which information merchandise are related, but in addition to see that the person doesn’t have entry to the info. It will possibly now decide who and the best way to request entry.

As soon as entry is granted:

The LLM can interpret the metadata and create the question that matches the person request.

Ensuring autonomous brokers and LLMs have strict guardrails beneath which to function will enable the enterprise to scale their AI use instances.

A number of distributors are rolling out MCP servers to offer a properly structured strategy to exposing your information to autonomous brokers. Forcing the interfacing to work by metadata requirements and protocols (equivalent to these information contracts) will enable safer and scalable roll-outs of those use instances.

The MCP server offers the toolset and the guardrails for which to function in. The metadata, together with the info contracts, offers the insurance policies and enforceable guidelines beneath which any agent might function.

For the time being there’s a tsunami of AI use instances being requested by enterprise. Most of them are presently nonetheless not including worth. Now now we have a major alternative to spend money on organising the proper guardrails for these initiatives to function in. There’ll come a crucial mass second when the worth will come, however first we want the constructing blocks.

I’ll go so far as to say this: a Knowledge Mesh with out contracts is solely decentralised chaos. With out clear, enforceable agreements, autonomy turns into silos, shadow IT multiplies, and inconsistency scales quicker than worth. At that time, you haven’t constructed a mesh, you’ve distributed dysfunction. You would possibly as properly revert to centralisation.

Contracts substitute assumption with accountability. Construct small, join neatly, govern clearly — don’t mesh round.

[1] Jones, A. (2023). Driving information high quality with information contracts: A complete information to constructing dependable, trusted, and efficient information platforms. O’Reilly Media.

[2] Bitol. (n.d.). Open information contract commonplace (v3.1.0). Retrieved February 18, 2026, from https://bitol-io.github.io/open-data-contract-standard/v3.1.0/

All photographs on this article was created by the writer