This is Part II of a two-part series from the AWS Generative AI Innovation Center. If you missed Part I, refer to Operationalizing Agentic AI Part 1: A Stakeholder’s Guide.

The biggest barrier to agentic AI isn’t the technology; it’s the operating model. In Part I, we established that organizations producing real value from agents share three traits: they define work in precise detail, they bound autonomy deliberately, and they treat improvement as a continuous habit rather than a one-time project. We also introduced the four elements of work that’s truly “agent-shaped”: a clear start and end, judgment across tools, observable and measurable success, and a safe failure mode. Without these foundations, even the most sophisticated agent will stall in the lab.

Now comes the harder question: who makes it work, and how?

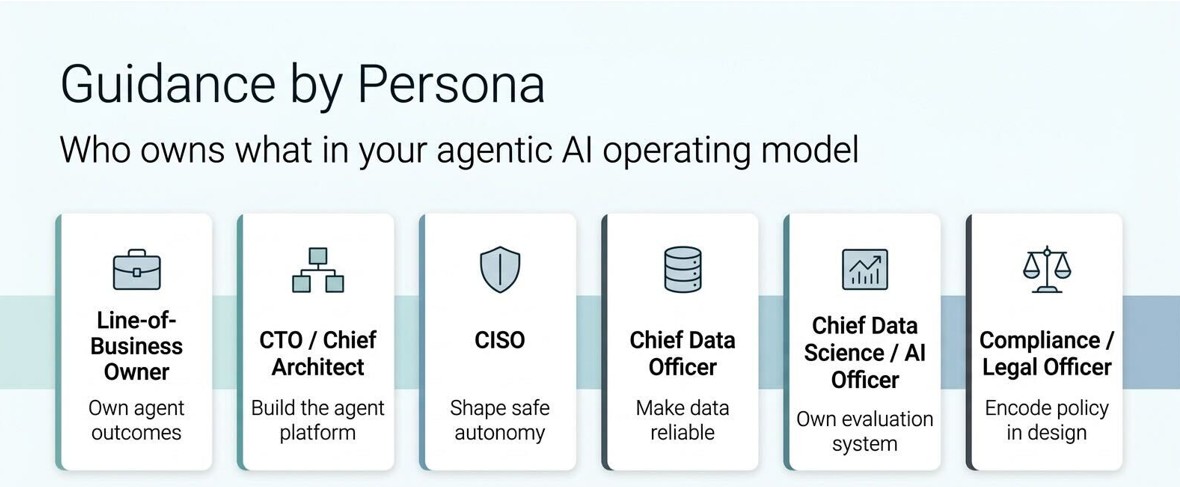

In Part II, we speak directly to the leaders who must turn that shared foundation into action. Each role carries a distinct set of responsibilities, risks, and leverage points. Whether you own a P&L, run enterprise architecture, lead security, govern data, or manage compliance, this section is written in the language of your job, because that’s where agentic AI either succeeds or quietly dies.

Part II – Guidance by persona

For the line-of-business owner: Put the agent on the hook for your KPIs

If you own a P&L, you don’t need another technology toy. You need fewer open tickets, fewer days in your cash conversion cycle, fewer abandoned carts, fewer compliance exceptions. An agent is useful only if it can be tied directly to those numbers.

The first step is to write a job description for the agent the same way you would for a new hire. “This agent takes inbound X, checks Y, does Z, and hands off to this team when it’s done.” Include what done means in your operational terms: time to respond, quality threshold, escalation triggers, and customer-facing commitments.

The second step is to anchor the business case in numbers your own team already tracks. How many units per week pass through this workflow? What does each unit cost in labor, rework, and write-offs? How long does it spend waiting in queues? How often does it bounce back because something was missing or wrong? If you can’t answer these questions today, your first project isn’t an agent; it’s instrumenting the workflow.

The third step is sequencing. Early in the journey, the most valuable agent is often the one that collapses handoffs: it reads the inbound request, gathers context from multiple systems, proposes a plan, and drops that plan into your team’s lap with everything pre-staged. It may not close the loop on its own, but it can remove hours or days of back-and-forth. Cost-saving wins like this help build credibility with the CFO and give you political capital to pursue more ambitious, revenue-focused use cases later.

The line-of-business owner doesn’t need to understand models or prompts. They need to own a small portfolio of agent jobs tied directly to their metrics, and they need to insist that every initiative begins with a written job contract, not a slide with a label.

For the CTO or chief architect: Decide whether you want ten agents or 100

If you’re the CTO, one of your biggest risks is success. Once the first agent lands well, other teams will want one. If each team builds its own stack (own framework, own connectors, own access model), you’ll end up with a zoo of agents that look different, are tested differently, and are impossible to monitor as a whole.

The architecture question is easy to state and hard to execute: do you want ten impressive one-off agents, or do you want a platform that can support 100 agents safely?

The platform path asks you to do some hard work early. It means standardizing how tools are exposed so that every agent calls the same integration when it needs to read customer data, update a ticket, or book a payment. It means separating thinking from doing in your design: one component plans, another calls tools, another checks compliance, another explains decisions back to users. It means capturing decision traces in a consistent format so observability and debugging work across use cases.
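As a rough illustration of what a shared integration layer with a consistent trace could look like, here is a minimal Python sketch. The `ToolRegistry` class, the `update_ticket` stand-in, and the trace field names are all hypothetical and chosen for this example; they are not taken from any specific AWS service or agent framework.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class ToolRegistry:
    """One shared place where integrations are registered and invoked (hypothetical)."""

    tools: Dict[str, Callable[..., Any]] = field(default_factory=dict)
    trace: List[dict] = field(default_factory=list)

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self.tools[name] = fn

    def call(self, agent_id: str, name: str, **kwargs: Any) -> Any:
        # Every agent goes through the same entry point, so every call
        # yields a trace record with the same fields.
        result = self.tools[name](**kwargs)
        self.trace.append(
            {"agent_id": agent_id, "tool": name, "args": kwargs, "result": result}
        )
        return result


def update_ticket(ticket_id: str, status: str) -> dict:
    # Stand-in for a real ticketing integration.
    return {"ticket_id": ticket_id, "status": status}


registry = ToolRegistry()
registry.register("update_ticket", update_ticket)

# Two different agents reuse the same integration instead of building their own.
registry.call("billing-agent", "update_ticket", ticket_id="T-1", status="resolved")
registry.call("support-agent", "update_ticket", ticket_id="T-2", status="pending")

print(json.dumps(registry.trace, indent=2))
```

Because both agents call through the same registry, their trace records share one shape, which is what makes cross-team monitoring and debugging tractable.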

It also asks you to think of agents as long-lived services, not short-lived scripts. They need identities, permissions, rotation, lifecycle management, and a way to be upgraded without breaking their consumers. That’s more work on day one, but it’s what allows you to say “Yes” to the tenth team that wants an agent without starting from scratch.

The CTO’s job isn’t to pick the best agent framework in a vacuum. It’s to build a sturdy floor (identity, policy enforcement, logging, connectors, and evaluation hooks) that allows many teams to ship agents safely, quickly, and consistently.

For the CISO: Treat agents like colleagues, not code

If you’re responsible for security, you’re used to thinking in assets: systems, data stores, credentials. Agents add something new to your threat model: authorized entities that can make decisions and take actions at machine speed.

The mistake is to treat agents as just another application. They’re closer to colleagues. They have accounts. They have roles. They have tools they can use. They can make mistakes. They can be misconfigured.

The practical move is to set up non-human identities for agents with the same seriousness you apply to human identities. Each agent should have its own credentials, its own permissions, and its own audit trail. It shouldn’t inherit all the rights of the service account it happens to run under. When an agent reads sensitive data or calls a high-risk tool, that should be visible in your logs in a way your team recognizes.

You’ll also want ways to stop agents cleanly. That means kill switches that actually work, not just a line in a design document. It means policies that say, “This class of action always requires human approval,” and enforcing that at the tool level, not just in the agent’s prompt. It means watching for behavior that drifts: an agent that suddenly calls a tool far more often than normal, or starts reading data it hasn’t needed before.
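To make “enforced at the tool level” concrete, the following Python sketch puts the approval rule and the kill switch in a gateway that sits in front of the tools, so no prompt can talk its way around them. The `ToolGateway` class, the `ActionBlocked` exception, and the example tool names are assumptions made for illustration, not part of any real product.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional, Set


class ActionBlocked(Exception):
    """Raised when the gateway refuses to run a tool call."""


@dataclass
class ToolGateway:
    """Hypothetical enforcement point that every agent tool call passes through."""

    high_risk: Set[str]
    tools: Dict[str, Callable[..., object]]
    disabled_agents: Set[str] = field(default_factory=set)

    def kill(self, agent_id: str) -> None:
        # Kill switch: takes effect on the agent's very next tool call.
        self.disabled_agents.add(agent_id)

    def call(self, agent_id: str, tool: str,
             approved_by: Optional[str] = None, **kwargs):
        if agent_id in self.disabled_agents:
            raise ActionBlocked(f"{agent_id} is disabled")
        # The approval rule lives here, not in the agent's prompt.
        if tool in self.high_risk and approved_by is None:
            raise ActionBlocked(f"{tool} requires human approval")
        return self.tools[tool](**kwargs)


gateway = ToolGateway(
    high_risk={"issue_refund"},
    tools={
        "lookup_order": lambda order_id: {"order_id": order_id, "total": 40},
        "issue_refund": lambda order_id, amount: f"refunded {amount} on {order_id}",
    },
)

# Low-risk call goes straight through.
order = gateway.call("support-agent", "lookup_order", order_id="A1")

# High-risk call only succeeds when a named human approver is attached.
receipt = gateway.call(
    "support-agent", "issue_refund", approved_by="j.doe", order_id="A1", amount=40
)
```

A real deployment would also verify who the approver is and log each refusal; the sketch only shows where the check belongs.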

CISOs who adapt well to agentic AI don’t try to block autonomy entirely. They define where autonomy is acceptable, what evidence is needed to trust it, and what happens when that trust is broken. They join the design conversation early and make policy part of the agent’s shape, not a gate at the end.

For the chief data officer: Make the data boring

Agents amplify whatever data foundation you already have. If your data is fragmented, stale, and undocumented, agents can make those problems visible to everyone quickly. If your data is consistent, well-governed, and easy to understand, agents can multiply its value.

The CDO’s job in the agentic era is to make the data boring, in the best possible way. That means when an agent asks, “Show me all open claims over this threshold,” it gets a consistent answer regardless of which region or line of business it operates in. It means one definition of “customer health score” exists and is documented well enough that people and agents can both use it. It means lineage is clear: when something goes wrong, you can trace the decision back through the metrics, through the features, all the way to the source system.

It also means being realistic about readiness. Some workflows simply aren’t ready for autonomous decisions because the data they rely on is too incomplete or too contradictory. The best CDOs lean into this. They don’t say, “We can’t support agents.” They say, “We can support this class of work today. If you want to automate that other class, here are the data improvements we need first.”

One of the most valuable contributions a CDO can make to the agent conversation is a map: which domains have production-grade data, which are in progress, and where the landmines are. That map helps everyone else pick their first jobs wisely, instead of discovering data debt mid-implementation.

For the chief data science or AI officer: Evaluation is your real product

If you lead data science or AI, it’s tempting to focus on models: which foundation model, which fine-tuning technique, which benchmark score. Those choices matter, but in production, your real product is the evaluation system wrapped around the model.

Agents can fail in ways that benchmarks don’t measure. They get stuck in loops. They call tools incorrectly. They half-complete tasks in ways that look plausible but are wrong. They behave well on clean test data and fall apart on the edge cases no one thought to include. An effective evaluation system does three things.

First, it turns real work into tests. When an agent makes a mistake in production, that scenario becomes part of a growing evaluation suite. Over time, the hardest cases you encounter become guardrails that help protect you from regressing.

Second, it runs automatically. Changes to prompts, models, tools, or retrieval indexes trigger evaluation before that change goes live. That gives you the confidence to iterate quickly, because you’re not relying on a few spot checks and hope.

Third, it measures what the business cares about. That includes technical metrics like latency and tool success rate, but also task completion rate, escalation rate, cost per decision, and the share of work where humans accept the agent’s recommendation as-is. When those numbers are visible and improving, trust follows.
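The business-facing metrics named above can be computed from very simple per-run records. The Python sketch below assumes a hypothetical `RunRecord` with four fields and made-up sample values; a real evaluation system would pull these from logged agent runs rather than a hard-coded list.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RunRecord:
    """One agent run as seen by the evaluation system (hypothetical schema)."""

    completed: bool       # did the agent finish the task?
    escalated: bool       # did it hand off to a human?
    accepted_as_is: bool  # did a human accept the recommendation unchanged?
    cost_usd: float       # model and tool spend attributed to this run


def summarize(runs: List[RunRecord]) -> dict:
    n = len(runs)
    if n == 0:
        raise ValueError("no runs to summarize")
    return {
        "task_completion_rate": sum(r.completed for r in runs) / n,
        "escalation_rate": sum(r.escalated for r in runs) / n,
        "accepted_as_is_rate": sum(r.accepted_as_is for r in runs) / n,
        "cost_per_decision_usd": sum(r.cost_usd for r in runs) / n,
    }


# Illustrative sample data, not real results.
runs = [
    RunRecord(completed=True, escalated=False, accepted_as_is=True, cost_usd=0.10),
    RunRecord(completed=True, escalated=False, accepted_as_is=False, cost_usd=0.20),
    RunRecord(completed=False, escalated=True, accepted_as_is=False, cost_usd=0.30),
    RunRecord(completed=True, escalated=False, accepted_as_is=True, cost_usd=0.20),
]

summary = summarize(runs)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

Running the same summary before and after a prompt, model, or tool change gives a like-for-like comparison on the numbers the business already tracks.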

Teams that invest here early discover that model choices become simpler, not harder. Once you can see how a model behaves on your real tasks, the “which model is best?” debate becomes a grounded comparison instead of a philosophical discussion.

For the compliance or legal officer: Design for audits before you face one

If you’re responsible for compliance or legal risk, agentic AI probably feels like a moving target. Regulations are evolving, and vendor marketing is ahead of regulatory clarity. You can’t freeze the organization until every standard settles, but you also can’t tolerate “We’ll figure out the governance later.”

A pragmatic approach is to work backwards from an audit. Imagine a regulator or internal audit committee asks, “On this date, why did this agent take this action?” Decide now what evidence you’d need to answer that question clearly and quickly.

That implies a few design choices. Every agent should leave a trail: what inputs it saw, what tools it called, what options it considered, what it chose, and what rules it applied. For high-stakes domains like credit decisions, insurance underwriting, and employment-related actions, humans must remain in the loop, and the agent’s role should be advisory or preparatory: collecting data, organizing evidence, proposing actions. The human’s approval becomes part of the record.
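One way to picture such a trail is as a structured record written for every agent decision. The Python sketch below is illustrative only: the `AuditRecord` fields mirror the items listed above, while the field names, the `is_complete` check, and the sample values are assumptions rather than a prescribed schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class AuditRecord:
    """Evidence kept for one agent decision (hypothetical schema)."""

    agent_id: str
    inputs: dict
    tools_called: List[str]
    options_considered: List[str]
    chosen_option: str
    rules_applied: List[str]
    approved_by: Optional[str] = None  # human approver, when one is required
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def is_complete(self, high_stakes: bool) -> bool:
        # The choice must be one of the recorded options, and a
        # high-stakes decision must name the human who approved it.
        if self.chosen_option not in self.options_considered:
            return False
        return self.approved_by is not None or not high_stakes

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


record = AuditRecord(
    agent_id="underwriting-prep-agent",
    inputs={"application_id": "APP-123"},
    tools_called=["fetch_application", "fetch_credit_summary"],
    options_considered=["recommend_approve", "recommend_refer"],
    chosen_option="recommend_refer",
    rules_applied=["refer_if_income_unverified"],
)

print(record.is_complete(high_stakes=True))   # no approver recorded yet
record.approved_by = "underwriter.lee"
print(record.is_complete(high_stakes=True))   # approval is now part of the record
print(record.to_json())
```

Deciding on the record's fields before the first agent ships is what lets a team answer an auditor's question from stored evidence instead of reconstruction.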

It also means that not all agent ideas are allowed. Some use cases live squarely inside regulatory red zones until frameworks and controls mature. Your job is to make those lines visible early. When you can say “Yes” to some agents with clear conditions, “Yes later” to others with specific prerequisites, and “No” to some with a clear rationale, you become an enabler rather than a blocker.

One of the most helpful things you can do for the rest of the leadership team is to turn abstract concerns like “we need responsible AI” into a concrete checklist that can be applied to each proposed agent before work begins.

Call to action

If the patterns in this post sound familiar, you’re not behind. You’re where most enterprises are. What separates those that move forward is the decision to treat agentic AI as an operating model challenge, not a technology experiment. Five moves you can make to get started:

Convene the right room. Bring your LOB owner, CTO, CISO, CDO, AI/DS leader, and compliance lead together, not for a demo, but for a working session. Each person answers one question: “What’s the one biggest thing blocking us from putting an agent into production on a real workflow?”

Pick one job, not one use case. Identify one concrete piece of work with a clear start, clear end, defined tools, and a success measure someone outside the team can verify. Write the agent’s job description together. If the room can’t agree on what done looks like, you’ve found your first problem to solve.

Draw your readiness map. Have your CDO and CISO jointly sketch which data domains and systems are production-ready for autonomous decisions today, which need improvements first, and where the hard boundaries are. That one-page map can save you months of wasted effort.

Commit to a cadence. Set a recurring weekly or biweekly review where the cross-functional team examines how the agent behaved, what worked, what broke, and what to adjust. If you only evaluate at launch, you’re building a demo. If you evaluate continuously, you’re building a capability.

Make governance a design input, not a launch gate. Decide now what evidence you would need if an auditor asked “Why did this agent do this?” six months from today. Build that into the architecture before the first line of code is written.

The enterprises producing real value from agentic AI got there by doing the unglamorous work: defining jobs precisely, bounding autonomy deliberately, investing in evaluation relentlessly, and aligning stakeholders around a shared operating model.

Partner with the Generative AI Innovation Center

You don’t have to navigate this journey alone. Whether you’re planning your first agentic pilot or scaling to an enterprise-wide capability, reach out to the Generative AI Innovation Center team to start a conversation grounded in your workflows, your data, and your business outcomes.

About the authors