This post is cowritten by Jeff Boudier, Simon Pagezy, and Florent Gbelidji from Hugging Face.

Agentic AI systems represent an evolution from conversational AI to autonomous agents capable of complex reasoning, tool usage, and code execution. Enterprise applications benefit from strategic deployment approaches tailored to specific needs. These needs include managed endpoints, which deliver auto-scaling capabilities, foundation model APIs to support complex reasoning, and containerized deployment options that support custom integration requirements.

Hugging Face smolagents is an open source Python library designed to make it simple to build and run agents using a few lines of code. We will show you how to build an agentic AI solution by integrating Hugging Face smolagents with Amazon Web Services (AWS) managed services. You’ll learn how to deploy a healthcare AI agent that demonstrates multi-model deployment options, vector-enhanced knowledge retrieval, and clinical decision support capabilities.

While we use healthcare as an example, this architecture applies to multiple industries where domain-specific intelligence and reliability are critical. The solution uses the model-agnostic, modality-agnostic, and tool-agnostic design of smolagents to orchestrate across Amazon SageMaker AI endpoints, Amazon Bedrock APIs, and containerized model servers.

Solution overview

Many AI systems face limitations with single-model approaches that can’t adapt to diverse enterprise needs. These systems often have rigid deployment options, inconsistent APIs across different AI services, and lack multi-model deployment options for optimal model selection.

This solution demonstrates how organizations can build AI systems that address these limitations. The solution allows deployment selection based on operational needs and provides consistent request and response formats across different AI backends and deployment methods. It generates contextual responses through medical knowledge integration and vector search, supporting deployment from development to production environments through containerized architecture.

This healthcare use case illustrates how the AI agent can process complex medical queries for six medications with clinical decision support and AWS security and compliance capabilities.

Architecture

The solution includes the following services and features to deliver the agentic AI capabilities:

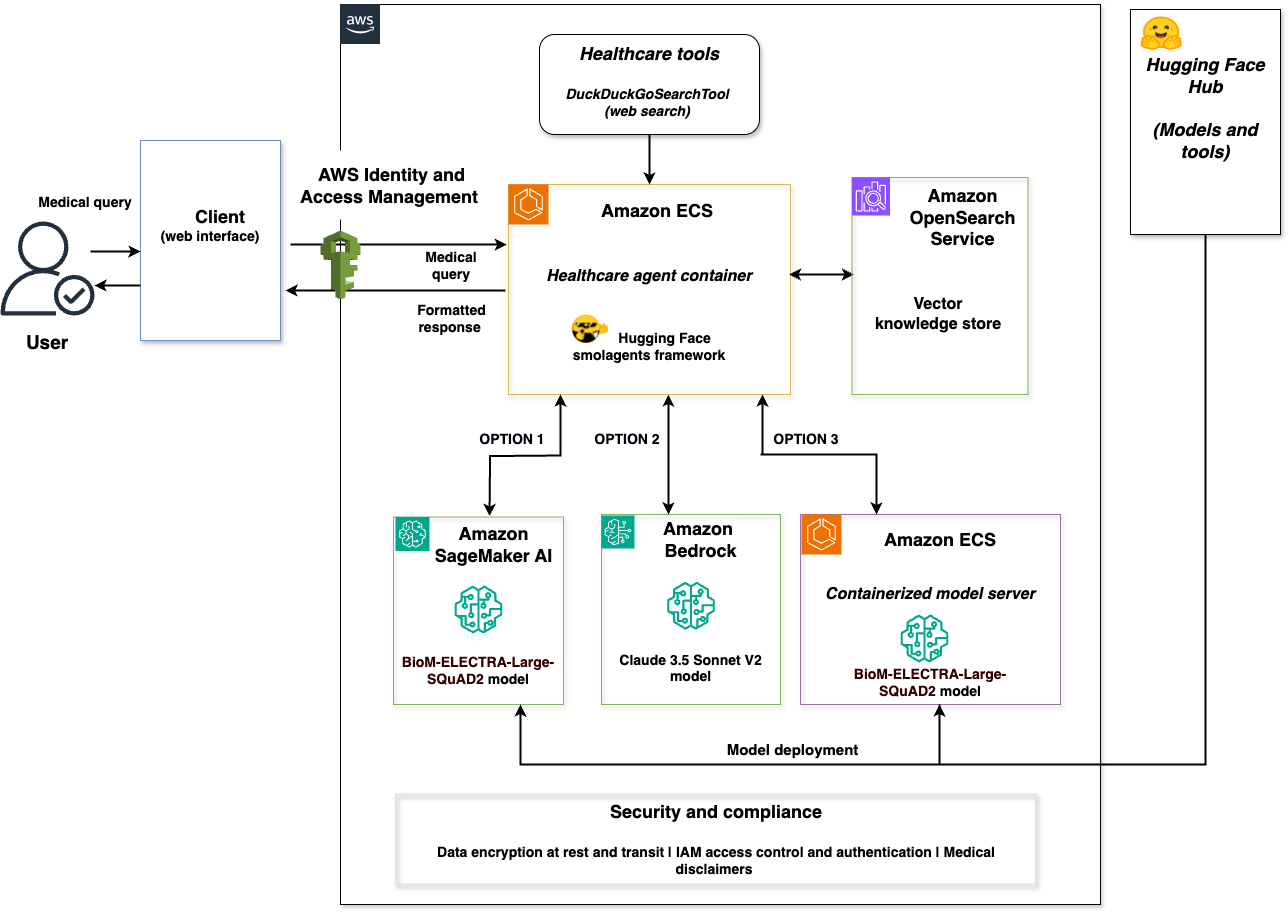

The next diagram illustrates the answer structure.

The architecture is a complete integration of the Hugging Face smolagents framework with AWS services. A client web interface connects to a healthcare agent container that orchestrates across three model backends: SageMaker AI with BioM-ELECTRA, Amazon Bedrock with Claude 3.5 Sonnet V2, and a containerized model server with BioM-ELECTRA. The solution includes a vector store powered by OpenSearch Service and a security layer with data encryption at rest and in transit. The security layer also handles IAM access control and authentication, and any medical disclaimers for regulatory compliance.

This solution supports deployment options through smolagents with each backend optimized for different scenarios:

- SageMaker AI for managed endpoints with auto-scaling and production workloads using Hugging Face Hub models.

- Amazon Bedrock for serverless access to foundation models and complex reasoning through AWS APIs.

- A containerized model server for self-hosted model deployment and tool integration from Hugging Face Hub.

The three backends implement Hugging Face Messages API compatibility, ensuring consistent request and response formats regardless of the selected model service. Users select the appropriate backend based on their requirements; the solution provides deployment options rather than automated routing.
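To make the idea of a consistent request and response format concrete, the following is a minimal sketch rather than the repository's implementation. The backend names, the `make_echo_backend` helper, and the `ask` function are hypothetical stand-ins; a real backend would call SageMaker AI, Amazon Bedrock, or the containerized model server instead of echoing the question.

```python
from typing import Callable, Dict, List

# A message in the role/content shape used by chat-style Messages APIs.
Message = Dict[str, str]
Backend = Callable[[List[Message]], Message]


def make_echo_backend(name: str) -> Backend:
    """Build a stand-in backend (hypothetical); it echoes the last user message."""

    def backend(messages: List[Message]) -> Message:
        last_user = next(
            m["content"] for m in reversed(messages) if m["role"] == "user"
        )
        return {"role": "assistant", "content": f"[{name}] {last_user}"}

    return backend


# Hypothetical registry: the caller picks a backend explicitly, no automated routing.
BACKENDS: Dict[str, Backend] = {
    "sagemaker": make_echo_backend("sagemaker"),
    "bedrock": make_echo_backend("bedrock"),
    "container": make_echo_backend("container"),
}


def ask(backend_name: str, question: str) -> Message:
    """Send the same message list to whichever backend the user selected."""
    messages = [
        {"role": "system", "content": "You are a healthcare assistant."},
        {"role": "user", "content": question},
    ]
    return BACKENDS[backend_name](messages)
```

Because every backend accepts and returns the same message shape, the calling code stays the same when the selected backend changes.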

The complete implementation is available in the sample-healthcare-agent-with-smolagents-on-aws GitHub repository.

Key benefits

The integration of Hugging Face smolagents with AWS managed services offers significant advantages for enterprise agentic AI deployments.

Deployment choice

Organizations can choose the optimal deployment for each use case: Amazon Bedrock for serverless access to foundation models, self-hosted containerized deployment for custom tool integration, or SageMaker AI for specialized domain models. These options help to match specific workload requirements, rather than a one-size-fits-all approach.

Multi-model deployment options

Organizations can optimize their infrastructure choices without changing their agent logic. You can switch between the containerized model server, SageMaker AI, and Amazon Bedrock without modifying your application code. This provides deployment options while maintaining consistent agent behavior.

Code generation capabilities

The CodeAgent approach of smolagents streamlines multi-step operations through direct Python code generation and processing. The following comparison illustrates the multi-step operations of smolagents:

Multi-step JSON-based approach:

smolagents CodeAgent:

The smolagents CodeAgent supports single code blocks to handle multi-step operations, reducing large language model (LLM) calls while streamlining agent development. It provides full control of agent logic across AWS service deployments.
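The difference in the number of model turns can be illustrated with a toy sketch. It does not use the smolagents library and is not the comparison from the original post. The two tools, their placeholder outputs, and the hard-coded "model outputs" are all hypothetical, and the `exec` call is unsandboxed for brevity, whereas a real deployment would run generated code in a sandbox.

```python
import json


def get_side_effects(drug: str) -> str:
    """Hypothetical tool returning placeholder text, not medical data."""
    return f"side effects for {drug} (placeholder)"


def get_monitoring(drug: str) -> str:
    """Hypothetical tool returning placeholder text, not medical data."""
    return f"monitoring for {drug} (placeholder)"


TOOLS = {"get_side_effects": get_side_effects, "get_monitoring": get_monitoring}

# JSON style: the model emits one tool call per turn, so two calls need two turns.
JSON_TURNS = [
    '{"tool": "get_side_effects", "arguments": {"drug": "metformin"}}',
    '{"tool": "get_monitoring", "arguments": {"drug": "metformin"}}',
]

# Code style: the model emits one Python snippet that performs both calls.
CODE_TURN = """
effects = get_side_effects("metformin")
monitoring = get_monitoring("metformin")
result = [effects, monitoring]
"""


def run_json_agent(turns):
    """Execute each JSON tool call; return the results and the model turns used."""
    results = []
    for turn in turns:
        call = json.loads(turn)
        results.append(TOOLS[call["tool"]](**call["arguments"]))
    return results, len(turns)


def run_code_agent(code):
    """Execute one generated snippet (unsandboxed here); return results and turns used."""
    namespace = dict(TOOLS)
    exec(code, namespace)
    return namespace["result"], 1


json_results, json_llm_turns = run_json_agent(JSON_TURNS)
code_results, code_llm_turns = run_code_agent(CODE_TURN)
print(json_llm_turns, code_llm_turns)
```

Both paths produce the same two tool results, but the code-based path needs a single model turn in this toy setup.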

Scalable architecture

By deploying the application on AWS, you gain access to security features and auto-scaling capabilities that help you meet organizational security requirements and maintain regulatory compliance. Running containerized workloads with Amazon ECS and Fargate helps you achieve reliable operations and optimize costs through automated resource scaling.

Let’s walk through implementing this solution.

Prerequisites

Before you deploy the solution, you need the following:

Run the following command to install the required Python packages:

Configure environment variables

Set the required environment variables for your AWS Region and resource names before deploying the infrastructure.

- Set the following environment variables in your terminal:

- Verify the variables are set:

These environment variables are used throughout the deployment and testing processes. Verify that they’re set before proceeding to the next step.
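A small pre-flight check can catch a missing variable before any deployment step runs. The sketch below is an illustration only: the variable names in `REQUIRED_VARS` are hypothetical placeholders, so substitute the names the repository actually expects.

```python
import os
from typing import List, Mapping, Sequence

# Hypothetical variable names for illustration; use the names from the repository.
REQUIRED_VARS = [
    "AWS_REGION",
    "OPENSEARCH_DOMAIN_NAME",
    "ECS_CLUSTER_NAME",
    "SAGEMAKER_ENDPOINT_NAME",
    "BEDROCK_MODEL_ID",
]


def missing_variables(
    env: Mapping[str, str], required: Sequence[str] = REQUIRED_VARS
) -> List[str]:
    """Return the required names that are unset or blank in the given environment."""
    return [name for name in required if not env.get(name, "").strip()]


if __name__ == "__main__":
    missing = missing_variables(os.environ)
    if missing:
        print("Missing environment variables: " + ", ".join(missing))
    else:
        print("All required environment variables are set.")
```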

Set up AWS infrastructure

Start by creating the foundational AWS infrastructure components using the SampleAWSInfrastructureManager class from the Smolagents_SageMaker_Bedrock_Opensearch.py implementation.

Deploy complete infrastructure (automated approach)

For automated deployment of the AWS infrastructure components, you can use the enhanced main function.

- Start the enhanced main function:

- The deployment automatically creates an Amazon ECS cluster, IAM roles, and an OpenSearch Service domain.

- Wait for deployment to complete (approximately 15–20 minutes).

Create individual AWS components (alternative approach)

If you prefer to create components individually, you can set up an OpenSearch Service domain for vector-enhanced knowledge retrieval and an Amazon ECS cluster for containerized deployment.

Both the OpenSearch Service domain and Amazon ECS cluster are automatically created as part of the complete AWS infrastructure deployment (Option 1 in enhanced_main). If you’ve already deployed the complete infrastructure, both components are ready and you can skip to the Deploy the Amazon SageMaker AI endpoint section.

Deploy the Amazon SageMaker AI endpoint

Deploy the BioM-ELECTRA-Large-SQuAD2 model to SageMaker AI for specialized medical query processing. An automated method (for deployment through the enhanced main) and a manual method (for deployment through the SageMaker AI endpoint) are provided.

To deploy using enhanced main (automated method)

To deploy the SageMaker AI endpoint (manual method)

- Verify your SageMaker environment variables are set (from the Configure environment variables section):

- Run the Amazon SageMaker deployment function:

- The deployment uses the HuggingFaceModel with transformers 4.28.1, PyTorch 2.0.0, and ml.m5.xlarge instance type.

- Wait for the endpoint deployment to complete (approximately 5–10 minutes).

- Verify the endpoint deployment:

- The endpoint is configured for question-answering tasks with MAX_LENGTH=512 and TEMPERATURE=0.1.
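The settings listed above map naturally onto the `HuggingFaceModel` class of the SageMaker Python SDK. The following is a hedged sketch, not the repository's deployment function. The Hub model ID, the `py310` Python version, and passing `MAX_LENGTH` and `TEMPERATURE` as container environment variables are assumptions to verify against the repository; `build_deploy_config` and `deploy` are hypothetical helper names.

```python
from typing import Any, Dict


def build_deploy_config(role_arn: str, endpoint_name: str) -> Dict[str, Dict[str, Any]]:
    """Collect the model and endpoint settings described in this section."""
    return {
        "model": {
            "role": role_arn,
            "transformers_version": "4.28.1",
            "pytorch_version": "2.0.0",
            "py_version": "py310",  # assumption: Python version paired with these releases
            "env": {
                # Assumption: Hub ID of the BioM-ELECTRA-Large-SQuAD2 model.
                "HF_MODEL_ID": "sultan/BioM-ELECTRA-Large-SQuAD2",
                "HF_TASK": "question-answering",
                # Assumption: the sample passes these settings as environment variables.
                "MAX_LENGTH": "512",
                "TEMPERATURE": "0.1",
            },
        },
        "deploy": {
            "initial_instance_count": 1,
            "instance_type": "ml.m5.xlarge",
            "endpoint_name": endpoint_name,
        },
    }


def deploy(config: Dict[str, Dict[str, Any]]):
    """Create the endpoint; needs the sagemaker package, AWS credentials, and an IAM role."""
    # Imported lazily so the configuration can be inspected without the SDK installed.
    from sagemaker.huggingface import HuggingFaceModel

    model = HuggingFaceModel(**config["model"])
    return model.deploy(**config["deploy"])
```

Keeping the configuration separate from the `deploy` call makes it possible to review or log the settings before any billable resource is created.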

Configure the multi-model backends

Configure the two additional backend options using the SampleTripleHealthcareAgent class for model selection based on operational needs.

Option 1 – Set up Amazon Bedrock access

Configure access to Amazon Bedrock for foundation model integration with Claude 3.5 Sonnet V2.

- Verify your Amazon Bedrock model configuration is set (from the Configure environment variables section):

- Access to Claude 3.5 Sonnet V2 is automatically available for your AWS account.

- You can verify model availability in the Amazon Bedrock console under Model catalog.

Initialize the medical knowledge base

Set up the medical knowledge database with six medications and vector embeddings in OpenSearch Service. You can use the enhanced_main() function, which provides an interactive menu for deployment tasks, or initialize manually using the SampleOpenSearchManager class.

To initialize using enhanced main (automated method)

To initialize the medical knowledge base (manual method)

- Initialize the SampleOpenSearchManager:

- The system creates the medical knowledge index with mappings for drug_name, content, and content_type fields.

- Medical knowledge for Metformin, Lisinopril, Atorvastatin, Amlodipine, Omeprazole, and Simvastatin is automatically indexed.

- Each medication includes side effects, monitoring requirements, and drug class information.

- The vector store supports similarity search with content type filtering for targeted queries.

- Verify the indexing completed successfully:

Option 2 – Deploy the containerized model server

After setting up the core infrastructure, deploy a containerized model server that provides self-hosted model deployment capabilities.

Deploy the containerized model server using the LocalContainerizedModelServer class with BioM-ELECTRA-Large-SQuAD2 for model deployment.

To deploy containers to Amazon ECS (automated method)

For automated deployment, use the containerized Amazon ECS deployment:

To deploy the containerized model server (manual method)

- Verify your containerized model configuration is set (from the Configure environment variables step):

- Initialize the containerized model server:

The containerized model server uses Docker sandboxing for secure code execution.

- Verify that the containerized model server is running:

- The containerized model server includes fallback mechanisms with medical knowledge database integration.

- The containerized model server supports deployment with Amazon ECS service discovery.

Implement the healthcare agent

Deploy the core healthcare agent using the SampleTripleHealthcareAgent class that demonstrates smolagents integration with deployment capabilities across the three backends.

Initialize the triple healthcare agent

Set up the main healthcare agent that orchestrates across SageMaker AI, Amazon Bedrock, and the containerized model server with smolagents framework integration.

- Create the vector store instance:

- Initialize the triple healthcare agent:

- The agent initializes three model backends:

- SampleSageMakerEndpointModel with BioM-ELECTRA integration

- SampleBedrockClaudeModel with Claude 3.5 Sonnet V2 API

- SampleContainerizedModel with fallback mechanisms

- Each backend is wrapped with SampleHealthcareCodeAgent for smolagents integration.

- The agents are configured with max_steps=3 and DuckDuckGoSearchTool integration.

Configure deployment options

The system demonstrates three deployment options based on operational needs.

To understand the deployment options:

- SageMaker AI deployment: Specialized medical queries about drug information, side effects, and monitoring requirements.

- Amazon Bedrock deployment: Complex reasoning tasks requiring detailed medical analysis and multi-step reasoning.

- Containerized deployment: Custom model integration with specialized tools and self-hosted model access.

Each backend includes database fallback with medical knowledge for six medications, and Amazon OpenSearch Service provides contextual knowledge across the model backends with similarity matching.
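The interplay between similarity matching and the database fallback can be sketched without OpenSearch. The example below is a toy in-memory illustration, not the repository's vector store: the three-dimensional vectors, the `min_score` threshold, and the function names are hypothetical, and a real deployment would use embeddings stored in an OpenSearch Service index.

```python
import math
from typing import Dict, List, Optional, Sequence

# Toy documents with made-up vectors; field names follow the index mapping in this post.
DOCS = [
    {"drug_name": "metformin", "content_type": "side_effects", "vector": [1.0, 0.0, 0.0]},
    {"drug_name": "metformin", "content_type": "monitoring", "vector": [0.0, 1.0, 0.0]},
    {"drug_name": "lisinopril", "content_type": "monitoring", "vector": [0.1, 0.9, 0.2]},
]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query_vector: Sequence[float], content_type: Optional[str] = None,
           k: int = 2) -> List[Dict]:
    """Rank documents by similarity, optionally restricted to one content type."""
    candidates = [d for d in DOCS
                  if content_type is None or d["content_type"] == content_type]
    scored = sorted(((cosine(query_vector, d["vector"]), d) for d in candidates),
                    key=lambda pair: pair[0], reverse=True)
    return [{"score": score, "drug_name": d["drug_name"],
             "content_type": d["content_type"]} for score, d in scored[:k]]


def retrieve_context(query_vector: Sequence[float], content_type: Optional[str] = None,
                     min_score: float = 0.5) -> Dict:
    """Use vector hits when they are similar enough, else signal the database fallback."""
    hits = [h for h in search(query_vector, content_type) if h["score"] >= min_score]
    if hits:
        return {"source": "vector_store", "hits": hits}
    return {"source": "database_fallback", "hits": []}
```

In this sketch the fallback is only a marker; in the described solution it would return the stored medical knowledge for the requested medication.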

Test the interactive system

Use the sample_interactive_healthcare_assistant function to test the multi-model deployment.

- Start the interactive healthcare assistant:

- The system provides commands for model switching:

- 1 for SageMaker Endpoint

- 2 for containerized model server

- 3 for Amazon Bedrock Claude API

- compare to view the three responses

- Test queries are limited to 100 characters for optimal performance.

- Responses include vector context when available and database fallback information.
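The command handling listed above can be sketched as a small dispatcher. This is an illustration under stated assumptions rather than the sample_interactive_healthcare_assistant implementation: the `handle_input` name and the backend labels are hypothetical, queries longer than the limit are truncated here (the sample might reject them instead), and `compare` is assumed to take the query on the same line.

```python
from typing import Callable, Dict, Union

MAX_QUERY_CHARS = 100  # query length limit stated in this post

# Menu commands from this post mapped to hypothetical backend labels.
BACKEND_COMMANDS = {"1": "sagemaker", "2": "container", "3": "bedrock"}


def handle_input(line: str, state: Dict[str, str],
                 ask: Callable[[str, str], str]) -> Union[str, Dict[str, str]]:
    """Switch backend, compare all backends, or answer with the current backend."""
    text = line.strip()
    if text in BACKEND_COMMANDS:
        state["backend"] = BACKEND_COMMANDS[text]
        return f"Switched to {state['backend']}"
    if text.lower().startswith("compare"):
        query = text[len("compare"):].strip()[:MAX_QUERY_CHARS]
        return {name: ask(name, query) for name in BACKEND_COMMANDS.values()}
    return ask(state["backend"], text[:MAX_QUERY_CHARS])
```

A caller supplies `ask(backend_name, query)`, which keeps the dispatcher independent of how each backend is invoked.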

Run and test the solution

You can now run and test the multi-model healthcare agent to observe the multi-backend deployment capabilities of smolagents using the actual medical knowledge database with six medications.

Run the application

The solution provides multiple ways to interact with the healthcare AI agent based on your preferred development environment.

Streamlit web interface

For an interactive web-based experience:

Jupyter Notebook

For interactive development and experimentation:

Direct Python

For command-line execution:

These methods provide access to the same multi-model healthcare agent functionality with different user interfaces.

Test the deployment options

Use the enhanced_main function to access testing utilities and validate the multi-model deployment.

Interactive healthcare demo

Experience the healthcare assistant’s conversational interface with near real-time model switching capabilities.

- Start the enhanced main function:

- Choose option 5 for Interactive 3-model demo to launch the conversational interface.

- The system provides commands for model switching:

- 1 for SageMaker Endpoint

- 2 for containerized model server

- 3 for Amazon Bedrock Claude API

- compare to see the three responses

- Test queries are limited to 100 characters for optimal performance.

- Responses include vector context when available and database fallback information.

Compare model responses

Use the enhanced_main function to compare the three deployment options across different query types.

To run interactive tests

- Start the enhanced main function:

- Choose option 7 for Compare the three models to test deployment options.

- Test a medical query using SageMaker AI:

- Test a complex reasoning query using Amazon Bedrock:

- Test a query that uses the containerized model server:

Actual responses include vector context from OpenSearch Service when available, showing similarity matching results alongside the model responses.

Verify deployment status

Use the built-in testing utilities to verify healthcare agent deployment and component status.

- Run the component test (option 6 in enhanced_main):

Expected output:

- OpenSearch: Connected

- SageMaker endpoint: Active

- Bedrock: Available

- Containerized model server: Started

- ECS cluster: Active

- Monitor the agent status using get_container_status():

Expected output:

- Healthcare agent container: Active

- smolagents framework: Active

- Messages API: Compatible

- Drug database: 6 medications loaded

- Vector store: OpenSearch integrated

- Test containerized model server functionality (option 10) to validate the containerized model server independently:

Expected output:

- Containerized model server: Started successfully

- BioM-ELECTRA model: Loaded

- Medical knowledge: Available

- Fallback mechanisms: Active

- Test OpenSearch Service vector similarity (option 9) to observe how the system matches queries (such as metformin side effects and lisinopril monitoring) with vector similarity scores and content matching.

Clean up resources

To avoid incurring future charges, delete the resources you created using the cleanup utilities provided in the implementation.

- Delete the Amazon OpenSearch Service domain:

- Delete the Amazon ECS cluster and healthcare agent service:

- Clean up the Amazon SageMaker AI endpoint and containerized model server. Choose either of the following methods:

- Method 1: Use the enhanced_main menu

- Run the enhanced main function:

- Select option 8 for SageMaker cleanup when prompted.

- Method 2: Use direct cleanup functions

- Use the direct cleanup functions:

- If the containerized server is running, stop it:

- Remove the IAM role:

Additional considerations

For production deployments, implementing observability is essential for monitoring agent performance, tracking execution traces, and verifying reliability. Amazon Bedrock AgentCore Runtime provides observability with automated instrumentation. It captures session metrics, performance data, error tracking, and full execution traces (including tool invocations). Read more about implementing observability in Build trustworthy AI agents with Amazon Bedrock AgentCore Observability.

Use cases

While we demonstrated a healthcare implementation, this solution applies to multiple industries requiring domain-specific intelligence and reliability. With multi-model deployment, organizations can choose the optimal backend for each use case. This includes managed endpoints for production workloads, foundation models for complex reasoning, or self-hosted deployment for custom integration.

Financial services

Financial institutions can deploy agents for regulatory compliance, risk assessment, and fraud detection while meeting strict security and audit requirements. This deployment approach supports specialized fraud detection models, complex regulatory analysis, and custom financial tools integration.

Manufacturing and industrial operations

Manufacturing organizations can implement intelligent agents for predictive maintenance, quality control, and supply chain optimization. Multi-model deployment enables equipment monitoring with domain-specific models and complex supply chain reasoning with foundation models.

Energy and utilities

Energy companies can deploy agents for grid operations, regulatory compliance, and infrastructure management. This approach supports near real-time demand forecasting with specialized models and complex environmental impact assessment with foundation models.

Conclusion

We showed how to build an agentic AI solution by integrating Hugging Face smolagents with AWS managed services. The solution demonstrates multi-model deployment options, vector-enhanced knowledge retrieval, and deployment capabilities so organizations can deploy domain-specific AI agents with AWS security features and compliance controls.

The healthcare use case illustrates how the model-agnostic design of smolagents supports deployment orchestration across Amazon SageMaker AI, Amazon Bedrock, and a containerized model server. Key technical innovations include the Messages API compatibility across the backends, smolagents framework integration, and containerized deployment with AWS Fargate.

This solution architecture is extensible to financial services, manufacturing, energy, and other industries where domain-specific intelligence and reliability are critical.

Discuss a project and requirements to find the right support for your business needs with Hugging Face. Or if there are questions about getting started with AWS, speak with an AWS generative AI Specialist to learn how we can help accelerate your business today.

Further reading

About the authors

Sanhita Sarkar, PhD, Global Partner Solutions, AI/ML, and generative AI at AWS. She drives AI/ML and generative AI partner solutions at AWS, with extensive leadership experience across edge, cloud, and data center environments. She holds multiple patents, has published research papers, and serves as chair for technical conferences.

Jeff Boudier, Head of Product at Hugging Face. Jeff was also a co-founder of Stupeflix, acquired by GoPro, where he served as director of product management, product marketing, business development, and corporate development.

Simon Pagezy, Partner Success Manager at Hugging Face. He leads partnerships at Hugging Face with major cloud and hardware companies. He works to make the Hugging Face offerings broadly accessible across diverse deployment environments.

Florent Gbelidji, Cloud Partnership Tech Lead for the AWS account, driving integrations between the Hugging Face offerings and AWS services. He has also been an ML Engineer in the Expert Acceleration Program where he helped companies build solutions with open-source AI.