Maintaining model agility is crucial for organizations to adapt to technological advancements and optimize their artificial intelligence (AI) solutions. Whether transitioning between different large language model (LLM) families or upgrading to newer versions within the same family, a structured migration approach and a standardized process are essential for enabling continuous performance improvement while minimizing operational disruptions. However, developing such a solution is challenging in both technical and non-technical aspects because the solution needs to:

- Be generic to cover a variety of use cases

- Be specific so that a new user can apply it to the target use case

- Provide a comprehensive and fair comparison between LLMs

- Be automated and scalable

- Incorporate domain- and task-specific knowledge and inputs

- Have a well-defined, end-to-end process from data preparation guidance to final success criteria

In this post, we introduce a systematic framework for LLM migration or upgrade in generative AI production, encompassing essential tools, methodologies, and best practices. The framework facilitates transitions between different LLMs by providing robust protocols for prompt conversion and optimization. It includes evaluation mechanisms that assess multiple performance dimensions, enabling data-driven decision-making through detailed, comparative analysis of the source and destination models. The proposed approach offers a comprehensive solution that covers the technical aspects of model migration and provides quantifiable metrics to validate a successful migration and identify areas for further optimization, facilitating a seamless transition and continuous improvement. Here are a few highlights of the solution:

- Provides a variety of reporting options with various LLM evaluation frameworks and comprehensive guidance on metrics selection for target use cases.

- Provides automated prompt optimization and migration with Amazon Bedrock Prompt Optimization and the Anthropic Metaprompt tool, along with best practices for further prompt optimization.

- Provides comprehensive guidance for model selection and an end-to-end solution for comparing models on cost, latency, accuracy, and quality.

- Provides feature examples and use case examples so that users can quickly apply the solution to the target use case.

- The total time required for an LLM migration or upgrade using this framework ranges from two days to two weeks, depending on the complexity of the use case.

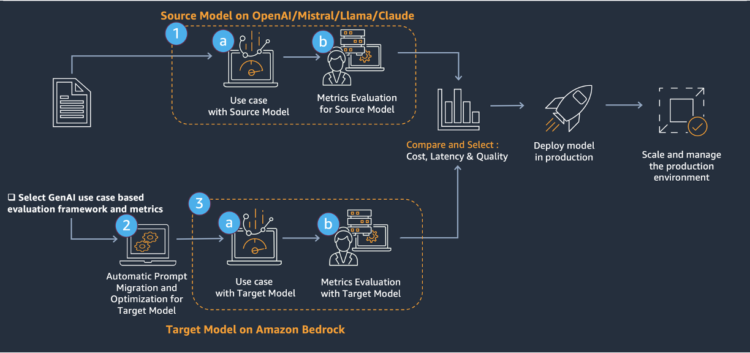

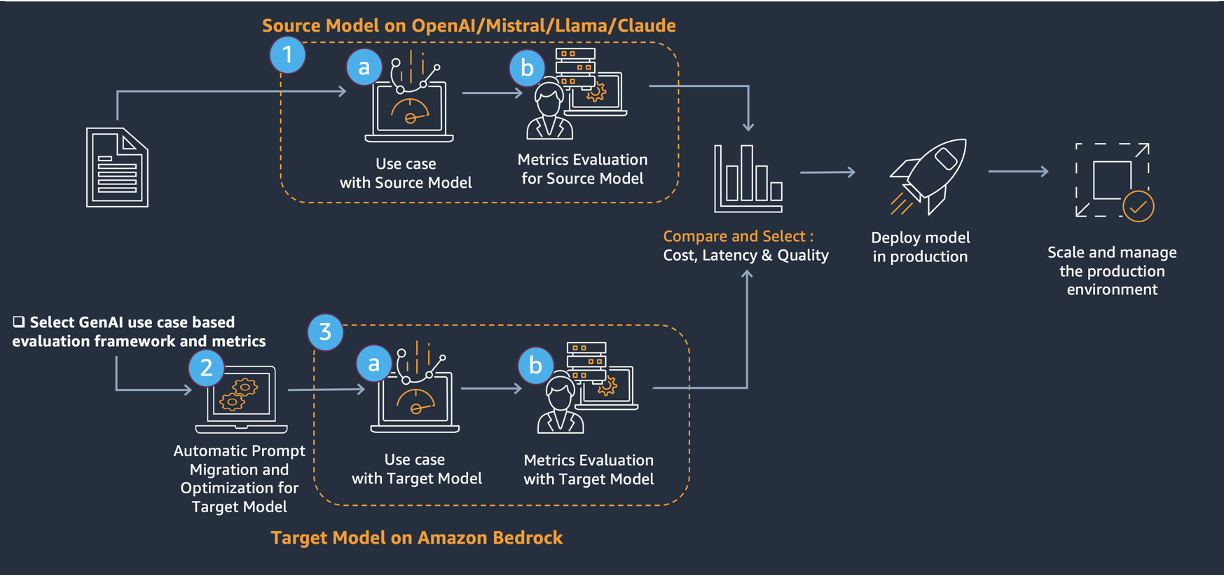

Solution overview

The core of the migration involves a three-step approach, shown in the preceding diagram.

- Evaluate the source model.

- Migrate and optimize prompts for the target model with Amazon Bedrock Prompt Optimization and the Anthropic Metaprompt tool.

- Evaluate the target model.

This solution provides a comprehensive approach to upgrading existing generative AI solutions (source model) to LLMs on Amazon Bedrock (target model). It addresses the technical challenges through:

- Evaluation metrics selection with a framework that uses various LLMs

- Prompt improvement and migration with Amazon Bedrock Prompt Optimization and the Anthropic Metaprompt tool

- Model comparison across cost, latency, and performance

This structured approach provides a robust framework for evaluating, migrating, and optimizing LLMs. By following these steps, we can transition between models, potentially unlocking improved performance, cost-efficiency, and capabilities in your AI applications. The process emphasizes thorough preparation, systematic evaluation, and continuous improvement, setting the stage for long-term success in using advanced language models.

Solution implementation

Dataset preparation

An evaluation dataset with high-quality samples is critical to the migration process. For most use cases, samples with ground truth answers are required; for other use cases, metrics that don't require ground truth, such as answer relevancy, faithfulness, toxicity, and bias (see the Evaluation frameworks and metrics selection section), can be used as the deciding metrics. Use the following guidance and data format to prepare the sample data for the target use cases.

Suggested fields for sample data include:

- Prompt used for the source model

- Prompt input (if any), for example, questions and context for Retrieval-Augmented Generation (RAG)-based answer generation

- Configurations used for source model invocation, for example, temperature, top_p, top_k, and so on

- Ground truths

- Output from the source model

- Latency of the source model

- Input and output tokens from the source model, which can be used for cost calculation

It's important to remember that high-quality ground truths are essential to a successful migration for most use cases. Ground truths should be validated not only for correctness, but also to verify that they match the subject matter expert's (SME's) guidance and evaluation criteria. See the Error analysis section for an example of an SME's guidance and evaluation criteria.

In addition, if any existing evaluation metrics are available, such as a human evaluation score or a thumbs up/thumbs down from an SME, include these metrics and the corresponding reasoning or comments for each data sample. If any automated evaluations have been performed, include the automated evaluation scores, methods, and configurations. The next section provides more detailed guidance on selecting evaluation frameworks and defining the metrics. However, it's still recommended to collect the existing or preferred evaluation metrics from stakeholders for reference.

Include the following fields if applicable:

- Existing human evaluation metrics for the source model, for example, the SME score for the source model.

- Existing automated evaluation metrics for the source model, for example, the LLM-as-a-judge score for the source model.

The following table is an example format of the data samples:

| Field | Value |
|---|---|
| sample_id | … |
| question | |
| content | |
| prompt_source_llm | |
| answer_ground_truth | |
| answer_source_llm | |
| latency_source_llm | |
| input_token_source_llm | |
| output_token_source_llm | |
| llm_judge_score_source_llm | |
| human_score_source_llm | |
| human_score_reasoning_source_llm | |

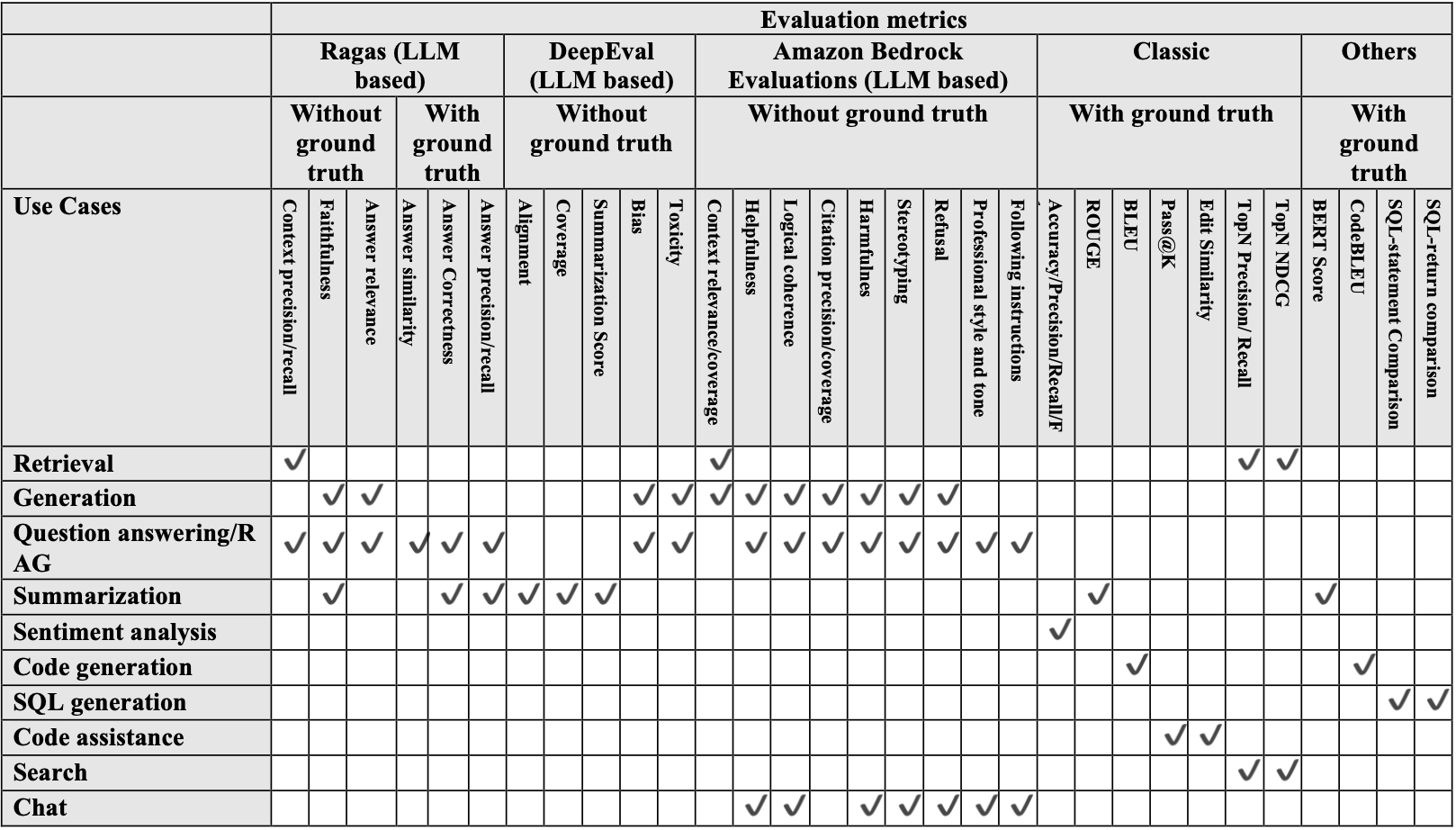
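A minimal sketch of one way to keep these fields consistent across runs: each sample becomes a typed record that is written to and read from JSON Lines. The field names follow the example table above; the `SampleRecord` class, the `inference_config` field, and the JSON Lines format are illustrative assumptions, not part of the Migration code repository.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class SampleRecord:
    """One evaluation sample captured from the source model (hypothetical schema)."""
    sample_id: str
    question: str
    content: str
    prompt_source_llm: str
    answer_ground_truth: str
    answer_source_llm: str
    latency_source_llm: float  # seconds
    input_token_source_llm: int
    output_token_source_llm: int
    # Optional fields, filled only if evaluations were already run on the source model.
    llm_judge_score_source_llm: Optional[float] = None
    human_score_source_llm: Optional[float] = None
    human_score_reasoning_source_llm: Optional[str] = None
    # Invocation settings used for the source model, e.g. temperature, top_p, top_k.
    inference_config: dict = field(default_factory=dict)


def to_jsonl(records: List[SampleRecord]) -> str:
    """Serialize records to JSON Lines, one sample per line."""
    return "\n".join(json.dumps(asdict(r)) for r in records)


def from_jsonl(text: str) -> List[SampleRecord]:
    """Parse JSON Lines back into SampleRecord objects, skipping blank lines."""
    return [SampleRecord(**json.loads(line)) for line in text.splitlines() if line.strip()]
```

Storing the source model's outputs, latency, and token counts alongside the inputs means the same file can later be joined with the target model's results for a side-by-side comparison.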

Evaluation frameworks and metrics selection

After collecting information and data samples, the next step is to choose the right evaluation metrics for the generative AI use case. Besides human evaluation by an SME, automated evaluation metrics are recommended because they're more scalable and objective and support the long-term health and sustainability of the product. The following table shows the automated metrics that are available for each use case.

Model selection

Selecting an appropriate LLM requires careful consideration of multiple factors. Whether migrating to an LLM within the same LLM family or to a different LLM family, understanding the key characteristics of each model and the evaluation criteria is crucial for success. When planning to migrate between LLMs, carefully compare and evaluate the available options, and review the model card and the prompting guides released by each model provider. When evaluating LLM options, consider several key criteria:

- Input and output modalities: Text, code, and multimodal capabilities

- Context window size: Maximum input tokens the model can process

- Cost per inference or token

- Performance metrics: Latency and throughput

- Output quality and accuracy

- Domain specialization and specific use case compatibility

- Hosting options: Cloud, on-premises, and hybrid

- Data privacy and security requirements

After initial filtering based on these characteristics, benchmark tests should be conducted to compare the shortlisted models on specific tasks. Amazon Bedrock offers a comprehensive solution with access to various LLMs through a unified API. This allows us to experiment with different models, compare their performance, and even use multiple models in parallel, all while maintaining a single integration point. This approach not only simplifies the technical implementation but also helps avoid vendor lock-in by enabling a diversified AI model strategy.
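As an illustration of the single integration point, the following sketch assumes the Amazon Bedrock Runtime Converse API accessed through boto3: every shortlisted model receives an identical request, and only `modelId` changes. The model IDs are placeholders, and the client is passed in as a parameter (a choice made for this sketch) so the comparison loop can be exercised without AWS credentials.

```python
from typing import Dict, List


def build_converse_request(model_id: str, prompt: str, max_tokens: int = 512,
                           temperature: float = 0.0) -> dict:
    """Build keyword arguments for bedrock-runtime converse(); identical for every model."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }


def compare_models(client, model_ids: List[str], prompt: str) -> Dict[str, dict]:
    """Send the same prompt to each model; collect answer, token usage, and service-side latency."""
    results = {}
    for model_id in model_ids:
        response = client.converse(**build_converse_request(model_id, prompt))
        content = response["output"]["message"]["content"]
        results[model_id] = {
            "answer": "".join(block.get("text", "") for block in content),
            "input_tokens": response["usage"]["inputTokens"],
            "output_tokens": response["usage"]["outputTokens"],
            "latency_ms": response["metrics"]["latencyMs"],
        }
    return results


# Usage with a real client (requires AWS credentials and model access):
# import boto3
# client = boto3.client("bedrock-runtime")
# compare_models(client, ["<source-model-id>", "<target-model-id>"], "What is ...?")
```

Because the request shape does not change between models, swapping a candidate in or out of the benchmark is a one-line change to the list of model IDs.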

Prompt migration

Two automated prompt migration and optimization tools are introduced here: Amazon Bedrock Prompt Optimization and the Anthropic Metaprompt tool.

Amazon Bedrock Prompt Optimization

Amazon Bedrock Prompt Optimization is a tool available in Amazon Bedrock that automatically optimizes prompts written by users. It helps users build high-quality generative AI applications on Amazon Bedrock and reduces friction when moving workloads from other providers to Amazon Bedrock. Amazon Bedrock Prompt Optimization can enable migration of existing workloads from a source model to LLMs on Amazon Bedrock with minimal prompt engineering. With this tool, we can choose the model to optimize the prompt for and then generate an optimized prompt for that target model. The main advantage of using Amazon Bedrock Prompt Optimization is the ability to use it from the AWS Management Console for Amazon Bedrock. Using the console, we can quickly generate a new prompt for the target model. We can also use the Amazon Bedrock API to generate a migrated prompt; see the detailed implementation below.

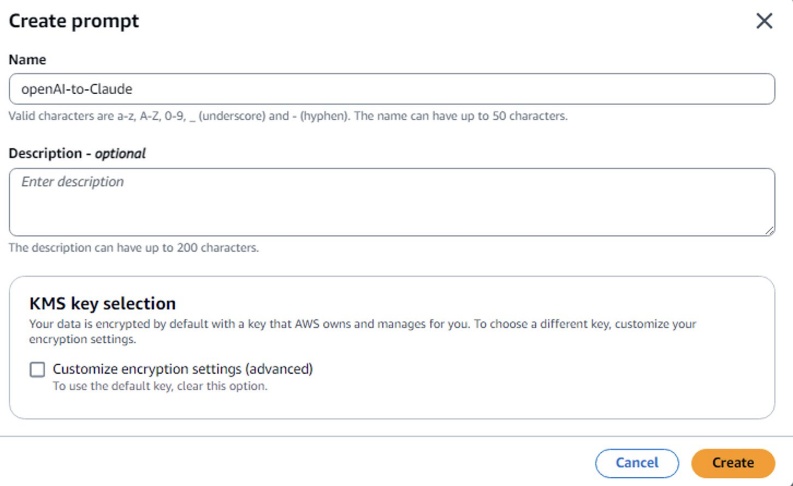

Option A) Optimize a prompt from the Amazon Bedrock console

- In the Amazon Bedrock console, go to Prompt management.

- Choose Create prompt, enter a name for the prompt template, and choose Create.

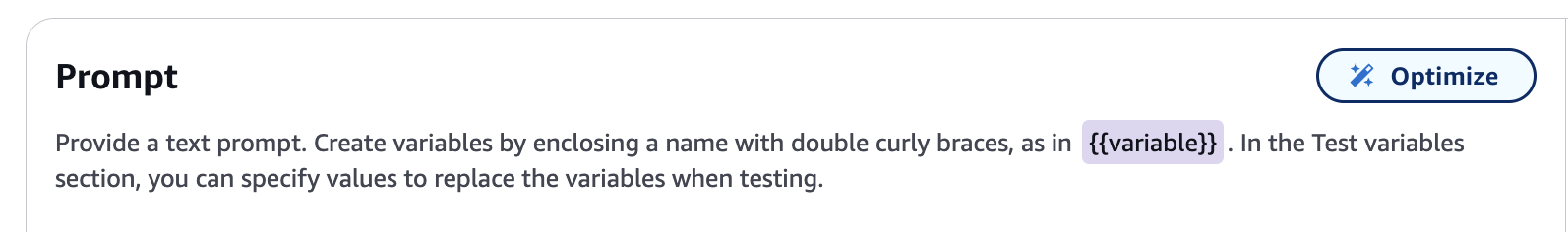

- Enter the supply mannequin immediate. Create variables by enclosing a reputation with double curly braces:

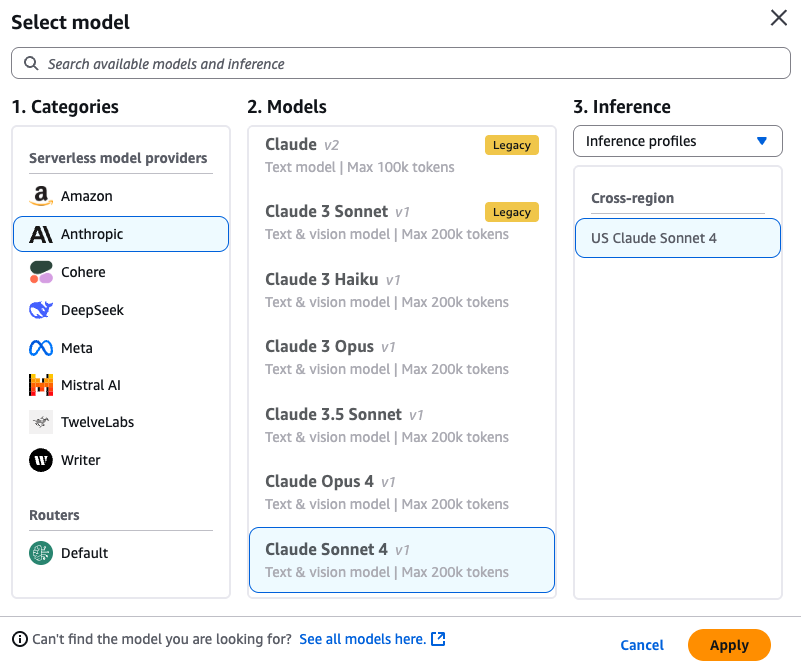

{{variable}}. Within the Check variables part, enter values to switch the variables with when testing. - Choose a Goal Mannequin in your optimized immediate. For instance, Anthropic’s Claude Sonnet 4.

- Select the Optimize button to generate an optimized immediate for the goal mannequin.

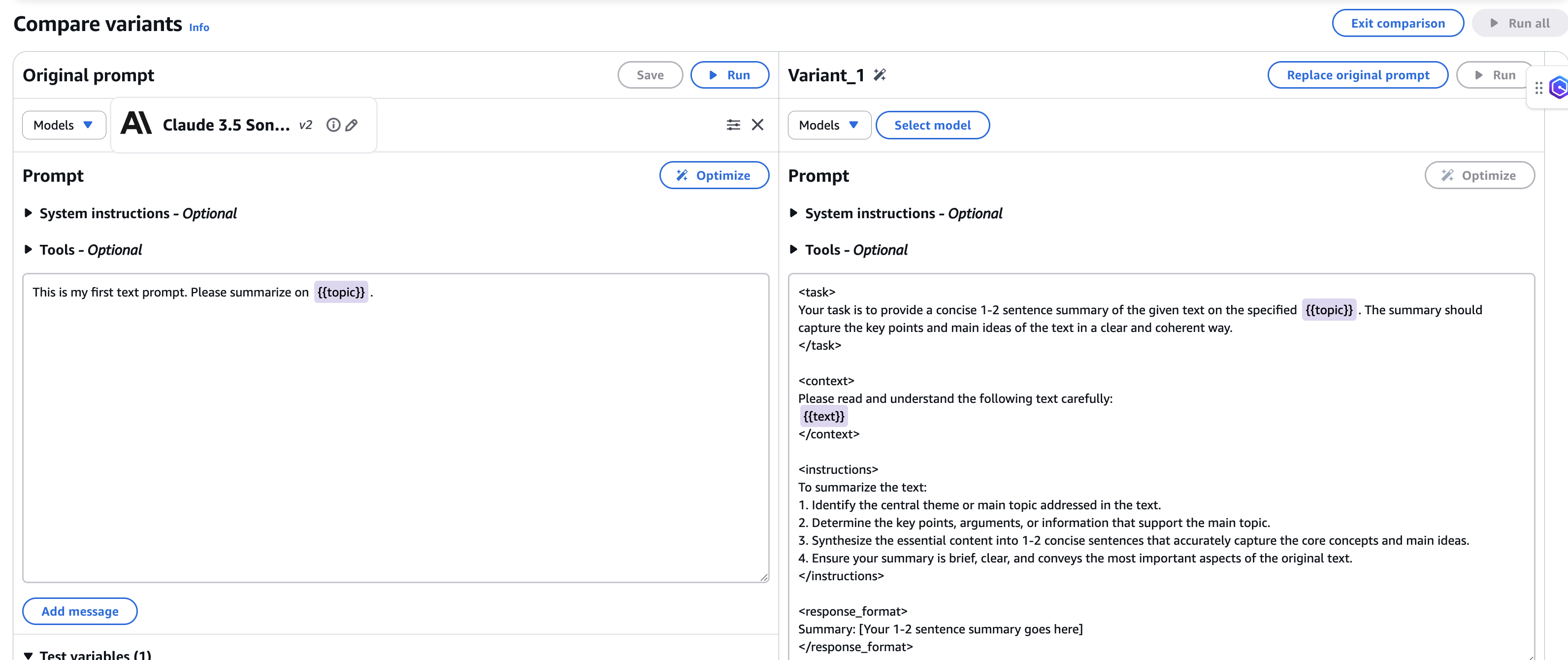

6. After the immediate is generated, the comparability window of the optimized immediate for the goal mannequin is proven along with your unique immediate from supply mannequin.

7. Save the brand new optimized immediate earlier than exiting evaluating mode.

Option B) Optimize a prompt using the Amazon Bedrock API

We can also use the Amazon Bedrock API to generate a migrated prompt by sending an OptimizePrompt request to an Agents for Amazon Bedrock runtime endpoint. Provide the prompt to optimize in the input object and specify the model to optimize for in the targetModelId field.

The response stream returns the following events:

- analyzePromptEvent: Appears when the prompt has finished being analyzed. Contains a message describing the analysis of the prompt.

- optimizedPromptEvent: Appears when the prompt has finished being rewritten. Contains the optimized prompt.

Run the following code sample to optimize a prompt:
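A minimal sketch of this call, assuming boto3's `bedrock-agent-runtime` client and its `optimize_prompt` method. The helper that walks the response stream is written against the two event types described above; the function names are hypothetical, and `target_model_id` should be a model ID enabled in your account.

```python
def build_optimize_prompt_input(prompt_text: str) -> dict:
    """Wrap a plain-text prompt in the input structure expected by OptimizePrompt."""
    return {"textPrompt": {"text": prompt_text}}


def collect_optimize_prompt_events(event_stream):
    """Split the response stream into analysis messages and the optimized prompt text."""
    analysis, optimized = [], None
    for event in event_stream:
        if "analyzePromptEvent" in event:
            analysis.append(event["analyzePromptEvent"]["message"])
        elif "optimizedPromptEvent" in event:
            prompt = event["optimizedPromptEvent"]["optimizedPrompt"]
            optimized = prompt["textPrompt"]["text"]
    return analysis, optimized


def optimize_prompt(prompt_text: str, target_model_id: str, client=None):
    """Call OptimizePrompt on the Agents for Amazon Bedrock runtime endpoint."""
    if client is None:
        import boto3  # imported lazily so the helpers above work without boto3 installed
        client = boto3.client("bedrock-agent-runtime")
    response = client.optimize_prompt(
        input=build_optimize_prompt_input(prompt_text),
        targetModelId=target_model_id,
    )
    return collect_optimize_prompt_events(response["optimizedPrompt"])
```

For example, `analysis, new_prompt = optimize_prompt(source_prompt, target_model_id)` returns the analysis messages and the rewritten prompt, which can then be reviewed and saved before answer generation.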

Anthropic Metaprompt tool

The Metaprompt is a prompt optimization tool offered by Anthropic in which Claude is prompted to write prompt templates on the user's behalf based on a topic or task. We can use it to instruct Claude on how best to construct a prompt that achieves a given objective consistently and accurately.

The key steps are:

- Specify the raw prompt template, explain the task, and specify the input variables and the expected output.

- Run the Metaprompt with a Claude LLM such as Claude 3 Sonnet, using the raw prompt from the source model as input.

- A new prompt template is generated with an optimized set of instructions and a format that follows Claude's best practices.
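The following sketch outlines these steps with the Converse API. It assumes, as in Anthropic's published metaprompt, that input variables are written as `{$VARIABLE}` placeholders and that Claude returns the new prompt inside `<Instructions>` tags. `METAPROMPT_TEMPLATE` is a hypothetical, heavily abbreviated stand-in; the full metaprompt text from the Prompt Migration notebook would replace it.

```python
import re
from typing import List

# Hypothetical, abbreviated stand-in for the real metaprompt text.
METAPROMPT_TEMPLATE = (
    "Today you will be writing instructions for an AI assistant.\n"
    "...\n"
    "<Task>\n{task}\n</Task>\n"
    "<Inputs>\n{inputs}\n</Inputs>\n"
    "Write the new prompt inside <Instructions></Instructions> tags."
)


def build_metaprompt(task: str, variables: List[str]) -> str:
    """Fill the metaprompt with the task description and {$VARIABLE} input names."""
    inputs = "\n".join("{$" + v.upper() + "}" for v in variables)
    return METAPROMPT_TEMPLATE.format(task=task, inputs=inputs)


def extract_instructions(response_text: str) -> str:
    """Return the text of the last <Instructions>...</Instructions> block, or '' if absent."""
    matches = re.findall(r"<Instructions>(.*?)</Instructions>", response_text, re.DOTALL)
    return matches[-1].strip() if matches else ""


def find_variables(prompt: str) -> List[str]:
    """List {$VARIABLE} placeholders in the generated prompt, in first-seen order."""
    seen: List[str] = []
    for name in re.findall(r"\{\$([A-Z_]+)\}", prompt):
        if name not in seen:
            seen.append(name)
    return seen


def run_metaprompt(task: str, variables: List[str], model_id: str, client=None) -> str:
    """Send the filled metaprompt to a Claude model on Amazon Bedrock and extract the new prompt."""
    if client is None:
        import boto3  # lazy import so the pure helpers work without boto3
        client = boto3.client("bedrock-runtime")
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": build_metaprompt(task, variables)}]}],
        inferenceConfig={"maxTokens": 4096, "temperature": 0.0},
    )
    text = "".join(b.get("text", "") for b in response["output"]["message"]["content"])
    return extract_instructions(text)
```

The model ID in a call such as `run_metaprompt(task, ["question", "context"], "anthropic.claude-3-sonnet-20240229-v1:0")` is only an example; use whichever Claude model is enabled through Model access. Checking `find_variables` on the result is a quick way to confirm that every source input variable survived the rewrite.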

Benefits of using metaprompts:

- Prompts are much more detailed and comprehensive than human-created prompts

- Helps increase the likelihood that best practices are followed when prompting Anthropic models

- Allows specifying key details such as preferred tone

- Improves the quality and consistency of the model's outputs

The Metaprompt tool is particularly useful for learning Claude's preferred prompt style, or as a way to generate multiple prompt versions for a given task, which simplifies testing a variety of initial prompt versions for the target use case.

To implement this process, follow the steps in the Prompt Migration Jupyter notebook to migrate source model prompts to target model prompts. This notebook requires Claude 3 Sonnet to be enabled as the LLM in Amazon Bedrock through Model access to generate the converted prompts.

The following is an example of a source model prompt in a financial Q&A use case:

After completing the steps in the notebook, we can automatically get the optimized prompt for the target model. The following example generates a prompt optimized for Anthropic's Claude LLMs.

As shown in the preceding example, the prompt style and format are automatically converted to follow the best practices of the target model, such as using XML tags and regrouping the instructions to be clearer and more direct.

Generate results

Answer generation during migration is an iterative process. The general flow consists of passing migrated prompts and context to the LLM and generating an answer. Multiple iterations are needed to compare different prompt versions, multiple LLMs, and different configurations of each LLM so that we can select the best combination. Usually, the entire pipeline of a generative AI system (such as a RAG-based chatbot) isn't migrated; only a portion of the pipeline is. Thus, it's important that a fixed version of the remaining components in the pipeline is available. For example, in a RAG-based question and answer (Q&A) system, we might migrate only the answer generation component of the pipeline. As a result, we can continue to use the context already retrieved by the existing production pipeline.

As a best practice, use the standard invocation method for Amazon Bedrock models (in the Migration code repository) to generate metadata such as latency, time to first token, input tokens, and output tokens in addition to the final response. These metadata fields are added as new columns at the end of the results table and used for evaluation. The output format and column names should be aligned with the evaluation metric requirements. The following table shows an example of the sample data before it is fed into the evaluation pipeline for a RAG use case.

Example of sample data before evaluation:

| Column | Value |
|---|---|
| financebench_id | financebench_id_03029 |
| doc_name | 3M_2018_10K |
| doc_link | https://investors.3m.com/financials/sec-filings/content/0001558370-19-000470/0001558370-19-000470.pdf |
| doc_period | 2018 |
| question_type | metrics-generated |
| question | What is the FY2018 capital expenditure amount (in USD millions) for 3M? Give a response to the question by relying on the details shown in the cash flow statement. |
| ground_truths | ['$1577.00'] |
| evidence_text | … |
| page_number | 60 |
| llm_answer | According to the cash flow statement in the 3M 2018 10-K report, the capital expenditure (purchases of property, plant and equipment) for fiscal year |
| llm_contexts | … |
| latency_meta_time | 0.92706 |
| latency_meta_kwd | 0.60666 |
| latency_meta_comb | 1.44876 |
| latency_meta_ans_gen | 2.48371 |
| input_tokens | 21147 |
| output_tokens | 401 |
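To capture latency and token columns like those shown above in a single call, one option is to stream the response and time it on the client. This sketch assumes the Converse streaming API (`converse_stream` in boto3), whose `contentBlockDelta` events carry text and whose final `metadata` event carries token usage. The output keys `time_to_first_token` and `total_latency` are hypothetical column names, not the ones used in the Migration code repository, and the injectable `clock` exists only to make the sketch testable.

```python
import time
from typing import Callable, Iterable


def consume_stream(events: Iterable[dict], start: float,
                   clock: Callable[[], float] = time.perf_counter) -> dict:
    """Assemble the answer plus timing and token metadata from ConverseStream events.

    `start` is the clock reading taken just before the request was sent.
    """
    parts, ttft, usage = [], None, {}
    for event in events:
        if "contentBlockDelta" in event:
            text = event["contentBlockDelta"]["delta"].get("text", "")
            if text and ttft is None:
                ttft = clock() - start  # first generated text has arrived
            parts.append(text)
        elif "metadata" in event:
            usage = event["metadata"].get("usage", {})
    return {
        "llm_answer": "".join(parts),
        "time_to_first_token": ttft,
        "total_latency": clock() - start,
        "input_tokens": usage.get("inputTokens"),
        "output_tokens": usage.get("outputTokens"),
    }


def invoke_with_metadata(client, model_id: str, prompt: str,
                         clock: Callable[[], float] = time.perf_counter) -> dict:
    """Stream one request and return a results row for the evaluation table."""
    start = clock()
    response = client.converse_stream(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
    )
    return consume_stream(response["stream"], start, clock)
```

Client-side timing includes network overhead, which is usually what users experience; the service-reported latency in the final `metadata` event can be recorded as well if a server-side view is needed.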

Evaluation

Evaluation is one of the most important parts of the migration process because it directly connects to the sign-off criteria and determines the success of the migration. In most cases, evaluation focuses on metrics in three major categories: accuracy and quality, latency, and cost. Either automated evaluation or human evaluation can be used to assess the accuracy and quality of the model response.

Automated evaluation

The integration of LLMs into the quality evaluation process represents a significant advancement in assessment methodology. These models excel at conducting comprehensive evaluations across multiple dimensions, including contextual relevance, coherence, and factual accuracy, while maintaining consistency and scalability. Two major categories of automated evaluation metrics are introduced here:

- Predefined metrics: Metrics predefined in LLM-based evaluation frameworks such as Ragas, DeepEval, and Amazon Bedrock Evaluations, or based directly on non-LLM algorithms, such as those introduced in the Evaluation frameworks and metrics selection section.

- Custom metrics: Customized metrics with user-provided definitions, evaluation criteria, or prompts that use an LLM as an impartial judge.

Predefined metrics

These metrics either use LLM-based evaluation frameworks such as Ragas and DeepEval or are based directly on non-LLM algorithms. They are widely adopted, predefined, and offer limited options for customization. Ragas and DeepEval are the two LLM-based evaluation frameworks that we used as examples in the Migration code repository.

- Ragas: Ragas is an open source framework that helps evaluate RAG pipelines. RAG denotes a class of LLM applications that use external data to augment the LLM's context. Ragas provides a variety of LLM-powered automated evaluation metrics. The following metrics are introduced in the Ragas evaluation notebook in the Migration code repository.

- Answer precision: Measures how accurately the model's generated answer contains relevant and correct claims compared to the ground truth answer.

- Answer recall: Evaluates the completeness of the answer, that is, the model's ability to retrieve the right claims when compared to the ground truth answer. High recall indicates that the answer thoroughly covers the necessary details according to the ground truth.

- Answer correctness: Gauges the accuracy of the generated answer when compared to the ground truth. This evaluation relies on the ground truth and the answer, with scores ranging from 0 to 1. A higher score indicates closer alignment between the generated answer and the ground truth, signifying better correctness.

- Answer similarity: Assesses the semantic resemblance between the generated answer and the ground truth. This evaluation is based on the ground truth and the answer, with values ranging from 0 to 1. A higher score indicates better alignment between the generated answer and the ground truth.

The following table shows sample data output after Ragas evaluation.

| Column | Value |
|---|---|
| financebench_id | financebench_id_03029 |
| doc_name | 3M_2018_10K |
| doc_link | https://investors.3m.com/financials/sec-filings/content/0001558370-19-000470/0001558370-19-000470.pdf |
| doc_period | 2018 |
| question_type | metrics-generated |
| question | What is the FY2018 capital expenditure amount (in USD millions) for 3M? |
| ground_truths | ['$1577.00'] |
| evidence_text | … |
| page_number | 60 |
| llm_answer | According to the cash flow statement in the 3M 2018 10-K report, the capital expenditure (purchases of property, plant and equipment) for fiscal year 2018 was $1,577 million. … |
| llm_contexts | … |
| latency_meta_time | 0.92706 |
| latency_meta_kwd | 0.60666 |
| latency_meta_comb | 1.44876 |
| latency_meta_ans_gen | 2.48371 |
| input_tokens | 21147 |
| output_tokens | 401 |
| answer_precision | 0 |
| answer_recall | 1 |
| answer_correctness | 0.16818 |
| answer_similarity | 0.33635 |
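To make the claim-based definitions concrete, the following sketch computes precision, recall, and a combined correctness score once claims have been classified as true positives (TP), false positives (FP), or false negatives (FN). In Ragas, an LLM extracts and classifies the claims; here the counts are supplied directly, answer similarity is taken to be the cosine similarity of two embedding vectors, and the 0.75/0.25 weighting is an illustrative choice rather than the configuration that produced the table above.

```python
import math
from typing import Sequence, Tuple


def claim_precision(tp: int, fp: int) -> float:
    """Share of claims in the generated answer that are supported by the ground truth."""
    return tp / (tp + fp) if tp + fp else 0.0


def claim_recall(tp: int, fn: int) -> float:
    """Share of ground-truth claims that appear in the generated answer."""
    return tp / (tp + fn) if tp + fn else 0.0


def f1(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall) if precision + recall else 0.0


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Semantic-similarity proxy between answer and ground-truth embeddings."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def answer_correctness(tp: int, fp: int, fn: int,
                       answer_emb: Sequence[float], truth_emb: Sequence[float],
                       weights: Tuple[float, float] = (0.75, 0.25)) -> float:
    """Weighted mix of claim-level F1 and semantic similarity (illustrative weights)."""
    factual = f1(claim_precision(tp, fp), claim_recall(tp, fn))
    similarity = cosine_similarity(answer_emb, truth_emb)
    return (weights[0] * factual + weights[1] * similarity) / sum(weights)
```

Separating the factual and semantic parts makes it easier to see why a verbose answer can score high on recall but low on precision, as in the sample row above.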

- DeepEval: DeepEval is an open source LLM evaluation framework. It's similar to Pytest but specialized for unit testing LLM outputs. DeepEval incorporates the latest research to evaluate LLM outputs based on metrics such as G-Eval, hallucination, answer relevancy, Ragas, and so on. It uses LLMs and various other natural language processing (NLP) models that run locally on your machine for evaluation. In DeepEval, a metric serves as a standard of measurement for evaluating the performance of an LLM output based on specific criteria. DeepEval offers a wide range of default metrics to get started quickly. The following metrics are introduced in the DeepEval evaluation notebook in the Migration code repository.

- Answer relevancy: The answer relevancy metric measures the quality of your RAG pipeline's generator by evaluating how relevant the `actual_output` of your LLM application is compared to the provided input.

- Faithfulness: The faithfulness metric measures the quality of your RAG pipeline's generator by evaluating whether the `actual_output` factually aligns with the contents of your `retrieval_context`.

- Toxicity: The toxicity metric is a referenceless metric that evaluates toxicity in your LLM outputs.

- Bias: The bias metric determines whether your LLM output contains gender, racial, or political bias.


- Amazon Bedrock Evaluations: Amazon Bedrock Evaluations is a set of tools for evaluating, comparing, and selecting foundation models, including custom and third-party models, for your specific use cases. It supports evaluation of both model-only and RAG pipelines. We can use Amazon Bedrock Evaluations through either the AWS Management Console or the API. Amazon Bedrock Evaluations offers an extensive list of built-in metrics for both standalone LLMs and full RAG pipelines, including but not limited to:

- Accuracy: Measures the correctness of model outputs.

- Faithfulness: Checks for factual accuracy and the absence of hallucinations.

- Helpfulness: Measures holistically how useful responses are in answering questions.

- Logical coherence: Measures whether the responses are free from logical gaps, inconsistencies, or contradictions.

- Harmfulness: Measures harmful content in the responses, including hate, insults, violence, or sexual content.

- Stereotyping: Measures generalized statements about individuals or groups of people in responses.

- Refusal: Measures how evasive the responses are in answering questions.

- Following instructions: Measures how well the model's response respects the exact directions found in the prompt.

- Professional style and tone: Measures how appropriate the response's style, formatting, and tone are for a professional setting.
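For DeepEval, a minimal sketch that maps rows of the results table to test cases and scores the four metrics listed above. It assumes the `deepeval` package (`LLMTestCase` and the `AnswerRelevancyMetric`, `FaithfulnessMetric`, `ToxicityMetric`, and `BiasMetric` classes) and the column names from the example tables; the two helper functions are hypothetical. The DeepEval import is deferred so the mapping helper works without DeepEval installed.

```python
from typing import Dict, List


def to_test_case_kwargs(row: Dict) -> Dict:
    """Map one results-table row to deepeval LLMTestCase keyword arguments.

    Assumes columns question, llm_answer, llm_contexts (retrieved passages),
    and ground_truths (a list of strings), as in the example tables.
    """
    contexts = row.get("llm_contexts") or []
    if isinstance(contexts, str):
        contexts = [contexts]
    truths = row.get("ground_truths") or []
    return {
        "input": row["question"],
        "actual_output": row["llm_answer"],
        "retrieval_context": list(contexts),
        "expected_output": truths[0] if truths else None,
    }


def score_with_deepeval(rows: List[Dict], threshold: float = 0.5) -> List[Dict]:
    """Score each row with four DeepEval metrics; needs deepeval installed and a judge model."""
    from deepeval.metrics import AnswerRelevancyMetric, BiasMetric, FaithfulnessMetric, ToxicityMetric
    from deepeval.test_case import LLMTestCase

    scores = []
    for row in rows:
        case = LLMTestCase(**to_test_case_kwargs(row))
        metrics = {
            "answer_relevancy": AnswerRelevancyMetric(threshold=threshold),
            "faithfulness": FaithfulnessMetric(threshold=threshold),
            "toxicity": ToxicityMetric(threshold=threshold),
            "bias": BiasMetric(threshold=threshold),
        }
        result = {}
        for name, metric in metrics.items():
            metric.measure(case)  # calls the judge LLM
            result[name] = metric.score
        scores.append(result)
    return scores
```

By default, DeepEval calls an OpenAI model as the judge; each metric accepts a `model` argument to use a different one. For toxicity and bias, a lower score is better, so their thresholds act as maximums rather than minimums.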

Custom metrics

These metrics are user-defined and are typically tailored to specific tasks or domains. One popular method is to use an LLM as a judge that provides an evaluation score for an answer based on a user-provided prompt. In contrast to predefined metrics, this method is highly customizable because we can give the prompt task-specific evaluation requirements. For example, we can ask the LLM to use a 10-point scoring system and comprehensively evaluate the answer against the ground truth across different dimensions, such as correctness of information, contextual relevance, depth and comprehensiveness of detail, and overall utility and helpfulness.

The following is an example of a customized prompt for LLM as a judge:
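The following is a hypothetical judge prompt along these lines, with helpers that fill it in and parse the score. The XML tag names and the 1 to 10 scale are assumptions chosen to match the dimensions described above; the judge call itself can use any invocation method, such as the Converse API.

```python
import re
from typing import Optional

# Hypothetical judge prompt; adapt the dimensions and scale to the SME's criteria.
JUDGE_PROMPT = """You are an impartial judge evaluating an answer to a financial question.

<question>{question}</question>
<ground_truth>{ground_truth}</ground_truth>
<answer>{answer}</answer>

Score the answer from 1 to 10 against the ground truth, considering:
1. Correctness of information (numbers, dates, entities)
2. Contextual relevance to the question
3. Depth and comprehensiveness of detail
4. Overall utility and helpfulness

Explain your reasoning inside <reasoning></reasoning> tags, then give only the
integer score inside <score></score> tags."""


def build_judge_prompt(question: str, ground_truth: str, answer: str) -> str:
    """Fill the judge prompt for one sample."""
    return JUDGE_PROMPT.format(question=question, ground_truth=ground_truth, answer=answer)


def parse_judge_score(judge_output: str) -> Optional[int]:
    """Extract the 1-10 score; return None if missing or out of range so the sample can be re-judged."""
    match = re.search(r"<score>\s*(\d+)\s*</score>", judge_output)
    if not match:
        return None
    score = int(match.group(1))
    return score if 1 <= score <= 10 else None
```

Asking for the reasoning before the score tends to make the judgment easier to audit, and returning `None` rather than a default keeps malformed judge outputs from silently skewing averages.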

Human evaluation

While quantitative metrics provide valuable data points, a comprehensive qualitative evaluation based on expert guidelines and SME feedback is also crucial to validate model performance. Effective qualitative assessment typically covers several key areas, including consistency of response theme and tone, detection of inappropriate or unwanted content, domain-specific accuracy, date- and time-related issues, and so on. By using SME expertise, we can identify subtle nuances and potential issues that might escape quantitative analysis. The Error analysis section lists some aspects that SMEs can use as evaluation criteria, which can also serve as guidance for validating and preparing ground truths. We can use tools such as Amazon Bedrock Evaluations for human evaluation.

Although human evaluation or user feedback collected from a UI can directly reflect the SME's evaluation criteria, it's not as efficient, scalable, or objective as automated evaluation methods. Thus, a generative AI system development lifecycle might start with human evaluation but eventually moves toward automated evaluation. Human evaluation can be used when automated evaluation isn't meeting baseline targets or predefined evaluation criteria.

Latency metrics

When migrating language models, runtime performance metrics are crucial indicators of operational success. Total latency and time to first token (TTFT) are the most common metrics for latency measurement.

- Total latency is an end-to-end metric that measures the total duration required for complete response generation, from the initial prompt to the final output. It encompasses processing the input, generating the response, and delivering it to the user. Total latency affects user satisfaction, system throughput, and resource utilization.

- Time to first token (TTFT) quantifies the initial response speed, specifically, the duration until the model generates its first output token. This metric significantly affects perceived responsiveness and user experience, especially in interactive applications. TTFT is particularly important in conversational AI and real-time systems (applications such as chatbots, virtual assistants, and interactive search systems) where users expect immediate feedback. A low TTFT creates an impression of system responsiveness and can greatly enhance user engagement.

If the results generation step requires multiple LLM calls, breakdown latency metrics should be provided, because only the latency of the submodule affected by the LLM migration should be compared in the subsequent model comparison step.
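When per-stage timings are recorded, as in the latency_meta_* columns of the earlier example table, the comparison can be restricted to the migrated stage. This sketch summarizes one column with the mean and nearest-rank percentiles; treating `latency_meta_ans_gen` as the answer-generation stage is an assumption based on its name.

```python
import math
import statistics
from typing import Dict, Iterable, List


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile (pct in 0-100) of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize_stage_latency(rows: Iterable[Dict], column: str) -> Dict[str, float]:
    """Summarize one latency column, such as only the answer-generation stage."""
    values = [float(r[column]) for r in rows if r.get(column) is not None]
    return {
        "mean": statistics.mean(values),
        "p50": percentile(values, 50),
        "p90": percentile(values, 90),
        "n": len(values),
    }
```

Running `summarize_stage_latency(rows, "latency_meta_ans_gen")` on the source and target results keeps retrieval and keyword-extraction time, which the migration does not change, out of the comparison. Reporting a tail percentile alongside the mean matters because interactive users notice slow outliers.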

Cost calculation

For LLM invocation, the cost can be calculated from the number of input and output tokens and the corresponding price per token:

cost = (number of input tokens × price per input token) + (number of output tokens × price per output token)

The price per input and output token for each model can be found on the Amazon Bedrock Pricing page.
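A small sketch of this calculation, with prices expressed per 1,000 tokens. The prices in the usage comment are hypothetical placeholders; take the actual values for each model and Region from Amazon Bedrock Pricing, converting if they are listed per million tokens.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class TokenPrice:
    """On-demand price in USD per 1,000 tokens (fill in from Amazon Bedrock Pricing)."""
    input_per_1k: float
    output_per_1k: float


def invocation_cost(input_tokens: int, output_tokens: int, price: TokenPrice) -> float:
    """cost = input tokens x input price + output tokens x output price."""
    return input_tokens / 1000 * price.input_per_1k + output_tokens / 1000 * price.output_per_1k


def average_cost(rows: Iterable[Dict], price: TokenPrice) -> float:
    """Mean per-request cost over result rows with input_tokens and output_tokens columns."""
    costs = [invocation_cost(r["input_tokens"], r["output_tokens"], price) for r in rows]
    return sum(costs) / len(costs)


# Usage with hypothetical prices:
# price = TokenPrice(input_per_1k=0.003, output_per_1k=0.015)
# invocation_cost(21147, 401, price)
```

Because RAG prompts carry large retrieved contexts, the input term often dominates; in the example row, 21,147 input tokens against 401 output tokens means input pricing matters more than output pricing for this use case.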

Model comparison report: Performance, latency, and cost

We can use the Generate Comparison Report notebook in the code repository to automatically generate a final comparison report that gives a holistic view of the source and target models.

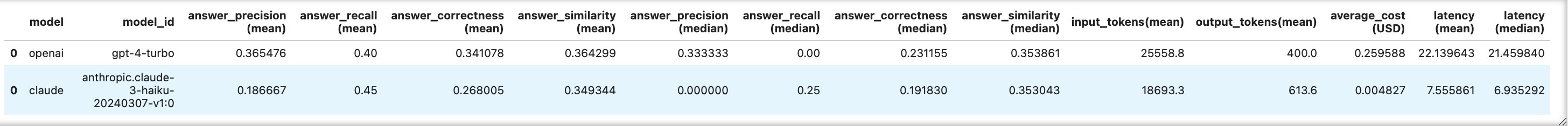

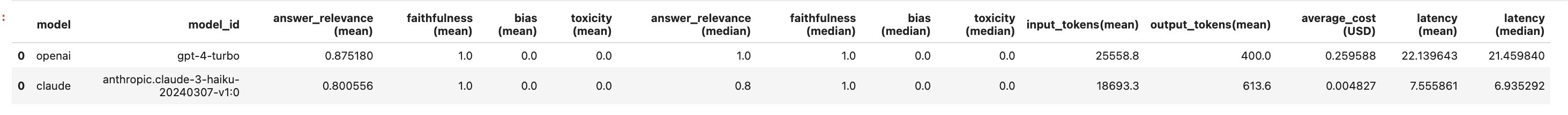

We can also use the evaluation reports generated by Ragas and DeepEval, with their corresponding metrics, to compare the models under each of the two evaluation frameworks. This gives a side-by-side comparison of the average input and output tokens and the average cost and latency for the selected models. As shown in the following figures, running this notebook produces two comparison tables for the source and target models, one for each of the two selected evaluation frameworks.

Ragas

DeepEval
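If the per-row results from each framework are loaded as dictionaries, the side-by-side view can be sketched as follows. The `cost` and `latency` keys and the metric column names are assumptions about how the results are stored; the Generate Comparison Report notebook may organize them differently.

```python
from statistics import mean
from typing import Dict, List


def summarize_model(rows: List[Dict], metric_columns: List[str]) -> Dict[str, float]:
    """Average tokens, cost, latency, and quality metrics over one model's result rows."""
    summary = {
        "avg_input_tokens": mean(r["input_tokens"] for r in rows),
        "avg_output_tokens": mean(r["output_tokens"] for r in rows),
        "avg_cost": mean(r["cost"] for r in rows),
        "avg_latency": mean(r["latency"] for r in rows),
    }
    for col in metric_columns:
        summary[f"avg_{col}"] = mean(r[col] for r in rows)
    return summary


def comparison_table(source: Dict[str, float], target: Dict[str, float]) -> str:
    """Render a side-by-side Markdown table with the target-minus-source delta."""
    lines = ["| metric | source | target | delta |", "|---|---|---|---|"]
    for key in source:
        delta = target[key] - source[key]
        lines.append(f"| {key} | {source[key]:.4f} | {target[key]:.4f} | {delta:+.4f} |")
    return "\n".join(lines)
```

Showing the signed delta makes the trade-off explicit: a target model that improves correctness but adds latency or cost shows up immediately in one row each.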

Further optimization

When improving and optimizing a generative AI production pipeline during an LLM migration or upgrade, users typically focus on two key areas:

- Quality of generated answers

- Latency of response generation

Prompt optimization

To optimize the quality of the generated answers, we need to understand the errors by conducting error analysis and identifying the items to address through prompt optimization.

Error analysis

Getting the best response from a candidate LLM without any optimization is unlikely. Conducting error analysis and focusing on the aspects where errors cluster helps us evaluate the quality of generated answers and identify opportunities for improvement. Error analysis also provides a path for manual prompt engineering to improve quality. After gathering error analysis insights and feedback from SMEs, an iterative prompt optimization process can be performed. To start, formulate the error analysis insights and SME feedback into clear guidance or criteria. Ideally, these criteria should be clarified before starting the prompt migration. They serve as the core considerations for further prompt optimization, helping to provide consistent, high-quality responses that meet the SME's bar. The following is an example of guidance and criteria we might receive from an SME.

Example of an answer formatting style guide from an SME in a financial Q&A use case:

- Correctness

- Make sure extracted numbers are correct. All numbers should match the ground truth.

- Make sure all claims from the ground truth are present in the LLM answer.

- Generated responses shouldn't add irrelevant sentences.

- Time

- Generated answers must correctly recognize the fiscal year and all needed quarters from the question.

- In the answer, ordering quarters from most recent to earliest is preferred.

- When the question asks about year-over-year changes, the answer should specify the overall year or the last quarter, not quarter-by-quarter results.

- When the answer comes from a single news document, include the date of publication in the answer.

- Theme and tone

- Use professional language mirroring the style of a newspaper.

- Format and excerpts

- When the user query asks for a list, present the list in bullet point format.

- When the user query asks for excerpts, provide a summary statement followed by a bulleted list of unedited excerpts taken directly from the document.

- Answers to queries that ask for a comprehensive list should ideally include bullet points.

- Answers to queries that ask for topics or themes with subjective categories should ideally include a bulleted list.

- Don't start the answer by referencing the context (for example, "according to the context").

- Length

- Most responses should be between 30–150 words. Longer answers are acceptable when the question involves multiple entities or requires subcategories within the response.
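Some of these criteria can be checked mechanically before involving an LLM judge or an SME. The following sketch flags three of them: numbers in the ground truth that are missing from the answer, answers outside the 30 to 150 word range, and answers that open by referencing the context. The regular expressions and the list of openers are assumptions and will miss some cases, such as amounts written in words or expressed in different units.

```python
import re
from typing import Dict, List

# Hypothetical list of openers the SME asked to avoid.
CONTEXT_OPENERS = ("according to the context", "based on the context", "in accordance with the context")


def word_count(text: str) -> int:
    """Count whitespace-separated tokens."""
    return len(re.findall(r"\S+", text))


def extract_numbers(text: str) -> List[float]:
    """Pull numeric values, ignoring $ signs and thousands separators."""
    return [float(n.replace(",", "")) for n in re.findall(r"\d[\d,]*(?:\.\d+)?", text)]


def check_answer(answer: str, ground_truth: str,
                 min_words: int = 30, max_words: int = 150) -> Dict[str, bool]:
    """Flag SME rules that can be checked without an LLM; the rest need a judge or an SME."""
    truth_numbers = set(extract_numbers(ground_truth))
    answer_numbers = set(extract_numbers(answer))
    n_words = word_count(answer)
    return {
        "numbers_match_ground_truth": truth_numbers <= answer_numbers,
        "length_in_range": min_words <= n_words <= max_words,
        "no_context_opener": not answer.strip().lower().startswith(CONTEXT_OPENERS),
    }
```

A failed length check is a flag for review rather than an automatic rejection, because the guide allows longer answers for multi-entity questions.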

Optimization techniques

After clear criteria are established, several optimization techniques can be used to address them, such as:

- Prompt engineering to specify certain criteria in the prompt instructions

- Few-shot learning to specify the answer format and provide examples of generated answers

- Incorporating meta-information that could help the LLM understand the context of the task and question

- Pre- or post-processing to enforce the output format or resolve common error patterns
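As a sketch of two of these techniques, the following builds a few-shot prompt with XML-tagged examples and applies a post-processing step that strips a leading reference to the context. The tag names, the prefix pattern, and the function names are illustrative assumptions.

```python
import re
from typing import List, Tuple

# Hypothetical pattern for openers such as "According to the context," or "Based on the provided context".
_CONTEXT_PREFIX = re.compile(r"^\s*(according to|based on) the (provided )?context,?\s*", re.IGNORECASE)


def build_few_shot_prompt(instructions: str, examples: List[Tuple[str, str]],
                          question: str, context: str) -> str:
    """Assemble criteria-bearing instructions, XML-tagged few-shot examples, context, and question."""
    shots = "\n".join(
        f"<example>\n<question>{q}</question>\n<answer>{a}</answer>\n</example>" for q, a in examples
    )
    return (
        f"{instructions}\n\n<examples>\n{shots}\n</examples>\n\n"
        f"<context>\n{context}\n</context>\n\n<question>{question}</question>"
    )


def post_process(answer: str) -> str:
    """Remove a leading 'According to the context,' style phrase and re-capitalize."""
    cleaned = _CONTEXT_PREFIX.sub("", answer, count=1).strip()
    return cleaned[:1].upper() + cleaned[1:]
```

Few-shot examples steer the format the model produces, while post-processing is a safety net for residual patterns; if post-processing fires often, that is usually a signal to revisit the prompt.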

Latency optimization

There are several possible ways to reduce latency:

Optimizing prompts to generate shorter answers

The latency of an LLM is directly affected by the number of output tokens, because each additional token requires a separate forward pass through the model, which increases processing time. As more tokens are generated, latency grows, especially in larger models such as Opus 4. To reduce latency, we can add instructions to the prompt telling the model to avoid lengthy answers, unrelated explanations, and filler words.
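On Amazon Bedrock, a conciseness instruction can be placed in the system prompt of a Converse API request. The following sketch only builds the request arguments, so it runs without AWS credentials. The model ID, the instruction wording, and the 300-token cap are placeholder assumptions.

Note that `maxTokens` is a hard limit that truncates output rather than making the model answer more concisely. The prompt instruction therefore remains the main lever.

```python
# Hypothetical instruction text; tune it for your own use case and criteria.
CONCISE_INSTRUCTION = (
    "Answer in at most 150 words. Do not add background explanations, "
    "restate the question, or use filler phrases."
)


def build_converse_request(model_id: str, question: str, max_tokens: int = 300) -> dict:
    """Build keyword arguments for the bedrock-runtime Converse API."""
    return {
        "modelId": model_id,
        "system": [{"text": CONCISE_INSTRUCTION}],
        "messages": [{"role": "user", "content": [{"text": question}]}],
        # Safety net only: generation stops once this many output tokens are produced.
        "inferenceConfig": {"maxTokens": max_tokens},
    }
```

The result can be passed as `boto3.client("bedrock-runtime").converse(**request)`. The response's `usage` and `metrics` fields report output token counts and latency, which makes the effect of the instruction measurable.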

Using provisioned throughput

Throughput refers to the number and rate of inputs and outputs that a model processes and returns. Purchasing provisioned throughput, which provides a higher level of throughput for a dedicated hosted model, can potentially reduce latency compared to using on-demand models. Although it can’t guarantee lower latency, it consistently helps prevent throttled requests.
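Because provisioned throughput can't guarantee lower latency, it's worth measuring the effect rather than assuming it. The following minimal sketch assumes you have already collected per-request latencies (in milliseconds) for the same prompts under both on-demand and provisioned configurations. `summarize_latency` is a hypothetical helper built on Python's standard `statistics` module.

```python
import statistics


def summarize_latency(samples_ms: list[float]) -> dict:
    """Return p50 and p90 latency for a list of per-request latencies."""
    # quantiles(n=10) returns the 9 decile cut points: index 4 is the median
    # and index 8 is the 90th percentile.
    deciles = statistics.quantiles(samples_ms, n=10, method="inclusive")
    return {"p50": deciles[4], "p90": deciles[8]}
```

Calling the helper once per configuration and comparing the two results shows whether the tail (p90) improves, not just the median. Tail latency is often where throttling and queuing show up.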

Improvement lifecycle

It’s unlikely that a candidate LLM can achieve its best performance without any optimization. It’s also typical for the optimization processes described previously to be carried out iteratively. Thus, the improvement (optimization) lifecycle is important for improving performance and for identifying gaps or defects in the pipeline or data. The improvement lifecycle typically includes:

- Prompt optimization

- Answer generation

- Evaluation metrics generation

- Error analysis

- Sample label verification

- Dataset updates to address sample defects and incorrect labels
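The automatable part of this lifecycle is a loop over prompt variants. The following minimal sketch assumes you supply your own answer-generation and scoring functions. `improve`, `generate`, and `score` are hypothetical names. Error analysis, label verification, and dataset updates remain manual steps between runs.

```python
from typing import Callable, Iterable, Optional


def improve(prompts: Iterable[str],
            dataset: list[tuple[str, str]],
            generate: Callable[[str, str], str],
            score: Callable[[str, str], float],
            target: float) -> tuple[Optional[str], float]:
    """Try prompt variants in order; return the best prompt and its mean score."""
    best_prompt, best_score = None, float("-inf")
    for prompt in prompts:
        # Answer generation and metric computation for every
        # (question, ground truth) sample in the evaluation dataset.
        scores = [score(generate(prompt, q), truth) for q, truth in dataset]
        mean = sum(scores) / len(scores)
        if mean > best_score:
            best_prompt, best_score = prompt, mean
        if best_score >= target:
            break  # Target reached: stop and move on to error analysis.
    return best_prompt, best_score
```

Returning the best prompt even when the target isn't reached keeps the loop useful for error analysis. Per-sample failures of the best variant are the natural starting point for label verification.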

Task or domain knowledge identification

The migration process described in this post can be used at two stages of a generative AI solution's production lifecycle.

End-to-end LLM migration and model agility

New LLMs are released frequently, and no single LLM can consistently maintain peak performance for a given use case. It’s common for a production generative AI solution to migrate to another family of LLMs or to upgrade to a new version of an LLM. Thus, having a standard and reusable end-to-end LLM migration or upgrade process is critical to the long-term success of any generative AI solution.

Monitoring and quality assurance

After a migration or upgrade has stabilized, there should be a standard monitoring and quality assurance process. This process should use a regularly refreshed golden evaluation dataset with ground truth, automated or human evaluation metrics, and evaluation of actual user traces. As part of this solution, the established evaluation, data, and ground truth collection processes can be reused for monitoring and quality assurance.
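In monitoring, the same evaluation metrics can be compared against the scores recorded when the migration was signed off. The following minimal sketch assumes higher-is-better metric scores and a single tolerance. `find_regressions`, the metric names, and the 0.02 default are hypothetical. Lower-is-better metrics such as latency would need the comparison reversed.

```python
def find_regressions(baseline: dict[str, float], current: dict[str, float],
                     tolerance: float = 0.02) -> dict[str, float]:
    """Return metrics that dropped more than `tolerance` below the baseline."""
    regressions = {}
    for metric, base in baseline.items():
        value = current.get(metric)
        if value is None:
            continue  # Metric missing from this run; report it separately.
        if base - value > tolerance:
            # Store the signed change (negative means the metric got worse).
            regressions[metric] = value - base
    return regressions
```

Running this after each refresh of the golden dataset turns the quality assurance process into a simple alert. Any non-empty result triggers the error analysis step of the improvement lifecycle.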

Tips and suggestions (lessons learned)

The following are some tips and suggestions for a successful LLM migration or upgrade process.

- Sign-off conditions: The data, evaluation criteria, and success criteria defined at the start should be sufficient for stakeholders to confidently sign off on the process. Ideally, there should be no changes to the data, ground truths, or SME evaluation and success criteria during the process.

- Sample data and quality: The data should be of sufficient quality and quantity for confident evaluation. The ground truth answers and labels should be fully aligned with the SME’s evaluation criteria and expectations. Ideally, there should be no changes to the data, ground truths, or SME evaluation criteria during the process.

- Improvement lifecycle: Make sure to plan and implement an improvement lifecycle to get the most out of your chosen LLM.

- Model selection: When selecting competing target models to compare against a source model, use resources such as the Artificial Analysis benchmarking website to obtain a holistic comparison of models. These comparisons typically cover quality, performance, and cost, providing valuable insights before you start the experiment. This preliminary evaluation can help narrow down the most promising candidates and inform the experimental design.

- Performance versus cost trade-offs: When comparing different models or solutions, it’s crucial to consider the balance between performance and cost. In some cases, a model might offer slightly lower performance at a sufficiently reduced cost to make it the more cost-effective option overall. This is particularly true when the performance difference is minimal but the cost savings are substantial.

- Optimization techniques: Exploring various optimization techniques, such as prompt engineering or provisioned throughput, can lead to significant improvements in performance metrics such as accuracy and latency. These optimizations can help bridge the gap between different models and should be considered part of the evaluation process.
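The performance-versus-cost trade-off can be made explicit with a simple decision rule. Among candidate models whose quality score is within a tolerance of the best score, choose the cheapest.

The following is a minimal sketch of that rule. The `Candidate` fields, the `pick_model` name, and the 0.02 tolerance are hypothetical. Scores and costs should come from your own evaluation runs and pricing, not from the placeholder values in the usage example.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    score: float                 # Aggregate quality metric, higher is better.
    cost_per_1k_requests: float  # Estimated from your own token usage and pricing.


def pick_model(candidates: list[Candidate], tolerance: float = 0.02) -> Candidate:
    """Pick the cheapest candidate whose score is within `tolerance` of the best."""
    best = max(c.score for c in candidates)
    acceptable = [c for c in candidates if best - c.score <= tolerance]
    return min(acceptable, key=lambda c: c.cost_per_1k_requests)
```

For example, with hypothetical candidates scoring 0.86, 0.85, and 0.78 at costs of 12.0, 4.0, and 1.0, the rule picks the 0.85 model. Its score is within tolerance of the best, and it costs a third as much. A latency threshold could be added as a further filter in the same way.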

Conclusion

In this post, we introduced the AWS Generative AI Model Agility Solution, an end-to-end solution for migrating and upgrading the LLMs used by existing generative AI applications. It helps maintain and improve model agility. The solution defines a standardized process and provides a comprehensive toolkit for LLM migration or upgrade, with a variety of ready-to-use tools and advanced techniques for moving generative AI applications to new LLMs. It can be used as a standard process in the lifecycle of your generative AI applications. After an application is stabilized with a specific LLM and configuration, the evaluation, data, and ground truth collection processes in this solution can be reused for production monitoring and quality assurance.

To learn more about this solution, check out our AWS Generative AI Model Agility Code Repo.

About the authors