Many organizations are archiving massive media libraries, analyzing contact center recordings, preparing training data for AI, or processing on-demand video for subtitles. When data volumes grow significantly, the cost of managed automatic speech recognition (ASR) services can quickly become the primary constraint on scalability.

To address this cost-scalability challenge, we use the NVIDIA Parakeet-TDT-0.6B-v3 model, deployed through AWS Batch on GPU-accelerated instances. Parakeet-TDT’s Token-and-Duration Transducer architecture simultaneously predicts text tokens and their durations, which lets it skip over silence and redundant frames. This helps achieve inference speeds orders of magnitude faster than real time. You pay only for brief bursts of compute rather than for the full length of your audio. Based on the benchmarks described in this post, that lets you transcribe at scale for fractions of a cent per hour of audio.
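The following toy sketch builds intuition for how predicting durations saves work. It shows a greedy decoding loop in the style of a TDT model. It is not NeMo’s implementation. The joint callable and the BLANK id are hypothetical stand-ins for the model’s joint network. A real decoder also tracks prediction-network state and scores full token and duration distributions.

```python
from typing import Callable, List, Tuple

BLANK = -1  # hypothetical id for the blank symbol in this toy example


def tdt_greedy_decode(
    num_frames: int,
    joint: Callable[[int, int], Tuple[int, int]],
    max_symbols_per_frame: int = 5,
) -> Tuple[List[int], int]:
    """Toy greedy loop in the style of a Token-and-Duration Transducer (TDT).

    joint(frame, last_token) stands in for the model's joint network and returns
    its most likely (token, duration) pair, where duration is the number of
    encoder frames to advance. Returns the emitted tokens and the number of
    joint calls, which is the work that duration prediction can save.
    """
    tokens: List[int] = []
    last_token = BLANK
    frame = calls = emitted_on_frame = 0
    while frame < num_frames:
        token, duration = joint(frame, last_token)
        calls += 1
        duration = max(duration, 0)  # never move backwards in time
        if token != BLANK:
            tokens.append(token)
            last_token = token
            emitted_on_frame += 1
        # A blank must advance time; also cap how many tokens one frame can emit.
        if duration == 0 and (token == BLANK or emitted_on_frame >= max_symbols_per_frame):
            duration = 1
        if duration > 0:
            emitted_on_frame = 0
        frame += duration
    return tokens, calls


def example_joint(frame: int, last_token: int) -> Tuple[int, int]:
    # Frames 40-59 contain "speech": emit a token every 2 frames.
    # Everywhere else is "silence": predict blank and jump 4 frames ahead.
    return (frame, 2) if 40 <= frame < 60 else (BLANK, 4)


tokens, calls = tdt_greedy_decode(100, example_joint)
print(len(tokens), calls)  # 10 tokens from 30 joint calls over 100 frames
```

Each joint call that predicts a duration greater than 1 skips encoder frames entirely. As a result, long stretches of silence cost only a handful of evaluations.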

In this post, we walk through building a scalable, event-driven transcription pipeline that automatically processes audio files uploaded to Amazon Simple Storage Service (Amazon S3). We also show you how to use Amazon EC2 Spot Instances and buffered streaming inference to further reduce costs.

Model capabilities

Parakeet-TDT-0.6B-v3, released in August 2025, is an open-source multilingual ASR model that delivers high accuracy across 25 European languages. It offers automatic language detection and permissive licensing under CC-BY-4.0. According to NVIDIA’s published metrics, the model achieves a 6.34% word error rate (WER) in clean conditions and 11.66% WER at 0 dB SNR. It supports audio up to three hours long using local attention mode.
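You may want to check accuracy on your own audio. WER is the word-level edit distance (substitutions, deletions, and insertions) divided by the number of words in the reference transcript. The following minimal sketch computes it. It only lowercases and splits on whitespace, whereas published benchmarks typically apply more extensive text normalization, so its numbers are not directly comparable to NVIDIA’s.

```python
from typing import List


def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref: List[str] = reference.lower().split()
    hyp: List[str] = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] = edit distance between the previous ref prefix and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion of ref[i-1]
                curr[j - 1] + 1,     # insertion of hyp[j-1]
                prev[j - 1] + cost,  # substitution or match
            )
        prev = curr
    return prev[-1] / len(ref)


# One deletion ("the") over six reference words.
print(word_error_rate("the cat sat on the mat", "the cat sat on mat"))
```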

The 25 supported languages are:

- Bulgarian, Croatian, Czech, Danish, Dutch, English, Estonian, Finnish, and French
- German, Greek, Hungarian, Italian, Latvian, Lithuanian, Maltese, and Polish
- Portuguese, Romanian, Slovak, Slovenian, Spanish, Swedish, Russian, and Ukrainian

This can remove the need for separate models or language-specific configuration when serving international European markets.

For deployment on AWS, the model requires GPU-enabled instances with a minimum of 4 GB of VRAM, although 8 GB provides better performance. Based on our tests, G6 instances (NVIDIA L4 GPUs) provide the best cost-to-performance ratio for inference workloads. The model also performs well on G5 (A10G) and G4dn (T4) instances. For maximum throughput, use P5 (H100) or P4 (A100) instances.

Solution architecture

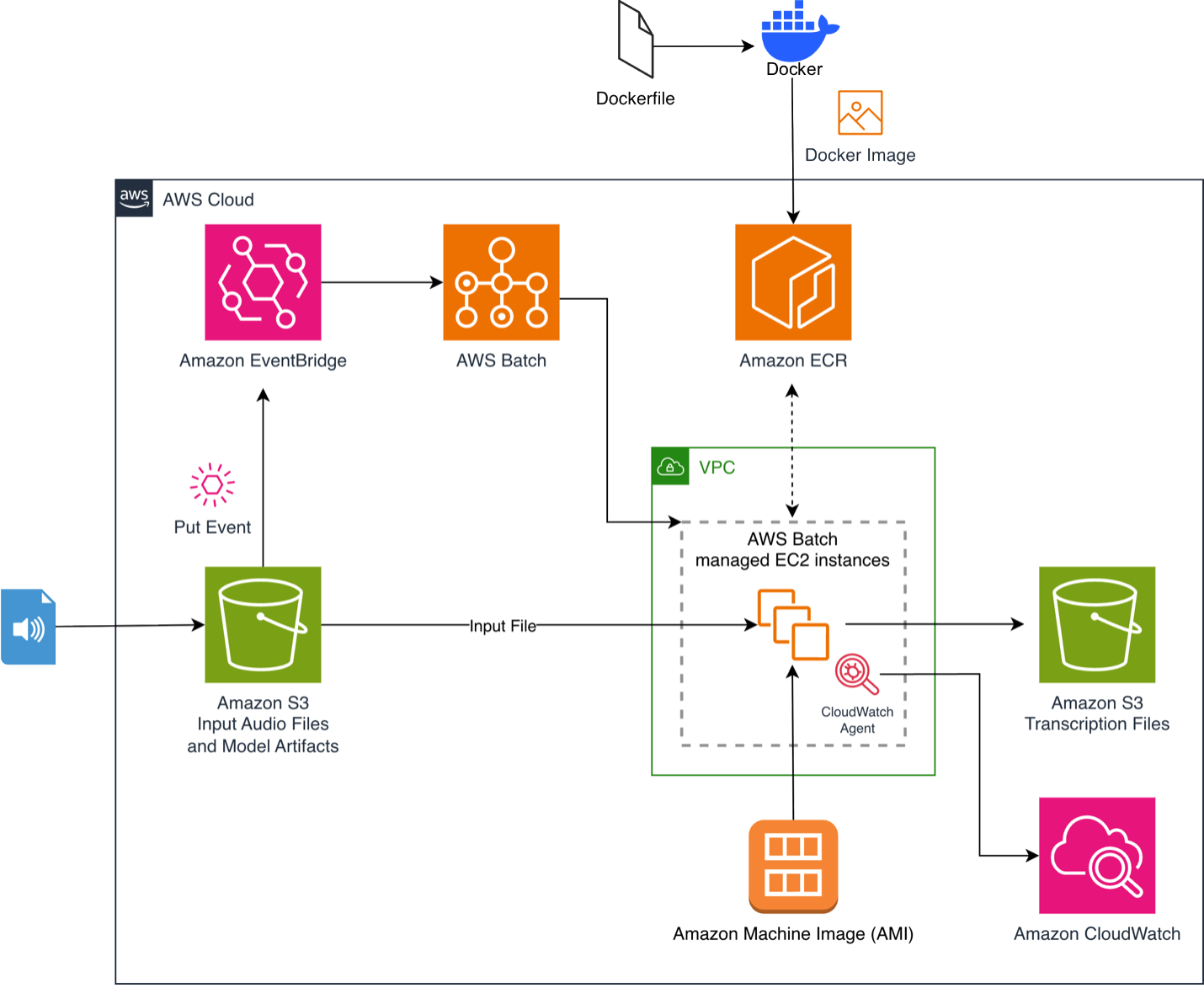

The process begins when you upload an audio file to an S3 bucket. This triggers an Amazon EventBridge rule that submits a job to AWS Batch. AWS Batch provisions GPU-accelerated compute resources. The provisioned instances pull our container image, which includes a pre-cached model, from Amazon Elastic Container Registry (Amazon ECR). The inference script downloads and processes the file, then uploads the timestamped JSON transcript to an output S3 bucket. The architecture scales to zero when idle, so costs are incurred only during active compute.
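The repository’s CloudFormation template defines exactly how the event is wired into the job. As a hedged illustration, the following helpers show how a job could extract the bucket and key from the EventBridge "Object Created" event for S3. They also show how it could derive an output key and a valid AWS Batch job name. The output layout (same key with a .json extension) and the function names are assumptions for illustration.

```python
import posixpath
import re
from typing import Any, Dict, Tuple


def parse_s3_object_created(event: Dict[str, Any]) -> Tuple[str, str]:
    """Return (bucket, key) from an EventBridge 'Object Created' event for S3."""
    if event.get("source") != "aws.s3" or event.get("detail-type") != "Object Created":
        raise ValueError("not an S3 Object Created event")
    detail = event["detail"]
    return detail["bucket"]["name"], detail["object"]["key"]


def transcript_key(input_key: str, prefix: str = "") -> str:
    """Hypothetical layout: 'calls/2024/a.wav' -> 'calls/2024/a.json' in the output bucket."""
    stem, _ext = posixpath.splitext(input_key)
    return f"{prefix}{stem}.json"


def batch_job_name(input_key: str) -> str:
    """Batch job names allow letters, digits, hyphens, and underscores (max 128 chars)."""
    base = posixpath.basename(input_key)
    return re.sub(r"[^A-Za-z0-9_-]", "-", f"transcribe-{base}")[:128]


event = {
    "source": "aws.s3",
    "detail-type": "Object Created",
    "detail": {"bucket": {"name": "my-input-bucket"}, "object": {"key": "calls/2024/a.wav"}},
}
bucket, key = parse_s3_object_created(event)
print(bucket, key, transcript_key(key), batch_job_name(key))
```

For an upload of calls/2024/a.wav, this sketch yields the output key calls/2024/a.json and the job name transcribe-a-wav.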

For a deep dive into the general architectural components, refer to our previous post, Whisper audio transcription powered by AWS Batch and AWS Inferentia.

Figure 1. Event-driven audio transcription pipeline with Amazon EventBridge and AWS Batch

Prerequisites

- Create an AWS account if you don’t already have one, and sign in. Create a user with full administrator permissions using AWS IAM Identity Center, as described in Add users.

- Install the AWS Command Line Interface (AWS CLI) on your local development machine and create a profile for the admin user, as described in Set up the AWS CLI.

- Install Docker on your local machine.

- Clone the GitHub repository to your local machine.

Building the container image

The repository includes a Dockerfile that builds a streamlined container image optimized for inference performance. The image uses Amazon Linux 2023 as a base and installs Python 3.12. It also pre-caches the Parakeet-TDT-0.6B-v3 model during the build to eliminate model download latency at runtime.

Pushing to Amazon ECR

The repository includes an updateImage.sh script that handles environment detection (CodeBuild or EC2) and builds the container image. It also creates an ECR repository if needed, enables vulnerability scanning, and pushes the image. Run it with:

./updateImage.sh

Deploying the solution

The solution uses an AWS CloudFormation template (deployment.yaml) to provision the infrastructure. The buildArch.sh script automates the deployment. It detects your AWS Region, gathers VPC, subnet, and security group information, and deploys the CloudFormation stack:

./buildArch.sh

Under the hood, the script runs aws cloudformation deploy with deployment.yaml and the network settings it discovered.

The CloudFormation template creates the following resources:

- An AWS Batch compute environment with G6 and G5 GPU instances
- A job queue
- A job definition referencing your ECR image
- Input and output S3 buckets with EventBridge notifications enabled
- An EventBridge rule that triggers a Batch job on each S3 upload
- An Amazon CloudWatch agent configuration for GPU, CPU, and memory monitoring
- IAM roles with least-privilege policies

AWS Batch lets you select Amazon Linux 2023 GPU images by specifying ImageType: ECS_AL2023_NVIDIA in the compute environment configuration.

Alternatively, you can deploy directly from the AWS CloudFormation console using the launch link provided in the repository README.

Configuring Spot Instances

Amazon EC2 Spot Instances can further reduce costs by running your workloads on unused EC2 capacity. They offer a discount of up to 90%, depending on the instance type. To enable Spot Instances, we modify the compute environment in deployment.yaml.

You can enable this by setting --parameter-overrides UseSpotInstances=Yes when running aws cloudformation deploy. The SPOT_PRICE_CAPACITY_OPTIMIZED allocation strategy selects Spot Instance pools that are both the least likely to be interrupted and have the lowest possible price. Diversifying instance types (g6.xlarge, g6.2xlarge, g5.xlarge) can improve Spot availability. Setting MinvCpus: 0 ensures that the environment scales to zero when idle, so you avoid incurring costs between workloads.

Because ASR jobs are stateless and idempotent, they’re well suited to Spot. If an instance is reclaimed, AWS Batch automatically retries the job (configured with up to 2 retry attempts in the job definition).
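The template expresses these settings in CloudFormation. As a hedged illustration, the following builds the same settings through the AWS Batch API. It produces the computeResources and retryStrategy arguments that boto3’s create_compute_environment and register_job_definition accept.

The subnet, security group, and instance role values are placeholders, and so is maxvCpus (400 vCPUs would allow 100 g6.xlarge instances). The evaluateOnExit rules retry when the status reason starts with "Host EC2" and exit otherwise. These rules are an assumption, not the repository’s configuration. In AWS Batch, attempts counts the first try, so attempts=3 allows up to two retries.

```python
from typing import Any, Dict, List

# Instance types diversified across Spot pools, as described above.
GPU_INSTANCE_TYPES = ["g6.xlarge", "g6.2xlarge", "g5.xlarge"]


def spot_compute_resources(subnets: List[str], security_group_ids: List[str],
                           instance_role: str, max_vcpus: int = 400) -> Dict[str, Any]:
    """computeResources argument for batch.create_compute_environment (managed, Spot)."""
    return {
        "type": "SPOT",
        "allocationStrategy": "SPOT_PRICE_CAPACITY_OPTIMIZED",
        "minvCpus": 0,                  # scale to zero when the queue is empty
        "maxvCpus": max_vcpus,          # placeholder upper bound
        "instanceTypes": GPU_INSTANCE_TYPES,
        "subnets": subnets,
        "securityGroupIds": security_group_ids,
        "instanceRole": instance_role,  # instance profile name or ARN
        "ec2Configuration": [{"imageType": "ECS_AL2023_NVIDIA"}],  # AL2023 GPU AMI
    }


def spot_retry_strategy(attempts: int = 3) -> Dict[str, Any]:
    """retryStrategy argument for batch.register_job_definition."""
    return {
        "attempts": attempts,  # total tries, including the first one
        "evaluateOnExit": [
            # Retry when Batch reports that the underlying EC2 host went away.
            {"onStatusReason": "Host EC2*", "action": "RETRY"},
            # Anything else (for example, a corrupt input file) fails without retrying.
            {"onReason": "*", "action": "EXIT"},
        ],
    }
```

With boto3, you would pass these as shown in the following calls:

- computeResources=spot_compute_resources(...) to boto3.client("batch").create_compute_environment(computeEnvironmentName=..., type="MANAGED", ...)
- retryStrategy=spot_retry_strategy() to register_job_definition(...)

In CloudFormation, the same fields appear in PascalCase (Type, AllocationStrategy, MinvCpus, and Ec2Configuration with ImageType).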

Managing memory for long audio

The Parakeet-TDT model’s memory consumption scales linearly with audio duration. The FastConformer encoder must generate and store feature representations for the full audio signal, so doubling the audio length roughly doubles VRAM usage. According to the model card, with full attention the model can process up to 24 minutes of audio given 80 GB of VRAM.
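As a rough, back-of-the-envelope check, you can extrapolate linearly from the 24-minutes-per-80-GB figure above. This suggests how little audio full attention can handle on smaller GPUs. The helper below is an assumption-laden upper bound that ignores model weights and other fixed overheads.

```python
def max_full_attention_minutes(vram_gb: float,
                               ref_minutes: float = 24.0,
                               ref_vram_gb: float = 80.0) -> float:
    """Linearly scale the model-card figure (24 minutes with 80 GB of VRAM).

    This is a rough upper bound: it assumes memory grows linearly with audio
    length and ignores the VRAM taken by model weights and runtime overhead.
    """
    if vram_gb <= 0:
        raise ValueError("vram_gb must be positive")
    return ref_minutes * vram_gb / ref_vram_gb


# Single-GPU VRAM for two instance sizes in the families mentioned in this post.
for name, vram in {"g6.xlarge (L4, 24 GB)": 24, "g4dn.xlarge (T4, 16 GB)": 16}.items():
    print(f"{name}: ~{max_full_attention_minutes(vram):.1f} min")
```

Under this estimate, the single 24 GB NVIDIA L4 in a g6.xlarge handles only about 7 minutes of audio with full attention. That limit is why the long-audio techniques that follow matter.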

NVIDIA addresses this with a local attention mode that supports up to 3 hours of audio on an 80 GB A100.

Buffered streaming inference

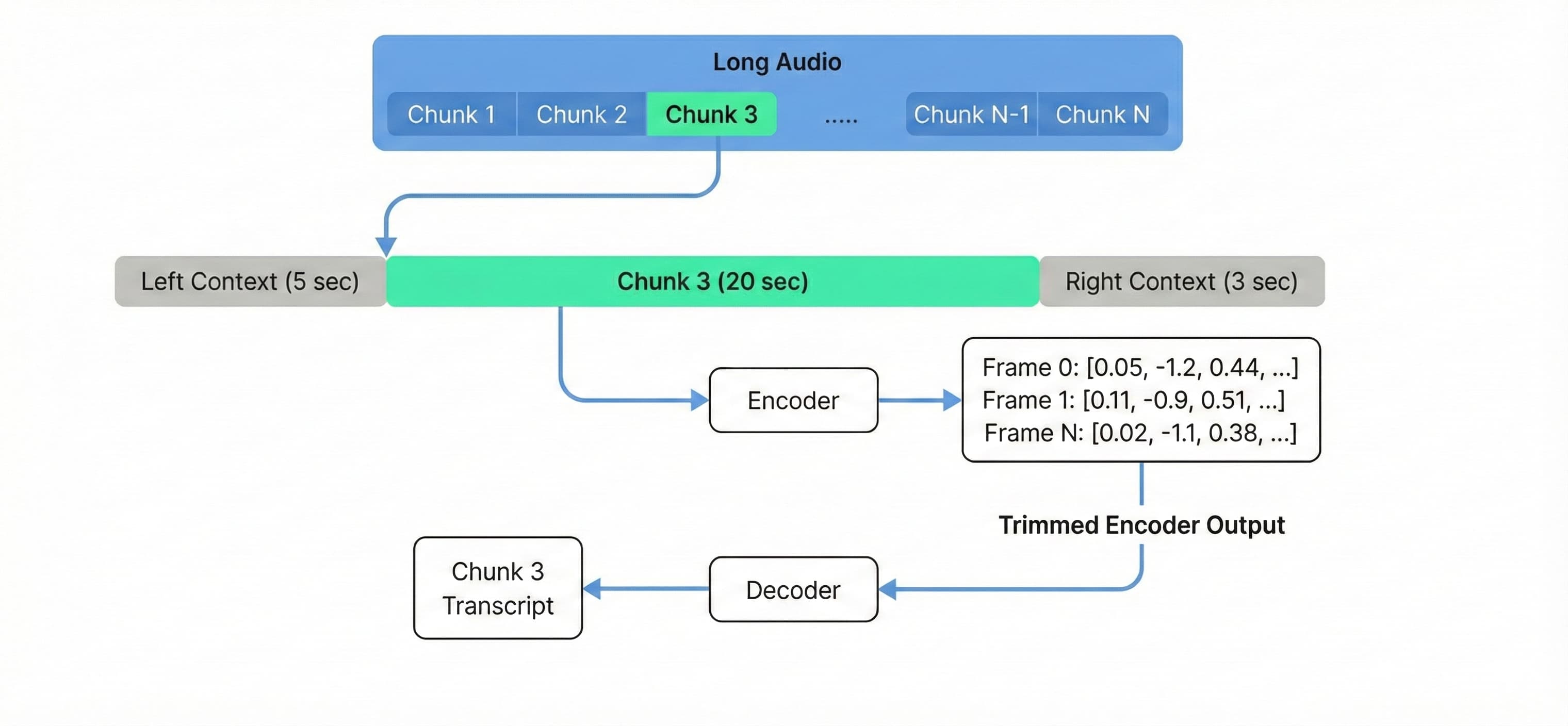

For audio that exceeds 3 hours, or to process long audio cost-effectively on standard hardware like a g6.xlarge, we use buffered streaming inference. Adapted from NVIDIA NeMo’s streaming inference example, this approach processes audio in overlapping chunks rather than loading the full context into memory.

We configure 20-second chunks with 5 seconds of left context and 3 seconds of right context to maintain transcription quality at chunk boundaries. Accuracy can degrade when you change these parameters, so experiment to find the optimal configuration for your audio. Decreasing chunk_secs increases processing time.
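The following sketch illustrates only the time-window arithmetic of this configuration. Each window feeds the model the chunk plus its left and right context, but only the transcript for the chunk itself is kept. NeMo’s buffered streaming example performs this on encoder frames and merges hypotheses internally. The Window type and the buffered_windows function are hypothetical names for illustration.

```python
from typing import List, NamedTuple


class Window(NamedTuple):
    start: float       # seconds: where the audio fed to the model begins (incl. left context)
    end: float         # seconds: where it ends (incl. right context)
    keep_start: float  # seconds: start of the span whose transcript is kept
    keep_end: float    # seconds: end of that span


def buffered_windows(duration: float, chunk: float = 20.0,
                     left: float = 5.0, right: float = 3.0) -> List[Window]:
    """Split [0, duration) into fixed chunks, each padded with left/right context."""
    if chunk <= 0:
        raise ValueError("chunk must be positive")
    windows: List[Window] = []
    t = 0.0
    while t < duration:
        keep_end = min(t + chunk, duration)
        windows.append(Window(
            start=max(0.0, t - left),
            end=min(duration, keep_end + right),
            keep_start=t,
            keep_end=keep_end,
        ))
        t = keep_end
    return windows


for w in buffered_windows(60.0):
    print(w)
```

For a 60-second file, this yields three windows:

- 0-23 s, keeping 0-20 s
- 15-43 s, keeping 20-40 s
- 35-60 s, keeping 40-60 s

Interior windows are always 28 seconds (5 + 20 + 3), no matter how long the file is. That fixed window size is what keeps memory use flat.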

Processing audio in fixed-size chunks decouples VRAM usage from total audio length. A single g6.xlarge instance can therefore process a 10-hour file with the same memory footprint as a 10-minute one.

Figure 2. Buffered streaming inference processes audio in overlapping chunks with constant memory usage.

To deploy with buffered streaming enabled, set the EnableStreaming=Yes parameter.

Testing and monitoring

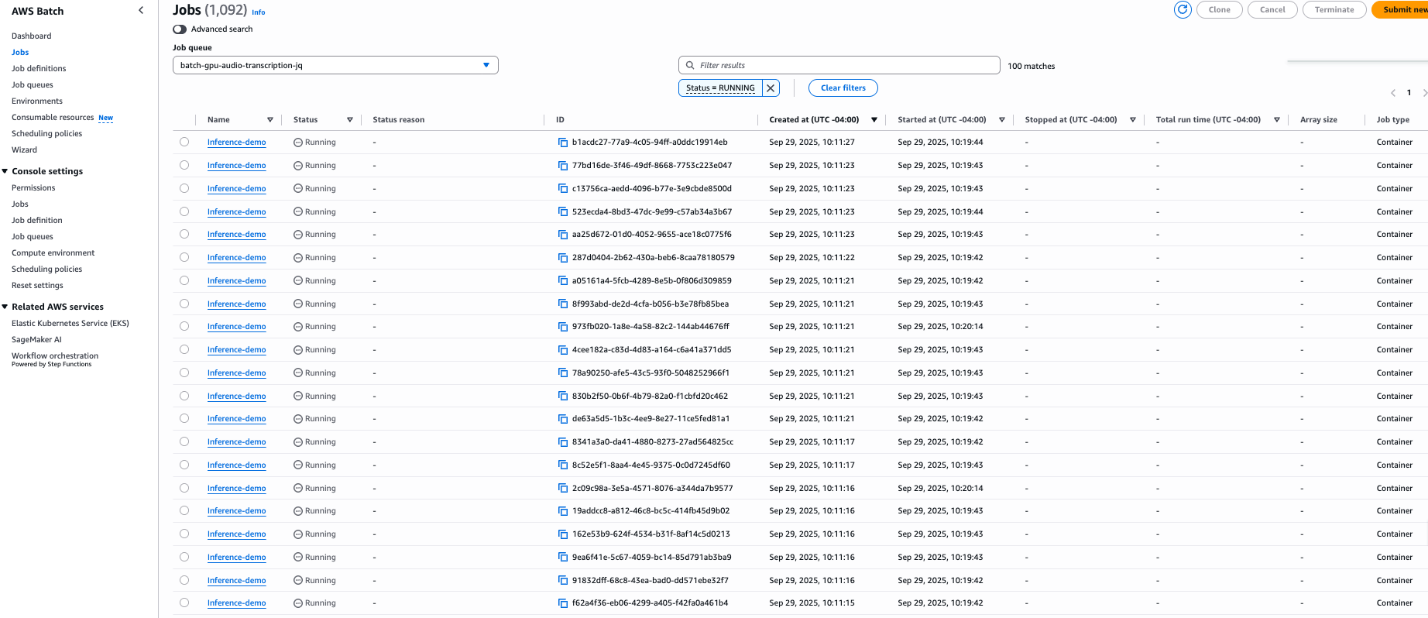

To validate the solution at scale, we ran an experiment with 1,000 identical 50-minute audio files from a NASA preflight crew news conference. We distributed them across 100 g6.xlarge instances, each processing 10 files.

Figure 3. Batch jobs running concurrently on 100 g6.xlarge instances.

The deployment includes an Amazon CloudWatch agent configuration that collects the following metrics at 10-second intervals:

- GPU utilization, power draw, and VRAM usage
- CPU utilization
- Memory consumption
- Disk usage

These metrics appear under the CWAgent namespace, so you can build dashboards for real-time monitoring.
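The repository ships its own agent configuration. The following hedged sketch shows what such a file can look like. It builds a CloudWatch agent JSON config with nvidia_gpu, cpu, mem, and disk sections and a 10-second collection interval. The specific measurement lists are assumptions; consult the CloudWatch agent documentation for the full set. The agent publishes NVIDIA GPU metrics with an nvidia_smi_ prefix (for example, nvidia_smi_utilization_gpu).

```python
import json
from typing import Any, Dict


def cloudwatch_agent_config(interval_seconds: int = 10) -> Dict[str, Any]:
    """Build a CloudWatch agent config that publishes GPU and host metrics to CWAgent."""
    return {
        "agent": {"metrics_collection_interval": interval_seconds},
        "metrics": {
            "namespace": "CWAgent",
            "append_dimensions": {"InstanceId": "${aws:InstanceId}"},
            "metrics_collected": {
                # Published as nvidia_smi_utilization_gpu, nvidia_smi_power_draw, ...
                "nvidia_gpu": {
                    "measurement": ["utilization_gpu", "power_draw", "memory_used", "memory_total"]
                },
                "cpu": {"measurement": ["cpu_usage_active"], "totalcpu": True},
                "mem": {"measurement": ["mem_used_percent"]},
                "disk": {"measurement": ["used_percent"], "resources": ["*"]},
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(cloudwatch_agent_config(), indent=2))
```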

Performance and cost analysis

To validate the efficiency of the architecture, we benchmarked the system using several long-form audio files.

The Parakeet-TDT-0.6B-v3 model achieved a raw inference speed of 0.24 seconds per minute of audio. However, a complete pipeline also includes overhead for loading the model into memory, loading the audio, preprocessing the input, and post-processing the output. Because of this overhead, cost efficiency is highest for long-form audio, where the overhead is amortized over more minutes of audio.

Benchmark results (g6.xlarge):

- Audio duration: 3 hours 25 minutes (205 minutes)

- Total job duration: 100 seconds

- Effective processing speed: 0.49 seconds per minute of audio

Cost breakdown

Based on pricing in the us-east-1 Region for the g6.xlarge instance, we can estimate the cost per minute of audio processed.

| Pricing model | Hourly cost (g6.xlarge)* | Cost per minute of audio |
|---|---|---|
| On-Demand | ~$0.805 | **$0.00011** |
| Spot Instances | ~$0.374 | **$0.00005** |

*Prices are estimates based on us-east-1 rates at the time of writing. Spot prices vary by Availability Zone and are subject to change.
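The table values follow directly from the benchmark. The sketch below reproduces that arithmetic. It also shows why longer files are cheaper per minute, under the simplifying assumption that all non-inference overhead is a fixed cost per job. That fixed-overhead model is illustrative, not a measurement. In practice, audio loading and preprocessing grow with file length, so the model likely overstates the penalty for short files.

```python
# Benchmark figures from the g6.xlarge run described above.
AUDIO_MINUTES = 205.0
JOB_SECONDS = 100.0
RAW_SECONDS_PER_AUDIO_MINUTE = 0.24


def cost_per_audio_minute(hourly_usd: float, seconds_per_audio_minute: float) -> float:
    """Instance cost in USD for the compute time that one minute of audio takes."""
    return hourly_usd / 3600.0 * seconds_per_audio_minute


effective = JOB_SECONDS / AUDIO_MINUTES              # about 0.49 s per audio minute
on_demand = cost_per_audio_minute(0.805, effective)  # about $0.00011
spot = cost_per_audio_minute(0.374, effective)       # about $0.00005

# Assumption: everything beyond raw inference is a fixed per-job overhead.
overhead_seconds = JOB_SECONDS - RAW_SECONDS_PER_AUDIO_MINUTE * AUDIO_MINUTES  # about 50.8 s


def effective_speed(audio_minutes: float) -> float:
    """Estimated job seconds per audio minute under the fixed-overhead model."""
    return RAW_SECONDS_PER_AUDIO_MINUTE + overhead_seconds / audio_minutes


if __name__ == "__main__":
    print(f"effective: {effective:.2f} s/min")
    print(f"on-demand: ${on_demand:.5f}/min, ${on_demand * 60:.4f}/hour of audio")
    print(f"spot:      ${spot:.5f}/min, ${spot * 60:.4f}/hour of audio")
    for minutes in (10, 50, 205, 600):
        print(f"{minutes:>4} min file: {effective_speed(minutes):.2f} s per audio minute")
```

Multiplying the per-minute figures by 60 gives well under one cent per hour of audio on both pricing models. That result is consistent with the "fractions of a cent per hour" figure in the introduction.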

These estimates highlight the economic advantage of the self-hosted approach for high-volume workloads, delivering value for large-scale transcription compared to managed API services priced by audio duration.

Cleanup

To avoid incurring future charges, delete the resources created by this solution:

- Empty all S3 buckets (enter, output, and logs).

- Delete the CloudFormation stack:

aws cloudformation delete-stack --stack-name batch-gpu-audio-transcription

- Optionally, remove the ECR repository and container images.

For detailed cleanup instructions, refer to the cleanup section of the repository README.

Conclusion

In this post, we demonstrated how to build an audio transcription pipeline that processes audio at scale for fractions of a cent per hour. By combining NVIDIA’s Parakeet-TDT-0.6B-v3 model with AWS Batch and EC2 Spot Instances, you can transcribe 25 European languages with automatic language detection. This combination can also help reduce costs compared to other solutions. The buffered streaming inference technique extends this capability to audio of varying length on standard hardware. The event-driven architecture scales automatically from zero to handle variable workloads.

To get started, explore the sample code in the GitHub repository.

About the authors