This post is cowritten with David Kim and Premjit Singh from Ring.

Scaling self-service support globally presents challenges beyond translation. In this post, we show you how Ring, Amazon’s home security subsidiary, built a production-ready, multi-locale Retrieval-Augmented Generation (RAG)-based support chatbot using Amazon Bedrock Knowledge Bases. By eliminating per-Region infrastructure deployments, Ring reduced the cost of scaling to each additional locale by 21%. At the same time, Ring maintained consistent customer experiences across 10 international Regions.

In this post, you’ll learn how Ring implemented metadata-driven filtering for Region-specific content, separated content management into ingestion, evaluation, and promotion workflows, and achieved cost savings while scaling up. The architecture described in this post uses Amazon Bedrock Knowledge Bases, Amazon Bedrock, AWS Lambda, AWS Step Functions, and Amazon Simple Storage Service (Amazon S3). Whether you’re expanding support operations internationally or looking to optimize your existing RAG architecture, this implementation provides practical patterns you can apply to your own multi-locale support systems.

The support evolution journey for Ring

Customer support at Ring initially relied on a rule-based chatbot built with Amazon Lex. While functional, the system was limited to predefined conversation patterns that couldn’t handle the diverse range of customer inquiries. During peak periods, 16% of interactions escalated to human agents, and support engineers spent 10% of their time maintaining the rule-based system. As Ring expanded across international locales, this approach became unsustainable.

Requirements for a RAG-based support system

Ring faced a challenge: how to provide accurate, contextually relevant support across multiple international locales without creating separate infrastructure for each Region. The team identified four requirements that would inform their architectural approach.

- Global content localization

The global presence of Ring required more than translation. Each territory needed Region-specific product information, from voltage specifications to regulatory compliance details, provided through a unified system. Across the UK, Germany, and eight other locales, Ring needed to handle distinct product configurations and support scenarios for each Region.

- Serverless, managed architecture

Ring wanted their engineering team focused on improving customer experience, not managing infrastructure. The team needed a fully managed, serverless solution.

- Scalable knowledge management

With hundreds of product guides, troubleshooting documents, and support articles constantly being updated, Ring needed vector search technology that could retrieve precise information from a unified repository. The system had to support automated content ingestion pipelines so that the Ring content team could publish updates that would become available across multiple locales without manual intervention.

- Performance and cost optimization

The average end-to-end latency requirement for Ring was 7–8 seconds, and performance analysis revealed that cross-Region latency accounted for less than 10% of total response time. This finding allowed Ring to adopt a centralized architecture rather than deploying separate infrastructure in each Region, which reduced operational complexity and costs.

To address these requirements, Ring implemented metadata-driven filtering with content locale tags. This approach serves Region-specific content from a single centralized system. For their serverless requirements, Ring chose Amazon Bedrock Knowledge Bases and Lambda, which removed the need for infrastructure management while providing automatic scaling.

Overview of solution

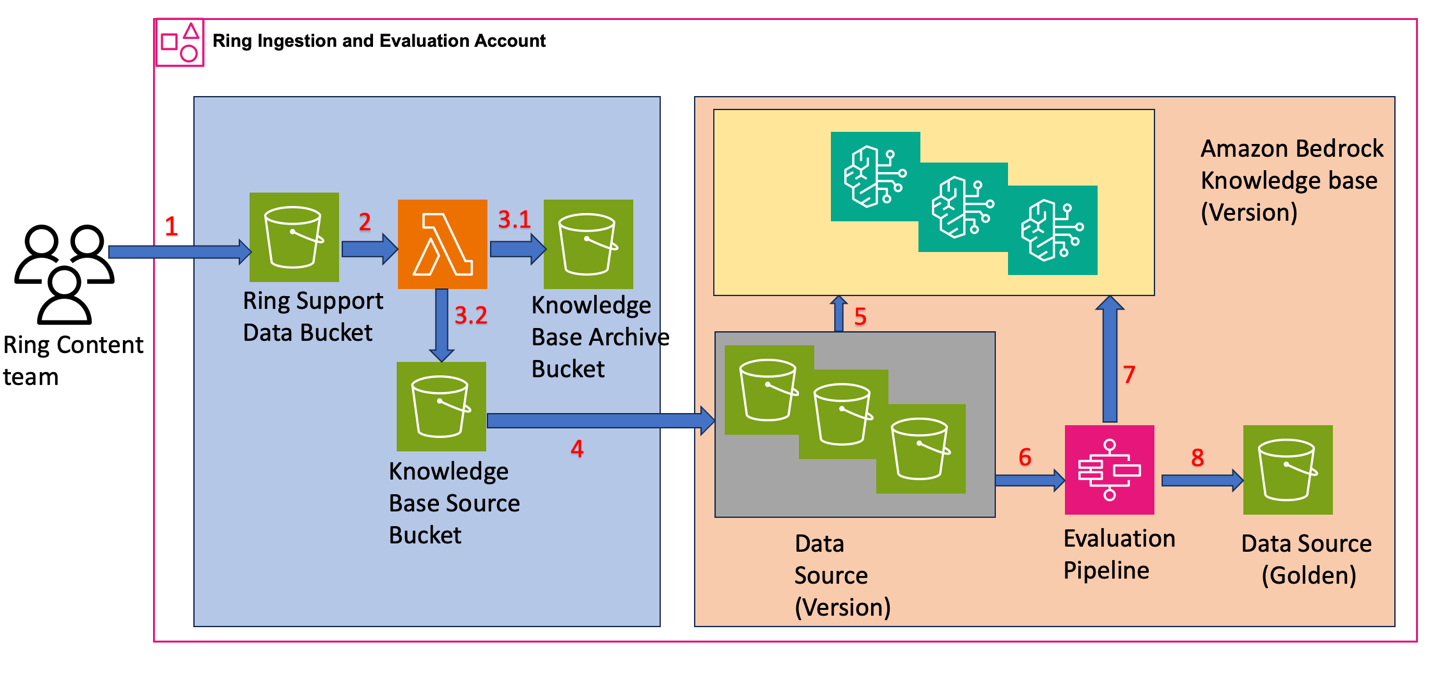

Ring designed their RAG-based chatbot architecture to separate content management into two core processes: Ingestion & Evaluation and Promotion. This two-phase approach allows Ring to maintain continuous content improvement while keeping production systems stable.

Ingestion and evaluation workflow

Figure 1: Architecture diagram showing the Ring ingestion and evaluation workflow with Step Functions orchestrating daily knowledge base creation, evaluation, and quality validation using Knowledge Bases and S3 storage.

- Content upload – The Ring content team uploads support documentation, troubleshooting guides, and product information to Amazon S3. The team structured the S3 objects with content in encoded format and metadata attributes. For example, a file for the content “Steps to Replace the doorbell battery” has the following structure:

- Content processing – Ring configured Amazon S3 bucket event notifications with Lambda as the target to automatically process uploaded content.

- Raw and processed content storage

The Lambda function performs two key operations:

- Copies the raw data to the Knowledge Base Archive Bucket

- Extracts metadata and content from raw data, storing them as separate files in the Knowledge Base Source Bucket with contentLocale classification (for example, {locale}/Service.Ring.{Upsert/Delete}.{unique_identifier}.json)

For the doorbell battery example, the Ring metadata and content files have the following structure:

{locale}/Service.Ring.{Upsert/Delete}.{unique_identifier}.metadata.json

{locale}/Service.Ring.{Upsert/Delete}.{unique_identifier}.json
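As a rough illustration of the processing step, the following Python sketch reads object keys from an S3 event notification and splits one raw record into a content file and a metadata file that follow the key pattern above. This is not Ring's code: the raw record field names (`locale`, `operation`, `id`, `content`) and the content file body are assumptions made for the example. The `metadataAttributes` wrapper is the format Amazon Bedrock Knowledge Bases reads from metadata files.

```python
import json
from urllib.parse import unquote_plus


def uploaded_objects(event):
    """Yield (bucket, key) for each object in an S3 event notification."""
    for record in event.get("Records", []):
        s3 = record["s3"]
        # S3 URL-encodes object keys in event payloads (spaces arrive as '+').
        yield s3["bucket"]["name"], unquote_plus(s3["object"]["key"])


def split_raw_record(raw):
    """Split one raw record into (key, body) pairs for the source bucket.

    `raw` uses hypothetical field names: locale, operation, id, content.
    """
    stem = f"{raw['locale']}/Service.Ring.{raw['operation']}.{raw['id']}"
    # Filterable attributes live under "metadataAttributes" in the metadata file.
    metadata = {"metadataAttributes": {"contentLocale": raw["locale"]}}
    return [
        # The content body shape here is an assumption for illustration.
        (f"{stem}.json", json.dumps({"content": raw["content"]})),
        (f"{stem}.metadata.json", json.dumps(metadata)),
    ]
```

In a Lambda handler, each `(key, body)` pair would typically be written to the source bucket with the S3 `put_object` call, after the raw object has been copied to the archive bucket.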

- Daily Data Copy and Knowledge Base Creation

Ring uses AWS Step Functions to orchestrate their daily workflow that:

- Copies content and metadata from the Knowledge Base Source Bucket to Data Source (Version)

- Creates a new Knowledge Base (Version) by indexing the daily bucket as data source for vector embedding

Each version maintains a separate Knowledge Base, giving Ring independent evaluation capabilities and straightforward rollback options.

- Daily Evaluation Process

The AWS Step Functions workflow continues using evaluation datasets to:

- Run queries across Knowledge Base versions

- Test retrieval accuracy and response quality to compare performance between versions

- Publish performance metrics to Tableau dashboards with results organized by contentLocale
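One simple way to organize retrieval accuracy by version and locale is a hit rate: the share of evaluation queries whose expected document appears in the retrieved results. The sketch below assumes that metric and a hypothetical record shape (`version`, `contentLocale`, `expected_id`, `retrieved_ids`); the post does not say which metrics Ring computes.

```python
from collections import defaultdict


def hit_rate_by_locale(results):
    """Return {(version, contentLocale): hit rate} for evaluation records.

    Each record is a dict with hypothetical keys: version, contentLocale,
    expected_id, retrieved_ids.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for r in results:
        key = (r["version"], r["contentLocale"])
        totals[key] += 1
        # A query counts as a hit when the expected document was retrieved.
        if r["expected_id"] in r["retrieved_ids"]:
            hits[key] += 1
    return {key: hits[key] / totals[key] for key in totals}
```

Keying the result on both version and locale makes it easy to spot a version that improves one locale while regressing another.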

- Quality Validation and Golden Dataset Creation

Ring uses the Anthropic Claude Sonnet 4 large language model (LLM)-as-a-judge to:

- Evaluate metrics across Knowledge Base versions to identify the best-performing version

- Compare retrieval accuracy, response quality, and performance metrics organized by contentLocale

- Promote the highest-performing version to Data Source (Golden) for production use

This architecture supports rollbacks to previous versions for up to 30 days. Because content is updated approximately 200 times per week, Ring decided not to maintain versions beyond 30 days.
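The 30-day rollback window implies a cleanup rule for older versions. A minimal sketch of that rule follows, assuming each version is tracked by name and creation date (the naming scheme is hypothetical and not taken from Ring's implementation):

```python
from datetime import date, timedelta


def expired_versions(version_dates, today, retention_days=30):
    """Return names of versions created before the retention cutoff.

    version_dates maps a version name to its creation date.
    """
    cutoff = today - timedelta(days=retention_days)
    # Versions created on or after the cutoff stay available for rollback.
    return sorted(name for name, created in version_dates.items() if created < cutoff)
```

A scheduled workflow step could call this function and delete the Knowledge Base and data source behind each returned name.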

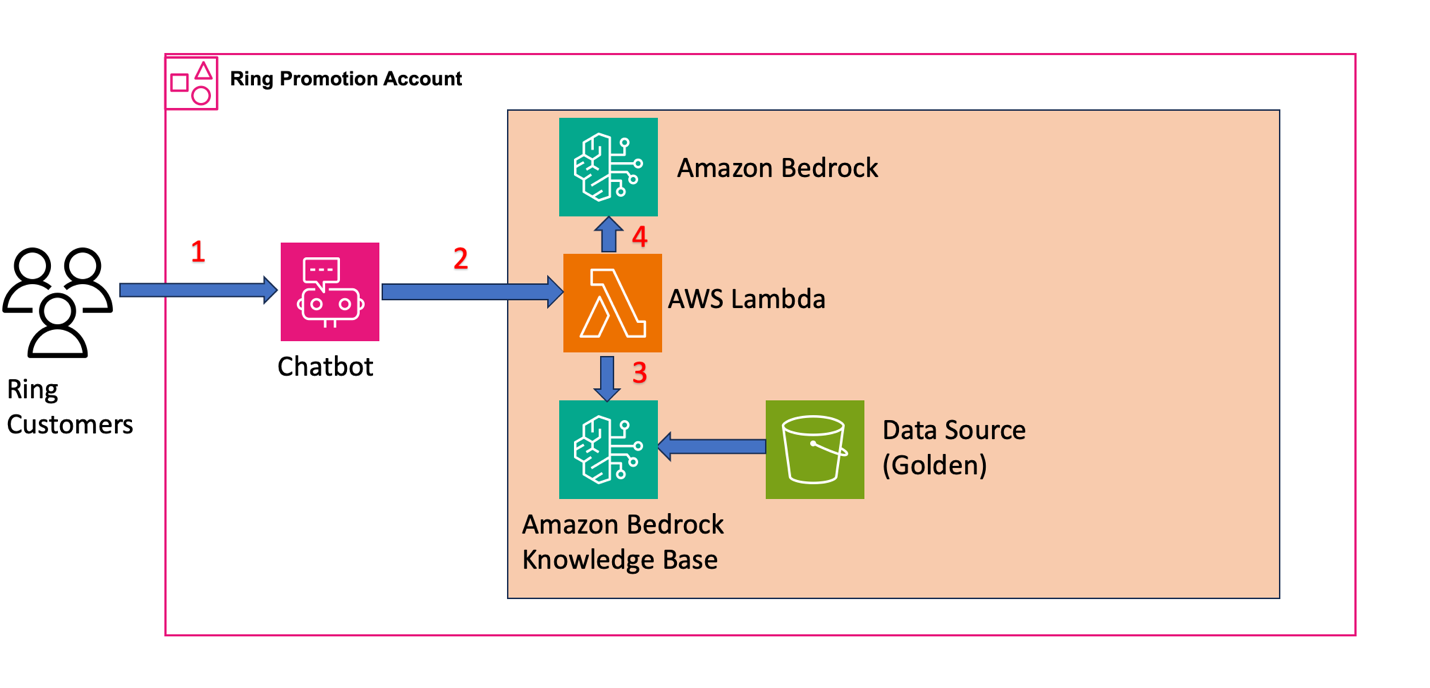

Promotion workflow: customer-facing

Figure 2: Architecture diagram showing the Ring production chatbot system where customer queries flow through AWS Lambda to retrieve context from Knowledge Bases and generate responses using foundation models

- Customer interaction – Customers initiate support queries through the chatbot interface. For example, a customer query for the battery replacement scenario looks like this:

- Query orchestration and knowledge retrieval

Ring configured Lambda to process customer queries and retrieve relevant content from Amazon Bedrock Knowledge Bases. The function:

- Transforms incoming queries for the RAG system

- Applies metadata filtering with contentLocale tags using the equals operator for precise Regional content targeting

- Queries the validated Golden Data Source to retrieve contextually relevant content
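To make the filtering step concrete, here is a minimal sketch (not Ring's code) of the request parameters for the Amazon Bedrock Knowledge Bases `Retrieve` API with an `equals` filter on `contentLocale`. The function name, the default of five results, and the sample values are assumptions for illustration.

```python
def build_retrieve_request(knowledge_base_id, query, content_locale, top_k=5):
    """Build keyword arguments for the bedrock-agent-runtime retrieve call."""
    return {
        "knowledgeBaseId": knowledge_base_id,
        "retrievalQuery": {"text": query},
        "retrievalConfiguration": {
            "vectorSearchConfiguration": {
                "numberOfResults": top_k,
                # Only chunks whose metadata has this exact locale are returned.
                "filter": {
                    "equals": {"key": "contentLocale", "value": content_locale}
                },
            }
        },
    }
```

With boto3, the returned dictionary can be passed as `boto3.client("bedrock-agent-runtime").retrieve(**request)`. Keeping request construction in a pure function makes the filter logic easy to unit test without AWS credentials.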

Here’s the sample code Ring uses in AWS Lambda:

- Response generation

In the Lambda function, the system:

- Sorts the retrieved content based on relevance score and selects the highest-scoring context

- Combines the top-ranked context with the original customer query to create an augmented prompt

- Sends the augmented prompt to the LLM on Amazon Bedrock

- Configures locale-specific prompts for each contentLocale

- Generates contextually relevant responses returned through the chatbot interface
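The selection and prompt-building steps above can be sketched as follows. The input follows the shape of `retrievalResults` from the `Retrieve` API (each item has `content.text` and `score`); the prompt templates and function name are hypothetical, since the post does not show Ring's prompts.

```python
# Hypothetical locale-specific templates keyed by contentLocale.
LOCALE_PROMPTS = {
    "en-GB": (
        "Answer in British English using only the context.\n\n"
        "Context:\n{context}\n\nQuestion: {question}"
    ),
    "de-DE": (
        "Antworte auf Deutsch und nutze nur den Kontext.\n\n"
        "Kontext:\n{context}\n\nFrage: {question}"
    ),
}


def build_augmented_prompt(retrieval_results, question, content_locale):
    """Pick the highest-scoring chunk and merge it with the customer query."""
    if not retrieval_results:
        raise ValueError("no context retrieved")
    # Select the single most relevant chunk by relevance score.
    best = max(retrieval_results, key=lambda r: r["score"])
    template = LOCALE_PROMPTS[content_locale]
    return template.format(context=best["content"]["text"], question=question)
```

The resulting string can be sent as the user message in an Amazon Bedrock `Converse` request.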

Other considerations for your implementation

When building your own RAG-based system at scale, consider these architectural approaches and operational requirements beyond the core implementation:

Vector store selection

The Ring implementation uses Amazon OpenSearch Serverless as the vector store for their knowledge bases. However, Amazon Bedrock Knowledge Bases also supports Amazon S3 Vectors as a vector store option. When choosing between these options, consider:

- Amazon OpenSearch Serverless: Provides advanced search capabilities, real-time indexing, and flexible querying options. Best suited for applications requiring complex search patterns or when you need additional OpenSearch features beyond vector search.

- Amazon S3 Vectors: Offers a more cost-effective option for straightforward vector search use cases. S3 vector stores provide automatic scaling, built-in durability, and can be more economical for large-scale deployments with predictable access patterns.

In addition to these two options, AWS supports integrations with other data store options, including Amazon Kendra, Amazon Neptune Analytics, and Amazon Aurora PostgreSQL. Evaluate your specific requirements around query complexity, cost optimization, and operational needs when selecting your vector store. The prescriptive guidance provides a good starting point to evaluate vector stores for your RAG use case.

Versioning architecture considerations

While Ring implemented separate Knowledge Bases for each version, you can consider an alternative approach involving separate data sources for each version within a single knowledge base. This method leverages the x-amz-bedrock-kb-data-source-id filter parameter to target specific data sources during retrieval:

When choosing between these approaches, weigh these specific trade-offs:

- Separate knowledge bases per version (the approach that Ring uses): Provides data source management and cleaner rollback capabilities, but requires managing more knowledge base instances.

- Single knowledge base with multiple data sources: Reduces the number of knowledge base instances to maintain, but introduces complexity in data source routing logic and filtering mechanisms, plus requires maintaining separate data stores for each data source ID.
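For the single-knowledge-base approach, a retrieval request could combine the data source filter with the locale filter. The following sketch assumes the `in` and `andAll` operators of the `Retrieve` API filter; the function name and sample IDs are hypothetical.

```python
def build_versioned_retrieve_request(knowledge_base_id, query, data_source_ids, content_locale):
    """Restrict retrieval to specific data sources (versions) and one locale."""
    return {
        "knowledgeBaseId": knowledge_base_id,
        "retrievalQuery": {"text": query},
        "retrievalConfiguration": {
            "vectorSearchConfiguration": {
                "filter": {
                    # Both conditions must match for a chunk to be returned.
                    "andAll": [
                        {
                            "in": {
                                "key": "x-amz-bedrock-kb-data-source-id",
                                "value": list(data_source_ids),
                            }
                        },
                        {"equals": {"key": "contentLocale", "value": content_locale}},
                    ]
                }
            }
        },
    }
```

Under this design, promoting a version amounts to changing which data source ID the production Lambda function passes in, which is the routing logic the trade-off above refers to.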

Disaster recovery: Multi-Region deployment

Consider your disaster recovery requirements when designing your RAG architecture. Amazon Bedrock Knowledge Bases are Regional resources. To achieve robust disaster recovery, deploy your complete architecture across multiple Regions:

- Knowledge bases: Create Knowledge Base instances in multiple Regions

- Amazon S3 buckets: Maintain cross-Region copies of your Golden Data Source

- Lambda functions and Step Functions workflows: Deploy your orchestration logic in each Region

- Data synchronization: Implement processes to keep content synchronized across Regions

The centralized architecture serves its traffic from a single Region, prioritizing cost optimization over multi-Region deployment. Evaluate your own Recovery Time Objective (RTO) and Recovery Point Objective (RPO) requirements to determine whether a multi-Region deployment is necessary for your use case.

Foundation model throughput: Cross-Region inference

Amazon Bedrock foundation models are Regional resources with Regional quotas. To handle traffic bursts and scale beyond single-Region quotas, Amazon Bedrock supports cross-Region inference (CRIS). CRIS automatically routes inference requests across multiple AWS Regions to increase throughput:

- Geographic CRIS: Routes requests only within specific geographic boundaries (such as within the US or within the EU) to meet data residency requirements. This can provide up to double the default in-Region quotas.

- Global CRIS: Routes requests across multiple commercial Regions worldwide, optimizing available resources and providing higher model throughput beyond geographic CRIS capabilities. Global CRIS automatically selects the optimal Region to process each request.

CRIS operates independently from your Knowledge Base deployment strategy. Even with a single-Region Knowledge Base deployment, you can configure CRIS to scale your foundation model throughput during traffic bursts. Note that CRIS applies only to the inference layer. Your Knowledge Bases, S3 buckets, and orchestration logic remain Regional resources that require separate multi-Region deployment for disaster recovery.
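In practice, using CRIS usually means passing an inference profile ID instead of a plain model ID when invoking the model. The sketch below builds parameters for the Amazon Bedrock `Converse` API. The profile ID in the usage note is illustrative only; confirm the exact IDs available to your account in the Amazon Bedrock console.

```python
def build_converse_request(prompt, inference_profile_id, max_tokens=512):
    """Build keyword arguments for the bedrock-runtime converse call.

    inference_profile_id is a cross-Region inference profile ID, which
    carries a geographic or global prefix in front of the model ID.
    """
    return {
        "modelId": inference_profile_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }
```

For example, `build_converse_request(prompt, "us.anthropic.claude-sonnet-4-20250514-v1:0")` would target a US geographic profile (an assumed ID), and the result can be passed as `boto3.client("bedrock-runtime").converse(**request)`. The Knowledge Base retrieval call is unaffected by this choice.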

Embedding model selection and chunking strategy

Selecting the appropriate embedding model and chunking strategy is important for RAG system performance because it directly affects retrieval accuracy and response quality. Ring uses the Amazon Titan Embeddings model with the default chunking strategy, which proved effective for their support documentation.

Amazon Bedrock offers flexibility with multiple options:

Embedding models:

- Amazon Titan embeddings: Optimized for text-based content

- Amazon Nova multimodal embeddings: Supports “Text”, “Image”, “Audio”, and “Video” modalities

Chunking strategies:

When ingesting data, Amazon Bedrock splits documents into manageable chunks for efficient retrieval using four strategies:

- Standard chunking: Fixed-size chunks for uniform documents

- Hierarchical chunking: For structured documents with clear section hierarchies

- Semantic chunking: Splits content based on topic boundaries

- Multimodal content chunking: For documents with mixed content types (text, images, tables)

Evaluate your content characteristics to select the optimal combination for your specific use case.
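As a reference point, the chunking strategy is set per data source through the `vectorIngestionConfiguration` parameter of the `CreateDataSource` API. The sketch below shows one possible configuration for three of the strategies; the token sizes and thresholds are example values rather than recommendations, and multimodal content handling is configured separately and is omitted here.

```python
# Example chunking configurations; all numeric values are illustrative.
CHUNKING_CONFIGS = {
    "standard": {
        "chunkingStrategy": "FIXED_SIZE",
        "fixedSizeChunkingConfiguration": {"maxTokens": 300, "overlapPercentage": 20},
    },
    "hierarchical": {
        "chunkingStrategy": "HIERARCHICAL",
        "hierarchicalChunkingConfiguration": {
            # Parent chunks first, then the smaller child chunks.
            "levelConfigurations": [{"maxTokens": 1500}, {"maxTokens": 300}],
            "overlapTokens": 60,
        },
    },
    "semantic": {
        "chunkingStrategy": "SEMANTIC",
        "semanticChunkingConfiguration": {
            "maxTokens": 300,
            "bufferSize": 0,
            "breakpointPercentileThreshold": 95,
        },
    },
}


def vector_ingestion_configuration(strategy):
    """Return the vectorIngestionConfiguration value for a named strategy."""
    return {"chunkingConfiguration": CHUNKING_CONFIGS[strategy]}
```

Because each Knowledge Base version is evaluated independently, a daily evaluation run is a natural place to compare two chunking configurations on the same content before promoting one.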

Conclusion

In this post, we showed how Ring built a production-ready, multi-locale RAG-based support chatbot using Amazon Bedrock Knowledge Bases. The architecture combines automated content ingestion, systematic daily evaluation using an LLM-as-judge approach, and metadata-driven content targeting to achieve a 21% reduction in infrastructure and operational cost per additional locale, while maintaining consistent customer experiences across 10 international Regions.

Beyond the core RAG architecture, we covered key design considerations for production deployments: vector store selection, versioning strategies, multi-Region deployment for disaster recovery, cross-Region inference for scaling foundation model throughput, and embedding model selection and chunking strategies. These patterns apply broadly to any team building multi-locale or high-availability RAG systems on AWS.

Ring continues to evolve their chatbot architecture toward an agentic model with dynamic agent selection and integration of multiple specialized agents. This agentic approach will allow Ring to route customer inquiries to specialized agents for device troubleshooting, order management, and product recommendations, demonstrating the extensibility of RAG-based support systems built on Amazon Bedrock.

To learn more about Amazon Bedrock Knowledge Bases, visit the Amazon Bedrock documentation.

About the authors