In this article, you’ll learn practical strategies for building useful machine learning solutions when you have limited compute, imperfect data, and little to no engineering support.

Topics we’ll cover include:

- What “low-resource” really looks like in practice.

- Why lightweight models and simple workflows often outperform complexity in constrained settings.

- How to handle messy and missing data, plus simple transfer learning tricks that still work with small datasets.

Let’s get started.

Building Practical Machine Learning in Low-Resource Settings

Image by Author

Most people who want to build machine learning models don’t have powerful servers, pristine data, or a full-stack team of engineers. This is especially true if you live in a rural area and run a small business (or you’re just starting out with minimal tools): you probably don’t have access to many resources.

But you can still build powerful, useful solutions.

Many meaningful machine learning projects happen in places where computing power is limited, the internet is unreliable, and the “dataset” looks more like a shoebox full of handwritten notes than a Kaggle competition. But that’s also where some of the cleverest ideas come to life.

Here, we’ll talk about how to make machine learning work in these environments, with lessons pulled from real-world projects, along with some practical patterns seen on platforms like StrataScratch.

What Low-Resource Really Means

In short, working in a low-resource setting likely looks like this:

- Outdated or slow computers

- Patchy or no internet

- Incomplete or messy data

- A one-person “data team” (probably you)

These constraints might feel limiting, but there’s still plenty of room for your solutions to be smart, efficient, and even innovative.

Why Lightweight Machine Learning Is Actually a Power Move

The truth is that deep learning gets a lot of hype, but in low-resource environments, lightweight models are your best friend. Logistic regression, decision trees, and random forests might sound old-school, but they get the job done.

They’re fast. They’re interpretable. And they run beautifully on basic hardware.

Plus, when you’re building tools for farmers, shopkeepers, or community workers, clarity matters. People need to trust your models, and simple models are easier to explain and understand.

Common wins with classic models:

- Crop classification

- Predicting inventory levels

- Equipment maintenance forecasting

So, don’t chase complexity. Prioritize clarity.

Turning Messy Data into Magic: Feature Engineering 101

If your dataset is a little (or a lot) chaotic, welcome to the club. Broken sensors, missing sales logs, handwritten notes… we’ve all been there.

Here’s how you can extract meaning from messy inputs:

1. Temporal Features

Even inconsistent timestamps can be useful. Break them down into:

- Day of week

- Time since last event

- Seasonal flags

- Rolling averages
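A minimal pandas sketch of those four features, assuming a hypothetical sales log with a `timestamp` column and irregular gaps between records. The rainy-season months and the 7-day window are illustrative assumptions, not recommendations.

```python
import pandas as pd

# Hypothetical sales log with irregular timestamps (column names are illustrative)
log = pd.DataFrame({
    "timestamp": pd.to_datetime([
        "2024-01-01 09:15", "2024-01-03 17:40", "2024-01-04 08:05",
        "2024-03-10 12:00", "2024-03-11 10:30",
    ]),
    "units_sold": [12, 7, 9, 20, 15],
}).sort_values("timestamp").reset_index(drop=True)

# Day of week (Monday=0 ... Sunday=6)
log["day_of_week"] = log["timestamp"].dt.dayofweek

# Time since last event, in hours (NaN for the very first record)
log["hours_since_last"] = log["timestamp"].diff().dt.total_seconds() / 3600

# Seasonal flag: March to May is an assumed rainy season, purely for illustration
log["rainy_season"] = log["timestamp"].dt.month.isin([3, 4, 5]).astype(int)

# Rolling average over the previous 7 calendar days (time-based, so gaps are handled)
log["units_7d_avg"] = (
    log.set_index("timestamp")["units_sold"].rolling("7D").mean().to_numpy()
)

print(log)
```

A time-based window (`"7D"`) averages over calendar days rather than a fixed number of rows, which is usually what you want when records arrive irregularly.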

2. Categorical Grouping

Too many categories? Group them. Instead of tracking every product name, try “perishables,” “snacks,” or “tools.”
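A sketch of the grouping idea, assuming you maintain a small hand-written mapping from product names to groups. The products and the mapping are hypothetical; anything unmapped lands in an `other` bucket so new items don’t break the pipeline.

```python
import pandas as pd

# Hypothetical product names and an illustrative, hand-written mapping to broader groups
sales = pd.DataFrame({
    "product": ["bananas", "milk", "crisps", "biscuits", "hammer", "glue", "mangoes"],
})
product_groups = {
    "bananas": "perishables", "milk": "perishables", "mangoes": "perishables",
    "crisps": "snacks", "biscuits": "snacks",
    "hammer": "tools",
}

# Map each product to its group; anything missing from the mapping becomes "other"
sales["product_group"] = sales["product"].map(product_groups).fillna("other")
print(sales["product_group"].value_counts())
```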

3. Domain-Based Ratios

Ratios often beat raw numbers. You can try:

- Fertilizer per acre

- Sales per inventory unit

- Water per plant
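Ratios need one guard in messy data: a zero or missing denominator. A minimal sketch, with hypothetical column names and values, that returns `NaN` instead of `inf` so downstream imputation can handle the gap:

```python
import numpy as np
import pandas as pd

# Hypothetical farm records; zero or missing denominators are common in messy logs
farms = pd.DataFrame({
    "fertilizer_kg": [120, 300, 50, 80],
    "area_acres": [2.0, 5.0, 0.0, np.nan],
    "water_litres": [4000, 9000, 1500, 2000],
    "plant_count": [400, 1000, 150, 0],
})

def safe_ratio(numerator, denominator):
    """Divide element-wise, returning NaN instead of inf when the denominator is 0 or missing."""
    return numerator / denominator.replace(0, np.nan)

farms["fertilizer_per_acre"] = safe_ratio(farms["fertilizer_kg"], farms["area_acres"])
farms["water_per_plant"] = safe_ratio(farms["water_litres"], farms["plant_count"])
print(farms[["fertilizer_per_acre", "water_per_plant"]])
```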

4. Robust Aggregations

Use medians instead of means to handle wild outliers (like sensor errors or data-entry typos).
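A quick illustration with made-up soil-moisture readings, where `999` stands in for a stuck sensor (an assumption for the example). One bad value drags field A’s mean far away, while its median barely moves:

```python
import pandas as pd

# Hypothetical soil-moisture readings; 999 represents a stuck-sensor value
readings = pd.DataFrame({
    "field": ["A", "A", "A", "A", "B", "B", "B"],
    "moisture": [31, 29, 30, 999, 22, 24, 23],
})

# Compare mean and median per field: field A's mean is pulled to 272.25 by the 999,
# while its median stays at 30.5
summary = readings.groupby("field")["moisture"].agg(["mean", "median"])
print(summary)
```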

5. Flag Variables

Flags are your secret weapon. Add columns like:

- “Manually corrected data”

- “Sensor low battery”

- “Estimate instead of actual”

They give your model context that matters.
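A sketch of how such flags could be derived, assuming a hypothetical log with a battery voltage column and a `source` column recording how each value was obtained. The 3.3 V threshold is an assumption for illustration, not a sensor specification.

```python
import pandas as pd

# Hypothetical sensor log; column names and the battery threshold are assumptions
log = pd.DataFrame({
    "yield_kg": [410.0, 395.0, 420.0, 380.0],
    "battery_v": [3.7, 3.1, 3.8, 2.9],
    "source": ["measured", "estimate", "measured", "corrected"],
})

# Each flag is a 0/1 column the model can learn from
log["sensor_low_battery"] = (log["battery_v"] < 3.3).astype(int)
log["is_estimate"] = (log["source"] == "estimate").astype(int)
log["manually_corrected"] = (log["source"] == "corrected").astype(int)
print(log)
```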

Missing Data?

Missing data can be a problem, but not always. Sometimes it is information in disguise, so it’s important to handle it carefully and transparently.

Treat Missingness as a Signal

Sometimes, what’s not filled in tells a story. If farmers skip certain entries, that might say something about their situation or priorities.
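One simple way to keep that signal is to record an indicator column before imputing anything. A minimal pandas sketch with a hypothetical survey column:

```python
import numpy as np
import pandas as pd

# Hypothetical survey where some farmers skipped the irrigation question
survey = pd.DataFrame({
    "farm_size_acres": [2.0, 5.5, 1.0, 3.0],
    "irrigation_hours": [4.0, np.nan, np.nan, 6.0],
})

# Record *whether* the value was missing before any imputation overwrites it
survey["irrigation_missing"] = survey["irrigation_hours"].isna().astype(int)
print(survey)
```

If you use scikit-learn, `SimpleImputer(add_indicator=True)` appends equivalent indicator columns as part of imputation.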

Stick to Simple Imputation

Go with medians, modes, or forward-fill. Fancy multi-model imputation? Skip it if your laptop is already wheezing.
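A sketch of those three strategies on a hypothetical daily table: median for a numeric column, mode for a categorical one, and forward-fill for a slowly changing series such as a price. Column names and values are made up.

```python
import numpy as np
import pandas as pd

# Hypothetical daily records with gaps
records = pd.DataFrame({
    "date": pd.date_range("2024-06-01", periods=5, freq="D"),
    "temperature": [24.0, np.nan, 26.0, 25.0, np.nan],
    "soil_type": ["loam", None, "loam", "clay", None],
    "price": [10.0, np.nan, np.nan, 12.0, 11.5],
})

# Numeric column: fill with the median, which is robust to outliers
records["temperature"] = records["temperature"].fillna(records["temperature"].median())

# Categorical column: fill with the mode (most frequent value)
records["soil_type"] = records["soil_type"].fillna(records["soil_type"].mode().iloc[0])

# Slowly changing series (rows must be in date order): carry the last known value forward
records["price"] = records["price"].ffill()

print(records)
```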

Use Domain Knowledge

Domain experts often have practical rules, like using average rainfall during planting season or accounting for known holiday sales dips.
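As one example of encoding such a rule, here is a sketch that fills a missing rainfall reading with the average for the same season rather than the yearly average. The `season` labels and numbers are hypothetical; a real rule should come from the domain expert.

```python
import numpy as np
import pandas as pd

# Hypothetical records; season labels and values are made up for illustration
rain = pd.DataFrame({
    "season": ["planting", "planting", "planting", "harvest", "harvest", "harvest"],
    "rainfall_mm": [110.0, np.nan, 130.0, 40.0, 50.0, np.nan],
})

# Domain rule: replace a missing reading with the average for the same season
# (the mean skips NaN), not with the overall average across the whole year
seasonal_avg = rain.groupby("season")["rainfall_mm"].transform("mean")
rain["rainfall_mm"] = rain["rainfall_mm"].fillna(seasonal_avg)
print(rain)
```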

Avoid Complex Chains

Don’t try to impute everything from everything else; it just adds noise. Define a few solid rules and stick to them.

Small Data? Meet Transfer Learning

Here’s a cool trick: you don’t need massive datasets to benefit from the big leagues. Even simple forms of transfer learning can go a long way.

Text Embeddings

Have inspection notes or written feedback? Use small, pretrained embeddings: big gains at low cost.

Global to Local

Take a global weather-yield model and adjust it using a few local samples. Simple linear corrections can work wonders.
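A minimal sketch of such a linear correction, assuming a hypothetical `global_model` function stands in for a pretrained global model. The local observations are made up (and deliberately noise-free so the fit is easy to check). We fit local yield as a linear function of the global prediction with `numpy.polyfit`, which needs only a handful of points.

```python
import numpy as np

def global_model(rainfall_mm):
    """Hypothetical stand-in for a pretrained global weather-yield model (tonnes/ha)."""
    return 0.5 + 0.01 * rainfall_mm

# A handful of local observations (made-up numbers for illustration)
local_rainfall = np.array([80.0, 120.0, 150.0, 200.0, 250.0])
local_yield = np.array([1.14, 1.46, 1.70, 2.10, 2.50])

# Fit local_yield ~ a + b * global_prediction by least squares
# (np.polyfit returns coefficients from the highest degree down: slope, then intercept)
global_pred = global_model(local_rainfall)
b, a = np.polyfit(global_pred, local_yield, deg=1)

def local_model(rainfall_mm):
    """Global prediction passed through the locally fitted linear correction."""
    return a + b * global_model(rainfall_mm)

print(f"slope={b:.3f}, intercept={a:.3f}, prediction at 100 mm={local_model(100):.3f}")
```

Fitting only two parameters is what makes this safe with very few local rows; the global model still supplies the overall shape of the relationship.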

Feature Selection from Benchmarks

Use public datasets to guide which features to include, especially if your local data is noisy or sparse.

Time Series Forecasting

Borrow seasonal patterns or lag structures from global trends and customize them to your local needs.
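A sketch of borrowing a seasonal shape, assuming you have a monthly seasonal index from a long global or regional series (the 12 values below are made up and average to 1.0) but only four months of local data. The local series is de-seasonalized with the borrowed index to estimate its level, and the forecast is that level times the borrowed shape. This is a simple multiplicative seasonal approach, not a full forecasting model.

```python
import numpy as np

# Hypothetical borrowed monthly index (Jan..Dec): each month's value relative to the
# annual mean; in practice this would be estimated from a long public series
global_seasonal_index = np.array(
    [0.6, 0.7, 0.9, 1.1, 1.3, 1.4, 1.3, 1.2, 1.0, 0.9, 0.8, 0.8]
)

# Only four months of local history (made-up numbers), January to April
local_history = np.array([30.0, 35.0, 45.0, 55.0])
months_observed = np.arange(4)  # month indices 0..3

# Estimate the local level by removing the borrowed seasonal shape from local data
local_level = np.mean(local_history / global_seasonal_index[months_observed])

# Forecast May..December as local level x borrowed seasonal shape
future_months = np.arange(4, 12)
forecast = local_level * global_seasonal_index[future_months]
print(np.round(forecast, 1))
```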

A Actual-World Case: Smarter Crop Decisions in Low-Useful resource Farming

A helpful illustration of light-weight machine studying comes from a StrataScratch undertaking that works with actual agricultural knowledge from India.

The purpose of this undertaking is to advocate crops that match the precise situations farmers are working with: messy climate patterns, imperfect soil, all of it.

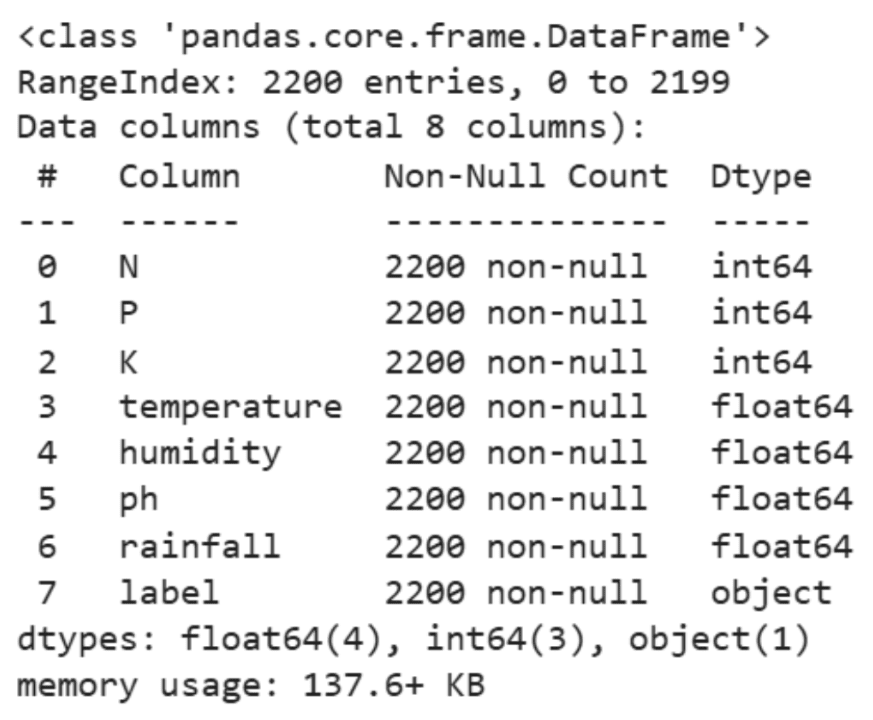

The dataset behind it’s modest: about 2,200 rows. But it surely covers vital particulars like soil vitamins (nitrogen, phosphorus, potassium) and pH ranges, plus primary local weather info like temperature, humidity, and rainfall. Here’s a pattern of the information:

As an alternative of reaching for deep studying or different heavy strategies, the evaluation stays deliberately easy.

We begin with some descriptive statistics:

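```python
# Summary statistics (count, mean, std, min, quartiles, max) for every numeric column of df
df.select_dtypes(include=['int64', 'float64']).describe()
```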

Then, we move on to some visual exploration:

```python
import matplotlib.pyplot as plt
import seaborn as sns

# Set the aesthetic style of the plots
sns.set_theme(style="whitegrid")

# Create side-by-side visualizations for temperature, humidity, and rainfall
fig, axes = plt.subplots(1, 3, figsize=(14, 5))

# Temperature distribution (histogram plus kernel density estimate)
sns.histplot(df['temperature'], kde=True, color="skyblue", ax=axes[0])
axes[0].set_title('Temperature Distribution')

# Humidity distribution
sns.histplot(df['humidity'], kde=True, color="olive", ax=axes[1])
axes[1].set_title('Humidity Distribution')

# Rainfall distribution
sns.histplot(df['rainfall'], kde=True, color="gold", ax=axes[2])
axes[2].set_title('Rainfall Distribution')

plt.tight_layout()
plt.show()
```

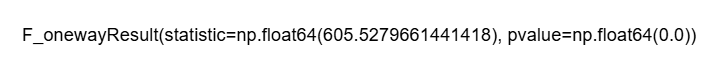

Finally, we run a few ANOVA tests to understand how environmental factors vary across crop types:

ANOVA Analysis for Humidity

```python
from scipy.stats import f_oneway

# Define crop_types based on your DataFrame 'df'
crop_types = df['label'].unique()

# Prepare a list of humidity values for each crop type
humidity_lists = [df[df['label'] == crop]['humidity'] for crop in crop_types]

# Perform the ANOVA test for humidity
anova_result_humidity = f_oneway(*humidity_lists)

anova_result_humidity
```

ANOVA Analysis for Rainfall

```python
from scipy.stats import f_oneway

# Define crop types based on your DataFrame 'df' if not already defined
crop_types_rainfall = df['label'].unique()

# Prepare a list of rainfall values for each crop type
rainfall_lists = [df[df['label'] == crop]['rainfall'] for crop in crop_types_rainfall]

# Perform the ANOVA test for rainfall
anova_result_rainfall = f_oneway(*rainfall_lists)

anova_result_rainfall
```

ANOVA Analysis for Temperature

```python
from scipy.stats import f_oneway

# Make sure crop types are defined from your DataFrame 'df'
crop_types_temp = df['label'].unique()

# Prepare a list of temperature values for each crop type
temperature_lists = [df[df['label'] == crop]['temperature'] for crop in crop_types_temp]

# Perform the ANOVA test for temperature
anova_result_temperature = f_oneway(*temperature_lists)

anova_result_temperature
```
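The three blocks above repeat the same pattern, so a small helper can run the test for any list of columns and return the results as one table. A sketch, assuming the label column is named `label` as in the project; the helper name is hypothetical and the toy data exists only to make the example self-contained.

```python
import numpy as np
import pandas as pd
from scipy.stats import f_oneway

def anova_by_label(frame, features, label_col="label"):
    """Run a one-way ANOVA per feature across the groups in label_col; return F and p."""
    rows = []
    for feature in features:
        groups = [g[feature].to_numpy() for _, g in frame.groupby(label_col)]
        result = f_oneway(*groups)
        rows.append({"feature": feature, "F": result.statistic, "p_value": result.pvalue})
    return pd.DataFrame(rows).sort_values("F", ascending=False).reset_index(drop=True)

# Toy data for demonstration only: humidity differs by crop, temperature does not
rng = np.random.default_rng(42)
toy = pd.DataFrame({
    "label": np.repeat(["rice", "maize", "chickpea"], 30),
    "humidity": np.concatenate([rng.normal(m, 3, 30) for m in (82, 65, 17)]),
    "temperature": rng.normal(25, 3, 90),  # same distribution for every crop
})

result = anova_by_label(toy, ["humidity", "temperature"])
print(result)
```

On the project’s data, the equivalent call would be `anova_by_label(df, ['humidity', 'rainfall', 'temperature'])`.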

This small-scale, low-resource project mirrors real-life challenges in rural farming. We all know that weather patterns don’t follow rules, and climate data can be patchy or inconsistent. So, instead of throwing a complex model at the problem and hoping it figures things out, we dug into the data manually.

Perhaps the most valuable aspect of this approach is its interpretability. Farmers aren’t looking for opaque predictions; they want guidance they can act on. Statements like “this crop performs better under high humidity” or “that crop tends to prefer drier conditions” translate statistical findings into practical decisions.
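One hedged way to produce statements like these from the data is to compare each crop’s median with the overall median. The helper name and the toy values below are hypothetical, and a difference in medians is only worth reporting for features where a test such as ANOVA shows a real effect.

```python
import pandas as pd

def plain_language_summary(frame, feature="humidity", label_col="label"):
    """Compare each crop's median with the overall median and phrase it in words."""
    overall = frame[feature].median()
    statements = []
    for crop, crop_median in frame.groupby(label_col)[feature].median().items():
        if crop_median > overall:
            direction = "higher"
        elif crop_median < overall:
            direction = "lower"
        else:
            direction = "typical"
        statements.append(
            f"{crop} is typically grown at {direction} {feature} "
            f"(median {crop_median:.0f} vs. {overall:.0f} overall)"
        )
    return statements

# Toy data for illustration only
toy_crops = pd.DataFrame({
    "label": ["rice"] * 3 + ["chickpea"] * 3,
    "humidity": [80, 82, 85, 15, 18, 20],
})

for sentence in plain_language_summary(toy_crops):
    print(sentence)
```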

This entire workflow was extremely lightweight. No fancy hardware, no expensive software, just trusty tools like pandas, Seaborn, and some basic statistical tests. Everything ran smoothly on a regular laptop.

The core analytical step used ANOVA to check whether environmental conditions such as humidity or rainfall differ significantly between crop types.
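For reference, the statistic that `f_oneway` computes is the standard one-way ANOVA F ratio. With $K$ crop types, $n_k$ rows for crop $k$, $N$ rows in total, crop means $\bar{y}_k$, and grand mean $\bar{y}$:

$$
F = \frac{\dfrac{1}{K-1}\displaystyle\sum_{k=1}^{K} n_k\,(\bar{y}_k - \bar{y})^2}{\dfrac{1}{N-K}\displaystyle\sum_{k=1}^{K}\sum_{i=1}^{n_k} (y_{ik} - \bar{y}_k)^2}
$$

A large $F$ means the differences between crop averages are large relative to the spread within each crop; the p-value comes from an F distribution with $K-1$ and $N-K$ degrees of freedom.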

In many ways, this captures the spirit of machine learning in low-resource environments. The methods stay grounded, computationally light, and easy to explain, yet they still offer insights that can help people make more informed decisions, even without advanced infrastructure.

For Aspiring Data Scientists in Low-Resource Settings

You might not have a GPU. You might be using free-tier tools. And your data might look like a puzzle with missing pieces.

But here’s the thing: you’re learning skills that many people overlook:

- Real-world data cleaning

- Feature engineering with intuition

- Building trust through explainable models

- Working smart, not flashy

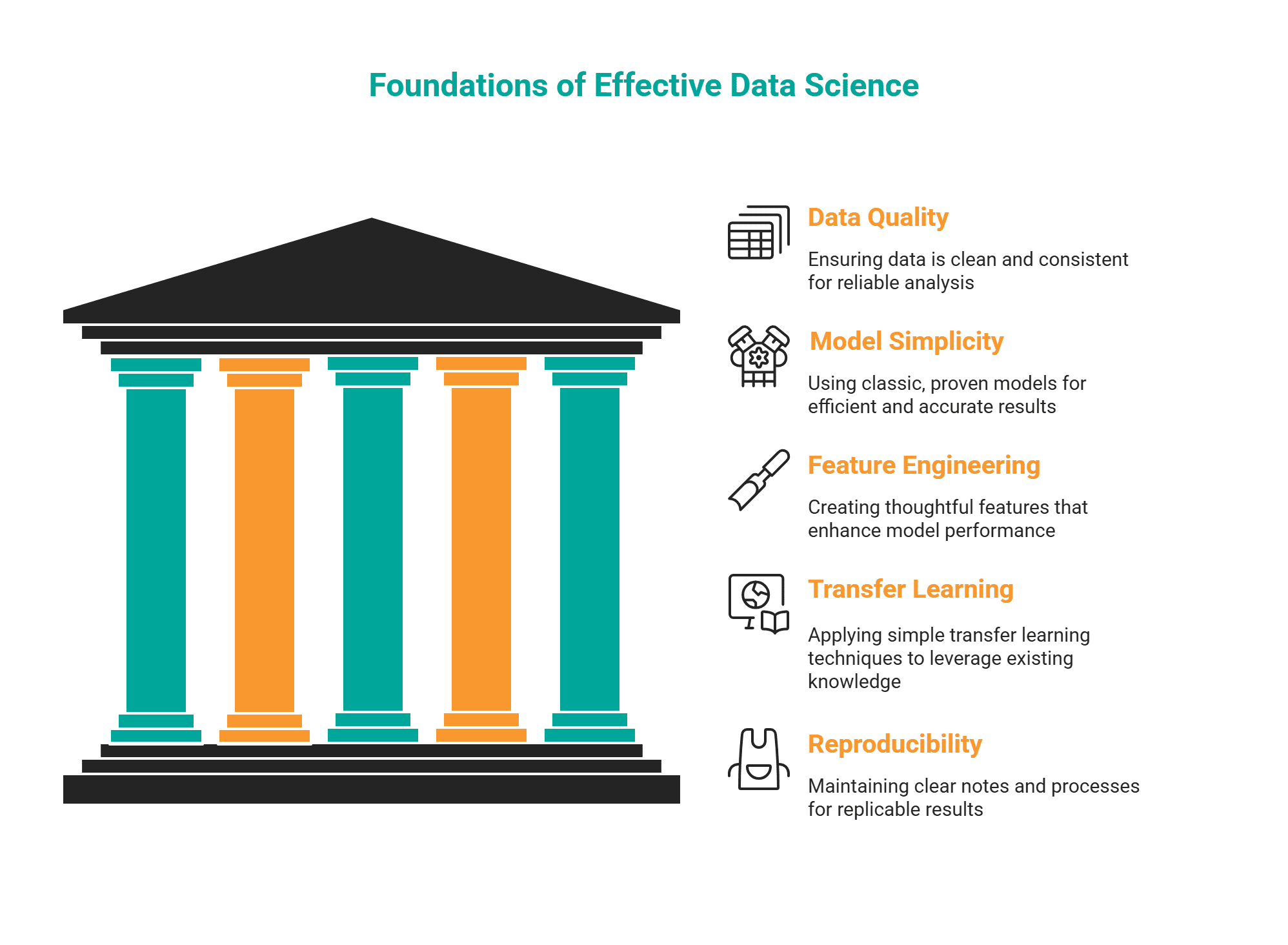

Prioritize this:

- Clean, consistent data

- Classic models that work

- Thoughtful features

- Simple transfer learning tricks

- Clear notes and reproducibility

In the end, this is the kind of work that makes a great data scientist.

Conclusion

Image by Author

Working in low-resource machine learning environments is possible. It asks you to be creative and passionate about your mission. It comes down to finding the signal in the noise and solving real problems that make life easier for real people.

In this article, we explored how lightweight models, smart features, honest handling of missing data, and clever reuse of existing knowledge can help you get ahead when working in such a situation.

What are your thoughts? Have you ever built a solution in a low-resource setup?