This post is co-written with Mark Ross from Atos.

Organizations pursuing AI transformation can face a familiar challenge: how to upskill their workforce at scale in a way that changes how teams build, deploy, and use AI. Traditional AI training approaches (online courses, certification programs, and classroom-based instruction) are necessary, but often insufficient. While they build foundational knowledge, many organizations struggle with low engagement, limited hands-on practice, and a gap between theoretical understanding and real-world application. As a result, teams may earn certifications without gaining the confidence or experience required to apply AI meaningfully to business problems.

Through Atos’ partnership with AWS, we’ve long recognized that hands-on learning is the missing ingredient in effective AI enablement. When combined with structured e-learning and certification pathways, experiential learning helps translate knowledge into impact. Today, Atos employees hold over 5,800 AWS Certifications and 11 Golden Jackets, reflecting our strong foundation in cloud and AI skills. But with a commitment to achieving a 100% AI-fluent workforce by 2026, we knew we needed a learning model that could scale engagement, accelerate practical skills, and motivate engineers to apply AI in realistic scenarios.

To address this, Atos partnered with AWS to deliver a hands-on, gamified learning experience through the AWS AI League, designed to move beyond passive learning and immerse participants in real AI challenges. In this post, we’ll explore how Atos used the AWS AI League to help accelerate AI education across 400+ participants, highlight the tangible benefits of gamified, experiential learning, and share actionable insights you can apply to your own AI enablement programs.

AI enablement through the AWS AI League

While e-learning courses and certifications are an essential foundation, many organizations struggle to translate that knowledge into hands-on experience, sustained engagement, and real business impact, particularly at scale.

The AWS AI League was designed to address this gap. Rather than focusing solely on conceptual learning, the program combines hands-on experimentation with structured competition, so builders can work directly with generative AI tools used in real-world environments. For Atos, this approach provided a way to accelerate applied AI skills across the organization while maintaining engagement, collaboration, and measurable outcomes.

The AWS AI League helps builders level up their AI skills by abstracting away deep infrastructure complexity while preserving the core mechanics of model customization and evaluation. Participants work with Amazon SageMaker and Amazon SageMaker JumpStart to fine-tune large language models (LLMs), gaining practical experience with techniques that are increasingly central to enterprise AI adoption.

Why fine-tuning matters for enterprise use cases

Fine-tuning a large language model is a form of transfer learning, a machine learning technique where a pre-trained model is adapted using a smaller, domain-specific dataset rather than being trained from scratch. For enterprise teams, this approach offers a pragmatic path to customization: it helps reduce training time and computational cost while allowing models to reflect specialized knowledge, terminology, and decision logic.

In practice, organizations that use fine-tuning can adapt general-purpose models to specific domains where accuracy, reasoning, and explainability are critical. For Atos, this meant tailoring models to the insurance underwriting domain, where understanding risk profiles, policy conditions, exclusions, and premium calculations requires more than generic language fluency. The AWS AI League demonstrates that, with the right structure and tooling, teams across roles (including solutions architects, developers, consultants, and business analysts) can fine-tune and deploy models without requiring deep machine learning specialization. This makes fine-tuning a practical capability for partner organizations focused on delivering customer-ready AI solutions.

How the AWS AI League works

The AWS AI League follows a three-stage structure designed to build hands-on, production-oriented AI skills while maintaining momentum and engagement.

The program begins with an immersive workshop that introduces the fundamentals of fine-tuning using SageMaker JumpStart. SageMaker JumpStart provides access to pre-trained foundation models through a guided interface, allowing participants to focus on model behavior and outcomes rather than infrastructure setup.

Participants then move into an intensive model development phase. During this stage, teams iterate across multiple fine-tuning strategies, experimenting with dataset composition, augmentation techniques, and hyperparameter settings. Model submissions are evaluated on a dynamic leaderboard powered by an AI-based evaluation system, which benchmarks performance across a consistent set of criteria. This structure encourages rapid experimentation and makes progress visible, allowing teams to compare their customized models against larger baseline models.

The program culminates in a live, interactive finale. Top-performing teams demonstrate their models through real-time challenges, with outputs evaluated using a multi-dimensional scoring system. Technical judges assess depth and correctness, an AI benchmark measures objective performance, and audience voting introduces a practical, user-oriented perspective. Together, these dimensions reinforce the League’s goal: turning hands-on learning into models that perform well in real-world scenarios.

Atos’s use case – Intelligent Insurance Underwriter

With this foundation in place, Atos selected a use case that closely reflects real customer needs: the Intelligent Insurance Underwriter. The goal, pursued through an AWS AI League event, was to fine-tune a large language model capable of analyzing complex insurance scenarios and providing expert-level underwriting guidance. The model was designed to assess risk, recommend appropriate policy conditions or deductibles, suggest premium adjustments, and clearly explain the reasoning behind each decision, all while aligning with professional industry standards.

This use case was chosen not as a theoretical exercise, but as a practical example of how generative AI can support underwriting professionals by improving consistency and efficiency across insurance product lines. Built on cost-effective, fine-tuned open source models and powered by Amazon SageMaker, SageMaker Unified Studio, and Amazon S3, the solution incorporates a knowledge base alongside reasoning and recommendation modules trained on proprietary underwriting data. The result is an affordable, customized assistant that enhances team productivity, sharpens risk assessment accuracy, and integrates seamlessly with the genuine industry expertise underwriters already rely on.

Fine-tuning with Amazon SageMaker Studio and Amazon SageMaker JumpStart

AWS AI League participants do their model fine-tuning within Amazon SageMaker Studio, a fully integrated, web-based development environment for machine learning. SageMaker Studio provides a low-code/no-code (LCNC) interface to build, fine-tune, deploy, and monitor generative AI models end-to-end. By following this approach, Atos participants could focus on experimentation and innovation rather than infrastructure management, helping accelerate time-to-value. AI League now also offers customization of Amazon Nova models through serverless SageMaker model customization and agentic challenges built on top of Amazon Bedrock AgentCore.

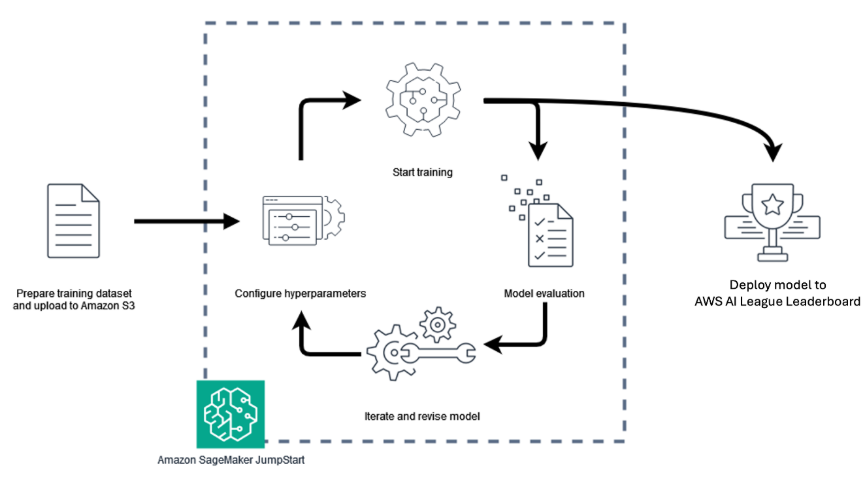

Users follow a streamlined series of steps within Amazon SageMaker Studio:

- Select a model – SageMaker JumpStart offers a catalog of pre-trained, publicly available foundation models for tasks such as text generation, summarization, and image creation. Participants can browse and select models from leading providers, which are pre-integrated for customization. For this competition, participants were required to fine-tune the Meta Llama 3.2 3B Instruct model, which is done in a no-code manner using Amazon SageMaker JumpStart.

- Provide a training dataset – Datasets stored in Amazon Simple Storage Service (Amazon S3) are connected directly to SageMaker, leveraging the virtually unlimited storage capacity of Amazon S3 for fine-tuning tasks.

- Perform fine-tuning – Users can configure hyperparameters such as learning rate, epochs, and batch size before launching the fine-tuning job. SageMaker then manages the training process, including provisioning compute resources and logging progress.

- Deploy the model – Once training is complete, participants can deploy their models directly from SageMaker Studio for inference or import them into Amazon Bedrock, which provides a fully managed environment for scalable production deployment.

- Evaluate and iterate – During the AWS AI League, evaluation was carried out using LLM-as-a-Judge, an internal judging system that automatically scored models on quality, accuracy, and responsiveness.

This simplified workflow, depicted above, shows the AWS AI League model development lifecycle and how it helps reduce the complexity of developing and operationalizing specialized AI models, while preserving performance, transparency, and cost-efficiency. For Atos, this hands-on process provides a practical, production-ready foundation for extending generative AI capabilities into customer-facing solutions.

Participants were required to generate insurance use case datasets in JSON Lines (JSONL) format. Each record consisted of two fields:

- Instruction – the prompt or question for the Intelligent Insurance Underwriter to consider.

- Response – an example of the ideal answer the fine-tuned model should produce.

These datasets formed the foundation for the model fine-tuning phase.
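As a rough illustration of the two-field record format described above, the following Python sketch writes and validates a small JSONL dataset. The lowercase key names `instruction` and `response`, the sample records, and the helper names `to_jsonl` and `validate_jsonl` are assumptions made for this example; the exact schema expected by the competition tooling may differ.

```python
import json

# Hypothetical sample records. The key names "instruction" and "response"
# are assumed from the two fields described in the post.
records = [
    {
        "instruction": "A homeowner in a flood-prone area requests buildings cover. What should the underwriter consider?",
        "response": "Review the flood zone rating and claims history, consider a higher flood deductible, and explain any exclusions clearly.",
    },
    {
        "instruction": "A small bakery asks for business interruption cover. Which risk factors matter most?",
        "response": "Assess fire protection, equipment age, and supplier dependence, then recommend an indemnity period that matches recovery time.",
    },
]


def to_jsonl(rows):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(row, ensure_ascii=False) for row in rows) + "\n"


def validate_jsonl(text):
    """Parse JSONL text and check every record has exactly the two expected fields."""
    parsed = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        if not line.strip():
            continue  # tolerate blank lines
        row = json.loads(line)
        if set(row) != {"instruction", "response"}:
            raise ValueError(f"line {line_number}: unexpected fields {sorted(row)}")
        if not all(isinstance(value, str) and value.strip() for value in row.values()):
            raise ValueError(f"line {line_number}: fields must be non-empty strings")
        parsed.append(row)
    return parsed


jsonl_text = to_jsonl(records)
dataset = validate_jsonl(jsonl_text)
print(f"{len(dataset)} valid records")
```

Validating the file before uploading it is a cheap way to catch malformed records that would otherwise only surface once a fine-tuning job has started.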

To simplify dataset creation, participants were given access to an AWS-provided PartyRock application, which offered an easy-to-use interface for generating and exporting data. Once complete, the datasets were uploaded to Amazon S3, where they served as the input for model fine-tuning.

During fine-tuning, participants could adjust a range of hyperparameters to influence the outcome, including, but not limited to, the following:

- Epochs – The number of times the fine-tuning process will pass over the dataset.

- Learning rate – The scaling applied to each update the model makes as it passes over the data.

After fine-tuning, participants deployed their customized language models in Amazon SageMaker and used the endpoints to perform inference. This allowed them to observe how the fine-tuned models responded to sample insurance queries and assess the quality of their outputs.
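For readers who prefer to query a deployed endpoint from code rather than from the Studio interface, the sketch below shows one possible approach using the SageMaker Runtime `invoke_endpoint` call in boto3. The request schema (`inputs` plus a `parameters` object), the default parameter values, and the helper names `build_payload` and `ask_endpoint` are assumptions for illustration; check the model card of the deployed model for the payload it actually expects.

```python
import json


def build_payload(question, temperature=0.6, top_p=0.9, max_new_tokens=256):
    """Build a JSON request body for a text-generation endpoint (assumed schema)."""
    if not 0.0 < top_p <= 1.0:
        raise ValueError("top_p must be in the range (0, 1]")
    if temperature < 0.0:
        raise ValueError("temperature must be non-negative")
    return json.dumps(
        {
            "inputs": question,
            "parameters": {
                "temperature": temperature,
                "top_p": top_p,
                "max_new_tokens": max_new_tokens,
            },
        }
    )


def ask_endpoint(endpoint_name, question, **generation_kwargs):
    """Send one question to a deployed SageMaker endpoint and return the decoded JSON reply."""
    import boto3  # imported lazily so build_payload can be used without AWS access

    client = boto3.client("sagemaker-runtime")
    result = client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=build_payload(question, **generation_kwargs).encode("utf-8"),
    )
    return json.loads(result["Body"].read())


# Inspect the request body locally; no AWS call is made here.
payload = json.loads(
    build_payload("Should a thatched-roof cottage carry a higher fire deductible?", temperature=0.2)
)
print(payload["parameters"])
```

Calling `ask_endpoint("my-endpoint-name", "...")` would require valid AWS credentials and a running endpoint; the endpoint name here is a placeholder.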

Results varied across participants. Some fine-tuned models delivered strong, contextually relevant answers, while others displayed signs of overfitting, a condition where a model learns the training data too precisely, leading to repetitive or irrelevant responses when exposed to new inputs. Overtrained models, for instance, tend to echo phrases from the dataset rather than generalizing to unseen scenarios. Armed with these insights, participants evaluated their models’ performance and decided which versions to submit to the AWS AI League leaderboard and which to refine or discard. This iterative process emphasized experimentation, data quality, and parameter tuning as key success factors in achieving high-performing generative AI models.

Gamification ignites participation

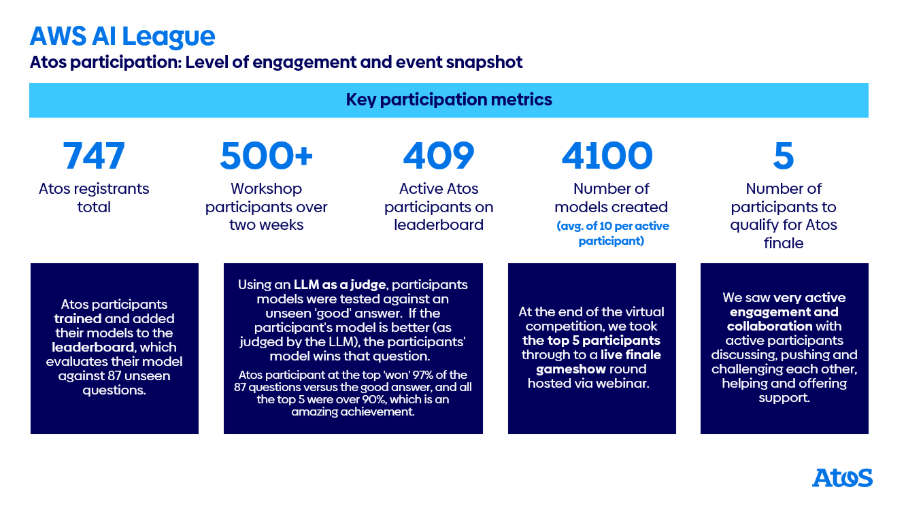

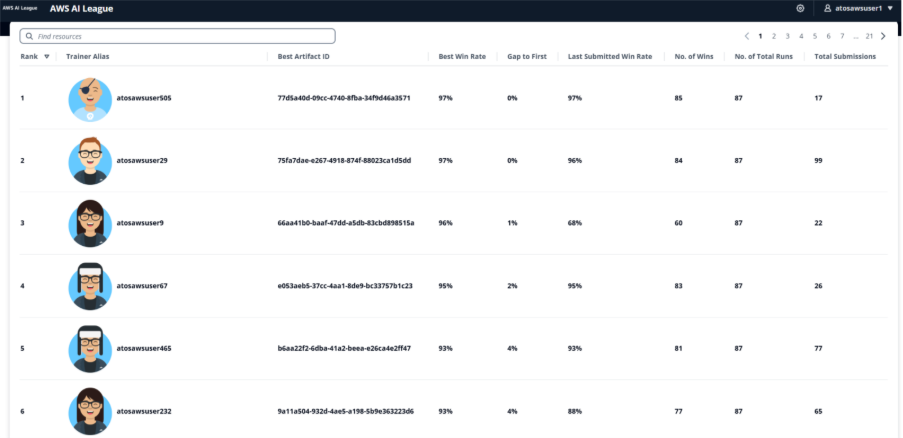

Hands-on labs and workshops are a great way to give people an opportunity to learn by doing, but a gamified approach where you’re competing with other people takes it to another level. Atos saw this with the AWS AI League. Following an initial kick-off workshop, Atos participants created and submitted initial models, before turning their attention to maximizing their scores on the leaderboard by iteratively creating or improving their datasets and tuning their hyperparameters over a two-week virtual league. By the completion of the virtual round, Atos had its highest level of engagement for a gamified competition, with 409 participants on the leaderboard and over 4,100 fine-tuned models created.

Despite the gamified nature of the competition, communication channels and office hours were full of people balancing sharing information with each other whilst avoiding giving everything away. It was a great balance, which made sure individuals who wanted to take part and improve were supported enough, whilst also having to figure some things out for themselves. The friendly competition was extremely fierce at the same time: to make the top five, a participant’s fine-tuned model was required to achieve at least a 93% win rate against the answers provided by a much larger model, showing the power of fine-tuning for domain-specific knowledge. The virtual stage of the competition was fully automated, with a Llama 3.2 90B LLM as a judge providing the scoring. Upon completion of the virtual round, the top five participants were taken forward to a live gameshow finale, competing for a place in the AWS finals during AWS re:Invent in Las Vegas in December.

To rank the top five, the live finale introduced additional scoring methods, as well as giving the finalists an opportunity to influence their model’s response. Finale scoring was split between 40% for LLM-as-a-Judge, 40% for our five human expert judges from Atos, and 20% for audience voting. Five rounds of questions provided ample chance to test the model’s performance, and during each question the finalists were able to influence model output with some system prompting and hyperparameter tuning for inference (temperature and top p to control the randomness and creativity of the answer). Finalists only had 90 seconds to tune their inference and submit their final answers, so it was a tense and close competition.
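The 40/40/20 split described above can be expressed as a simple weighted sum. The sketch below is illustrative only: it assumes all three dimensions are reported on a common 0 to 100 scale and that the five human judges’ scores are averaged before weighting, neither of which is confirmed by the event rules. The function name `finale_score` and the sample values are hypothetical.

```python
# Weights taken from the finale scoring split described in the post.
WEIGHTS = {"llm_judge": 0.40, "human_judges": 0.40, "audience": 0.20}


def finale_score(llm_judge, human_judge_scores, audience):
    """Combine the three scoring dimensions into one weighted score.

    Assumes every input is on the same 0-100 scale and that the human
    judges' scores are averaged before the weight is applied.
    """
    if not human_judge_scores:
        raise ValueError("at least one human judge score is required")
    human_average = sum(human_judge_scores) / len(human_judge_scores)
    return (
        WEIGHTS["llm_judge"] * llm_judge
        + WEIGHTS["human_judges"] * human_average
        + WEIGHTS["audience"] * audience
    )


# Illustrative values for one finalist in one round.
score = finale_score(llm_judge=80, human_judge_scores=[70, 75, 80, 85, 90], audience=60)
print(f"{score:.1f}")
```

Because audience voting carries only a fifth of the weight, a model that impresses the automated and human judges can still rank highly even with a lukewarm audience response.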

Tips to fine-tune your way to success

The fine-tuning competition comes down to two key factors: the participants’ ability to generate a dataset for the subject of the competition, and their ability to find the optimal hyperparameters to use for fine-tuning with that dataset.

While AWS provided a PartyRock application to generate a dataset, some of the Atos participants took inspiration from the provided application and remixed their own. The idea of this application was to a) generate more data and b) generate diverse and unique data, both improvements over the AWS-provided application. Some participants chose to use alternative generative AI tools they had access to in order to generate their own responses, but this required them to write the system prompts that the PartyRock application otherwise took care of, for example to verify that data was provided in the right format.

Larger datasets didn’t necessarily lead to better results, so there was also a need to review the datasets that had been generated and work out how to improve them. Successful participants also used generative AI for this, with general recommendations on how to improve (for example, for the Atos use case, areas of insurance that may have been missing from the dataset), as well as more specific recommendations and actions being taken on the dataset, such as removing items that were too similar. This resulted in a new PartyRock application being created and shared among participants to provide improvement recommendations.
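Participants used generative AI tools for this review step, but the idea of removing items that are too similar can also be sketched with the Python standard library. The example below is not the method used in the competition; it is a minimal alternative that compares instructions with `difflib.SequenceMatcher`, and the 0.9 threshold, the `instruction` key name, and the sample records are assumptions.

```python
from difflib import SequenceMatcher


def remove_near_duplicates(records, threshold=0.9):
    """Keep a record only if its instruction is not too similar to one already kept.

    Similarity is the SequenceMatcher ratio (0.0 to 1.0) on lowercased text.
    The pairwise comparison is quadratic, which is fine for small datasets.
    """
    kept = []
    for record in records:
        text = record["instruction"].lower().strip()
        is_duplicate = any(
            SequenceMatcher(None, text, other["instruction"].lower().strip()).ratio() >= threshold
            for other in kept
        )
        if not is_duplicate:
            kept.append(record)
    return kept


# Hypothetical records: the second differs from the first only by case and punctuation.
sample = [
    {"instruction": "What deductible suits a coastal property?", "response": "..."},
    {"instruction": "what deductible suits a coastal property??", "response": "..."},
    {"instruction": "How should a fleet motor policy be priced?", "response": "..."},
]

deduplicated = remove_near_duplicates(sample)
print(len(sample), "->", len(deduplicated))
```

Character-level similarity only catches near-verbatim repeats; records that ask the same question in different words would need a semantic comparison, which is where the generative AI review described above is more effective.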

Participants had control over several critical hyperparameters that significantly influenced fine-tuning outcomes. Epochs determine how many times the training process passes over the entire dataset: too few epochs result in underfitting, where the model hasn’t learned enough, while too many can cause overfitting, where the model memorizes training data rather than generalizing. Learning rate controls the magnitude of updates the model makes during each training step; a high learning rate allows faster training but risks overshooting optimal values, while a low learning rate provides more precise adjustments but requires longer training time.

Additional parameters included batch size, which affects training stability and memory usage, and Low-Rank Adaptation (LoRA) parameters such as lora_r (the rank of the adapter matrices) and lora_alpha (the scaling applied to their updates), which control the efficiency of the fine-tuning process. Successful participants approached hyperparameter tuning systematically, either changing single values at a time to isolate their effects or adjusting related parameters together while carefully logging results to identify patterns.
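The "change one value at a time" strategy described above can be sketched as a small generator that produces configurations differing from a baseline in exactly one hyperparameter. The parameter names other than `lora_r` and `lora_alpha`, all of the numeric values, and the helper name `one_at_a_time` are illustrative assumptions, not settings recommended by AWS or used by Atos.

```python
# Hypothetical baseline configuration; values are for illustration only.
BASELINE = {
    "epochs": 3,
    "learning_rate": 1e-4,
    "batch_size": 4,
    "lora_r": 8,
    "lora_alpha": 16,
}

# Candidate values to try for each hyperparameter under study.
CANDIDATES = {
    "epochs": [1, 3, 5],
    "learning_rate": [5e-5, 1e-4, 2e-4],
    "lora_r": [8, 16],
}


def one_at_a_time(baseline, candidates):
    """Yield (changed_name, config) pairs that differ from the baseline in one value."""
    for name, values in candidates.items():
        for value in values:
            if value == baseline[name]:
                continue  # the baseline itself is run separately
            config = dict(baseline)
            config[name] = value
            yield name, config


runs = list(one_at_a_time(BASELINE, CANDIDATES))

# A simple experiment log: fill in the score after each fine-tuning job finishes.
experiment_log = [{"changed": name, "config": config, "score": None} for name, config in runs]
for entry in experiment_log:
    print(entry["changed"], entry["config"][entry["changed"]])
```

Keeping a log like this makes it easier to attribute a leaderboard change to a single setting, at the cost of missing interactions between parameters that only appear when they are changed together.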

Understanding model performance and overfitting

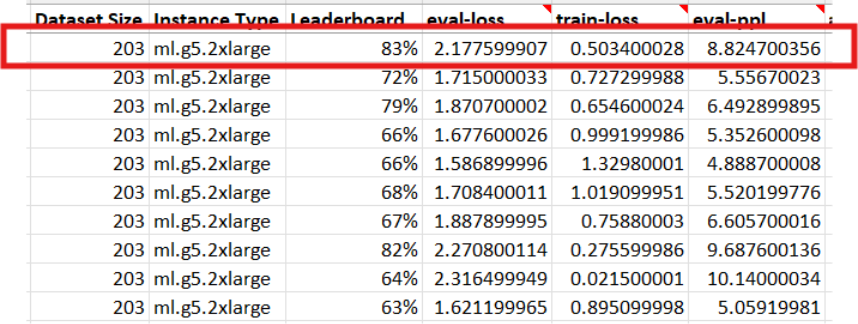

This discrepancy highlights an important aspect of model behavior. During fine-tuning, the model gradually becomes better at answering questions derived from the training and evaluation datasets, which are subsets of the same underlying data. However, the leaderboard evaluated each model using 87 unseen questions, examples that were not included in the training data.

During fine-tuning, participants could also monitor metrics such as evaluation loss (eval-loss) and perplexity (ppl), which help indicate how well a model fits the training data. Lower eval-loss and perplexity generally suggest the model is learning the dataset effectively, while large gaps between training and evaluation metrics can signal overfitting and a reduced ability to generalize. Evaluation loss is the loss value calculated on the validation or evaluation dataset during training. It measures how well the model predicts the correct next tokens for examples it has not directly trained on in that step. Perplexity is a commonly used metric for language models that represents how “surprised” the model is by the evaluation data. Lower perplexity indicates the model is better able to predict the correct next tokens, suggesting it has learned the underlying patterns in the dataset more effectively.

As a result, some models became overfitted, meaning they performed extremely well on the data they had seen but struggled to generalize to new questions. This pattern could be observed by deploying the model to an inference endpoint and interacting with it directly: overfitted models often produced irrelevant or repetitive responses, a clear sign that they had memorized patterns from the training set rather than learning to reason more broadly.
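The relationship between the evaluation loss and perplexity metrics described above can be made concrete. Under the standard definition, perplexity is the exponential of the mean cross-entropy loss, so the two metrics move together. The sketch below also includes a simple heuristic for spotting the train/eval gap associated with overfitting; the `looks_overfitted` helper, its `patience` parameter, and the loss values are hypothetical and are not part of the AWS AI League tooling.

```python
import math


def perplexity(eval_loss):
    """Perplexity as the exponential of the mean cross-entropy loss (in nats)."""
    return math.exp(eval_loss)


def looks_overfitted(train_losses, eval_losses, patience=2):
    """Flag a run where eval loss rises while train loss keeps falling.

    Returns True if that pattern holds for `patience` consecutive epochs.
    """
    consecutive = 0
    for i in range(1, min(len(train_losses), len(eval_losses))):
        eval_rising = eval_losses[i] > eval_losses[i - 1]
        train_falling = train_losses[i] < train_losses[i - 1]
        if eval_rising and train_falling:
            consecutive += 1
            if consecutive >= patience:
                return True
        else:
            consecutive = 0
    return False


# Illustrative per-epoch losses for two hypothetical runs.
train = [1.8, 1.2, 0.8, 0.5, 0.3]
eval_overfit = [1.9, 1.4, 1.3, 1.5, 1.7]    # eval loss turns upward after epoch 3
eval_healthy = [1.9, 1.5, 1.3, 1.2, 1.15]   # eval loss keeps improving

print(looks_overfitted(train, eval_overfit))
print(looks_overfitted(train, eval_healthy))
print(f"ppl at eval loss 1.3: {perplexity(1.3):.2f}")
```

A check like this is only a first signal. As the post notes, the leaderboard used unseen questions, so a model can look healthy on these metrics and still generalize poorly.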

Upskilling ambitions achieved

Through the AWS AI League, Atos’ ambition was to put generative AI technology into participants’ hands and allow them to feel more confident talking about and using it after the event, whilst having some fun and team building along the way. Participants learned how a smaller 3 billion parameter model (Llama 3.2 3B Instruct) could outperform a much larger 90 billion parameter model through fine-tuning with relevant domain knowledge, in this instance becoming a true virtual insurance underwriter assistant able to answer complex cases with appropriate recommendations on risk areas, appropriate levels of deductibles, and so on.

As generative AI and agentic AI develop, we see more use cases for specific knowledge within AI agents. Fine-tuning a model to provide this specific knowledge can result in a much smaller model that can provide faster inference at a lower cost than larger models, something that will be crucial as we enter the age of agentic AI. As you move toward agentic AI architectures where multiple specialized AI agents collaborate to solve complex problems, having cost-effective, domain-specific models becomes essential. Fine-tuned models can serve as specialized agents within larger agentic systems, each handling specific domains while maintaining fast response times and manageable costs.

Conclusion

As you continue to explore generative AI implementations, the ability to efficiently build, customize, and deploy specialized models becomes increasingly critical. The AWS AI League provides a structured pathway for partners like Atos to deepen their AI capabilities, whether enhancing existing offerings or creating entirely new, AI-driven services that address real-world customer needs. The AWS AI League program demonstrates how gamified learning can accelerate partners’ AI innovation while driving measurable business outcomes.

The AWS AI League delivered measurable outcomes for Atos beyond participant engagement. The program showed that fine-tuned 3B parameter models could achieve win rates exceeding 93% against much larger 90B parameter models for domain-specific tasks, demonstrating the cost-efficiency of specialized model development. From a resource perspective, the fine-tuned models required less computational infrastructure (running on ml.g5.4xlarge instances compared to the ml.g5.48xlarge instances needed for larger base models), translating to cost savings for inference at scale. The compressed learning timeline was particularly valuable, with participants able to develop practical AI skills in just two weeks that would typically require months of traditional training. The 409 active participants and 4,100+ fine-tuned models created during the event represented an acceleration in Atos’s journey toward their 2026 goal of 100% AI fluency across their workforce. Post-event surveys indicated that 85% of participants felt more confident discussing and implementing generative AI solutions with customers, directly supporting Atos’s business objectives.

If you’re interested in building AI capabilities through hands-on, gamified learning, you can learn more about hosting your own AWS AI League event on the official website.

To learn more about implementing AI solutions, you can also visit the AWS Artificial Intelligence blog for more stories about partners and customers implementing generative AI solutions across various industries.

About the authors