Basis fashions ship spectacular out-of-the-box efficiency for common duties, however many organizations want fashions to devour their enterprise information. Mannequin customization helps you bridge the hole between general-purpose AI and your particular enterprise wants when constructing functions that require domain-specific experience, imposing communication types, optimizing for specialised duties like code era, monetary reasoning, or guaranteeing compliance with business rules. The problem lies in tips on how to customise successfully. Conventional supervised fine-tuning delivers outcomes, however solely you probably have 1000’s of rigorously labeled examples displaying not simply the right closing reply, but in addition the entire reasoning path to achieve it. For a lot of real-world functions, particularly these duties the place a number of legitimate answer paths exist, creating these detailed step-by-step demonstrations can typically be costly, time-consuming.

On this publish, we discover reinforcement fine-tuning (RFT) for Amazon Nova fashions, which could be a highly effective customization method that learns by way of analysis somewhat than imitation. We’ll cowl how RFT works, when to make use of it versus supervised fine-tuning, real-world functions from code era to customer support, and implementation choices starting from totally managed Amazon Bedrock to multi-turn agentic workflows with Nova Forge. You’ll additionally be taught sensible steering on information preparation, reward perform design, and finest practices for reaching optimum outcomes.

A brand new paradigm: Studying by analysis somewhat than imitation

What when you might train a automotive to not solely be taught all of the paths on a map, however to additionally discover ways to navigate if a mistaken flip is taken? That’s the core concept behind reinforcement fine-tuning (RFT), a mannequin customization method we’re excited to carry to Amazon Nova fashions. RFT shifts the paradigm from studying by imitation to studying by analysis. As an alternative of offering 1000’s of labeled examples, you present prompts and outline what makes a closing reply right by way of take a look at instances, verifiable outcomes, or high quality standards. The mannequin then learns to optimize these standards by way of iterative suggestions, discovering its personal path to right options.

RFT helps mannequin customization for code era and math reasoning by verifying outputs robotically, eliminating the necessity for offering detailed step-by-step reasoning. We made RFT obtainable throughout our AI companies to fulfill you wherever you’re in your AI journey: begin easy with the fully-managed expertise obtainable in Amazon Bedrock, acquire extra management with SageMaker Coaching Jobs, scale to superior infrastructure with SageMaker HyperPod, or unlock frontier capabilities with Nova Forge for multi-turn conversations and {custom} reinforcement studying environments.

In December 2025, Amazon launched the Nova 2 household—Amazon’s first fashions with built-in reasoning capabilities. Not like conventional fashions that generate responses immediately, reasoning fashions like Nova 2 Lite have interaction in step-by-step downside decomposition, performing intermediate pondering steps earlier than producing closing solutions. This prolonged pondering course of mirrors how people method complicated analytical duties. When mixed with RFT, this reasoning functionality turns into notably highly effective, RFT can optimize not simply what reply the mannequin produces, however the way it causes by way of issues, instructing it to find extra environment friendly reasoning paths whereas decreasing token utilization. As of at this time, RFT is just supported with text-only use instances.

Actual-World Use Circumstances

RFT excels in situations the place you may outline and confirm right outcomes, however creating detailed step-by-step answer demonstrations at scale is impractical. Under are a number of the use instances, the place RFT could be a good choice:

- Code era: You need code that’s not simply right, but in addition environment friendly, readable, and handles edge instances gracefully, resembling qualities you may confirm programmatically by way of take a look at execution and efficiency metrics.

- Customer support: That you must consider whether or not replies are useful, keep your model’s voice, and strike the proper tone for every scenario. These are judgment calls that may’t be lowered to easy guidelines however could be assessed by an AI choose educated in your communication requirements.

- Different functions: Content material moderation, the place context and nuance matter; multi-step reasoning duties like monetary evaluation or authorized doc evaluation; and power utilization, the place you could train fashions when and tips on how to name APIs or question databases. In every case, you may outline and confirm right outcomes programmatically, even when you may’t simply display the step-by-step reasoning course of at scale.

- Exploration-heavy issues: Use instances like recreation taking part in and technique, useful resource allocation, and scheduling profit from instances the place the mannequin makes use of totally different approaches and learns from suggestions.

- Restricted labeled information situations: Use instances the place restricted labeled datasets can be found like domain-specific functions with few expert-annotated examples, new downside domains with out established answer patterns, expensive-to-label duties (medical analysis, authorized evaluation). In these use instances, RFT helps to optimize the rewards computed from the reward features.

How RFT Works

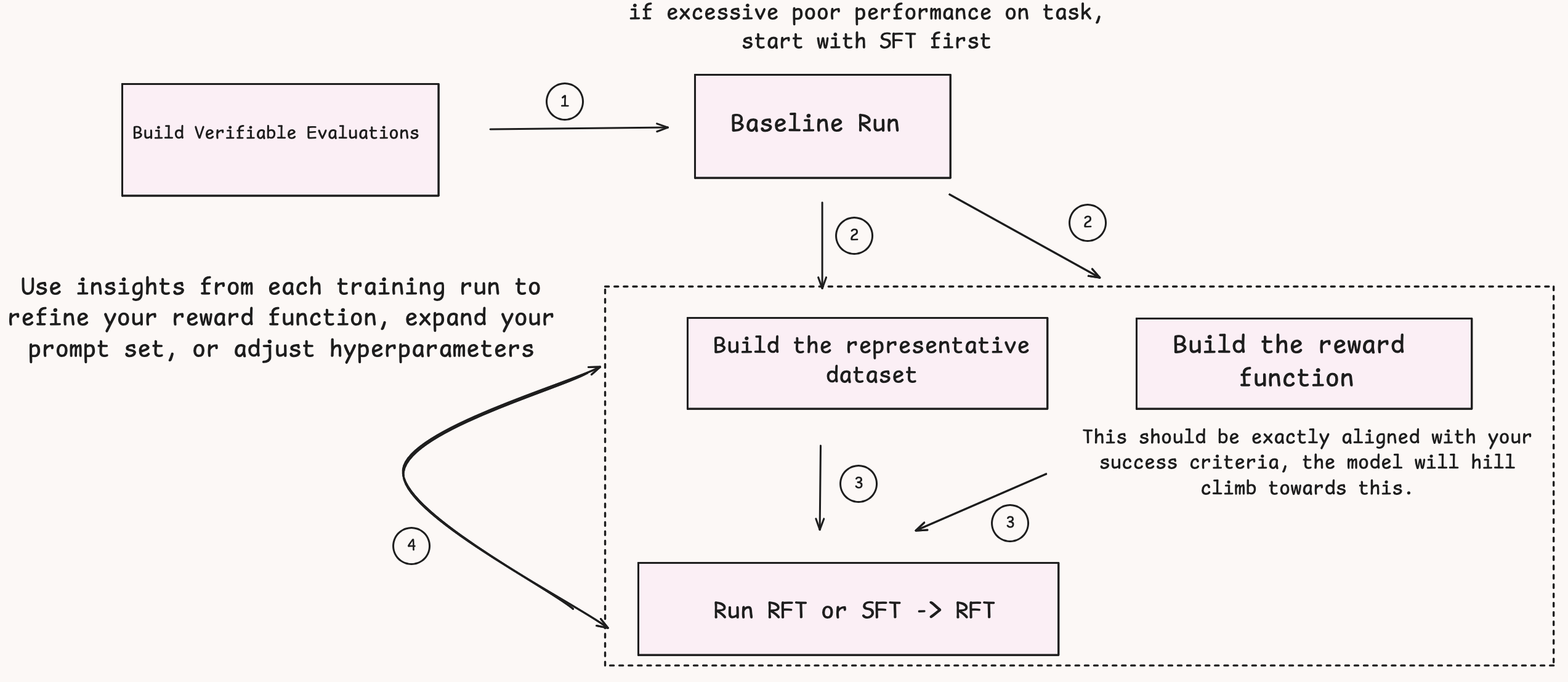

RFT operates by way of a three-stage automated course of (proven in Determine 1):

Stage 1: Response era – The actor mannequin (the mannequin you’re customizing) receives prompts out of your coaching dataset and generates a number of responses per immediate—usually 4 to eight variations. This variety provides the system a variety of responses to guage and be taught from.

Stage 2: Reward computation – As an alternative of evaluating responses to labeled examples, the system evaluates high quality utilizing reward features. You’ve gotten two choices:

- Reinforcement studying through verifiable rewards (RLVR): Rule-based graders carried out as AWS Lambda features, excellent for goal duties like code execution or math downside verification the place you may programmatically examine correctness.

- Reinforcement studying from AI suggestions (RLAIF): AI-based judges that consider responses based mostly on standards you configure, superb for subjective duties like assessing helpfulness, creativity, or adherence to model voice.

Stage 3: Actor mannequin coaching – The system makes use of the scored prompt-response pairs to coach your mannequin by way of a reinforcement studying algorithm, like Group Relative Coverage Optimization (GRPO), optimized for language fashions. The mannequin learns to maximise the chance of producing high-reward responses whereas minimizing low-reward responses. This iterative course of continues till the mannequin achieves your required efficiency.

Determine 1: Illustration of how single move of RFT works

Key Advantages of RFT

The next are the important thing advantages of RFT:

- No large, labeled datasets required – RFT solely wants prompts and a technique to consider high quality. If utilizing Bedrock RFT, you may even leverage present Bedrock API invocation logs as RFT information, eliminating the necessity for specifically created datasets.

- Optimized for verifiable outcomes – Not like supervised fine-tuning that requires express demonstrations of tips on how to attain right solutions, RFT is optimized for duties the place you may outline and confirm right outcomes, however a number of legitimate reasoning paths could exist.

- Lowered token utilization – By optimizing the mannequin’s reasoning course of, RFT can scale back the variety of tokens required to perform a process, reducing each price and latency in manufacturing.

- Safe and monitored – Your proprietary information by no means leaves AWS’s safe atmosphere throughout the customization course of, and also you get real-time monitoring of coaching metrics to trace progress and guarantee high quality.

Implementation tiers: From easy to complicated

Amazon gives a number of implementation paths for reinforcement fine-tuning with Nova fashions, starting from totally managed experiences to customizable infrastructure. By following this tiered method you may match your RFT implementation to your particular wants, technical experience, and desired stage of management.

Amazon Bedrock

Amazon Bedrock offers an entry level to RFT with a totally managed expertise that requires minimal ML experience. By means of the Amazon Bedrock console or API, you may add your coaching prompts, configure your reward perform as an AWS Lambda, and launch your reinforcement fine-tuning job with just some clicks. Bedrock handles all infrastructure provisioning, coaching orchestration, and mannequin deployment robotically. This method works nicely for simple use instances the place you could optimize particular standards with out managing infrastructure. The simplified workflow makes RFT accessible to groups with out devoted ML engineers whereas nonetheless delivering highly effective customization capabilities. Bedrock RFT helps each RLVR (rule-based rewards) and RLAIF (AI-based suggestions) approaches, with built-in monitoring and analysis instruments to trace your mannequin’s enchancment. To get began, see the Amazon Nova RFT GitHub repository.

Amazon SageMaker Serverless Mannequin Customization

Amazon SageMaker AI’s serverless mannequin customization is purpose-built for ML practitioners who’re prepared to maneuver past immediate engineering and RAG, and into fine-tuning LLMs for high-impact, specialised use instances. Whether or not the objective is enhancing complicated reasoning, domain-specific code era, or optimizing LLMs for agentic workflows together with planning, device calling, and reflection, SageMaker’s providing removes the standard infrastructure and experience limitations that gradual experimentation. At its core, the service brings superior reinforcement studying methods like GRPO with RLVR/RLAIF to builders with out requiring complicated RL setup, alongside a complete analysis suite that goes nicely past fundamental accuracy metrics. Complementing this, AI-assisted artificial information era, built-in experiment monitoring, and full lineage and audit path assist spherical out a production-grade customization pipeline. Deployment flexibility permits groups to ship fine-tuned fashions to SageMaker endpoints, Amazon Bedrock, or {custom} infrastructure, making it a compelling end-to-end serverless answer for groups seeking to speed up their mannequin customization cycles and unlock the complete potential of fashions like Amazon Nova in real-world functions.

SageMaker Coaching Jobs

For groups that want extra management over the coaching course of, Amazon SageMaker Coaching Jobs provide a versatile center floor with managed compute and talent to tweak a number of hyperparameters. You can too save intermediate checkpoints and use them to create iterative coaching workflows like chaining supervised fine-tuning (SFT) and RFT jobs to progressively refine your mannequin. You’ve gotten the pliability to decide on between LoRA and full-rank coaching approaches, with full management over hyperparameters. For deployment, you may select between Amazon Bedrock for totally managed inference or Amazon SageMaker endpoints the place you management occasion varieties, batching, and efficiency tuning. This tier is good for ML engineers and information scientists who want customization past Amazon Bedrock however don’t require devoted infrastructure. SageMaker Coaching Jobs additionally combine seamlessly with the broader Amazon SageMaker AI ecosystem for experiment monitoring, mannequin registry, and deployment pipelines. Amazon Nova RFT on SageMaker Coaching Job makes use of YAML recipe recordsdata to configure coaching jobs. You may receive base recipes from the SageMaker HyperPod recipes repository.

Finest practices:

- Information format: Use JSONL format with one JSON object per line.

- Reference solutions: Embrace floor fact values that your reward perform will examine in opposition to mannequin predictions.

- Begin small: Start with 100 examples to validate your method earlier than scaling.

- Customized fields: Add any metadata your reward perform wants for analysis.

- Reward Operate: Design for velocity and scalability utilizing AWS Lambda.

- To get began with Amazon Nova RFT job on Amazon SageMaker Coaching Jobs, see the SFT and RFT notebooks.

SageMaker HyperPod

SageMaker HyperPod delivers enterprise-grade infrastructure for large-scale RFT workloads with persistent Kubernetes-based clusters optimized for distributed coaching. This tier builds on all of the options obtainable in SageMaker Coaching Jobs—together with checkpoint administration, iterative coaching workflows, LoRA and full-rank coaching choices, and versatile deployment— on a a lot bigger scale with devoted compute assets and specialised networking configurations. The RFT implementation in HyperPod is optimized for increased throughput and quicker convergence by way of state-of-the-art asynchronous reinforcement studying algorithms, the place inference servers and coaching servers work independently at full velocity. These algorithms account for this asynchrony and implement cutting-edge methods used to coach basis fashions. HyperPod additionally offers superior information filters that offer you granular management over the coaching course of and scale back the probabilities of crashes. You acquire granular management over hyperparameters to maximise throughput and efficiency. HyperPod is designed for ML platform groups and analysis organizations that must push the boundaries of RFT at scale. Amazon Nova RFT makes use of YAML recipe recordsdata to configure coaching jobs. You may receive base recipes from the SageMaker HyperPod recipes repository.

- For extra info, see the RFT based mostly analysis to get began with Amazon Nova RFT job on Amazon SageMaker HyperPod.

Nova Forge

Nova Forge offers superior reinforcement suggestions coaching capabilities designed for AI analysis groups and practitioners in constructing refined agentic functions. By breaking free from single-turn interplay and Lambda timeout constraints, Nova Forge permits complicated, multi-turn workflows with custom-scaled environments operating in your individual VPC. This structure provides you full management over trajectory era, reward features, and direct interplay with coaching and inference servers capabilities important for frontier AI functions that customary RFT tiers can not assist. Nova Forge makes use of Amazon SageMaker HyperPod because the coaching platform together with offering different options resembling information mixing with the Amazon Nova curated datasets together with intermediate checkpoints.

Key Options:

- Multi-turn dialog assist

- Reward features with >15-minute execution time

- Extra algorithms and tuning choices

- Customized coaching recipe modifications

- State-of-the-art AI methods

Every tier on this development builds on the earlier one, providing a pure progress path as your RFT must evolve. Begin with Amazon Bedrock for preliminary experiments, transfer to SageMaker Coaching Jobs as you refine your method, and graduate to HyperPod or Nova Forge utilizing HyperPod for specialised use instances. This versatile structure ensures you may implement RFT on the stage of complexity that matches your present wants whereas offering a transparent path ahead as these wants develop.

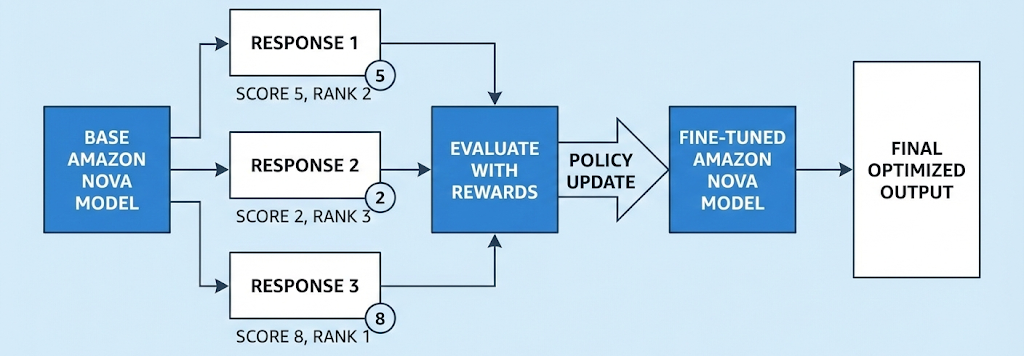

Systematic method to reinforcement fine-tuning (RFT)

Reinforcement fine-tuning (RFT) progressively improves pre-trained fashions by way of structured, reward-based studying iterations. The next is a scientific method to implementing RFT.

Step 0: Consider baseline efficiency

Earlier than beginning RFT, consider whether or not your mannequin performs at a minimally acceptable stage. RFT requires that the mannequin can produce at the least one right answer amongst a number of makes an attempt throughout coaching.

Key requirement: Group relative insurance policies require end result variety throughout a number of rollouts (usually 4-8 generations per immediate) to be taught successfully. The mannequin wants at the least one success or at the least one failure among the many makes an attempt so it might probably distinguish between constructive and destructive examples for reinforcement. If all rollouts constantly fail, the mannequin has no constructive sign to be taught from, making RFT ineffective. In such instances, you must first use supervised fine-tuning (SFT) to determine fundamental process capabilities earlier than trying RFT. In instances the place the failure modes are primarily resulting from lack of expertise, in these instances as nicely SFT may be simpler start line, whereas if the failure modes are resulting from poor reasoning, then RFT may be a greater choice to optimize on reasoning high quality.

Step 1: Establish the proper dataset and reward perform

Choose or create a dataset of prompts that signify the situations your mannequin will encounter in manufacturing. Extra importantly, design a reward perform that:

- Crisply follows what your analysis metrics monitor: Your reward perform ought to immediately measure the identical qualities you care about in manufacturing.

- Captures what you want from the mannequin: Whether or not that’s correctness, effectivity, model adherence, or a mix of targets.

Step 2: Debug and iterate

Monitor coaching metrics and mannequin rollouts all through the coaching course of

Coaching metrics to look at:

- Reward tendencies over time (ought to usually improve)

- Coverage divergence (KL) from the bottom mannequin

- Era size over time

Mannequin rollout evaluation:

- Pattern and evaluation generated outputs at common intervals

- Observe how the mannequin’s conduct evolves throughout coaching steps

Widespread points and options

Points solvable immediately within the reward perform:

- Format correctness: Add reward penalties for malformed outputs

- Language mixing: Penalize undesirable language switches

- Era size: Reward acceptable response lengths in your use case

Points requiring dataset/immediate enhancements:

- Restricted protection: Create a extra complete immediate set masking numerous problem

- Lack of exploration variety: Guarantee prompts enable the mannequin to discover numerous situations and edge instances

RFT is an iterative course of. Use insights from every coaching run to refine your reward perform, increase your immediate set, or modify hyperparameters earlier than the following iteration.

Key RFT options and when to decide on what

This part outlines the important thing options of RFT by way of a scientific breakdown of its core parts and capabilities for efficient mannequin optimization.

Full Rank in comparison with LoRA

RFT helps two coaching approaches with totally different useful resource tradeoffs. Full Rank coaching updates all mannequin parameters throughout coaching, offering most mannequin adaptation potential however requiring extra computational assets and reminiscence. Low-Rank Adaptation (LoRA) gives parameter-efficient fine-tuning that updates solely a small subset of parameters by way of light-weight adapter layers whereas preserving many of the mannequin frozen.

LoRA requires considerably much less computational assets and leads to smaller mannequin artifacts. Importantly, LoRA fashions deployed in Amazon Bedrock assist on-demand inference—you don’t want devoted situations and solely pay for the tokens you utilize. This makes LoRA a wonderful default start line: you may rapidly iterate and validate your custom-made mannequin with out upfront infrastructure prices. As your visitors demand grows or high-performance necessities justify the funding, you may transition to full rank coaching with devoted provisioned throughput situations for optimum throughput and lowest latency.

Reasoning in comparison with non-reasoning

RFT helps each reasoning and non-reasoning fashions, every optimized for several types of duties. Reasoning fashions generate express intermediate pondering steps earlier than producing closing solutions, making them superb for complicated analytical duties like mathematical problem-solving, multi-step logical deduction, and code era the place displaying the reasoning course of provides worth. You may configure reasoning effort ranges—excessive for optimum reasoning functionality or low for minimal overhead. Non-reasoning fashions present direct responses with out displaying intermediate reasoning steps, optimizing velocity and value. They’re finest suited to duties like chat-bot model Q&A the place you need quicker execution with out the reasoning overhead, although this will end in decrease high quality outputs in comparison with reasoning mode. The selection depends upon your process necessities: use reasoning mode when the intermediate pondering steps enhance accuracy, and also you want most efficiency on complicated issues. Use non-reasoning mode whenever you prioritize velocity and value effectivity over the potential high quality enhancements that express reasoning offers.

When to Use RFT in comparison with SFT

| Methodology | When it really works finest | Strengths | Limitations |

| Supervised effective‑tuning (SFT) | Effectively‑outlined duties with clear desired outputs, for instance, “Given X, the right output is Y.” | • Straight teaches factual information (for instance, “Paris is the capital of France”) • Very best when you will have excessive‑high quality immediate‑response pairs • Offers constant formatting and particular output constructions | • Requires express, labeled examples for each desired conduct • Could battle with duties that contain ambiguous or a number of legitimate options |

| Reinforcement effective‑tuning (RFT) | Situations the place a reward perform could be outlined, even when just one legitimate answer exists | • Optimizes complicated reasoning duties • Generates its personal coaching information effectively, decreasing the necessity for a lot of human‑labeled examples • Permits balancing competing targets (accuracy, effectivity, model) | • Wants the mannequin to provide at the least one right answer amongst a number of makes an attempt (usually 4‑8) • If the mannequin constantly fails to generate right options, RFT alone is not going to be efficient |

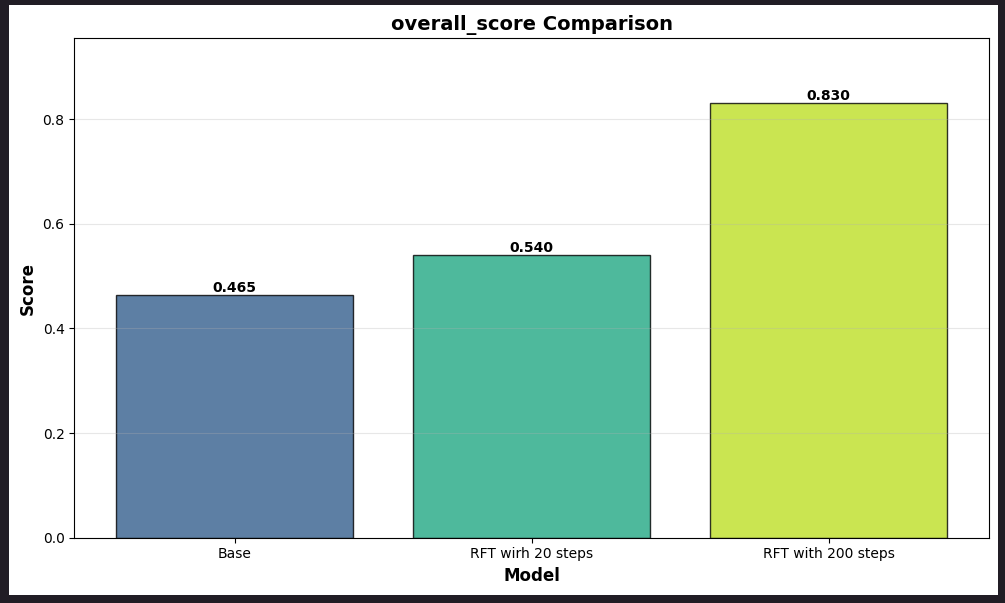

Case research: Monetary Evaluation Benchmark (FinQA) optimization with RFT

On this case research, we are going to stroll customers by way of an instance case research of FinQA, a monetary evaluation benchmark, and use that to display the optimization achieved in responses. On this instance we are going to use 1000 samples from the FinQA public dataset.

Step 1: Information preparation

Put together the dataset in a format that’s suitable with RFT schema as talked about RFT on Nova. RFT information follows the OpenAI conversational format. Every coaching instance is a JSON object containing. For our FinQA dataset, publish formatting an instance information level in prepare.jsonl will look as proven beneath:

Required fields:

- messages: Array of conversational turns with system, consumer, and optionally assistant roles

- reference_answer: Anticipated output or analysis standards for reward calculation

Non-obligatory fields:

- id: Distinctive identifier for monitoring and deduplication

- instruments: Array of perform definitions obtainable to the mannequin

- Customized metadata fields: Any further metadata for use whereas calculating rewards (for instance,

task_id,difficulty_level,area)

Step 2: Constructing the reward and grader perform

The reward perform is the core part that evaluates mannequin responses and offers suggestions alerts for coaching. It have to be carried out as an AWS Lambda perform that accepts mannequin responses and returns reward scores. At present, AWS Lambda features include a limitation of as much as quarter-hour execution time. Alter the timeout of the Lambda perform based mostly in your wants.

Finest practices:

The next are the suggestions to optimize your RFT implementation:

- Begin small: Start with 100-200 examples and few coaching epochs.

- Baseline with SFT first: If reward scores are constantly low, carry out SFT earlier than RFT.

- Design environment friendly reward features: Execute in seconds, decrease exterior API calls.

- Monitor actively: Observe common reward scores, look ahead to overfitting.

- Optimize information high quality: Guarantee numerous, consultant examples.

Step 3: Launching the RFT job

As soon as we now have information ready, we are going to launch RFT utilizing a SageMaker Coaching Jobs. The 2 key inputs for launching the RFT job are the enter dataset (input_data_s3) and the reward perform Lambda ARN. Right here we use the RFT container and RFT recipe as outlined within the following instance. The next is a snippet of how one can kick off the RFT Job: rft_training_job =rft_launcher(train_dataset_s3_path, reward_lambda_arn)

Operate:

Observe: To decrease the price of this experiment, you may set occasion depend to 2 as a substitute of 4 for LoRA

Step 4: Launching the RFT Eval Job

As soon as the RFT job is accomplished, you can even take the checkpoint generated after RFT and use that to guage the mannequin. This checkpoint can then be utilized in an analysis recipe, overriding the bottom mannequin, and executed in our analysis container. The next is a snippet of how you should utilize the generated checkpoint for analysis. Observe the identical code will also be used for operating a baseline analysis previous to checkpoint analysis.

The perform could be known as utilizing the next command:

- For baselining use:

- For publish RFT analysis use:

Operate:

Step 5: Monitoring the RFT metrics and iterating accordingly

As soon as the Jobs are launched, you may monitor the Job progress in Amazon CloudWatch logs for SageMaker Coaching Jobs to take a look at the RFT particular metrics. You can too monitor the CloudWatch logs of your reward Lambda perform to confirm how the rollouts and rewards are working. It’s good follow to validate the reward Lambda perform is calculating rewards as anticipated and isn’t moving into “reward hacking” (maximizing the reward sign in unintended ways in which don’t align with the precise goal).

Overview the next key metrics:

- Critic reward distribution metrics: These metrics (critic/rewards/imply, critic/rewards/max, critic/rewards/min) assist in discovering how the reward form seems to be like and if the rewards are on a path of gradual improve.

- Mannequin exploratory conduct metrics: This metrics assist us in understanding the exploratory nature of the mannequin. The upper actor/entropy signifies increased coverage variation and mannequin’s potential to discover newer paths.

Conclusion

With RFT you may carry out mannequin customization by way of evaluation-based studying, requiring solely prompts and high quality standards somewhat than large, labeled datasets. For totally managed implementation, begin with Amazon Bedrock. If you happen to want extra versatile management, transfer to SageMaker Coaching Jobs. For enterprise-scale workloads, SageMaker HyperPod offers the mandatory infrastructure. Alternatively, discover Nova Forge for multi-turn agentic functions with {custom} reinforcement studying environments.

Concerning the authors