Managing large photo collections presents significant challenges for organizations and individuals. Traditional approaches rely on manual tagging, basic metadata, and folder-based organization, which can become impractical when dealing with thousands of images containing multiple people and complex relationships. Intelligent photo search systems address these challenges by combining computer vision, graph databases, and natural language processing to transform how we discover and organize visual content. These systems capture not just who and what appears in photos, but the complex relationships and contexts that make them meaningful, enabling natural language queries and semantic discovery.

In this post, we show you how to build a comprehensive photo search system using the AWS Cloud Development Kit (AWS CDK) that integrates Amazon Rekognition for face and object detection, Amazon Neptune for relationship mapping, and Amazon Bedrock for AI-powered captioning. We demonstrate how these services work together to create a system that understands natural language queries like “Find all photos of grandparents with their grandchildren at birthday parties” or “Show me pictures of the family car during road trips.”

The key benefit is the ability to personalize and customize the search focus on specific people, objects, or relationships while scaling to handle thousands of photos and complex family or organizational structures. Our approach demonstrates that integrating Amazon Neptune graph database capabilities with Amazon AI services enables natural language photo search that understands context and relationships, moving beyond simple metadata tagging to intelligent photo discovery. We showcase this through a complete serverless implementation that you can deploy and customize for your specific use case.

Solution overview

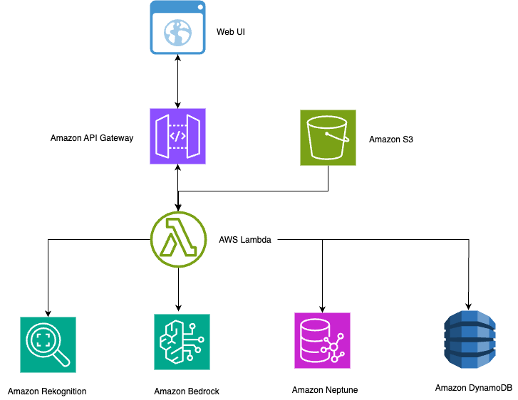

This section outlines the technical architecture and workflow of our intelligent photo search system. As illustrated in the architecture diagram, the solution uses serverless AWS services to create a scalable, cost-effective system that automatically processes photos and enables natural language search.

The serverless architecture scales efficiently for multiple use cases:

- Corporate – Employee recognition and event documentation

- Healthcare – HIPAA-compliant photo management with relationship tracking

- Education – Student and faculty photo organization across departments

- Events – Professional photography with automated tagging and client delivery

The architecture combines several AWS services to create a contextually aware photo search system:

The system follows a streamlined workflow:

- Images are uploaded to S3 buckets with automatic Lambda triggers.

- Reference photos in the faces/ prefix are processed to build recognition models.

- New photos trigger Amazon Rekognition for face detection and object labeling.

- Neptune stores connections between people, objects, and contexts.

- Amazon Bedrock creates contextual descriptions using detected faces and relationships.

- DynamoDB stores searchable metadata with fast retrieval capabilities.

- Natural language queries traverse the Neptune graph for intelligent results.
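The first two workflow steps can be sketched as a small routing function. This is a minimal illustration, not the repository's code: the function names and the `uploads/` key used in the example are assumptions, and only the `faces/` prefix comes from the workflow above.

```python
from urllib.parse import unquote_plus

REFERENCE_PREFIX = "faces/"  # prefix named in the workflow above


def classify_s3_records(event):
    """Split an S3 event notification into reference-photo and regular-photo keys."""
    reference_keys, photo_keys = [], []
    for record in event.get("Records", []):
        # S3 event notifications URL-encode object keys (a space arrives as '+').
        key = unquote_plus(record["s3"]["object"]["key"])
        if key.startswith(REFERENCE_PREFIX):
            reference_keys.append(key)
        else:
            photo_keys.append(key)
    return reference_keys, photo_keys


def handler(event, context):
    """Hypothetical Lambda entry point: decide which pipeline each object enters."""
    reference_keys, photo_keys = classify_s3_records(event)
    # Assumed next steps: index reference faces, then analyze the new photos.
    return {"reference": reference_keys, "photos": photo_keys}
```

Keeping the routing in a pure function makes it easy to unit test without AWS access.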

The complete source code is available on GitHub.

Prerequisites

Before implementing this solution, make sure you have the following:

Deploy the solution

Download the complete source code from the GitHub repository. More detailed setup and deployment instructions are available in the README.

The project is organized into several key directories that separate concerns and enable modular development:

The solution uses the following key Lambda functions:

- image_processor.py – Core processing with face recognition, label detection, and relationship-enriched caption generation

- search_handler.py – Natural language query processing with multi-step relationship traversal

- relationships_handler_neptune.py – Configuration-driven relationship management and graph connections

- label_relationships.py – Hierarchical label queries, object-person associations, and semantic discovery

To deploy the solution, complete the following steps:

- Run the following command to install dependencies:

pip install -r requirements_neptune.txt

- For a first-time setup, run the following command to bootstrap the AWS CDK:

cdk bootstrap

- Run the following command to provision AWS resources:

cdk deploy

- Set up Amazon Cognito user pool credentials in the web UI.

- Upload reference photos to establish the recognition baseline.

- Create sample family relationships using the API or web UI.

The system automatically handles face recognition, label detection, relationship resolution, and AI caption generation through the serverless pipeline, enabling natural language queries like “person’s mother with car” powered by Neptune graph traversals.

Key features and use cases

In this section, we discuss the key features and use cases for this solution.

Automate face recognition and tagging

With Amazon Rekognition, you can automatically identify individuals from reference photos, without manual tagging. Upload a few clear images per person, and the system recognizes them across your entire collection, regardless of lighting or angles. This automation reduces tagging time from weeks to hours, supporting corporate directories, compliance archives, and event management workflows.
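As a rough sketch of how reference photos and recognition could fit together, the following functions wrap the Amazon Rekognition `IndexFaces` and `SearchFacesByImage` operations. The function names, the 90.0 threshold, and the use of `ExternalImageId` to carry a person identifier are assumptions for illustration; the repository may organize this differently. Note that `SearchFacesByImage` matches only the largest face in the image, so photos with several people would need per-face handling.

```python
def register_reference_face(rekognition, collection_id, bucket, key, person_id):
    """Index one reference photo so later searches can return person_id."""
    response = rekognition.index_faces(
        CollectionId=collection_id,
        Image={"S3Object": {"Bucket": bucket, "Name": key}},
        ExternalImageId=person_id,  # assumption: the person ID is stored here
        MaxFaces=1,                 # a reference photo should show one person
        QualityFilter="AUTO",
    )
    return [record["Face"]["FaceId"] for record in response.get("FaceRecords", [])]


def identify_person(rekognition, collection_id, bucket, key, threshold=90.0):
    """Return the best-matching person ID for the largest face, or None."""
    response = rekognition.search_faces_by_image(
        CollectionId=collection_id,
        Image={"S3Object": {"Bucket": bucket, "Name": key}},
        FaceMatchThreshold=threshold,
        MaxFaces=5,
    )
    matches = response.get("FaceMatches", [])
    if not matches:
        return None
    best = max(matches, key=lambda match: match["Similarity"])
    return best["Face"].get("ExternalImageId")
```

In a Lambda function, the `rekognition` argument would typically be `boto3.client("rekognition")`; passing it in keeps the functions testable with a stub.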

Allow relationship-aware search

By using Neptune, the solution understands who appears in photos and how they’re connected. You can run natural language queries such as “Sarah’s manager” or “Mom with her children,” and the system traverses multi-hop relationships to return relevant images. This semantic search replaces manual folder sorting with intuitive, context-aware discovery.
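To make the idea of multi-hop traversal concrete, here is a small in-memory stand-in for the graph. It is not Neptune code: in the deployed system the traversal would run as a graph query against Neptune. The class, the `reports_to` relation name, and the sample people are all hypothetical.

```python
from collections import defaultdict


class RelationshipGraph:
    """Toy in-memory graph: people, typed relationships, and photo tags."""

    def __init__(self):
        self._edges = defaultdict(set)   # (person, relation) -> related people
        self._photos = defaultdict(set)  # person -> photo IDs they appear in

    def relate(self, source, relation, target):
        self._edges[(source, relation)].add(target)

    def tag_photo(self, photo_id, people):
        for person in people:
            self._photos[person].add(photo_id)

    def resolve(self, start, relations):
        """Follow a chain of relations from start; one hop per relation."""
        frontier = {start}
        for relation in relations:
            frontier = {
                target
                for person in frontier
                for target in self._edges.get((person, relation), ())
            }
        return frontier

    def photos_together(self, person, others):
        """Photos in which person appears with at least one of others."""
        own = self._photos.get(person, set())
        return {
            photo
            for other in others
            for photo in own & self._photos.get(other, set())
        }
```

A query such as “Sarah’s manager” maps to `resolve("sarah", ["reports_to"])`, and “Sarah’s manager’s manager” simply adds a second hop to the list.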

Understand objects and context automatically

Amazon Rekognition detects objects, scenes, and activities, and Neptune links them to people and relationships. This enables complex queries like “executives with company cars” or “teachers in classrooms.” The label hierarchy is generated dynamically and adapts to different domains, such as healthcare or education, without manual configuration.

Generate context-aware captions with Amazon Bedrock

Using Amazon Bedrock, the system creates meaningful, relationship-aware captions such as “Sarah and her manager discussing quarterly results” instead of generic ones. Captions can be tuned for tone (such as objective for compliance, narrative for marketing, or concise for executive summaries), improving both searchability and communication.
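One way tone-aware captioning might be wired up is to fold the detected people, relationships, and labels into a text prompt and send it through the Amazon Bedrock Converse API. The sketch below assumes exactly that; the prompt wording, the tone instructions, and the function names are illustrative, the model ID is left to the caller, and the post does not say whether the image itself is also sent to the model.

```python
# Assumed tone presets, mirroring the three tones mentioned above.
TONE_INSTRUCTIONS = {
    "objective": "Describe only what is visible, in neutral language.",
    "narrative": "Write a warm, story-like sentence.",
    "concise": "Use at most ten words.",
}


def build_caption_prompt(people, relationships, labels, tone="objective"):
    """Assemble a text prompt from recognition results and a tone preset."""
    if tone not in TONE_INSTRUCTIONS:
        raise ValueError(f"Unknown tone: {tone}")
    lines = [
        "Write a one-sentence caption for a photo.",
        f"People recognized: {', '.join(people) or 'none'}.",
        f"Known relationships: {'; '.join(relationships) or 'none'}.",
        f"Detected labels: {', '.join(labels) or 'none'}.",
        f"Tone: {TONE_INSTRUCTIONS[tone]}",
    ]
    return "\n".join(lines)


def generate_caption(bedrock_runtime, model_id, prompt):
    """Send the prompt through the Converse API and return the caption text."""
    response = bedrock_runtime.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": 100, "temperature": 0.3},
    )
    return response["output"]["message"]["content"][0]["text"].strip()
```

Here `bedrock_runtime` would typically be `boto3.client("bedrock-runtime")`. Keeping prompt construction separate from the API call lets you adjust tone presets without touching the invocation code.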

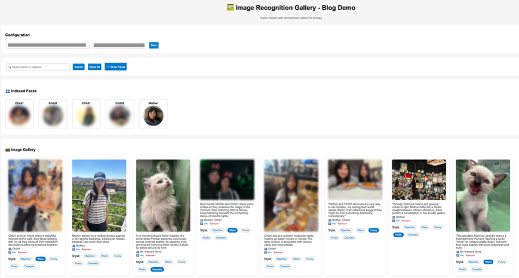

Deliver an intuitive web experience

With the web UI, users can search photos using natural language, view AI-generated captions, and adjust tone dynamically. For example, queries like “mother with children” or “outdoor activities” return relevant, captioned results instantly. This unified experience supports both enterprise workflows and personal collections.

The following screenshot demonstrates using the web UI for intelligent photo search and caption styling.

Scale graph relationships with label hierarchies

Neptune scales to model thousands of relationships and label hierarchies across organizations or datasets. Relationships are automatically generated during image processing, enabling fast semantic discovery while maintaining performance and flexibility as data grows.

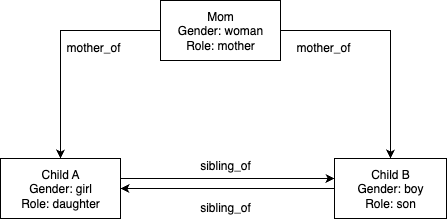

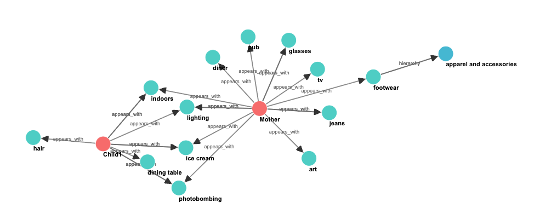

The following diagram illustrates an example person relationship graph (configuration-driven).

Person relationships are configured through JSON data structures passed to the initialize_relationship_data() function. This configuration-driven approach supports a wide range of use cases without code modifications; you can simply define your people and relationships in the configuration object.
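The post does not show the exact schema that initialize_relationship_data() expects, so the following is only a guess at what such a configuration object might look like, together with a small validator. The `people` and `relationships` keys, the `from`/`type`/`to` fields, and the validator itself are hypothetical; check the repository for the real structure.

```python
# Hypothetical configuration shape; the real schema may differ.
RELATIONSHIP_CONFIG = {
    "people": [
        {"id": "sarah", "name": "Sarah"},
        {"id": "priya", "name": "Priya"},
    ],
    "relationships": [
        {"from": "sarah", "type": "reports_to", "to": "priya"},
    ],
}


def validate_relationship_config(config):
    """Return a list of problems; an empty list means the config is consistent."""
    known_ids = {person["id"] for person in config.get("people", [])}
    problems = []
    for index, rel in enumerate(config.get("relationships", [])):
        for endpoint in ("from", "to"):
            if rel.get(endpoint) not in known_ids:
                problems.append(
                    f"relationships[{index}].{endpoint} references "
                    f"unknown person {rel.get(endpoint)!r}"
                )
        if not rel.get("type"):
            problems.append(f"relationships[{index}] has no type")
    return problems
```

Validating the configuration before loading it helps catch dangling references early, before any edges are written to the graph.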

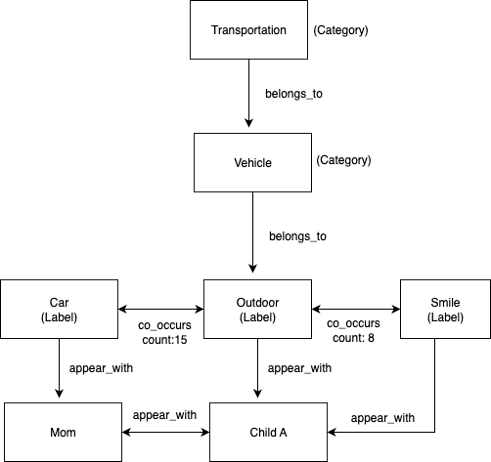

The following diagram illustrates an example label hierarchy graph (automatically generated from Amazon Rekognition).

Label hierarchies and co-occurrence patterns are automatically generated during image processing. Amazon Rekognition provides category classifications that create the belongs_to relationships, and the appears_with and co_occurs_with relationships are built dynamically as images are processed.
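The edge-building step described above can be sketched as a pure function over an Amazon Rekognition `DetectLabels` response. The three relationship names come from the post; the function name, the confidence cutoff of 80.0, and the choice to point appears_with edges from a person to a label are assumptions made for this illustration.

```python
from itertools import combinations


def build_label_edges(detect_labels_response, people=(), min_confidence=80.0):
    """Derive (source, relation, target) edges from one DetectLabels response."""
    labels = [
        label
        for label in detect_labels_response.get("Labels", [])
        if label.get("Confidence", 0.0) >= min_confidence
    ]
    edges = set()
    for label in labels:
        # Rekognition attaches category classifications to each label.
        for category in label.get("Categories", []):
            edges.add((label["Name"], "belongs_to", category["Name"]))
        # Assumed direction: a recognized person appears with the label.
        for person in people:
            edges.add((person, "appears_with", label["Name"]))
    # Every pair of labels in the same image co-occurs; sorting keeps one
    # canonical edge per pair instead of both directions.
    names = sorted({label["Name"] for label in labels})
    for first, second in combinations(names, 2):
        edges.add((first, "co_occurs_with", second))
    return edges
```

Returning plain tuples keeps the sketch independent of any graph client; a real pipeline would translate each tuple into a write against Neptune.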

The following screenshot illustrates a subset of the complete graph, demonstrating multi-layered relationship types.

Database generation methods

The relationship graph uses a flexible configuration-driven approach through the initialize_relationship_data() function. This removes the need for hard-coding and supports a wide range of use cases:

The label relationship database is created automatically during image processing through the store_labels_in_neptune() function:

With these functions, you can manage large photo collections with complex relationship queries, discover photos by semantic context, and find themed collections through label co-occurrence patterns.

Performance and scalability considerations

Consider the following performance and scalability factors:

- Handling bulk uploads – The system processes large photo collections efficiently, from small family albums to enterprise archives with thousands of images. Built-in logic manages API rate limits and supports reliable processing even during peak upload periods.

- Cost optimization – The serverless architecture makes sure you only pay for actual usage, making it cost-effective for both small teams and large enterprises. For reference, processing 1,000 images typically costs approximately $15–25 (including Amazon Rekognition face detection, Amazon Bedrock caption generation, and Lambda function execution), with Neptune cluster costs of $100–150 monthly regardless of volume. Storage costs remain minimal at under $1 per 1,000 images in Amazon S3.

- Scaling performance – The Neptune graph database approach scales efficiently from small family structures to enterprise-scale networks with thousands of people. The system maintains fast response times for relationship queries and supports bulk processing of large photo collections with automatic retry logic and progress tracking.
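The rate-limit handling and automatic retry logic mentioned in this list are not shown in the post. A common pattern for this kind of problem is exponential backoff with jitter, sketched below under that assumption; the function name and default values are illustrative and not taken from the repository.

```python
import random
import time


def call_with_backoff(operation, is_retryable, max_attempts=5,
                      base_delay=0.5, max_delay=8.0, sleep=time.sleep):
    """Call operation(), retrying retryable failures with capped, jittered delays."""
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except Exception as error:
            # Give up on the last attempt or on errors that retrying cannot fix.
            if attempt == max_attempts or not is_retryable(error):
                raise
            # The delay ceiling doubles each attempt: 0.5s, 1s, 2s, ... up to max_delay.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Full jitter: sleep a random amount up to the ceiling so parallel
            # workers do not retry in lockstep.
            sleep(random.uniform(0, ceiling))
```

The `is_retryable` callback lets the caller decide which errors count as throttling. boto3 clients also provide configurable built-in retry modes, which may be enough on their own for many workloads.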

Security and privacy

This solution implements comprehensive security measures to protect sensitive image and facial recognition data. The system encrypts data at rest using AES-256 encryption with AWS Key Management Service (AWS KMS) managed keys and secures data in transit with TLS 1.2 or later. Neptune and Lambda functions operate within virtual private cloud (VPC) subnets, isolated from direct internet access, and API Gateway provides the only public endpoint with CORS policies and rate limiting. Access control follows least-privilege principles with AWS Identity and Access Management (IAM) policies that grant only the minimum required permissions: Lambda functions can only access specific S3 buckets and DynamoDB tables, and Neptune access is restricted to authorized database operations. Image and facial recognition data remains within your AWS account and isn’t shared outside AWS services. You can configure Amazon S3 lifecycle policies for automated data retention management, and AWS CloudTrail provides complete audit logs of data access and API calls for compliance monitoring, supporting GDPR and HIPAA requirements with additional Amazon GuardDuty monitoring for threat detection.

Clean up

To avoid incurring future charges, complete the following steps to delete the resources you created:

- Delete images from the S3 bucket:

aws s3 rm s3://YOUR_BUCKET_NAME --recursive

- Delete the Neptune cluster (this command also automatically deletes Lambda functions):

cdk destroy

- Remove the Amazon Rekognition face collection:

aws rekognition delete-collection --collection-id face-collection

Conclusion

This solution demonstrates how Amazon Rekognition, Amazon Neptune, and Amazon Bedrock can work together to enable intelligent photo search that understands both visual content and context. Built on a fully serverless architecture, it combines computer vision, graph modeling, and natural language understanding to deliver scalable, human-like discovery experiences. By turning photo collections into a knowledge graph of people, objects, and moments, it redefines how users interact with visual data, making search more semantic, relational, and meaningful. Ultimately, it reflects the reliability and trustworthiness of AWS AI and graph technologies in enabling secure, context-aware image understanding.

To learn more, refer to the following resources:

About the authors