In this article, you’ll learn practical prompt-engineering patterns that make large language models useful and reliable for time series analysis and forecasting.

Topics we’ll cover include:

- How to frame temporal context and extract useful signals

- How to combine LLM reasoning with classical statistical models

- How to structure data and prompts for forecasting, anomalies, and domain constraints

Without further delay, let’s begin.

Prompt Engineering for Time Series Analysis

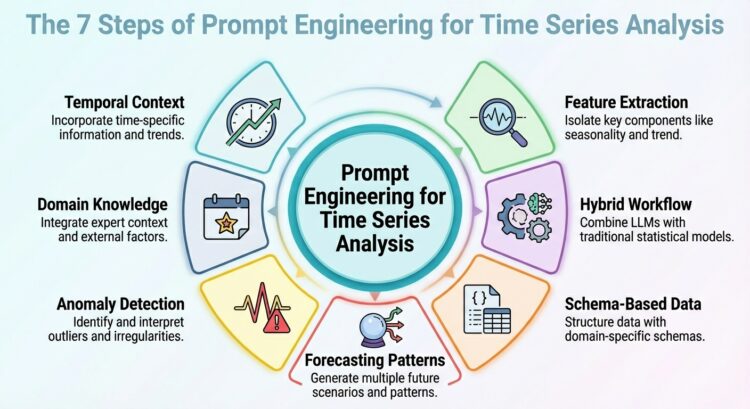

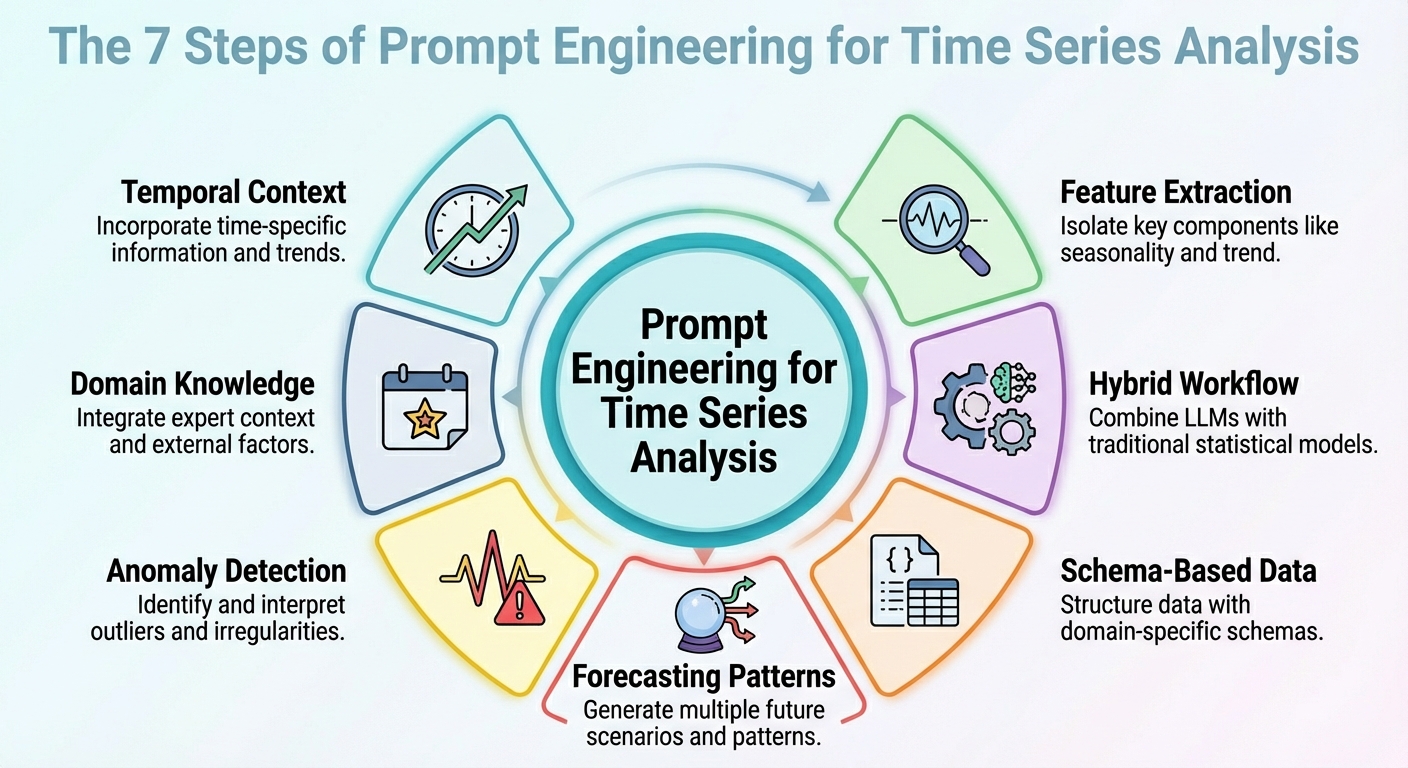

Image by Editor

Introduction

Strange as it may sound, large language models (LLMs) can be used for data analysis tasks, including specific scenarios such as time series analysis. The key is to translate your prompt engineering skills correctly to the analysis scenario at hand.

This article outlines seven prompt engineering strategies that can be used to tackle time series analysis tasks with LLMs.

Unless stated otherwise, the descriptions of these strategies are accompanied by illustrative examples built around a retail sales scenario: a time series dataset of daily sales over time.

1. Contextualizing Temporal Structure

First, an effective prompt should help the model understand the temporal structure of the time series dataset. This can include upward or downward trends, seasonality, and known cycles such as promotions or holidays. This context helps your LLM interpret temporal fluctuations as just that, fluctuations, rather than noise. In short, describing the structure of the dataset clearly in the context accompanying your prompts often goes further than intricate reasoning instructions.

Example prompt:

“Here are the daily sales (in units) for the past year. The data shows weekly seasonality (higher sales on weekends), a steadily rising long-term trend, and monthly spikes at the end of each month due to pay-day promotions. Use that information when forecasting the next 30 days.”
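The structural description in such a prompt does not have to be written by hand. The following minimal sketch, assuming the data is available as a list of `(date, units)` pairs, derives a one-sentence weekly pattern summary and places it in the prompt. The function name `describe_weekly_pattern` and the synthetic four-week dataset are illustrative assumptions, not part of any library.

```python
from datetime import date, timedelta
from statistics import mean

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]

def describe_weekly_pattern(daily_sales):
    """Turn (date, units) pairs into one sentence about the weekly pattern."""
    by_weekday = {}
    for day, units in daily_sales:
        by_weekday.setdefault(WEEKDAYS[day.weekday()], []).append(units)
    averages = {name: mean(values) for name, values in by_weekday.items()}
    peak = max(averages, key=averages.get)
    low = min(averages, key=averages.get)
    return (
        f"Average daily sales peak on {peak} ({averages[peak]:.1f} units) "
        f"and are lowest on {low} ({averages[low]:.1f} units)."
    )

# Synthetic data (an assumption for illustration): four identical weeks,
# with higher sales on Saturday and Sunday.
start = date(2025, 1, 6)  # a Monday
weekly_units = [100, 100, 100, 100, 100, 150, 160]  # Monday..Sunday
sales = [(start + timedelta(days=i), weekly_units[i % 7]) for i in range(28)]

context = describe_weekly_pattern(sales)
prompt = (
    "Here are the daily sales (in units) for the last 28 days. "
    + context
    + " Use that information when forecasting the next 7 days."
)
print(prompt)
```

Computing the description from the data keeps the stated context consistent with the numbers the model actually sees.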

2. Feature and Signal Extraction

Instead of asking your model to forecast directly from raw numbers, why not prompt it to extract some key features first? These could include latent patterns, anomalies, and correlations. Asking the LLM to extract features and signals, and incorporating them into the prompt (e.g., through summary statistics or decomposition), helps reveal the reasons behind future predictions or fluctuations.

Example prompt:

“From the past year of sales data, compute the average daily sales and the standard deviation, identify any days where sales exceeded the mean plus twice the standard deviation (i.e., potential outliers), and note any recurring weekly or monthly patterns. Then interpret what factors might explain high-sales days or dips, and flag any unusual anomalies.”
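The numeric part of this prompt can also be computed up front and handed to the model as context, so that it only has to interpret. Here is a minimal sketch using Python's standard library. The function name `summarize_sales` and the ten-value sample are assumptions for illustration, and the choice of population standard deviation (`pstdev`) over sample standard deviation is a judgment call.

```python
from statistics import mean, pstdev

def summarize_sales(units):
    """Return the mean, standard deviation, and indices of high outliers."""
    avg = mean(units)
    std = pstdev(units)  # population standard deviation (an assumption)
    threshold = avg + 2 * std
    outliers = [i for i, value in enumerate(units) if value > threshold]
    return {
        "mean": avg,
        "std": std,
        "threshold": threshold,
        "outlier_indices": outliers,
    }

# Synthetic sample: nine ordinary days and one spike.
units = [100, 102, 98, 101, 99, 100, 103, 97, 100, 180]
summary = summarize_sales(units)

features = (
    f"Mean daily sales: {summary['mean']:.1f} units. "
    f"Standard deviation: {summary['std']:.1f} units. "
    f"Days above mean + 2 std (0-based index): {summary['outlier_indices']}."
)
print(features)
```

The resulting `features` string can be prepended to the interpretation request, leaving the arithmetic to code and the explanation to the LLM.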

3. Hybrid LLM + Statistical Workflow

Let’s face it: LLMs in isolation often struggle with tasks that require numeric precision and with capturing temporal dependencies in time series. For this reason, combining them with classical statistical models is one way to get better results. How could such a hybrid workflow be defined? The trick is to use LLM reasoning (high-level interpretation, hypothesis formulation, and context comprehension) alongside quantitative models such as ARIMA, ETS, or others.

For instance, LeMoLE (LLM-Enhanced Mixture of Linear Experts) is an example of a hybrid approach that enriches linear models with prompt-derived features.

The result blends contextual reasoning and statistical rigor: the best of both worlds.
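One way to picture such a workflow: a statistical model produces the numbers, and the prompt asks the LLM to critique and contextualize them. The sketch below is not LeMoLE, ARIMA, or ETS. To stay self-contained it swaps in a seasonal-naive baseline (repeat the last observed week), and the function names and the business note are hypothetical. In practice the baseline would come from whichever statistical model you already trust.

```python
def seasonal_naive_forecast(history, horizon, season_length=7):
    """Forecast by repeating the last full season of observations."""
    if len(history) < season_length:
        raise ValueError("history must cover at least one full season")
    last_season = history[-season_length:]
    return [last_season[h % season_length] for h in range(horizon)]

def build_hybrid_prompt(history, baseline, notes):
    """Combine raw history, the statistical baseline, and context in one prompt."""
    lines = [
        "Recent daily sales (units): " + ", ".join(map(str, history)),
        "Statistical baseline forecast (seasonal naive): "
        + ", ".join(map(str, baseline)),
        f"Business context: {notes}",
        "Explain whether the baseline looks plausible and suggest "
        "adjustments, with reasons.",
    ]
    return "\n".join(lines)

# Synthetic two-week history (an assumption for illustration).
history = [100, 100, 100, 100, 100, 150, 160,
           104, 103, 105, 102, 106, 155, 166]
baseline = seasonal_naive_forecast(history, horizon=7)
prompt = build_hybrid_prompt(
    history, baseline, notes="A promotion is planned for next Saturday."
)
print(prompt)
```

The division of labor is the point: the numeric forecast never depends on the LLM's arithmetic, and the LLM contributes only interpretation and suggested adjustments.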

4. Schema-based Data Representation

Raw time series datasets are usually poorly suited as LLM inputs. Structured schemas such as JSON or compact tables can help the LLM interpret the data much more reliably, as demonstrated in several studies.

Example JSON snippet to be passed alongside a prompt:

    {
      "sales": [
        {"date": "2024-12-01", "units": 120},
        {"date": "2024-12-02", "units": 135},
        ...,
        {"date": "2025-11-30", "units": 210}
      ],
      "metadata": {
        "frequency": "daily",
        "seasonality": ["weekly", "monthly_end"],
        "domain": "retail_sales"
      }
    }

Prompt to accompany the JSON data:

“Given the above JSON data and metadata, analyze the time series and forecast the next 30 days of sales.”
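A payload in this shape can be generated rather than typed. The following is a minimal sketch, assuming consecutive daily observations starting from a known date. `build_payload` is a hypothetical helper, and only the first two values from the snippet are used here.

```python
import json
from datetime import date, timedelta

def build_payload(start, units, seasonality, domain):
    """Build the sales-plus-metadata structure from consecutive daily values."""
    records = [
        {"date": (start + timedelta(days=i)).isoformat(), "units": value}
        for i, value in enumerate(units)
    ]
    return {
        "sales": records,
        "metadata": {
            "frequency": "daily",
            "seasonality": seasonality,
            "domain": domain,
        },
    }

payload = build_payload(
    start=date(2024, 12, 1),
    units=[120, 135],  # first two days only, for brevity
    seasonality=["weekly", "monthly_end"],
    domain="retail_sales",
)
prompt = (
    json.dumps(payload, indent=2)
    + "\n\nGiven the above JSON data and metadata, analyze the time series "
    "and forecast the next 30 days of sales."
)
print(prompt)
```

Serializing with `json.dumps` guarantees valid JSON, which avoids the quoting and bracket mistakes that creep into hand-written payloads.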

5. Prompted Forecasting Patterns

Designing and properly structuring forecasting patterns within the prompt, such as short-term vs. long-term horizons or specific “what-if” scenarios, can help guide the model to produce more usable responses. This approach is effective for generating actionable insights from your requested analysis.

Instance:

    Task A (short-term, next 7 days): Forecast expected sales.

    Task B (long-term, next 30 days): Provide a baseline forecast plus two scenarios:
    - Scenario 1 (normal conditions)
    - Scenario 2 (with a planned promotion on days 10–15)

    In addition, provide a 95% confidence interval for both scenarios.
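If you reuse this pattern across products or time windows, a small template function keeps the wording stable while the parameters change. This is only a sketch: `build_forecast_prompt` and its parameter names are hypothetical, and the check that the promotion falls inside the long-term horizon is an added assumption.

```python
def build_forecast_prompt(short_days=7, long_days=30,
                          promo_days=(10, 15), confidence=95):
    """Assemble a two-task forecasting prompt with a what-if scenario."""
    promo_start, promo_end = promo_days
    if not 1 <= promo_start <= promo_end <= long_days:
        raise ValueError(
            "promotion window must fall inside the long-term horizon"
        )
    lines = [
        f"Task A (short-term, next {short_days} days): "
        "Forecast expected sales.",
        f"Task B (long-term, next {long_days} days): "
        "Provide a baseline forecast plus two scenarios:",
        "- Scenario 1 (normal conditions)",
        f"- Scenario 2 (with a planned promotion on days "
        f"{promo_start}-{promo_end})",
        f"In addition, provide a {confidence}% confidence interval "
        "for both scenarios.",
    ]
    return "\n".join(lines)

prompt = build_forecast_prompt()
print(prompt)
```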

6. Anomaly Detection Prompts

This one is more task-specific. It focuses on crafting prompts that help LLMs not only forecast but also, together with statistical methods, detect anomalies, reason about their likely causes, and even suggest what to investigate. The key is, once more, to preprocess first with traditional time series tools and then prompt the model to interpret the findings.

Example prompt:

“Using the sales data JSON, first flag any day where sales deviate by more than 2× the weekly standard deviation from the weekly mean. Then, for each flagged day, explain possible causes (e.g., out-of-stock, promotion, external events) and recommend whether to investigate (e.g., check inventory logs, marketing campaigns, store foot traffic).”
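The flagging step described in this prompt is simple to run in code before the model is involved, in line with the advice to preprocess first. The sketch below groups days by ISO calendar week and applies the 2× rule within each week. The function name, the use of population standard deviation, and the synthetic two-week dataset are all assumptions.

```python
from collections import defaultdict
from datetime import date, timedelta
from statistics import mean, pstdev

def flag_weekly_anomalies(daily_sales, k=2.0):
    """Flag days more than k weekly standard deviations from the weekly mean."""
    weeks = defaultdict(list)
    for day, units in daily_sales:
        iso = day.isocalendar()  # (ISO year, ISO week number, ISO weekday)
        weeks[(iso[0], iso[1])].append((day, units))

    flagged = []
    for records in weeks.values():
        values = [units for _, units in records]
        if len(values) < 2:
            continue  # a single day has no spread to compare against
        avg, std = mean(values), pstdev(values)
        if std == 0:
            continue  # identical values all week: nothing deviates
        flagged.extend(
            (day, units) for day, units in records
            if abs(units - avg) > k * std
        )
    return flagged

# Synthetic data: a week with a sharp Sunday drop, then an ordinary week.
start = date(2025, 1, 6)  # a Monday
units = [100, 100, 100, 100, 100, 100, 30,
         100, 102, 98, 101, 99, 103, 97]
sales = [(start + timedelta(days=i), u) for i, u in enumerate(units)]

flagged = flag_weekly_anomalies(sales)
for day, value in flagged:
    print(f"{day.isoformat()}: {value} units")
```

Note that with only seven observations per week and a population standard deviation, no single day can deviate by more than about 2.45 standard deviations, so the 2× threshold is strict and flags only pronounced outliers. The flagged days can then be listed in the prompt for the model to explain.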

7. Domain-Infused Reasoning

Domain knowledge, such as retail seasonality patterns and holiday effects, uncovers useful insights, and embedding it into prompts helps LLMs produce analyses and predictions that are more meaningful and more interpretable. This boils down to using “dataset context,” both semantic and domain-specific, as the lighthouse that guides model reasoning.

A prompt like this could help the LLM do better at anticipating month-end spikes or sales drops on public holidays:

“This is the daily sales data of a retail chain. Sales tend to spike at the end of each month (customers receive salaries), drop on public holidays, and increase during promotional events. There are also occasional stock shortages, resulting in dips for certain SKUs. Use this domain knowledge when analyzing the series and forecasting.”
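Domain knowledge can also be attached to the data itself, so that each observation carries the tags the prompt talks about. The following is a minimal sketch. `annotate_day`, the three-day month-end window, and the holiday and promotion dates are hypothetical choices for illustration.

```python
import calendar
from datetime import date

def annotate_day(day, holidays, promo_days, month_end_window=3):
    """Return domain tags for a date: month end, public holiday, promotion."""
    last_day = calendar.monthrange(day.year, day.month)[1]
    tags = []
    if last_day - day.day < month_end_window:
        tags.append("month_end")
    if day in holidays:
        tags.append("public_holiday")
    if day in promo_days:
        tags.append("promotion")
    return tags

# Hypothetical calendars and synthetic sales values.
holidays = {date(2025, 12, 25)}
promo_days = {date(2025, 12, 30)}
sales = [
    (date(2025, 12, 24), 130),
    (date(2025, 12, 25), 60),
    (date(2025, 12, 30), 210),
]

lines = []
for day, units in sales:
    tags = annotate_day(day, holidays, promo_days)
    label = ", ".join(tags) if tags else "none"
    lines.append(f"{day.isoformat()}: {units} units (tags: {label})")
annotated = "\n".join(lines)
print(annotated)
```

Passing the annotated lines alongside the domain description gives the model explicit evidence to tie each spike or dip to a known cause.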

Wrapping Up

This article described seven different strategies, largely grounded in and supported by recent studies, for writing more effective prompts for time series analysis and forecasting tasks aided by LLMs.