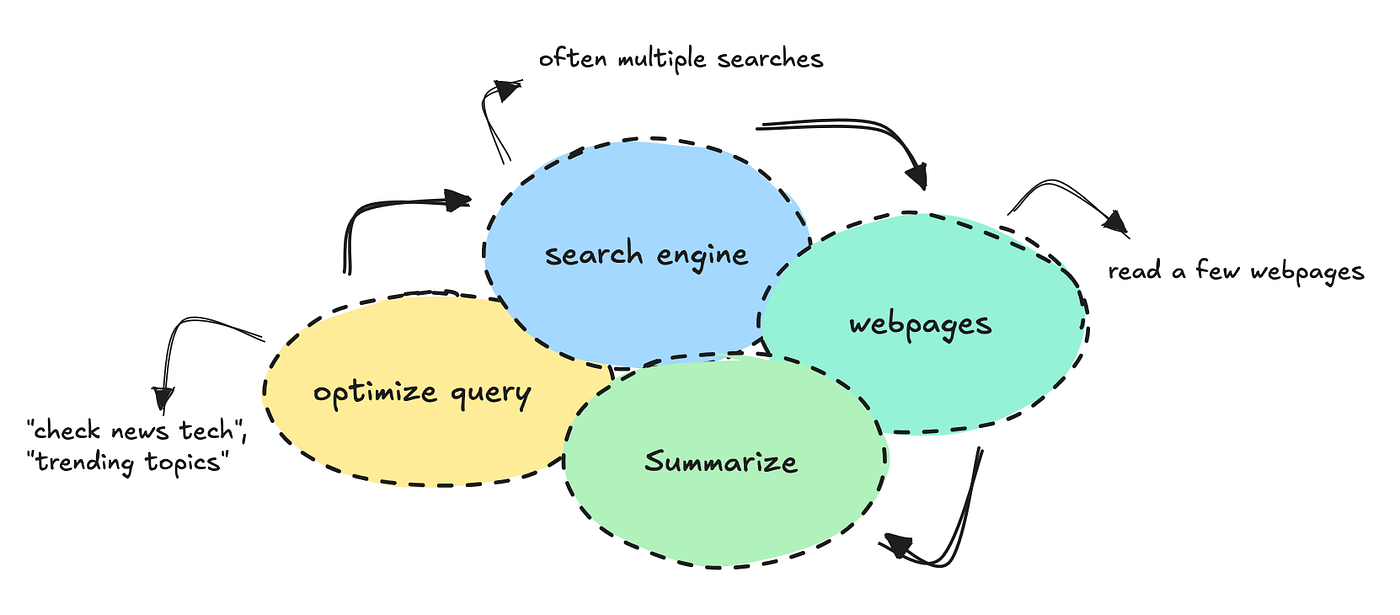

ChatGPT one thing like: “Please scout all of tech for me and summarize tendencies and patterns based mostly on what you assume I’d be fascinated about,” you understand that you simply’d get one thing generic, the place it searches just a few web sites and information sources and palms you these.

It’s because ChatGPT is constructed for normal use circumstances. It applies regular search strategies to fetch data, typically limiting itself to a couple net pages.

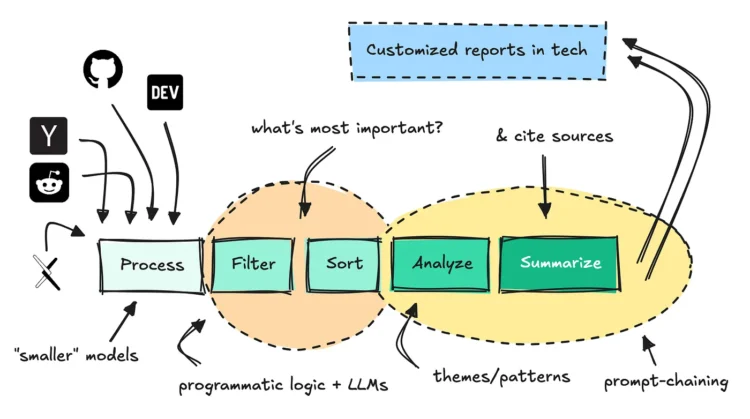

This text will present you the way to construct a distinct segment agent that may scout all of tech, combination tens of millions of texts, filter information based mostly on a persona, and discover patterns and themes you’ll be able to act on.

The purpose of this workflow is to keep away from sitting and scrolling via boards and social media by yourself. The agent ought to do it for you, grabbing no matter is beneficial.

We’ll be capable to pull this off utilizing a singular information supply, a managed workflow, and a few immediate chaining methods.

By caching information, we will maintain the price down to a couple cents per report.

If you wish to attempt the bot with out booting it up your self, you’ll be able to be part of this Discord channel. You’ll discover the repository right here if you wish to construct it by yourself.

This text focuses on the overall structure and the way to construct it, not the smaller coding particulars as you will discover these in Github.

Notes on constructing

In the event you’re new to constructing with brokers, you would possibly really feel like this one isn’t groundbreaking sufficient.

Nonetheless, if you wish to construct one thing that works, you will have to use various software program engineering to your AI purposes. Even when LLMs can now act on their very own, they nonetheless want steerage and guardrails.

For workflows like this, the place there’s a clear path the system ought to take, you need to construct extra structured “workflow-like” methods. When you’ve got a human within the loop, you’ll be able to work with one thing extra dynamic.

The rationale this workflow works so effectively is as a result of I’ve an excellent information supply behind it. With out this information moat, the workflow wouldn’t be capable to do higher than ChatGPT.

Making ready and caching information

Earlier than we will construct an agent, we have to put together a knowledge supply it may possibly faucet into.

One thing I believe lots of people get unsuitable once they work with LLM methods is the assumption that AI can course of and combination information completely by itself.

In some unspecified time in the future, we would be capable to give them sufficient instruments to construct on their very own, however we’re not there but when it comes to reliability.

So once we construct methods like this, we want information pipelines to be simply as clear as for some other system.

The system I’ve constructed right here makes use of a knowledge supply I already had accessible, which suggests I perceive the way to educate the LLM to faucet into it.

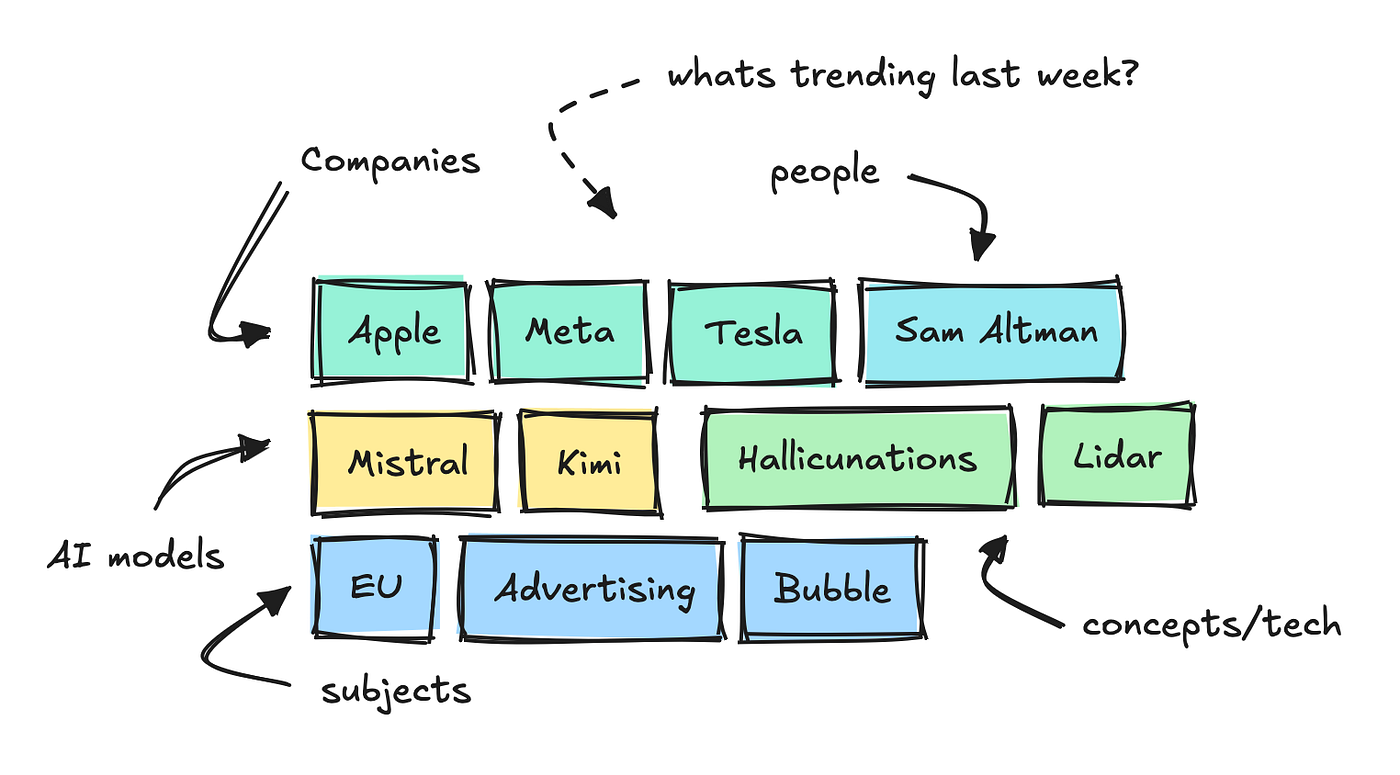

It ingests 1000’s of texts from tech boards and web sites per day and makes use of small NLP fashions to interrupt down the primary key phrases, categorize them, and analyze sentiment.

This lets us see which key phrases are trending inside completely different classes over a selected time interval.

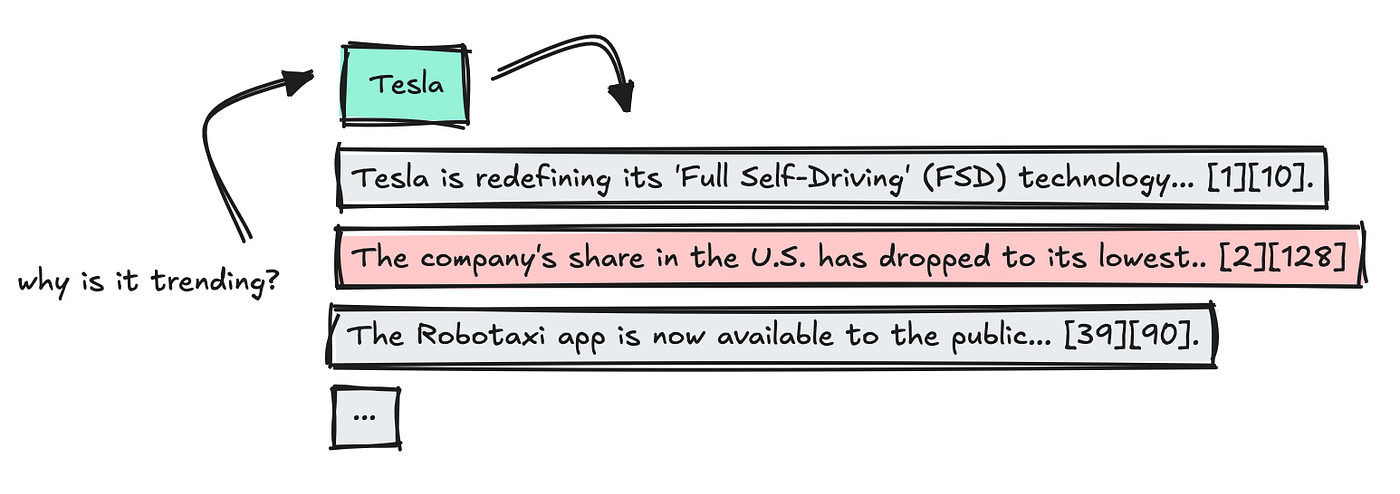

To construct this agent, I added one other endpoint that collects “info” for every of those key phrases.

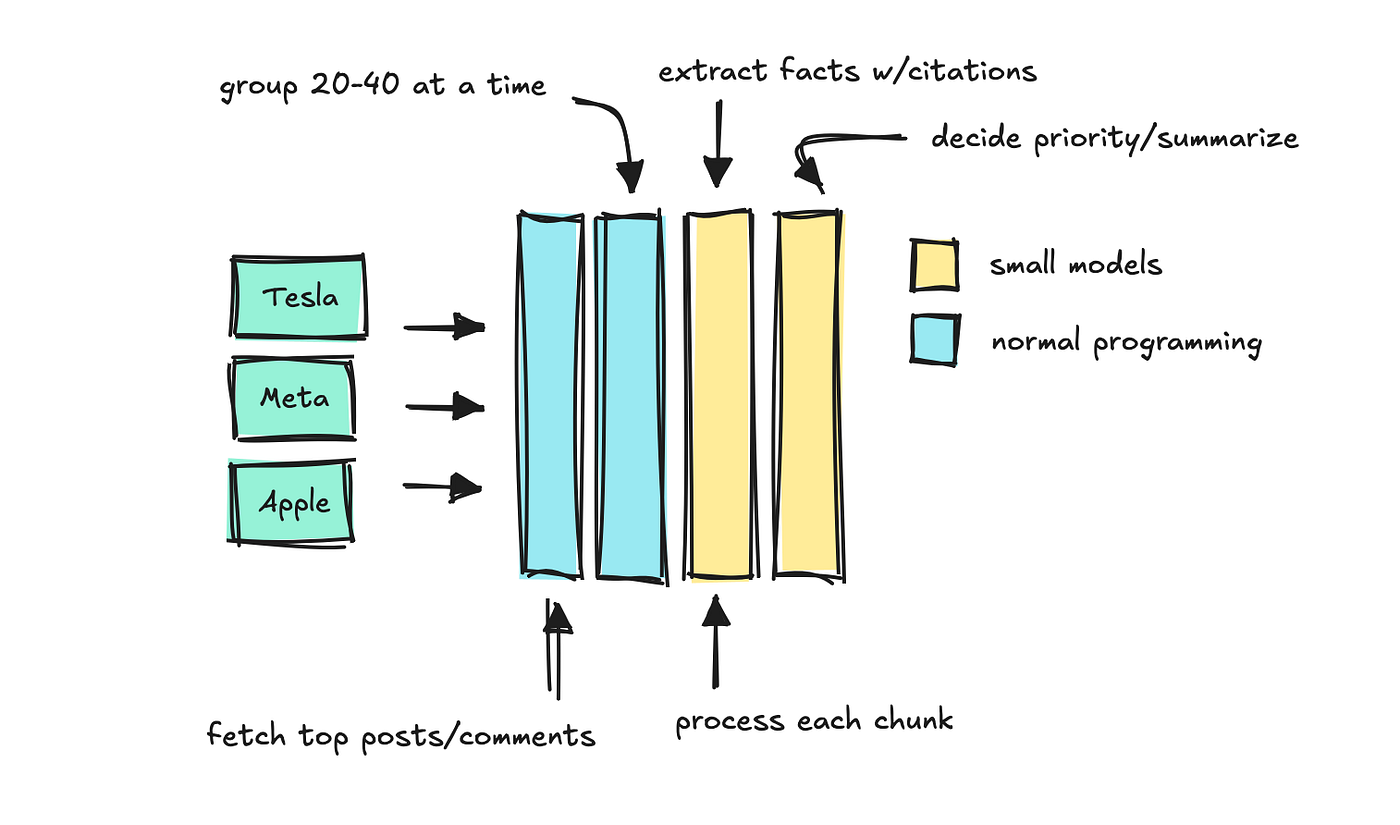

This endpoint receives a key phrase and a time interval, and the system types feedback and posts by engagement. Then it course of the texts in chunks with smaller fashions that may determine which “info” to maintain.

We apply a final LLM to summarize which info are most essential, maintaining the supply citations intact.

This can be a type of immediate chaining course of, and I constructed it to imitate LlamaIndex’s quotation engine.

The primary time the endpoint is known as for a key phrase, it may possibly take as much as half a minute to finish. However because the system caches the outcome, any repeat request takes just some milliseconds.

So long as the fashions are sufficiently small, the price of operating this on just a few hundred key phrases per day is minimal. Later, we will have the system run a number of key phrases in parallel.

You possibly can most likely think about now that we will construct a system to fetch these key phrases and info to construct completely different stories with LLMs.

When to work with small vs bigger fashions

Earlier than transferring on, let’s simply point out that selecting the best mannequin dimension issues.

I believe that is on everybody’s thoughts proper now.

There are fairly superior fashions you should use for any workflow, however as we begin to apply increasingly LLMs to those purposes, the variety of calls per run provides up rapidly and this may get costly.

So, when you’ll be able to, use smaller fashions.

You noticed that I used smaller fashions to quote and group sources in chunks. Different duties which can be nice for small fashions embrace routing and parsing pure language into structured information.

In the event you discover that the mannequin is faltering, you’ll be able to break the duty down into smaller issues and use immediate chaining, first do one factor, then use that outcome to do the following, and so forth.

You continue to wish to use bigger LLMs when it is advisable discover patterns in very massive texts, or once you’re speaking with people.

On this workflow, the price is minimal as a result of the information is cached, we use smaller fashions for many duties, and the one distinctive massive LLM calls are the ultimate ones.

How this agent works

Let’s undergo how the agent works below the hood. I constructed the agent to run inside Discord, however that’s not the main target right here. We’ll give attention to the agent structure.

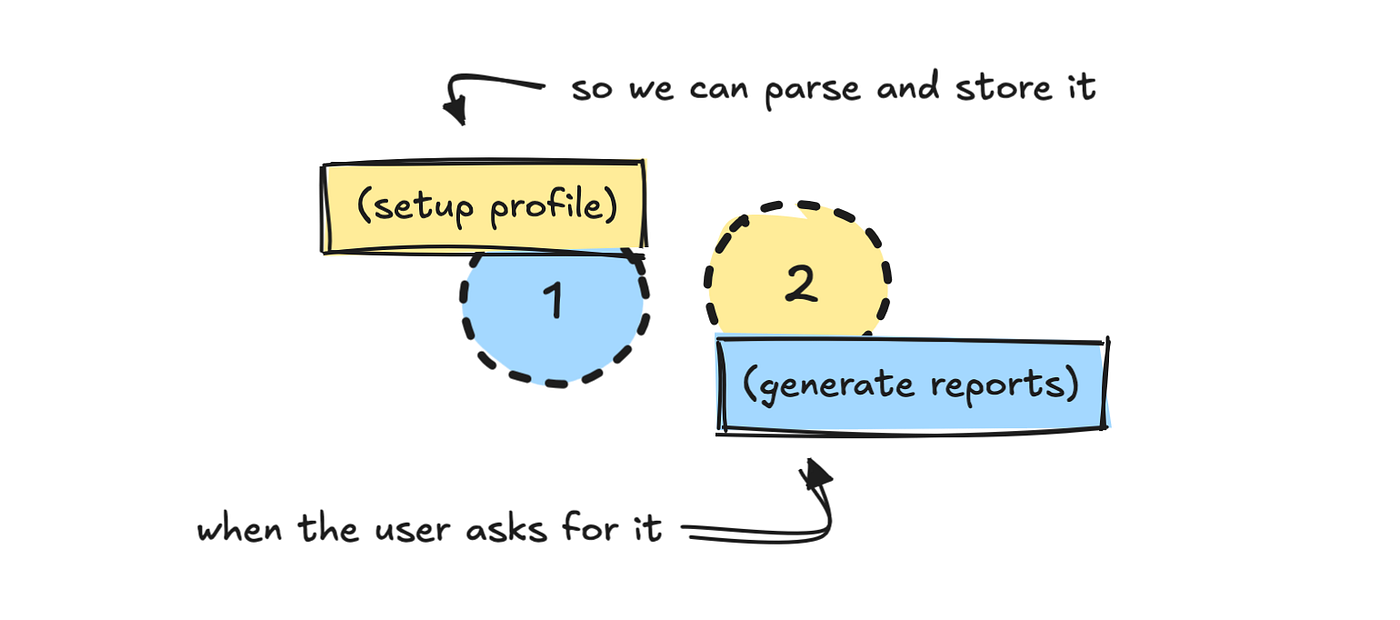

I break up the method into two components: one setup, and one information. The primary course of asks the consumer to arrange their profile.

Since I already know the way to work with the information supply, I’ve constructed a reasonably intensive system immediate that helps the LLM translate these inputs into one thing we will fetch information with later.

PROMPT_PROFILE_NOTES = """

You might be tasked with defining a consumer persona based mostly on the consumer's profile abstract.

Your job is to:

1. Choose a brief persona description for the consumer.

2. Choose essentially the most related classes (main and minor).

3. Select key phrases the consumer ought to observe, strictly following the foundations under (max 6).

4. Resolve on time interval (based mostly solely on what the consumer asks for).

5. Resolve whether or not the consumer prefers concise or detailed summaries.

Step 1. Character

- Write a brief description of how we must always take into consideration the consumer.

- Examples:

- CMO for non-technical product → "non-technical, skip jargon, give attention to product key phrases."

- CEO → "solely embrace extremely related key phrases, no technical overload, straight to the purpose."

- Developer → "technical, fascinated about detailed developer dialog and technical phrases."

[...]

"""

I’ve additionally outlined a schema for the outputs I would like:

class ProfileNotesResponse(BaseModel):

persona: str

major_categories: Listing[str]

minor_categories: Listing[str]

key phrases: Listing[str]

time_period: str

concise_summaries: boolWith out having area information of the API and the way it works, it’s unlikely that an LLM would work out how to do that by itself.

You can attempt constructing a extra intensive system the place the LLM first tries to be taught the API or the methods it’s supposed to make use of, however that may make the workflow extra unpredictable and dear.

For duties like this, I attempt to all the time use structured outputs in JSON format. That approach we will validate the outcome, and if validation fails, we re-run it.

That is the simplest method to work with LLMs in a system, particularly when there’s no human within the loop to examine what the mannequin returns.

As soon as the LLM has translated the consumer profile into the properties we outlined within the schema, we retailer the profile someplace. I used MongoDB, however that’s non-compulsory.

Storing the persona isn’t strictly required, however you do have to translate what the consumer says right into a type that permits you to generate information.

Producing the stories

Let’s take a look at what occurs within the second step when the consumer triggers the report.

When the consumer hits the /information command, with or with out a time interval set, we first fetch the consumer profile information we’ve saved.

This offers the system the context it must fetch related information, utilizing each classes and key phrases tied to the profile. The default time interval is weekly.

From this, we get a listing of high and trending key phrases for the chosen time interval that could be attention-grabbing to the consumer.

With out this information supply, constructing one thing like this could have been tough. The info must be ready prematurely for the LLM to work with it correctly.

After fetching key phrases, it might make sense so as to add an LLM step that filters out key phrases irrelevant to the consumer. I didn’t try this right here.

The extra pointless data an LLM is handed, the more durable it turns into for it to give attention to what actually issues. Your job is to make it possible for no matter you feed it’s related to the consumer’s precise query.

Subsequent, we use the endpoint ready earlier, which incorporates cached “info” for every key phrase. This offers us already vetted and sorted data for each.

We run key phrase calls in parallel to hurry issues up, however the first particular person to request a brand new key phrase nonetheless has to attend a bit longer.

As soon as the outcomes are in, we mix the information, take away duplicates, and parse the citations so every reality hyperlinks again to a selected supply by way of a key phrase quantity.

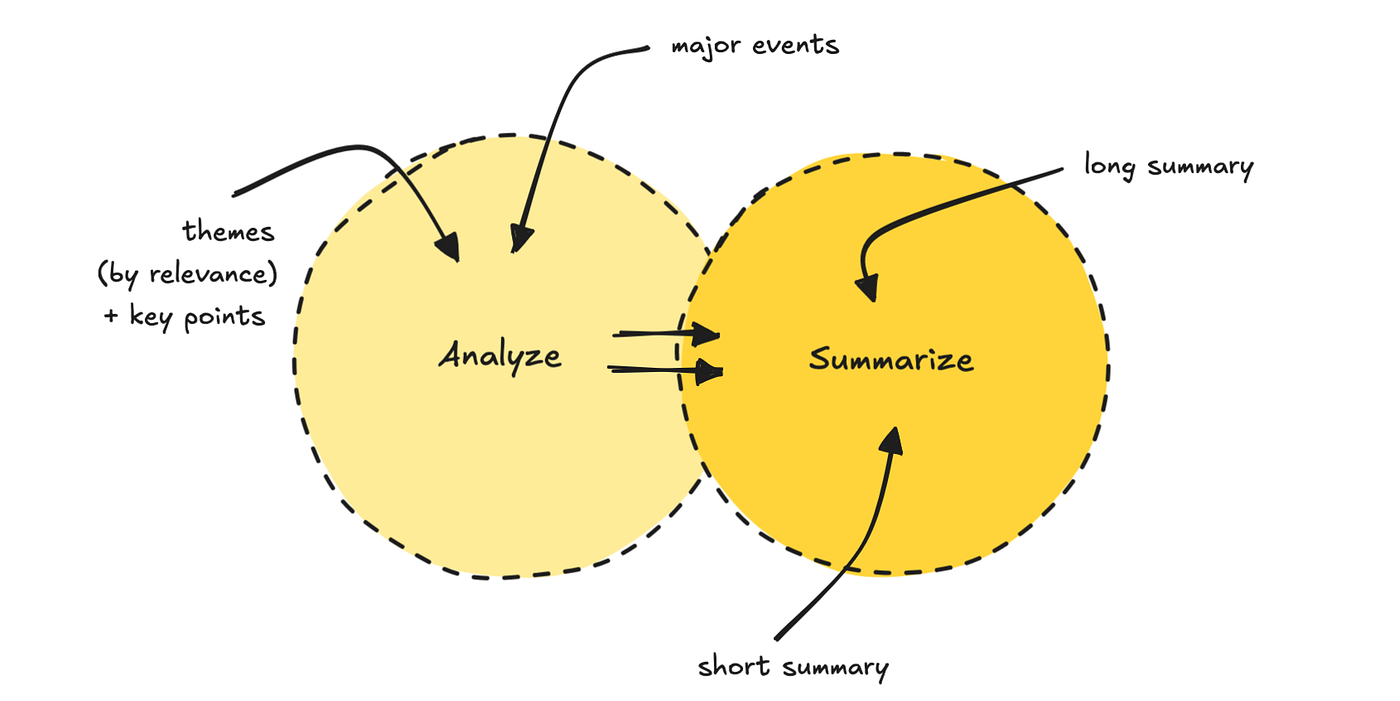

We then run the information via a prompt-chaining course of. The primary LLM finds 5 to 7 themes and ranks them by relevance, based mostly on the consumer profile. It additionally pulls out the important thing factors.

The second LLM move makes use of each the themes and authentic information to generate two completely different abstract lengths, together with a title.

We are able to do that to verify to scale back cognitive load on the mannequin.

This final step to construct the report takes essentially the most time, since I selected to make use of a reasoning mannequin like GPT-5.

You can swap it for one thing quicker, however I discover superior fashions are higher at this final stuff.

The total course of takes a couple of minutes, relying on how a lot has already been cached that day.

Take a look at the completed outcome under.

If you wish to take a look at the code and construct this bot your self, you will discover it right here. In the event you simply wish to generate a report, you’ll be able to be part of this channel.

I’ve some plans to enhance it, however I’m glad to listen to suggestions should you discover it helpful.

And if you need a problem, you’ll be able to rebuild it into one thing else, like a content material generator.

Notes on constructing brokers

Each agent you construct shall be completely different, so that is certainly not a blueprint for constructing with LLMs. However you’ll be able to see the extent of software program engineering this calls for.

LLMs, a minimum of for now, don’t take away the necessity for good software program and information engineers.

For this workflow, I’m largely utilizing LLMs to translate pure language into JSON after which transfer that via the system programmatically. It’s the simplest method to management the agent course of, but additionally not what individuals normally think about once they consider AI purposes.

There are conditions the place utilizing a extra free-moving agent is right, particularly when there’s a human within the loop.

However, hopefully you realized one thing, or obtained inspiration to construct one thing by yourself.

If you wish to observe my writing, observe me right here, my web site, Substack, or LinkedIn.

❤