https://github.com/syrax90/dynamic-solov2-tensorflow2: source code of the project described in this article.

Disclaimer

⚠️ First of all, note that this project is not production-ready code.

and Why I Decided to Implement It from Scratch

This project is aimed at people who don't have high-performance hardware (a GPU in particular) but want to study computer vision, or who are at least on the way to finding themselves in this field. I tried to make the code as clear as possible, so I used Google's docstring style for all methods and classes, added comments inside the code to make the logic and calculations clearer, and followed the Single Responsibility Principle and other OOP principles to make the code more human-readable.

As the title of the article suggests, I decided to implement Dynamic SOLO from scratch to deeply understand all the intricacies of implementing such models, including the entire production cycle, to better understand the problems that can arise in computer vision tasks, and to gain valuable experience in developing computer vision models with TensorFlow. Looking ahead, I can say that this was the right choice: it brought me a lot of new skills and knowledge.

I would recommend implementing models from scratch to everyone who wants to understand more deeply how they work. Here's why:

- When you run into something you don't understand, you start to dig deeper into that specific problem. By exploring it, you find out why a particular approach was invented, and thus expand your knowledge in this area.

- Once you understand the theory behind an approach or principle, you start to explore how to implement it with existing technical tools. In this way, you improve your technical skills for solving specific problems.

- When implementing something from scratch, you better appreciate the effort, time, and resources such tasks can take. Comparing them with similar tasks, you estimate costs more accurately and get a better idea of the value of similar work, including preparation, research, technical implementation, and even documentation.

TensorFlow was chosen as the framework simply because I use it for most of my machine learning tasks (nothing special here).

The project is an implementation of the Dynamic SOLO (SOLOv2) model in the TensorFlow 2 framework.

SOLO: A Simple Framework for Instance Segmentation,

Xinlong Wang, Rufeng Zhang, Chunhua Shen, Tao Kong, Lei Li

arXiv preprint (arXiv:2106.15947)

SOLO (Segmenting Objects by Locations) is a model designed for computer vision tasks, namely instance segmentation. It is an entirely anchor-free framework that predicts masks without any bounding boxes. The paper presents several variants of the model: Vanilla SOLO, Decoupled SOLO, Dynamic SOLO, and Decoupled Dynamic SOLO. I actually implemented Vanilla SOLO first because it is the simplest of them all. However, I'm not going to publish that code, because from an implementation point of view there is no big difference between Vanilla and Dynamic SOLO.

Model

Following the principles described in the SOLO paper, the model can be made very flexible, from the number of FPN layers to the number of parameters in each layer. I decided to start with the simplest implementation. The basic idea of the model is to divide the entire image into grid cells, where one grid cell can represent only one instance: its class and its segmentation mask.

Backbone

I chose ResNet50 as the backbone because it is a relatively lightweight network that is well suited for getting started. I didn't use pretrained parameters for ResNet50 because I was experimenting with more than just the original COCO dataset. However, you can use pretrained parameters if you intend to train on the original COCO dataset, as this saves time, speeds up training, and improves performance:

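```python
from tensorflow.keras.applications import ResNet50

input_shape = (512, 512, 3)  # example value; use your model's input size
backbone = ResNet50(weights='imagenet', include_top=False, input_shape=input_shape)
backbone.trainable = False
```

Neck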

FPN (Feature Pyramid Network) is used as the neck for extracting multi-scale features. Within the FPN, we use all outputs C2, C3, C4, C5 from the corresponding residual blocks of ResNet50, as described in the FPN paper (Feature Pyramid Networks for Object Detection by Tsung-Yi Lin, Piotr Dollár, Ross Girshick, Kaiming He, Bharath Hariharan, Serge Belongie). Each FPN level represents a specific scale and has its own grid, as shown above.
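To make the C2 to C5 wiring concrete, here is a minimal sketch of a ResNet50 + FPN neck in Keras; it is not the project's code. It assumes the stage-output layer names of `tf.keras.applications.ResNet50` (`conv2_block3_out`, `conv3_block4_out`, `conv4_block6_out`, `conv5_block3_out`), 256 FPN channels, nearest-neighbour upsampling, and `weights=None` so nothing is downloaded. The function name `build_backbone_fpn` is hypothetical.

```python
import tensorflow as tf
from tensorflow.keras import layers

# Stage outputs C2..C5 of tf.keras.applications.ResNet50 (strides 4, 8, 16, 32)
RESNET50_STAGE_OUTPUTS = ["conv2_block3_out", "conv3_block4_out",
                          "conv4_block6_out", "conv5_block3_out"]


def build_backbone_fpn(input_shape=(256, 256, 3), fpn_channels=256, weights=None):
    """Hypothetical ResNet50 + top-down FPN returning [P2, P3, P4, P5]."""
    backbone = tf.keras.applications.ResNet50(
        weights=weights, include_top=False, input_shape=input_shape)
    c_maps = [backbone.get_layer(name).output for name in RESNET50_STAGE_OUTPUTS]

    # Lateral 1x1 convs bring every C_i to the same number of channels
    laterals = [layers.Conv2D(fpn_channels, 1, name=f"lateral_c{i}")(c)
                for i, c in zip(range(2, 6), c_maps)]

    # Top-down pathway: upsample the coarser merged map and add the finer lateral
    merged = [laterals[-1]]
    for lateral in reversed(laterals[:-1]):
        upsampled = layers.UpSampling2D(size=2, interpolation="nearest")(merged[0])
        merged.insert(0, layers.Add()([lateral, upsampled]))

    # 3x3 convs smooth the aliasing introduced by upsampling
    outputs = [layers.Conv2D(fpn_channels, 3, padding="same", name=f"p{i}")(m)
               for i, m in zip(range(2, 6), merged)]
    return tf.keras.Model(backbone.input, outputs, name="resnet50_fpn")
```

Pass `weights='imagenet'` to start from pretrained parameters; the input height and width should be divisible by 32 so the upsampled maps line up with the laterals.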

Note: You shouldn't use all FPN levels if you work with a small custom dataset where all objects are roughly the same scale. Otherwise, you train extra parameters that are never used and consequently waste GPU resources. In that case, you would have to adjust the dataset so that it returns targets for just 1 scale, not all 4.

Head

The outputs of the FPN layers are used as inputs to the layers where the instance class and its mask are determined. The head contains two parallel branches for this purpose: the Classification branch and the Mask kernel branch.

Note: Based on the Vanilla head architecture, I excluded the Mask feature from the head. The Mask feature is described separately below.

- Classification branch (designated as "Class" in the figure above) is responsible for predicting the class of each instance (grid cell) in an image. It consists of a sequence of Conv2D -> GroupNorm -> ReLU sets arranged in a row. I used a sequence of 4 such sets.

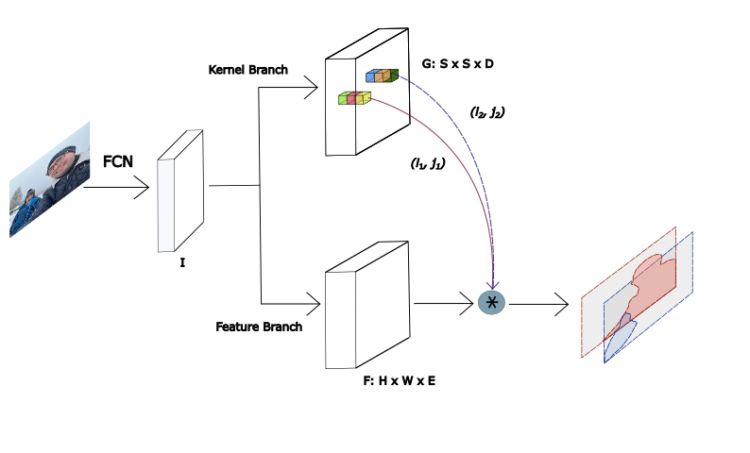

- Mask branch (designated as "Mask" in the figure above). Here is an important nuance: unlike in the Vanilla SOLO model, it does not generate masks directly. Instead, it predicts a mask kernel (called "Mask kernel" in Section 3.2.3 Dynamic SOLO of the paper), which is later applied through dynamic convolution to the Mask feature described below. This design differentiates Dynamic SOLO from Vanilla SOLO by reducing the number of parameters and yielding a more efficient, lightweight architecture. The Mask branch predicts a mask kernel for each instance (grid cell) using the same structure as the Classification branch: a sequence of Conv2D -> GroupNorm -> ReLU sets arranged in a row. I also used 4 such sets in the model.

Note: For small custom datasets, you can use even a single such set for both the mask and classification branches, to avoid training unnecessary parameters.
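A minimal sketch of the two branches for a single FPN level, assuming 4 sets per branch, 32 GroupNorm groups, a bilinear resize of the FPN map to an S × S grid, and sigmoid category outputs. `build_solo_head` and `conv_gn_relu_stack` are hypothetical names. The paper also feeds normalized coordinates (CoordConv) into the kernel branch, which this sketch leaves out, and `tf.keras.layers.GroupNormalization` requires TensorFlow 2.11 or newer.

```python
import tensorflow as tf
from tensorflow.keras import layers


def conv_gn_relu_stack(x, num_sets, channels, groups=32, prefix="branch"):
    """Applies num_sets x (Conv2D -> GroupNorm -> ReLU)."""
    for i in range(num_sets):
        x = layers.Conv2D(channels, 3, padding="same", use_bias=False,
                          name=f"{prefix}_conv{i}")(x)
        x = layers.GroupNormalization(groups=groups, name=f"{prefix}_gn{i}")(x)
        x = layers.ReLU(name=f"{prefix}_relu{i}")(x)
    return x


def build_solo_head(feature_channels=256, grid_size=12, num_classes=80,
                    kernel_dim=256, num_sets=4):
    """Hypothetical category + mask-kernel head for one FPN level."""
    features = tf.keras.Input(shape=(None, None, feature_channels))
    # Resample the FPN map so that every spatial location is one grid cell
    grid = layers.Resizing(grid_size, grid_size, interpolation="bilinear")(features)

    # Classification branch: per-cell class probabilities
    cate = conv_gn_relu_stack(grid, num_sets, feature_channels, prefix="cate")
    cate = layers.Conv2D(num_classes, 3, padding="same", activation="sigmoid",
                         name="cate_out")(cate)

    # Mask kernel branch: per-cell kernel weights for the dynamic convolution
    kernel = conv_gn_relu_stack(grid, num_sets, feature_channels, prefix="kernel")
    kernel = layers.Conv2D(kernel_dim, 3, padding="same", name="kernel_out")(kernel)
    return tf.keras.Model(features, [cate, kernel], name="solo_head")
```

Setting `num_sets=1` corresponds to the lighter configuration suggested for small datasets.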

Mask Feature

The Mask feature branch is combined with the Mask kernel branch to produce the final predicted masks. This layer fuses multi-level FPN features into a unified mask feature map. The authors of the paper evaluated two approaches to implementing the Mask feature branch: a separate mask feature for each FPN level, or one unified mask feature for all FPN levels. Like the authors, I chose the latter. The Mask feature branch and the Mask kernel branch are combined via a dynamic convolution operation.
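When the predicted kernels are 1 × 1, the dynamic convolution reduces to a per-pixel dot product between each instance's kernel and the unified mask feature. The sketch below assumes 1 × 1 kernels and unbatched tensors; `dynamic_mask_prediction` is a hypothetical helper that uses `tf.einsum` instead of building a separate convolution for every image.

```python
import tensorflow as tf


def dynamic_mask_prediction(mask_feature, kernels):
    """Applies per-instance 1x1 kernels to a unified mask feature map.

    Args:
        mask_feature: (H, W, E) unified mask feature F.
        kernels: (N, E) one predicted 1x1 kernel per selected grid cell.

    Returns:
        (N, H, W) mask probabilities.
    """
    # A 1x1 convolution with N output channels is a per-pixel dot product
    logits = tf.einsum("hwe,ne->nhw", mask_feature, kernels)
    return tf.sigmoid(logits)
```

The einsum is equivalent to `tf.nn.conv2d` with a filter of shape (1, 1, E, N) followed by moving the channel axis to the front.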

Dataset

I chose to work with the COCO dataset format, training my model on both the original COCO dataset and a small custom dataset structured in the same format. I chose the COCO format because it has already been widely researched, which makes writing code to parse it much easier. Moreover, LabelMe, the tool I chose to build my custom dataset, can convert a dataset directly to COCO format. Starting with a small custom dataset also reduces training time and simplifies development. Another reason to create a dataset yourself is the opportunity to better understand the dataset creation process, take part in it directly, and gain new skills with tools like LabelMe. A small annotation file can also be explored faster and more easily than a large one if you want to dive deeper into the COCO format.

Here are some of the dataset-related sub-tasks I encountered while implementing the project (all of them are covered in the project):

- Data augmentation. Data augmentation of an image dataset is the process of expanding the dataset by applying various image transformations to generate new samples that differ from the original ones. Mastering augmentation techniques is essential, especially for small datasets. I applied techniques such as horizontal flip, brightness adjustment, random scaling, and random cropping, to give an idea of how to do this and to show how important it is that the mask of the modified image matches its new (augmented) image.

- Converting to targets. The SOLO model expects a specific data format for the target. As input, it takes a normalized image, nothing special. For the target, however, the model expects more complex data:

- We have to build a grid for each scale, dividing it into the number of grid cells defined for that scale. This means that if we have 4 FPN levels (P2, P3, P4, P5) for different scales, we will have 4 grids, each with its own number of cells.

- For each instance, we have to determine, based on its location, the single cell it belongs to among all the grids.

- For each such cell, the category and mask of the corresponding instance are assigned. An additional problem is converting the COCO-format mask into a binary mask consisting of ones for the mask pixels and zeros for all other pixels.

- Combine all of the above into a list of tensors as the target. I understand that TensorFlow prefers a fixed set of tensors over structures like a list, but I chose a list for the added flexibility you might need if you decide to change the number of scales.

- Dataset in memory or generated. There are two main options for dataset allocation: storing samples in memory or generating data on the fly. Although keeping data in memory has many advantages, and for many of you it is no problem to load the entire COCO training directory into memory (only 19.3 GB), I intentionally chose to generate the dataset dynamically using tf.data.Dataset.from_generator. Here's why: I think it is a valuable skill to learn what problems you may encounter when working with big data and how to solve them. In real-world problems, datasets may not only contain more samples than COCO, but their resolution may also be much higher. Dynamically generated datasets are generally a bit more complex to implement, but they are more flexible. Of course, you can replace it with tf.data.Dataset.from_tensor_slices if you wish.
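A simplified sketch of the target conversion for one FPN level, following the single-cell assignment described above: each instance goes to the grid cell that contains its mask's centre of mass, which sets a one-hot category at (i, j) and the instance mask at channel k = i·S + j. `build_level_targets` is a hypothetical helper. It assumes the masks are already binary (0/1) NumPy arrays, keeps them at full image resolution, lets a later instance overwrite an earlier one in the same cell, and skips choosing the FPN level by object scale.

```python
import numpy as np


def build_level_targets(masks, labels, grid_size, num_classes):
    """Builds category and mask targets for one S x S grid.

    Args:
        masks: (N, H, W) binary instance masks.
        labels: (N,) integer class ids in [0, num_classes).

    Returns:
        cate_target: (S, S, num_classes) one-hot, all zeros means background.
        mask_target: (S*S, H, W) instance mask stored at channel k = i*S + j.
    """
    _, h, w = masks.shape
    s = grid_size
    cate_target = np.zeros((s, s, num_classes), dtype=np.float32)
    mask_target = np.zeros((s * s, h, w), dtype=np.float32)
    for mask, label in zip(masks, labels):
        ys, xs = np.nonzero(mask)
        if ys.size == 0:
            continue  # empty mask: nothing to assign
        cy, cx = ys.mean(), xs.mean()  # centre of mass in pixels
        i = min(int(cy / h * s), s - 1)  # grid row, top to bottom
        j = min(int(cx / w * s), s - 1)  # grid column, left to right
        cate_target[i, j, :] = 0.0
        cate_target[i, j, label] = 1.0
        mask_target[i * s + j] = mask.astype(np.float32)
    return cate_target, mask_target
```

Running this once per FPN level with that level's grid size and collecting the results gives the list-of-tensors target described above.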
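A sketch of the on-the-fly approach with `tf.data.Dataset.from_generator`, including a horizontal flip applied to an image and its masks together so they stay aligned. `sample_source` is a hypothetical stand-in for a COCO reader that yields random data, and the fixed image size and instance count are assumptions made for the example.

```python
import numpy as np
import tensorflow as tf

IMG_SIZE = 64      # assumed fixed input size for the example
MAX_INSTANCES = 4  # assumed number of instances per sample


def horizontal_flip(image, masks):
    """Flips an (H, W, C) image and its (N, H, W) masks along the width axis together."""
    return np.flip(image, axis=1).copy(), np.flip(masks, axis=2).copy()


def sample_source(num_samples, seed=0):
    """Hypothetical stand-in for a COCO reader: yields random images and binary masks."""
    rng = np.random.default_rng(seed)
    for _ in range(num_samples):
        image = rng.random((IMG_SIZE, IMG_SIZE, 3), dtype=np.float32)
        masks = (rng.random((MAX_INSTANCES, IMG_SIZE, IMG_SIZE)) > 0.5).astype(np.float32)
        yield image, masks


def augmented_samples(num_samples, flip_prob=0.5, seed=0):
    """Produces one sample at a time, so the full dataset never sits in memory."""
    rng = np.random.default_rng(seed + 1)
    for image, masks in sample_source(num_samples, seed):
        if rng.random() < flip_prob:
            image, masks = horizontal_flip(image, masks)
        yield image, masks


dataset = tf.data.Dataset.from_generator(
    lambda: augmented_samples(num_samples=8),
    output_signature=(
        tf.TensorSpec(shape=(IMG_SIZE, IMG_SIZE, 3), dtype=tf.float32),
        tf.TensorSpec(shape=(None, IMG_SIZE, IMG_SIZE), dtype=tf.float32),
    ),
).batch(2).prefetch(tf.data.AUTOTUNE)
```

Switching to `from_tensor_slices` would only change where the samples come from; the augmentation has to keep images and masks in sync either way.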

Training Process

Loss Function

SOLO's loss function is not available out of the box in TensorFlow, so I implemented it myself:

$$L = L_{cate} + \lambda L_{mask}$$

Where:

- $L_{cate}$ is the conventional Focal Loss for semantic category classification.

- $L_{mask}$ is the loss for mask prediction.

- $\lambda$ is a weighting coefficient, set to 3 in the paper.

$$
L_{mask} = \frac{1}{N_{pos}} \sum_k \mathbb{1}_{\{p^*_{i,j} > 0\}} \, d_{mask}(m_k, m^*_k)
$$

Where:

- $N_{pos}$ is the number of positive samples.

- $d_{mask}$ is implemented as the Dice loss.

- $i = \lfloor k/S \rfloor$, $j = k \bmod S$: indices of the grid cells, counted left to right and top to bottom.

- $\mathbb{1}$ is the indicator function, equal to 1 if $p^*_{i,j} > 0$ and 0 otherwise.

$$L_{Dice} = 1 - D(p, q)$$

where $D$ is the Dice coefficient, defined as

$$
D(p, q) = \frac{2 \sum_{x,y} \left(p_{x,y} \cdot q_{x,y}\right)}{\sum_{x,y} p_{x,y}^2 + \sum_{x,y} q_{x,y}^2}
$$

where $p_{x,y}$ and $q_{x,y}$ are the pixel values at $(x, y)$ of the predicted mask $p$ and the ground-truth mask $q$. All details of the loss function are described in Section 3.3.2 Loss Function of the original SOLO paper.
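A sketch of these formulas in TensorFlow for a single image and a single grid. Assumptions not taken from the article: the focal-loss parameters α = 0.25 and γ = 2 (the usual RetinaNet defaults), normalizing the category loss by the number of positive cells, and the helper names `focal_loss`, `dice_loss`, `mask_loss`, and `solo_loss`.

```python
import tensorflow as tf


def dice_loss(pred, target, eps=1e-6):
    """L_Dice = 1 - D(p, q) for each mask. pred, target: (N, H, W), pred in [0, 1]."""
    inter = tf.reduce_sum(pred * target, axis=[1, 2])
    denom = tf.reduce_sum(pred * pred, axis=[1, 2]) + tf.reduce_sum(target * target, axis=[1, 2])
    return 1.0 - 2.0 * inter / (denom + eps)


def mask_loss(pred_masks, gt_masks, positive):
    """L_mask: Dice loss averaged over cells with p*_{i,j} > 0.

    pred_masks, gt_masks: (S*S, H, W); positive: (S*S,) boolean indicator.
    """
    pos_pred = tf.boolean_mask(pred_masks, positive)
    pos_gt = tf.boolean_mask(gt_masks, positive)
    n_pos = tf.cast(tf.shape(pos_pred)[0], tf.float32)
    return tf.reduce_sum(dice_loss(pos_pred, pos_gt)) / tf.maximum(n_pos, 1.0)


def focal_loss(pred_probs, targets, alpha=0.25, gamma=2.0, eps=1e-6):
    """Sigmoid focal loss over all cells and classes, normalized by positives (assumption).

    pred_probs, targets: (S, S, C).
    """
    p = tf.clip_by_value(pred_probs, eps, 1.0 - eps)
    p_t = targets * p + (1.0 - targets) * (1.0 - p)
    alpha_t = targets * alpha + (1.0 - targets) * (1.0 - alpha)
    loss = -alpha_t * tf.pow(1.0 - p_t, gamma) * tf.math.log(p_t)
    return tf.reduce_sum(loss) / tf.maximum(tf.reduce_sum(targets), 1.0)


def solo_loss(cate_pred, cate_target, mask_pred, mask_target, lam=3.0):
    """L = L_cate + lambda * L_mask, with lambda = 3 as in the paper."""
    # Row-major flattening of (S, S) gives k = i * S + j, matching the mask channels
    positive = tf.reshape(tf.reduce_max(cate_target, axis=-1) > 0, [-1])
    return focal_loss(cate_pred, cate_target) + lam * mask_loss(mask_pred, mask_target, positive)
```

For a batch or several FPN levels, the per-grid terms would be accumulated before normalizing; that bookkeeping is left out here.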

Resuming from Checkpoint

If you use a low-performance GPU, you may find that training the entire model in a single run is impractical. So that you don't lose your trained weights and can continue training, this project provides a Resuming from Checkpoint system. It lets you save your model every n epochs (where n is configurable) and resume training later. To enable it, set load_previous_model to True and specify model_path in config.py:

```python
self.load_previous_model = True
self.model_path = './weights/coco_epoch00000001.keras'
```

Evaluation Process

To see how well your model is trained and how it behaves on previously unseen images, an evaluation process is used. For the SOLO model, I would break the process down into the following steps:

- Loading a test dataset.

- Preparing the dataset to be compatible with the model's input.

- Feeding the data into the model.

- Suppressing lower-probability masks that belong to the same instance.

- Visualizing the original test image with the final masks and the predicted class for each instance.
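Between feeding the data into the model and suppression, the raw head outputs have to be turned into a flat list of candidate masks, scores, and labels. A minimal sketch, assuming sigmoid category scores of shape (S, S, C), mask probabilities of shape (S·S, H, W) indexed by k = i·S + j, and a hypothetical pre-NMS threshold of 0.1; `select_candidates` is an illustrative name, not part of the project.

```python
import tensorflow as tf


def select_candidates(cate_probs, mask_probs, score_threshold=0.1):
    """Turns raw head outputs into (masks, scores, labels) for Matrix NMS.

    Args:
        cate_probs: (S, S, C) sigmoid category scores.
        mask_probs: (S*S, H, W) mask probabilities, channel k = i*S + j.
    """
    num_classes = cate_probs.shape[-1]
    flat = tf.reshape(cate_probs, [-1, num_classes])  # (S*S, C), row k = i*S + j
    idx = tf.where(flat > score_threshold)             # (M, 2): [cell k, class c]
    scores = tf.gather_nd(flat, idx)
    labels = tf.cast(idx[:, 1], tf.int32)
    masks = tf.gather(mask_probs, idx[:, 0])           # one mask per (cell, class) pair
    return masks, scores, labels
```

A cell can produce several candidates if more than one class passes the threshold; class-wise NMS then treats them independently.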

The most unusual task I faced here was implementing Matrix NMS (non-maximum suppression), described in Section 3.3.4 Matrix NMS of the original SOLO paper. NMS eliminates redundant lower-probability masks that represent the same instance; without it, the same instance would be predicted several times. The authors provided Python pseudo-code for Matrix NMS, and one of my tasks was to interpret this pseudo-code and implement it in TensorFlow. My implementation:

```python
import tensorflow as tf


def matrix_nms(masks, scores, labels, pre_nms_k=500, post_nms_k=100, score_threshold=0.5, sigma=0.5):
    """
    Perform class-wise Matrix NMS on instance masks.

    Parameters:
        masks (tf.Tensor): Tensor of shape (N, H, W), each mask a sigmoid probability map (0~1).
        scores (tf.Tensor): Tensor of shape (N,) with confidence scores for each mask.
        labels (tf.Tensor): Tensor of shape (N,) with class labels for each mask (ints).
        pre_nms_k (int): Number of top-scoring masks to keep before applying NMS.
        post_nms_k (int): Number of final masks to keep after NMS.
        score_threshold (float): Score threshold to filter out masks after NMS (default 0.5).
        sigma (float): Sigma value for Gaussian decay.

    Returns:
        tf.Tensor: Tensor of indices of masks kept after suppression.
    """
    # Binarize masks at 0.5 threshold
    seg_masks = tf.cast(masks >= 0.5, dtype=tf.float32)  # shape: (N, H, W)
    mask_sum = tf.reduce_sum(seg_masks, axis=[1, 2])  # shape: (N,)

    # If desired, select top pre_nms_k by score to limit computation
    num_masks = tf.shape(scores)[0]
    if pre_nms_k is not None:
        num_selected = tf.minimum(pre_nms_k, num_masks)
    else:
        num_selected = num_masks
    topk_indices = tf.argsort(scores, direction='DESCENDING')[:num_selected]
    seg_masks = tf.gather(seg_masks, topk_indices)  # select masks by top scores
    labels_sel = tf.gather(labels, topk_indices)
    scores_sel = tf.gather(scores, topk_indices)
    mask_sum_sel = tf.gather(mask_sum, topk_indices)

    # Flatten masks for matrix operations (explicit H*W also works when N == 0)
    N = tf.shape(seg_masks)[0]
    seg_masks_flat = tf.reshape(seg_masks, (N, tf.shape(seg_masks)[1] * tf.shape(seg_masks)[2]))  # (N, H*W)

    # Compute intersection and IoU matrix (N x N)
    intersection = tf.matmul(seg_masks_flat, seg_masks_flat, transpose_b=True)  # pairwise intersect counts
    # Expand mask areas to full matrices
    mask_sum_matrix = tf.tile(mask_sum_sel[tf.newaxis, :], [N, 1])  # shape: (N, N)
    union = mask_sum_matrix + tf.transpose(mask_sum_matrix) - intersection
    iou = intersection / (union + 1e-6)  # IoU matrix (avoid div-by-zero)

    # Zero out diagonal and lower triangle (keep i < j: mask i scores higher than mask j)
    iou = tf.linalg.band_part(iou, 0, -1) - tf.linalg.band_part(iou, 0, 0)
    # Class-wise: only masks with the same label can suppress each other
    same_label = tf.cast(tf.equal(labels_sel[:, tf.newaxis], labels_sel[tf.newaxis, :]), tf.float32)
    iou = iou * same_label

    # For each mask i, its largest IoU with any higher-scoring mask of the same class
    iou_cmax = tf.reduce_max(iou, axis=0)  # shape: (N,)
    iou_cmax = tf.tile(iou_cmax[:, tf.newaxis], [1, N])  # row i holds the value for mask i
    # Gaussian decay as in the paper's pseudo-code, then the minimum over all suppressors
    decay = tf.exp(-(tf.square(iou) - tf.square(iou_cmax)) / sigma)
    decay = tf.reduce_min(decay, axis=0)  # shape: (N,)
    new_scores = scores_sel * decay

    # Filter by score threshold after decay
    keep_mask = new_scores >= score_threshold  # boolean mask of those above threshold
    new_scores = tf.where(keep_mask, new_scores, tf.zeros_like(new_scores))

    # Select top post_nms_k by the decayed scores
    if post_nms_k is not None:
        num_final = tf.minimum(post_nms_k, tf.shape(new_scores)[0])
    else:
        num_final = tf.shape(new_scores)[0]
    final_indices = tf.argsort(new_scores, direction='DESCENDING')[:num_final]
    final_indices = tf.boolean_mask(final_indices, tf.greater(tf.gather(new_scores, final_indices), 0))

    # Map back to original indices
    kept_indices = tf.gather(topk_indices, final_indices)
    return kept_indices
```

Below is an example of an image the model has never seen before, overlaid with the masks it predicted:

Advice for Implementing from Scratch

- Which data goes to which function? It is very important to make sure that we feed the right data to the model. The data should match what each layer expects, and each layer should process its input so that the output is suitable for the next layer, because the loss function is ultimately computed from this data. While implementing SOLO, I realized that some targets are not as simple as they seem at first glance. I described this in the Dataset chapter.

- Research the paper. There is no way around reading the paper you are about to build your model from. I know it's obvious, but despite the many references to earlier works and papers, you need to understand the principles. When you start researching a paper, you may face many other papers you need to read and understand first, which can be quite challenging. Usually, however, even the most recent paper is built on a set of principles that have been known for some time. This means you can find plenty of material on the Internet that explains these principles very clearly. You can also use LLM tools for this purpose: they can summarize information, give examples, and help you understand some of the works and papers.

- Start with small steps. This is trivial advice, but when implementing a computer vision model with millions of parameters, you don't want to waste time on pointless training, dataset preparation, evaluation, and so on while you are still in the development stage and not sure the model will work correctly. With a low-performance GPU, the process takes even longer. So don't start with huge datasets, many parameters, and a long series of layers. In the first stage of development you can even let the model overfit on a small dataset with a small number of parameters, to make sure the data is correctly matched to the model's targets.

- Debug your code. Debugging lets you make sure your code behaves as expected and holds the expected data values at each step. I understand that everyone who has developed a software product at least once knows this and doesn't need the advice. I still want to highlight it, because when building models, writing the loss function, and preparing inputs and targets, we work a lot with math operations and tensors. This requires more attention than the routine code we write every day and trust to work without debugging.
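One cheap way to apply the last two tips in TensorFlow is to assert shapes and finiteness at the boundaries between components. The sketch below uses `tf.debugging.assert_shapes` and `tf.debugging.assert_all_finite`; the helper name `check_head_outputs` and the assumed layouts (batch, S, S, C) for category predictions and (batch, S, S, E) for kernels are illustrative.

```python
import tensorflow as tf


def check_head_outputs(cate_pred, kernel_pred, grid_size, num_classes):
    """Fails fast if head outputs have unexpected shapes or contain NaN/Inf."""
    # 'B' and 'E' are symbols: they must be consistent across the listed tensors
    tf.debugging.assert_shapes([
        (cate_pred, ('B', grid_size, grid_size, num_classes)),
        (kernel_pred, ('B', grid_size, grid_size, 'E')),
    ])
    tf.debugging.assert_all_finite(cate_pred, 'category predictions contain NaN or Inf')
    tf.debugging.assert_all_finite(kernel_pred, 'kernel predictions contain NaN or Inf')
```

Calling such checks right after the model's forward pass and before the loss makes a mismatched target layout show up as a clear error instead of a silently wrong loss.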

Conclusion

This is a brief description of the project that avoids going deep into technical details, to give a general picture and spare the reader fatigue. Obviously, a project dedicated to a computer vision model cannot be fully described in one article. If I see interest in the project from readers, I may write a more detailed analysis with technical details.