Who should read this article?

This article aims to provide a basic, beginner-level understanding of how NeRF works through visual representations. While various blogs offer detailed explanations of NeRF, these are often geared toward readers with a strong technical background in volume rendering and 3D graphics. In contrast, this article seeks to explain NeRF with minimal prerequisite knowledge, with an optional technical snippet at the end for curious readers. For those interested in the mathematical details behind NeRF, a list of further readings is provided at the end.

What is NeRF and How Does It Work?

NeRF, short for Neural Radiance Fields, is a 2020 paper introducing a novel method for rendering 2D images from 3D scenes. Traditional approaches rely on physics-based, computationally intensive techniques such as ray casting and ray tracing. These involve tracing a ray of light from each pixel of the 2D image back to the scene particles to estimate the pixel color. While these methods offer high accuracy (e.g., images captured by phone cameras closely approximate what the human eye perceives from the same angle), they are often slow and require significant computational resources, such as GPUs, for parallel processing. As a result, implementing these methods on edge devices with limited computing capabilities is nearly impossible.

NeRF addresses this issue by functioning as a scene compression method. It uses an overfitted multi-layer perceptron (MLP) to encode scene information, which can then be queried from any viewing direction to generate a 2D rendered image. When properly trained, NeRF significantly reduces storage requirements; for example, a simple 3D scene can typically be compressed into about 5 MB of data.

At its core, NeRF answers the following question using an MLP:

What will I see if I view the scene from this direction?

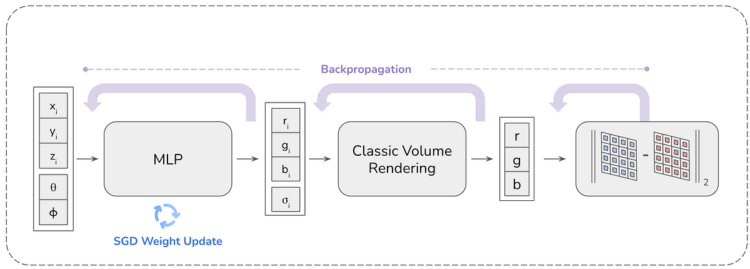

This question is answered by providing the viewing direction (as two angles (θ, φ) or as a unit vector) to the MLP as input. The MLP outputs an RGB value (the color emitted in that direction) and a volume density, which are then processed through volumetric rendering to produce the final RGB value that the pixel sees. To create an image of a given resolution (say H×W), the MLP is queried H×W times, once for each pixel's viewing direction, and the results are assembled into the image. Since the release of the first NeRF paper, numerous updates have been made to improve rendering quality and speed. However, this blog will focus on the original NeRF paper.
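To make the volumetric rendering step a little more concrete, here is a minimal sketch in plain Python. It assumes the MLP has already returned a color and a volume density for a handful of sample points along one pixel's ray, and it blends them with the standard alpha-compositing rule for volume rendering. The function name `composite_ray` and its arguments are hypothetical, chosen only for illustration; they are not taken from the paper's code.

```python
import math

def composite_ray(colors, densities, deltas):
    """Blend per-sample colors along one ray into a single pixel color.

    colors:    list of (r, g, b) tuples, one per sample, ordered near to far
    densities: list of non-negative volume densities, one per sample
    deltas:    list of distances between consecutive samples
    (All three inputs are assumed to come from earlier steps.)
    """
    pixel = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light not yet blocked by nearer samples
    for (r, g, b), sigma, delta in zip(colors, densities, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this ray segment
        weight = transmittance * alpha          # contribution of this sample
        pixel[0] += weight * r
        pixel[1] += weight * g
        pixel[2] += weight * b
        transmittance *= 1.0 - alpha            # light left for farther samples
    return tuple(pixel)
```

Samples with high density close to the camera dominate the result, because they leave little transmittance for anything behind them. Empty space (zero density) contributes nothing.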

Step 1: Multi-view input images

NeRF needs multiple images from different viewing angles to compress a scene. The MLP learns to interpolate between these images for unseen viewing directions (novel views). The viewing direction of each image is specified through the camera's intrinsic and extrinsic matrices. The more images there are, and the wider the range of viewing directions they span, the better the NeRF reconstruction of the scene. In short, the basic NeRF takes camera images as input, together with their associated intrinsic and extrinsic camera matrices. (You can learn more about the camera matrices in the blog below.)

Steps 2 to 4: Sampling, Pixel iteration, and Ray casting

Each image in the input set is processed independently (for the sake of simplicity). From the input, an image and its associated camera matrices are sampled. For each pixel of the camera image, a ray is traced from the camera center through the pixel and extended outwards. If the camera center is defined as o and the viewing direction as the direction vector d, then the ray r(t) can be defined as r(t) = o + td, where t is the distance of the point r(t) from the camera center (assuming d has unit length).

Ray casting is done to identify the parts of the scene that contribute to the color of the pixel.
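The ray definition r(t) = o + td can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: a pinhole camera with the principal point at the image center, a focal length given in pixels, a camera that looks along its negative z axis, and extrinsics supplied as a 3×3 camera-to-world rotation plus the camera center o. The helper names `pixel_ray` and `point_on_ray` are hypothetical.

```python
import math

def pixel_ray(u, v, width, height, focal, cam_to_world_rot, cam_origin):
    """Return (o, d) for the ray through the center of pixel (u, v).

    Assumptions: pinhole camera, principal point at the image center,
    focal length in pixels, camera looking along its -z axis.
    cam_to_world_rot is a 3x3 rotation given as a list of rows.
    """
    # Ray direction in camera coordinates (x right, y up, looking down -z).
    d_cam = ((u + 0.5 - width / 2) / focal,
             -(v + 0.5 - height / 2) / focal,
             -1.0)
    # Rotate the direction into world coordinates.
    d_world = [sum(row[k] * d_cam[k] for k in range(3))
               for row in cam_to_world_rot]
    # Normalize so that t measures distance from the camera center.
    norm = math.sqrt(sum(c * c for c in d_world))
    d = tuple(c / norm for c in d_world)
    return tuple(cam_origin), d

def point_on_ray(o, d, t):
    """Evaluate r(t) = o + t * d."""
    return tuple(oc + t * dc for oc, dc in zip(o, d))
```

Calling `pixel_ray` once per pixel gives the H×W rays for one image, and `point_on_ray` then returns the scene locations visited along each ray for chosen values of t.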