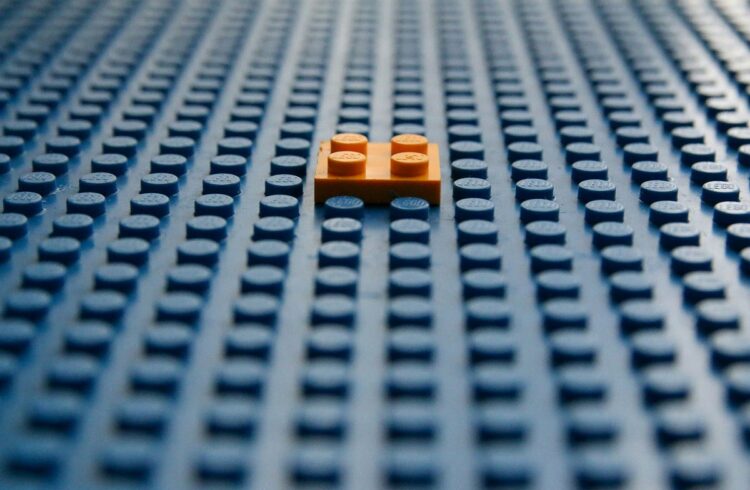

As illustrated in figure 1, DSPy is a PyTorch-like/Lego-like framework for building LLM-based apps. Out of the box, it comes with:

- Signatures: These are specifications that define the input and output behaviour of a DSPy program. They can be defined using shorthand notation (like “question -> answer”, where the framework automatically understands that question is the input and answer is the output) or declaratively using Python classes (more on this in later sections).

- Modules: These are layers of predefined components for powerful concepts like Chain of Thought, ReAct or even simple text completion (Predict). These modules abstract the underlying brittle prompts while still providing extensibility through custom components.

- Optimizers: These are unique to the DSPy framework and draw inspiration from PyTorch itself. These optimizers make use of annotated datasets and evaluation metrics to help tune/optimize our LLM-powered DSPy programs.

- Data, Metrics, Assertions and Trackers are some of the other components of this framework, which act as glue and work behind the scenes to enrich the overall framework.
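To make the shorthand notation concrete, here is a minimal sketch of how a string such as “question -> answer” could be split into input and output field names. The helper `parse_signature` is hypothetical and written for illustration only; it is not DSPy's own parser, which handles considerably more than this.

```python
def parse_signature(spec: str) -> tuple[list[str], list[str]]:
    """Split 'a, b -> c' into (['a', 'b'], ['c']). Illustrative only."""
    if spec.count("->") != 1:
        raise ValueError(f"expected exactly one '->' in {spec!r}")
    left, right = spec.split("->")
    # Field names are comma-separated on each side of the arrow.
    inputs = [name.strip() for name in left.split(",") if name.strip()]
    outputs = [name.strip() for name in right.split(",") if name.strip()]
    if not inputs or not outputs:
        raise ValueError("a signature needs at least one input and one output")
    return inputs, outputs


print(parse_signature("question -> answer"))
print(parse_signature("context, question -> answer"))
```

Everything left of the arrow is treated as an input field and everything to the right as an output field, which is the reading the shorthand relies on.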

To build an app/program using DSPy, we go through a modular yet step-by-step approach (as shown in figure 1 (right)). We first define our task to help us clearly define our program’s signature (input and output specifications). This is followed by building a pipeline program which makes use of one or more abstracted prompt modules, a language model module as well as retrieval model modules. Once we have all of this in place, we then proceed to gather some examples along with the required metrics to evaluate our setup, which are used by optimizers and assertion components to compile a powerful app.
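The role that examples and metrics play in this workflow can be sketched without any framework code. The snippet below is an illustration under stated assumptions: `exact_match`, `evaluate` and `toy_program` are hypothetical names, and a real DSPy setup would use the framework's own example and evaluation utilities instead.

```python
from typing import Callable


def exact_match(expected: str, predicted: str) -> bool:
    """Case- and whitespace-insensitive comparison of two answers."""
    return expected.strip().lower() == predicted.strip().lower()


def evaluate(program: Callable[[str], str],
             examples: list[tuple[str, str]],
             metric: Callable[[str, str], bool] = exact_match) -> float:
    """Return the fraction of (question, answer) examples the program gets right."""
    if not examples:
        return 0.0
    hits = sum(metric(answer, program(question)) for question, answer in examples)
    return hits / len(examples)


def toy_program(question: str) -> str:
    # Stand-in for an LLM-backed program: a lookup table instead of a model call.
    known = {"What does RAG stand for?": "Retrieval Augmented Generation"}
    return known.get(question, "unknown")


examples = [
    ("What does RAG stand for?", "retrieval augmented generation"),
    ("What does RLHF stand for?", "reinforcement learning from human feedback"),
]
score = evaluate(toy_program, examples)
print(f"accuracy: {score:.2f}")
```

A score of this kind is what lets an optimizer compare candidate versions of a program against the same set of examples.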

Langfuse is an LLM engineering platform designed to empower developers in building, managing, and optimizing LLM-powered applications. While it offers both managed and self-hosting solutions, we’ll focus on the self-hosting option in this post, providing you with full control over your LLM infrastructure.

Key Highlights of Langfuse Setup

Langfuse equips you with a suite of powerful tools to streamline the LLM development workflow:

- Prompt Management: Effortlessly version and retrieve prompts, ensuring reproducibility and facilitating experimentation.

- Tracing: Gain deep visibility into your LLM applications with detailed traces, enabling efficient debugging and troubleshooting. The intuitive out-of-the-box UI enables teams to annotate model interactions to develop and evaluate training datasets.

- Metrics: Track crucial metrics such as cost, latency, and token usage, empowering you to optimize performance and control expenses.

- Evaluation: Capture user feedback, annotate LLM responses, and even set up evaluation functions to continuously assess and improve your models.

- Datasets: Manage and organize datasets derived from your LLM applications, facilitating further fine-tuning and model enhancement.
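As a rough illustration of the cost metric, the following sketch derives a per-call cost from token counts. The prices are placeholder values chosen for the example, not actual provider pricing, and `Usage` and `estimate_cost` are hypothetical names rather than part of the Langfuse SDK.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    prompt_tokens: int
    completion_tokens: int


# Placeholder prices in USD per 1,000 tokens (assumed values for illustration).
PRICE_PER_1K_PROMPT = 0.001
PRICE_PER_1K_COMPLETION = 0.002


def estimate_cost(usage: Usage) -> float:
    """Estimate the cost of one LLM call from its token usage."""
    prompt_cost = usage.prompt_tokens / 1000 * PRICE_PER_1K_PROMPT
    completion_cost = usage.completion_tokens / 1000 * PRICE_PER_1K_COMPLETION
    return prompt_cost + completion_cost


calls = [Usage(1200, 300), Usage(800, 150)]
total_tokens = sum(u.prompt_tokens + u.completion_tokens for u in calls)
total = sum(estimate_cost(u) for u in calls)
print(f"total tokens: {total_tokens}")
print(f"estimated cost: ${total:.4f}")
```

Prompt and completion tokens are priced separately here because providers commonly bill them at different rates.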

Easy Setup

Langfuse’s self-hosting solution is remarkably easy to set up, leveraging a Docker-based architecture that you can quickly spin up using docker compose. This streamlined approach minimizes deployment complexities and allows you to focus on building your LLM applications.

Framework Compatibility

Langfuse seamlessly integrates with popular LLM frameworks like LangChain, LlamaIndex, and, of course, DSPy, making it a versatile tool across a wide range of LLM development setups.

By integrating Langfuse into your DSPy applications, you unlock a wealth of observability capabilities that enable you to monitor, analyze, and optimize your models in real time.

Integrating Langfuse into Your DSPy App

The integration process is straightforward and involves instrumenting your DSPy code with Langfuse’s SDK.

    import dspy
    from dsp.trackers.langfuse_tracker import LangfuseTracker

    # configure tracker
    langfuse = LangfuseTracker()

    # instantiate openai client
    openai = dspy.OpenAI(
        model='gpt-4o-mini',
        temperature=0.5,
        max_tokens=1500
    )

    # dspy predict supercharged with automatic langfuse trackers
    openai("What is DSPy?")
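Conceptually, a tracker records what went into and came out of each model call, along with timing. The sketch below illustrates that idea with a plain Python decorator; `traced`, `TRACES` and `fake_llm` are hypothetical names, and this is not how `LangfuseTracker` is implemented.

```python
import time
from functools import wraps

TRACES: list[dict] = []  # in-memory stand-in for a tracing backend


def traced(fn):
    """Record input, output and latency of every call to `fn`."""
    @wraps(fn)
    def wrapper(prompt: str) -> str:
        start = time.perf_counter()
        output = fn(prompt)
        TRACES.append({
            "name": fn.__name__,
            "input": prompt,
            "output": output,
            "latency_s": time.perf_counter() - start,
        })
        return output
    return wrapper


@traced
def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call.
    return f"echo: {prompt}"


fake_llm("What is DSPy?")
print(TRACES[0]["name"], "|", TRACES[0]["input"], "->", TRACES[0]["output"])
```

A real tracing backend would ship these records to a server and group them into sessions, but the captured fields are similar in spirit.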

Gaining Insights with Langfuse

Once integrated, Langfuse provides a range of actionable insights into your DSPy application’s behavior:

- Trace-Based Debugging: Follow the execution flow of your DSPy programs, pinpoint bottlenecks, and identify areas for improvement.

- Performance Monitoring: Track key metrics like latency and token usage to ensure optimal performance and cost-efficiency.

- User Interaction Analysis: Understand how users interact with your LLM app, identify common queries, and spot opportunities for enhancement.

- Data Collection & Fine-Tuning: Collect and annotate LLM responses, building valuable datasets for further fine-tuning and model refinement.

Use Cases Amplified

The combination of DSPy and Langfuse is particularly important in the following scenarios:

- Complex Pipelines: When dealing with complex DSPy pipelines involving multiple modules, Langfuse’s tracing capabilities become indispensable for debugging and understanding the flow of information.

- Production Environments: In production settings, Langfuse’s monitoring features help ensure your LLM app runs smoothly, providing early warnings of potential issues while keeping an eye on the costs involved.

- Iterative Development: Langfuse’s evaluation and dataset management tools facilitate data-driven iteration, allowing you to continuously refine your LLM app based on real-world usage.

To truly showcase the power and versatility of DSPy combined with the excellent monitoring capabilities of Langfuse, I’ve recently applied them to a unique dataset: my recent LLM workshop GitHub repository. This full-day workshop contains a lot of material to get you started with LLMs. The aim of this Q&A bot was to assist participants during and after the workshop with answers to a host of NLP and LLM-related topics covered in the workshop. This “meta” use case not only demonstrates the practical application of these tools but also adds a touch of self-reflection to our exploration.

The Task: Building a Q&A System

For this exercise, we’ll leverage DSPy to build a Q&A system capable of answering questions about the content of my workshop (notebooks, markdown files, etc.). This task highlights DSPy’s ability to process and extract information from textual data, a crucial capability for a wide range of LLM applications. Imagine having a personal AI assistant (or co-pilot) that can help you recall details from your past weeks, identify patterns in your work, and even surface forgotten insights! It also presents a strong case for how such a modular setup can be easily extended to any other textual dataset with little to no effort.

Let us begin by setting up the required objects for our program.

    import os
    import dspy
    import chromadb
    from chromadb.utils import embedding_functions
    from dsp.trackers.langfuse_tracker import LangfuseTracker

    config = {
        'LANGFUSE_PUBLIC_KEY': 'XXXXXX',
        'LANGFUSE_SECRET_KEY': 'XXXXXX',
        'LANGFUSE_HOST': 'http://localhost:3000',
        'OPENAI_API_KEY': 'XXXXXX',
        'OPENAI_BASE_URL': 'XXXXXX',
        'OPENAI_PROVIDER': 'XXXXXX',
        'CHROMA_DB_PATH': './chromadb/',
        'CHROMA_COLLECTION_NAME': "supercharged_workshop_collection",
        'CHROMA_EMB_MODEL': 'all-MiniLM-L6-v2'
    }

    # setting config
    os.environ["LANGFUSE_PUBLIC_KEY"] = config.get('LANGFUSE_PUBLIC_KEY')
    os.environ["LANGFUSE_SECRET_KEY"] = config.get('LANGFUSE_SECRET_KEY')
    os.environ["LANGFUSE_HOST"] = config.get('LANGFUSE_HOST')
    os.environ["OPENAI_API_KEY"] = config.get('OPENAI_API_KEY')

    # setup Langfuse tracker
    langfuse_tracker = LangfuseTracker(session_id='supercharger001')

    # instantiate language model for DSPy
    llm_model = dspy.OpenAI(
        api_key=config.get('OPENAI_API_KEY'),
        model='gpt-4o-mini'
    )

    # instantiate chromadb client and the embedding function it will use
    chroma_emb_fn = embedding_functions.SentenceTransformerEmbeddingFunction(
        model_name=config.get('CHROMA_EMB_MODEL')
    )
    client = chromadb.HttpClient()

    # setup chromadb collection (cosine distance for similarity search)
    collection = client.create_collection(
        config.get('CHROMA_COLLECTION_NAME'),
        embedding_function=chroma_emb_fn,
        metadata={"hnsw:space": "cosine"}
    )

Once we have these clients and trackers in place, let us quickly add some documents to our collection (refer to this notebook for a detailed walkthrough of how I prepared this dataset in the first place).

    # Add to collection
    collection.add(
        documents=[v for _, v in nb_scraper.notebook_md_dict.items()],
        ids=doc_ids,  # must be unique for each doc
    )
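Since every document ID has to be unique, one simple option is to prefix each notebook name with its position. The sketch below assumes a dictionary that maps notebook names to markdown text, similar in spirit to `notebook_md_dict`; the helper `build_doc_ids` and the sample data are hypothetical, and the original dataset preparation may well differ.

```python
def build_doc_ids(notebook_md: dict[str, str]) -> list[str]:
    """Create one ID per document as '<index>_<name>'.

    The index prefix keeps IDs unique even if two names were to collide.
    """
    return [f"{idx}_{name}" for idx, name in enumerate(notebook_md)]


# Sample data for illustration only.
notebook_md = {
    "module_01_getting_started": "# Getting Started ...",
    "module_02_text_generation": "# Text Generation ...",
}
doc_ids = build_doc_ids(notebook_md)
documents = list(notebook_md.values())

# IDs and documents stay aligned because both follow the dict's insertion order.
for doc_id, doc in zip(doc_ids, documents):
    print(doc_id, "->", doc)
```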

The next step is to simply connect our chromadb retriever to the DSPy framework. The following snippet creates an RM (retrieval model) object and tests whether the retrieval works as intended.

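    from dspy.retrieve.chromadb_rm import ChromadbRM
    from IPython.display import display, Markdown

    retriever_model = ChromadbRM(
        config.get('CHROMA_COLLECTION_NAME'),
        config.get('CHROMA_DB_PATH'),
        embedding_function=chroma_emb_fn,
        client=client,
        k=5
    )

    # register the LM and RM with DSPy so that modules such as dspy.Retrieve can use them
    dspy.settings.configure(lm=llm_model, rm=retriever_model)

    # Test Retrieval
    results = retriever_model("RLHF")
    for result in results:
        display(Markdown(f"__Document__::{result.long_text[:100]}... \n"))
        display(Markdown(f">- __Document id__::{result.id} \n>- __Document score__::{result.score}"))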

The output looks promising given that, without any intervention, Chromadb is able to fetch the most relevant documents. Note that the score is a cosine distance, so a lower value indicates a closer match.

Document::# Quick Overview of RLFH The performance of Language Models till GPT-3 was kind of amazing as-is. ...

- Document id::6_module_03_03_RLHF_phi2

- Document score::0.6174977412306334

Document::# Getting Started : Text Representation Image

The NLP domain ...

- Document id::2_module_01_02_getting_started

- Document score::0.8062083377747705

Document::# Text Generation ...

- Document id::3_module_02_02_simple_text_generator

- Document score::0.8826038964887366

Document::# Image DSPy: Beyond Prompting

...

...

- Document id::12_module_04_05_dspy_demo

- Document score::0.9200280698248913
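The scores above are easier to read once the distance measure is spelled out. The following sketch computes cosine distance (one minus cosine similarity) and ranks a few toy vectors by it; the vectors and names are made up for illustration and are not real embeddings.

```python
import math


def cosine_distance(a: list[float], b: list[float]) -> float:
    """Return 1 - cosine similarity; 0 means same direction, larger means less similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


query = [1.0, 0.0]
docs = {
    "same_direction": [2.0, 0.0],
    "partly_related": [1.0, 1.0],
    "unrelated": [0.0, 3.0],
}

# Sort ascending: the smallest distance is the best match.
ranked = sorted(docs, key=lambda name: cosine_distance(query, docs[name]))
for name in ranked:
    print(f"{name}: {cosine_distance(query, docs[name]):.3f}")
```

Because the measure only looks at direction, the vector `[2.0, 0.0]` is a perfect match for the query despite having a different length.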

The final step is to piece all of this together into a DSPy program. For our simple Q&A use case, we prepare a standard RAG program leveraging Chromadb as our retriever and Langfuse as our tracker. The following snippet presents the PyTorch-like approach of developing LLM-based apps without worrying about brittle prompts!

    # RAG Signature
    class GenerateAnswer(dspy.Signature):
        """Answer questions with short factoid answers."""

        context = dspy.InputField(desc="may contain relevant facts")
        question = dspy.InputField()
        answer = dspy.OutputField(desc="often less than 50 words")

    # RAG Program
    class RAG(dspy.Module):
        def __init__(self, num_passages=3):
            super().__init__()
            # retrieve the top-k passages, then answer with chain-of-thought
            self.retrieve = dspy.Retrieve(k=num_passages)
            self.generate_answer = dspy.ChainOfThought(GenerateAnswer)

        def forward(self, question):
            context = self.retrieve(question).passages
            prediction = self.generate_answer(context=context, question=question)
            return dspy.Prediction(context=context, answer=prediction.answer)

    # compile a RAG
    # note: we are not using any optimizers for this example
    compiled_rag = RAG()
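To see what the module hides, the retrieve-then-generate flow can be written out by hand. This is a dependency-free sketch under stated assumptions: the keyword-overlap retriever, the prompt template and the names `retrieve` and `build_prompt` are all hypothetical, and DSPy constructs its prompts differently.

```python
def retrieve(question: str, corpus: list[str], k: int = 2) -> list[str]:
    """Rank passages by the number of words they share with the question."""
    q_words = set(question.lower().split())
    scored = sorted(
        corpus,
        key=lambda passage: len(q_words & set(passage.lower().split())),
        reverse=True,
    )
    return scored[:k]


def build_prompt(question: str, passages: list[str]) -> str:
    """Assemble the retrieved context and the question into a single prompt string."""
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"


corpus = [
    "RLHF aligns language models using human feedback",
    "Text generation produces tokens one at a time",
    "DSPy programs are composed of modules",
]
question = "what is RLHF"
passages = retrieve(question, corpus, k=1)
prompt = build_prompt(question, passages)
print(prompt)
```

The resulting string is what would be sent to a language model; keeping retrieval and prompt assembly as separate steps mirrors the split between the retrieve and generate stages of the RAG program.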

Phew! Wasn’t that quick and simple to do? Let us now put this into action using a few sample questions.

    my_questions = [
        "List the models covered in module03",
        "Brief summary of module02",
        "What is LLaMA?"
    ]

    for question in my_questions:
        # Get the prediction. This contains `pred.context` and `pred.answer`.
        pred = compiled_rag(question)
        display(Markdown(f"__Question__: {question}"))
        display(Markdown(f"__Predicted Answer__: _{pred.answer}_"))
        display(Markdown("__Retrieved Contexts (truncated):__"))
        for idx, cont in enumerate(pred.context):
            print(f"{idx+1}. {cont[:200]}...")
        print()
        display(Markdown('---'))

The output is indeed quite on point and serves the purpose of being an assistant for this workshop material, answering questions and guiding the attendees well.