In this article, you’ll learn how machine learning is evolving in 2026 from prediction-focused systems into deeply integrated, action-oriented systems that drive real-world workflows.

Topics we’ll cover include:

- Why agentic AI and generative AI are reshaping how machine learning systems are designed and deployed.

- How specialized models, edge deployment, and operational maturity are changing what effective machine learning looks like in practice.

- Why human collaboration, explainability, and responsible design are becoming essential as machine learning moves deeper into decision-making.

Let’s not waste any more time.

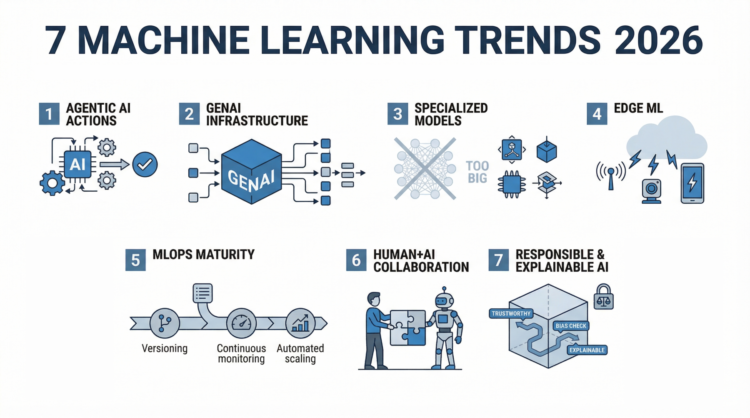

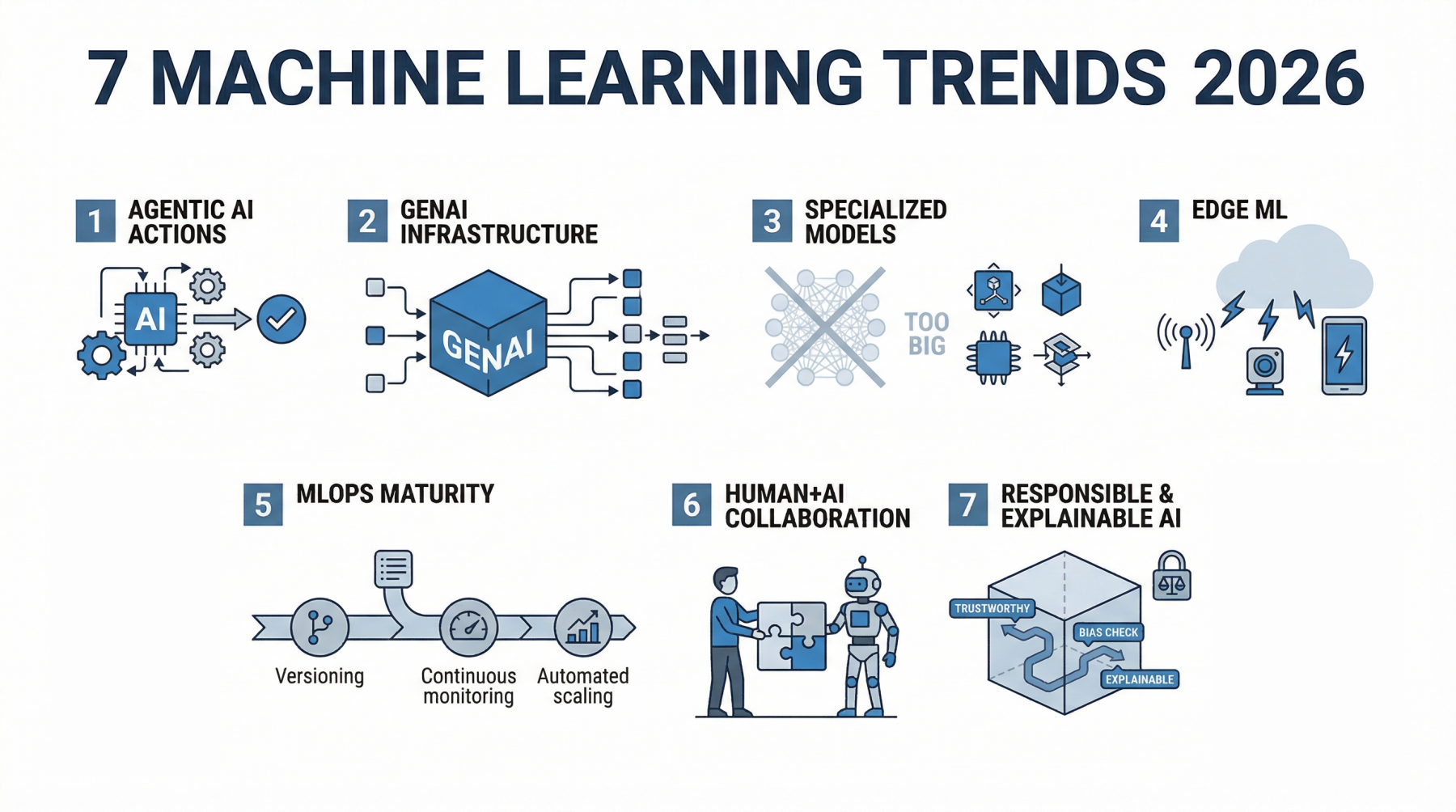

7 Machine Learning Trends to Watch in 2026

Image by Editor

The Shifting Trend Landscape

A few years ago, most machine learning systems sat quietly behind dashboards. You gave them data, they returned predictions, and a human still had to decide what to do next. That boundary is fading. In 2026, machine learning is no longer just something you query. It’s something that acts, often without waiting for permission.

The shift didn’t happen overnight. In 2023 and 2024, the focus was on capability: bigger models, better benchmarks, and more impressive demos. Teams rushed to plug AI into products just to prove they could. What followed was a reality check. Many of those early implementations struggled in production. They were expensive, hard to maintain, and often disconnected from real workflows.

Now the focus has changed. Machine learning is being designed around outcomes, not just outputs. Systems are expected to complete tasks, not just assist with them. A customer support model doesn’t just suggest replies; it resolves tickets. A data pipeline doesn’t just flag anomalies; it triggers actions. The difference is subtle, but it changes how everything is built.

This shift is also reflected in how much money is moving into the space. Global AI spending is projected to reach $2.02 trillion by 2026. At the same time, the machine learning market is expected to grow toward $1.88 trillion by 2035. These aren’t speculative investments anymore. They reflect systems that are already being embedded into core business operations.

What stands out in 2026 is not just how powerful these models are, but how deeply they’re integrated. Machine learning is no longer sitting on the side as an experimental feature. It’s part of the workflow itself, shaping decisions, automating processes, and, in many cases, running them end to end.

Here are the 7 trends actually shaping how machine learning is being built and used in 2026.

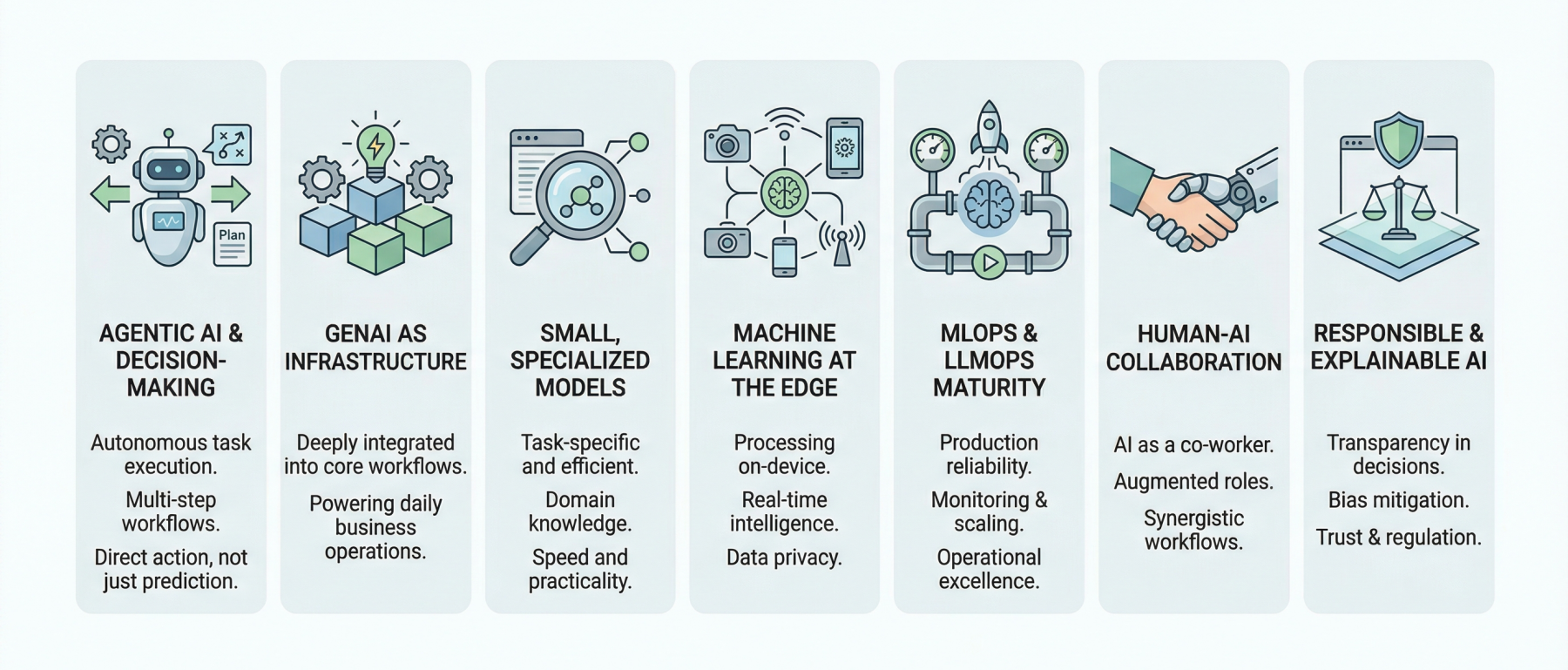

Trend 1: Agentic AI Moves From Assistants to Decision-Makers

For a long time, machine learning systems behaved like quiet assistants. You gave them input, they returned an output, and the responsibility of acting on that output stayed with a human or another system. That model is breaking down.

Agentic AI changes the role entirely. Instead of waiting for instructions, these systems can plan, make decisions, and carry out tasks from start to finish.

The difference becomes clear when you compare it to traditional machine learning. A typical model might predict customer churn or classify support tickets. Useful, but limited. An agentic system takes it further. It identifies a high-risk customer, decides on the best retention strategy, drafts a personalized message, and triggers the outreach. The output is no longer just a prediction. It’s an action.

What makes this possible is the ability to handle multi-step workflows. Agentic systems can break down a goal into smaller tasks, execute them in sequence, and adjust along the way. They can pull data from different sources, call APIs, generate responses, and refine decisions based on feedback. This is closer to how a human approaches a problem than how a traditional model operates.
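The plan, execute, and adjust loop can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular agent framework works: the tool functions (`lookup_risk`, `choose_offer`, `draft_message`), the fixed `plan`, and the customer ID format are all hypothetical stand-ins. In a real system the plan would typically come from a model, and the tools would call real services.

```python
from typing import Callable, Dict, List, Tuple


def lookup_risk(customer_id: str) -> str:
    # Hypothetical stand-in for a churn model or CRM query.
    return "high" if customer_id.endswith("7") else "low"


def choose_offer(risk: str) -> str:
    # Hypothetical retention policy.
    return "10% discount" if risk == "high" else "none"


def draft_message(offer: str) -> str:
    # Hypothetical stand-in for a generative model call.
    return f"We'd like to offer you a {offer}."


# Registry of tools the agent is allowed to call.
TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_risk": lookup_risk,
    "choose_offer": choose_offer,
    "draft_message": draft_message,
}


def plan(goal: str) -> List[str]:
    # Assumption: a fixed plan. A real agent would derive this from the goal.
    return ["lookup_risk", "choose_offer", "draft_message"]


def run_agent(goal: str, customer_id: str, max_steps: int = 5) -> List[Tuple[str, str]]:
    """Run the planned steps in order, feeding each result into the next step."""
    trace: List[Tuple[str, str]] = []
    value = customer_id
    for name in plan(goal)[:max_steps]:
        value = TOOLS[name](value)  # each step consumes the previous result
        trace.append((name, value))
        if name == "choose_offer" and value == "none":
            break  # adjust along the way: no outreach is needed
    return trace


if __name__ == "__main__":
    for step, result in run_agent("retain customer", "c-107"):
        print(step, "->", result)
```

The `max_steps` cap and the early exit are the parts worth noticing: a system that acts on its own needs explicit limits on how far it can go and a way to stop when the next step is no longer warranted.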

You can already see this shift across industries. In customer support, AI agents are resolving entire tickets without escalation. In operations, they’re managing inventory decisions by combining demand forecasts with supply constraints. In healthcare, they assist with tasks like summarizing patient records and recommending next steps, reducing the time clinicians spend on routine work.

The numbers reflect how quickly this is moving. The AI agents market is expected to reach $93.2 billion by 2032. At the same time, reports suggest that up to 40% of enterprise applications could include AI agents by 2026. That level of adoption points to something bigger than a trend. It signals a shift in how software itself is designed.

This is arguably the most important change in machine learning right now. Once systems can act on their own, everything else starts to evolve around that capability. Model design, infrastructure, and even user interfaces begin to revolve around autonomy rather than assistance.

Trend 2: Generative AI Becomes Infrastructure, Not a Feature

There was a time when adding generative AI to a product felt like a headline. A chatbot here, a content generator there. It was visible, sometimes impressive, but often isolated from the rest of the system.

That phase is ending. In 2026, generative AI is no longer treated as an add-on. It’s becoming part of the underlying infrastructure that powers everyday workflows.

You can see this shift in how teams are using it. In software development, it’s embedded directly into coding environments, helping write, review, and even refactor code in real time. Similarly, in business operations, it generates reports, summarizes meetings, and pulls insights from large datasets without requiring manual analysis.

What’s different now is not just capability, but placement. Generative models are no longer sitting on the edges of applications. They’re integrated into the core workflow.

This shift has also forced a move from experimentation to production. Early adopters spent the last two years testing what generative AI could do. Now the focus is on reliability, cost, and consistency. Models are being fine-tuned, combined with traditional machine learning systems, and connected to structured data sources. The result is a hybrid approach where generative AI handles unstructured tasks like text and reasoning, while traditional models handle prediction and optimization.

The impact is already measurable. Companies are reporting up to a 30% reduction in workload after integrating generative AI into their workflows. That kind of improvement is not coming from isolated features. It comes from deep integration.

At this point, the conversation has shifted. Organizations are no longer asking whether they should adopt generative AI. The more relevant question is where it’s still missing, and which parts of the workflow are still operating without it.

Trend 3: Smaller, Specialized Models Start Winning

For a while, progress in machine learning was easy to measure. Bigger models meant better performance. More parameters, more data, and better results. That logic pushed the industry toward massive systems that required serious compute, large budgets, and complex infrastructure.

In 2026, smaller and more specialized models are gaining ground, not because they’re more impressive, but because they’re more practical. These models are designed for specific tasks, trained on focused datasets, and optimized for real-world use rather than benchmark performance.

Small language models (SLMs) are a good example. Instead of trying to handle every possible task, they’re built to perform extremely well within a narrow domain. That could be legal document analysis, customer support conversations, or internal knowledge retrieval. In these cases, a smaller model that understands the context deeply often outperforms a larger, more general one.

The advantages are hard to ignore. Smaller models are cheaper to run, faster to respond, and easier to deploy. They can run on local servers or even directly inside applications without relying heavily on external infrastructure. This reduces latency and gives teams more control over performance and data privacy.

There’s also a shift in how success is measured. Instead of asking how powerful a model is in general, teams are asking how well it performs in a specific context. A model that delivers consistent, accurate results for a single business-critical task is often more valuable than a large model that performs fairly well across many tasks but lacks precision where it matters.

This is where the focus on efficiency comes in. Companies are starting to prioritize models that deliver strong results with lower operational costs. Training and running large models is expensive, and not every use case justifies that investment. Smaller models offer a better balance between performance and cost, especially when deployed at scale.

The underlying shift is simple. The industry is moving away from raw scale as the primary goal and toward usability. In practice, that means building models that fit the problem, not models that try to cover everything.

At this point, model size is no longer a flex. Return on investment is what matters, and specialized models are making a strong case.

Trend 4: Machine Learning Moves to the Edge (IoT + Real-Time Intelligence)

For years, most machine learning systems lived in the cloud. Data was collected, sent to centralized servers, processed, and then returned as predictions. That model worked, but it came with trade-offs: latency, bandwidth costs, and growing concerns around data privacy.

In 2026, that setup is starting to shift. More models are being pushed closer to where data is actually generated.

This is what edge machine learning looks like in practice. Instead of sending video feeds, sensor data, or user inputs to the cloud, the model runs directly on the device or near it. A security camera can detect unusual activity in real time. A mobile app can process voice or image data instantly. Industrial machines can monitor performance and react without waiting for a round trip to a remote server.

The difference between cloud machine learning and edge machine learning comes down to speed and control. Cloud systems are powerful and scalable, but they introduce delays. Edge systems cut the network delay to near zero because the computation happens locally. For use cases that depend on immediate responses, that difference matters.

Real-time inference is where this becomes critical. In areas like autonomous systems, healthcare monitoring, and smart infrastructure, even small delays can affect outcomes. Running models at the edge ensures decisions are made as events happen, not seconds later.

There’s also a growing push around privacy. Sending large volumes of raw data to the cloud raises concerns, especially when that data includes sensitive information. Edge machine learning allows much of that processing to happen locally, with only necessary insights being shared. This reduces exposure and makes compliance easier for companies operating under strict data regulations.
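As a rough sketch of "process locally, share only insights", the function below reduces a window of raw sensor readings to a small summary on the device. The function name, the summary fields, and the z-score threshold are assumptions made for illustration. A real deployment would likely run a trained model here instead of a simple statistical check.

```python
from statistics import mean, pstdev
from typing import Dict, List


def summarize_on_device(readings: List[float], z_threshold: float = 2.0) -> Dict[str, object]:
    """Reduce raw readings to a compact summary; raw values never leave the device."""
    mu = mean(readings)
    sigma = pstdev(readings)  # population standard deviation of this window
    # Flag readings that sit far from the window mean (simple z-score check).
    anomalies = [
        i for i, r in enumerate(readings)
        if sigma > 0 and abs(r - mu) / sigma > z_threshold
    ]
    return {
        "count": len(readings),
        "mean": round(mu, 2),
        "anomaly_indices": anomalies,
    }


if __name__ == "__main__":
    # Made-up temperature window with one spike at index 8.
    window = [20.0, 20.5, 19.5, 20.0, 20.2, 19.8, 20.1, 19.9, 35.0, 20.0]
    print(summarize_on_device(window))
```

Only the returned dictionary would be uploaded. The payload stays the same size regardless of how many readings the window holds, which is where both the bandwidth saving and the privacy benefit come from.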

The scale of connected devices is another factor driving this trend. The number of IoT devices is expected to reach 39 billion by 2030. With that many devices producing continuous streams of data, sending everything to the cloud is no longer efficient or practical.

What is happening here is not a complete shift away from the cloud, but a redistribution of computation. Some tasks will always require centralized processing, but an increasing number of decisions are being made at the edge.

Trend 5: MLOps and LLMOps Become Mandatory

It has never been easier to build a machine learning model. With open-source tools, pre-trained models, and APIs, a working prototype can be up and running in hours. The hard part starts after that.

Running these systems reliably in production is where most teams struggle. This is where MLOps comes in. It focuses on everything that happens after a model is built: versioning, monitoring, deployment, scaling, and continuous updates. As models become more complex, especially with the rise of generative AI, this has expanded into LLMOps and even AgentOps. Each layer introduces new challenges. Prompt management, response evaluation, tool integration, and multi-step execution all need to be handled carefully.

The shift from experimentation to production has exposed gaps that were easy to ignore before. A model that performs well in testing can behave unpredictably in real-world conditions. Data changes, user behavior evolves, and small errors can scale quickly. Without proper monitoring, these issues often go unnoticed until they affect users.

Teams are now treating machine learning systems the same way they treat critical software infrastructure. That means tracking performance over time, managing different versions of models, and setting up pipelines that allow updates without breaking existing systems. It also means building safeguards: logging outputs, detecting anomalies, and creating fallback mechanisms when things go wrong.
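The three safeguards named above (logging outputs, detecting anomalies, falling back) can be combined in a thin wrapper around the model. The sketch below is one hypothetical way to do it: `GuardedModel`, its range check, and the fallback function are illustrative names and choices, not part of any specific MLOps tool.

```python
import logging
from typing import Callable

logger = logging.getLogger("model_guard")


class GuardedModel:
    """Wraps a model with output logging, a range check, and a fallback."""

    def __init__(
        self,
        model: Callable[[float], float],
        fallback: Callable[[float], float],
        low: float,
        high: float,
    ) -> None:
        self.model = model
        self.fallback = fallback
        self.low = low    # smallest output considered plausible
        self.high = high  # largest output considered plausible
        self.fallback_count = 0  # simple counter a dashboard could track

    def predict(self, x: float) -> float:
        try:
            y = self.model(x)
        except Exception:
            # The model crashed: record the traceback and degrade gracefully.
            logger.exception("model raised on input %r; using fallback", x)
            return self._use_fallback(x)
        if not (self.low <= y <= self.high):
            # Anomaly check: the output is outside the expected range.
            logger.warning("output %r outside [%r, %r]; using fallback", y, self.low, self.high)
            return self._use_fallback(x)
        logger.info("input=%r output=%r", x, y)
        return y

    def _use_fallback(self, x: float) -> float:
        self.fallback_count += 1
        return self.fallback(x)


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    guarded = GuardedModel(model=lambda x: 1 / x, fallback=lambda x: 0.5, low=0.0, high=1.0)
    print(guarded.predict(4))    # normal path
    print(guarded.predict(0))    # model raises, fallback is used
    print(guarded.predict(0.5))  # output out of range, fallback is used
```

A range check is the simplest possible anomaly detector. The design point is that the caller always gets an answer, and every degraded answer is counted and logged so the problem is visible before users report it.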

Scaling is another pressure point. A model that works for a few users might fail under heavy demand. Latency increases, costs rise, and performance becomes inconsistent. MLOps practices help manage this by optimizing how models are served and ensuring resources are used efficiently.

What is clear in 2026 is that machine learning is no longer a side project. It’s part of the core system. When it fails, the product fails with it. This is why operational maturity is becoming a competitive advantage. Teams that can deploy, monitor, and improve models continuously will move faster and build more reliable systems. Those that can’t will spend more time fixing issues than delivering value.

At this point, knowing how to build a model is not enough. The real differentiator is knowing how to run it at scale.

Trend 6: Human + AI Collaboration Becomes the Default

The early narrative around AI focused heavily on replacement: jobs lost, roles automated, and entire functions taken over. What’s becoming clearer in 2026 is something more practical. Most of the value is coming from collaboration, not substitution.

AI is starting to feel less like a tool and more like a co-worker. The difference shows up in how work gets done. Instead of using software to execute fixed tasks, people are working alongside systems that can suggest, generate, review, and refine outputs in real time. The human sets direction, provides context, and makes final decisions. The AI handles the heavy lifting in between.

In hospitals, this might look like a system that summarizes patient histories, highlights key risks, and suggests possible next steps, allowing clinicians to focus on judgment and patient interaction. In marketing, teams are using AI to generate campaign ideas, test variations, and analyze performance faster than manual processes would allow. In engineering, developers are writing, reviewing, and debugging code with AI systems that can keep up with the pace of development.

What stands out is not just speed, but how roles are evolving. Tasks that used to take hours are now completed in minutes, which changes how time is spent. Instead of focusing on execution, people are spending more time on strategy, validation, and creative problem-solving.

There’s already a measurable impact. AI-assisted workflows are improving productivity across industries, with many organizations reporting significant efficiency gains as these systems become part of daily operations. These gains aren’t coming from removing humans from the loop, but from changing how they work within it.

This shift also introduces a new kind of skill. Knowing how to ask the right questions, guide outputs, and evaluate results becomes just as important as technical expertise. People who can effectively collaborate with AI systems are able to move faster and produce better results.

The idea of competing with AI is slowly losing relevance. The real advantage now comes from learning how to work with it and understanding where human judgment still matters most.

Trend 7: Responsible and Explainable AI Takes Center Stage

As machine learning systems become more embedded in decision-making, one question keeps coming up: can we trust what these systems are doing?

For a long time, many models operated like black boxes. They produced accurate results, but the reasoning behind those results was difficult to trace. That was acceptable when the stakes were low. It becomes a problem when those same systems are used in areas like finance, healthcare, hiring, or law enforcement.

This is where explainable AI, often called XAI, starts to matter. It focuses on making model decisions more transparent. Instead of just giving an output, the system can show which inputs influenced that decision and how strongly. This makes it easier for teams to validate results, catch errors, and build confidence in how the system behaves.
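For a linear model, "which inputs influenced the decision and how strongly" can be computed exactly: each feature contributes its weight times how far its value sits from a baseline. The sketch below shows that one case with made-up feature names and weights. Non-linear models need approximation methods such as SHAP or LIME, which this example does not attempt to reproduce.

```python
from typing import Dict, List, Tuple


def score(weights: Dict[str, float], inputs: Dict[str, float], bias: float = 0.0) -> float:
    """Linear model: bias plus the weighted sum of the inputs."""
    return bias + sum(weights[name] * inputs[name] for name in weights)


def explain_linear(
    weights: Dict[str, float],
    baseline: Dict[str, float],
    inputs: Dict[str, float],
) -> Dict[str, float]:
    """Contribution of each feature = weight * (value - baseline value)."""
    return {name: weights[name] * (inputs[name] - baseline[name]) for name in weights}


def ranked(contributions: Dict[str, float]) -> List[Tuple[str, float]]:
    """Order features by the size of their influence, largest first."""
    return sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)


if __name__ == "__main__":
    # Hypothetical credit-style example; all numbers are invented.
    weights = {"income": 0.5, "debt": -2.0, "years_employed": 1.0}
    baseline = {"income": 50.0, "debt": 10.0, "years_employed": 5.0}
    applicant = {"income": 60.0, "debt": 15.0, "years_employed": 5.0}
    for name, value in ranked(explain_linear(weights, baseline, applicant)):
        print(f"{name}: {value:+.1f}")
```

The contributions sum to the difference between the applicant's score and the baseline score, so the explanation accounts for the whole decision rather than a part of it. That additivity is the property the approximation methods for more complex models try to preserve.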

At the same time, regulation is starting to catch up with adoption. Governments and regulatory bodies are introducing frameworks that require companies to be more accountable for how their AI systems are built and used. This includes how data is collected, how models are trained, and how decisions are made. Compliance is no longer just a legal concern; it’s becoming part of the product itself.

Bias and fairness are also getting more attention. Machine learning systems learn from data, and if that data reflects existing biases, the model can amplify them. In practical terms, this can lead to unfair outcomes in areas like loan approvals, hiring decisions, or risk assessments. Addressing this requires more than technical fixes. It involves careful data selection, continuous monitoring, and clear accountability for outcomes.
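Continuous monitoring for bias usually starts with a simple measurement. One common check is the gap in approval rates between groups, often called the demographic parity difference. The sketch below computes it on invented records. The group labels and data are placeholders, and a small gap on this one metric does not by itself show that a system is fair.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def approval_rates(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of approved decisions per group, from (group, approved) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, ok in records:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def parity_gap(records: Iterable[Tuple[str, bool]]) -> float:
    """Difference between the highest and lowest group approval rate."""
    rates = approval_rates(list(records))
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Invented decisions: group A approved 8 of 10, group B approved 5 of 10.
    decisions = (
        [("A", True)] * 8 + [("A", False)] * 2
        + [("B", True)] * 5 + [("B", False)] * 5
    )
    print(approval_rates(decisions))
    print(round(parity_gap(decisions), 2))
```

Tracking a number like this over time, alongside accuracy, turns "clear accountability for outcomes" into something a team can alert on.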

Companies are starting to take this seriously, not just because of regulation, but because of user expectations. People want to understand how decisions that affect them are made. If a system denies a request or flags a risk, there needs to be a clear explanation behind it.

This growing focus on responsible AI is visible across both industry and policy. Ethical considerations are no longer treated as side discussions. They’re becoming part of how systems are designed from the start.

The reason is simple. Without trust, adoption slows down. It doesn’t matter how powerful a system is if people are hesitant to rely on it. In 2026, building accurate models is only part of the job. Building systems that people can understand and trust is just as important.

Wrapping Up

In 2026, machine learning is no longer just a set of tools or experimental features. It has moved into the background of workflows, quietly powering decisions, automating tasks, and collaborating with humans. The emphasis is shifting from building bigger or flashier models to creating systems that can act autonomously, integrate seamlessly with existing processes, and deliver real-world impact.

The trends we have explored (agentic AI, generative AI as infrastructure, specialized models, edge computing, operational excellence through MLOps, human-AI collaboration, and responsible AI) aren’t isolated developments. Together, they represent a new standard: machine learning systems that work, reliably and meaningfully, at the heart of business and daily life.

Machine learning in 2026 is less about building smarter models and more about building systems that actually do the work.